Data integration challenges arise when organizations struggle to combine data from multiple systems due to issues like data silos, inconsistent formats, API complexity, and scalability limitations. These challenges directly impact data accuracy, real-time access, and the quality of business decisions that depend on them.

According to the2025 IBM Institute for Business Value Report on Data Quality, over a quarter of organizations estimate they lose more than USD 5 million annually to poor data quality, and 7% report losses of USD 25 million or more.

Integration problems are upstream of most of that loss, which is why they deserve a structured response rather than another patchwork fix.

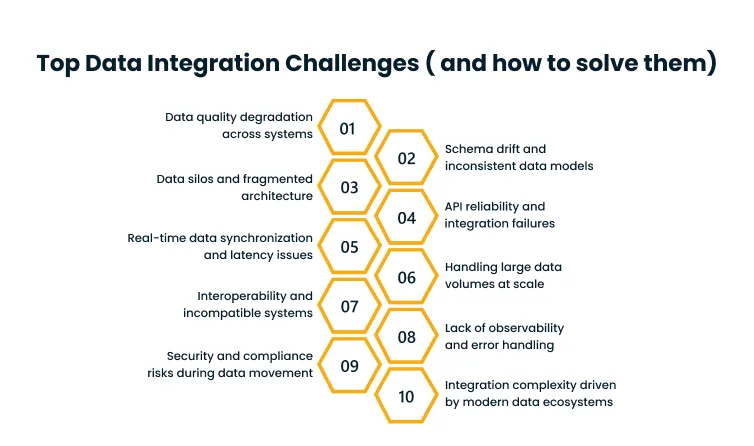

In this guide, we break down the ten most common data integration challenges we see across enterprise environments, explain why each one happens and what it costs the business, and outline the approaches that consistently resolve them.

Top Data Integration Challenges (and How to Solve Them)

For each one of the challenges below, we describe the problem in practical terms, explain why it tends to emerge, call out the business impact, and lay out the solution pattern that typically works.

1. Data quality degradation across systems

Source systems often lack validation at ingest, manual data entry introduces inconsistencies, and legacy systems carry forward data captured under older rules that no longer reflect current business definitions.

Duplicate records, missing values, and conflicting entries accumulate across CRM, ERP, support, and analytics systems. For example, the same customer exists three times with slightly different spellings, and revenue booked in one system does not match the number reported in another.

Analytics built on this data produce results nobody trusts. Decisions based on those analytics either get ignored or, worse, get acted on and lead to downstream errors that lead to budget wastage.

Build validation and cleansing into the ingestion pipeline so quality issues are caught before they spread. Define data quality rules per domain, monitor them continuously, and route violations to the owning team for resolution rather than silently correcting them.

Cygnet.One’sData Engineering and Management service helps enterprises build pipeline-level validation, data quality monitoring, and governance frameworks that treat quality as a continuous operational responsibility rather than a one-off cleanup project.

2. Schema drift and inconsistent data models

Source systems change their schemas without coordinating with the teams consuming the data. A field gets renamed, a data type changes, a new column appears, and downstream pipelines silently produce wrong results or fail outright.

Source APIs evolve, engineering teams ship independently, and the integration layer rarely has explicit contracts with upstream systems about what can and cannot change without notice.

Pipelines break in production, transformations produce incorrect outputs, and the team responsible for downstream data spends their time firefighting rather than building new capabilities.

- Introduce schema versioning so changes are tracked and consumers can adopt them deliberately.

- Establish data contracts between source and consumer teams that define what is guaranteed and what is not.

- Automate schema-compatibility checks so breaking changes are caught before they reach production.

3. Data silos and fragmented architecture

Data sits in dozens of disconnected tools, each managed by a different team with its own conventions. A unified view of the customer, the product, or the financial picture does not exist because no single system holds it.

As a result, every cross-functional question requires manual stitching. Analytics teams duplicate effort because they each rebuild integrations independently. AI use cases that depend on joined data never reach the training stage.

Move toward a unified data architecture where all operational data flows into a central lakehouse or federated access layer. Pair it with a governance model that defines ownership, access, and quality standards across domains.

According to the 2025 Gartner Survey on Data Management Practices for AI, organizations will abandon 60% of AI projects through 2026 due to the lack of AI-ready data. Silos are the single biggest reason data fails to become AI-ready in the first place.

4. API reliability and integration failures

APIs that enterprise integrations depend on break, throttle, or behave inconsistently. Documentation does not match behavior, rate limits surface unexpectedly, and authentication tokens expire without graceful handling.

It happens because of:

- Poor or outdated documentation

- Complex authentication flows across OAuth variants

- Version changes that are not communicated clearly to downstream consumers

Integrations spend more time being fixed than delivering value. Engineering capacity that should go into new capability gets absorbed by keeping existing connections alive.

Standardize API patterns across the organization so every integration follows the same authentication, retry, and error-handling model. Put a middleware layer between source APIs and consuming systems so retries, rate-limit backoff, and schema translation happen in one place.

Monitor API health proactively rather than discovering outages through failed pipelines.

Cygnet.One’s Enterprise Integration service provides middleware, API management, and event-driven architecture patterns that take this cross-cutting concern off each integration team and centralize it into shared infrastructure.

5. Real-time data synchronization and latency issues

Time lag between when data is created in a source system and when it becomes usable in analytics, reporting, or downstream workflows. Dashboards show yesterday’s state instead of the current one.

Most integration infrastructure was built for batch processing. Distributed systems introduce replication delay, mixed ingestion modes create inconsistent freshness across datasets, and synchronous patterns struggle to scale.

Stale data drives stale decisions. For example, fraud detection misses events because the data arrives too late, inventory systems show availability that no longer exists, and customer-facing experiences rely on a state that is hours old.

- Move high-value flows to event-driven architecture using streaming platforms like Apache Kafka, Amazon Kinesis, or Apache Pulsar.

- Use change data capture to stream updates from transactional databases in real time.

- Keep a batch for flows where latency does not matter and apply real-time patterns selectively where the business case justifies them.

6. Handling large data volumes at scale

Pipelines that worked fine at initial scale slow down or fail entirely as data volumes grow. Processing windows stretch past their SLAs. Jobs that ran in hours now take days.

Why it happens:

- Legacy ETL tools designed for single-node execution cannot parallelize effectively

- Pipelines built without partitioning or distributed processing hit hardware ceilings

- Storage layers optimized for transactional workloads buckle under analytical query patterns

Data freshness deteriorates, analytics become unreliable, and engineering teams spend increasing effort keeping pipelines alive instead of improving them.

Adopt distributed processing frameworks like Apache Spark or cloud-native equivalents that scale horizontally. Design pipelines around partitioning and parallelism from day one. Use cloud-native storage and compute that auto-scale with demand rather than over-provisioning for peak volumes.

7. Interoperability and incompatible systems

Systems across the enterprise do not speak the same language. For example, one stores dates as Unix timestamps and another as strings, one encodes customer identity as an email and another as a numeric ID, and formats range from JSON to XML to flat files to binary protocols.

Mix of legacy and modern systems, sector-specific formats that predate modern standards, and historical architectural decisions that no team owns anymore.

Every integration requires custom transformation logic. Maintenance burden scales linearly with the number of system pairs connected. Small format changes cascade into multiple pipeline rewrites.

Define canonical data models for the core entities in the business and map every system into and out of them. Adopt open standards like Parquet, Avro, or JSON Schema for data exchange. Use interoperability frameworks that handle format translation at the edge rather than embedding it into each pipeline.

8. Lack of observability and error handling

Teams do not know when pipelines fail until a downstream user complains about a broken dashboard or a missing report. Data freshness issues surface through support tickets rather than monitoring.

Pipelines get built without monitoring attached, logging is sparse and hard to search, and no one explicitly owns the health of a given data flow.

Silent failures erode trust in data. Users route around the analytics platform because they cannot tell if it is current. The team that should be preventing issues ends up explaining them after the fact.

Instrument every pipeline with health checks, data-freshness metrics, and row-count validation. Use observability tools like Monte Carlo, Datadog, or OpenLineage to track lineage and detect anomalies automatically. Define alerting thresholds and owner rotations so failures get attention before they reach users.

9. Security and compliance risks during data movement

Data moves through multiple hops between systems, and each hop is a potential exposure point. Copies proliferate, access controls drift, and sensitive data lands in places that were not part of the original compliance review.

Weak access controls, integration patterns that were not designed with compliance in mind, and ad-hoc data extracts that bypass governance entirely.

Breach risk, regulatory violations, and audit findings that are expensive to remediate. Customer trust erodes when disclosures become necessary.

Solution:

- Encrypt data in transit and at rest across every leg of the pipeline

- Enforce role-based access control on every data store and integration endpoint

- Maintain audit logs of every data movement

- Align integration patterns with the compliance frameworks that apply to your business, including GDPR, HIPAA, SOC 2, and sector-specific requirements

Cygnet.One’s Governance, Risk Management, and Compliance service helps enterprises establish the compliance frameworks, audit processes, and risk controls that keep integration pipelines aligned with regulatory requirements, including support for ISO 27001, SOC 2, and sector-specific mandates.

10. Integration complexity driven by modern data ecosystems

Integration gets harder as the number of tools in the stack grows. What worked when the environment had a dozen systems becomes unmanageable at two hundred. Every change somewhere ripples through dependencies elsewhere.

SaaS tool proliferation, hybrid and multi-cloud deployments, and constant system changes that no one has the capacity to track centrally.

Technical debt accumulates faster than it can be paid down. Pipelines become fragile, and every new integration adds disproportionately more complexity than the previous one.

Move away from point-to-point integrations toward a platform-based approach where all integrations flow through shared infrastructure with consistent patterns. Think in terms of a data fabric or data mesh where individual domains own their data products and the platform handles the cross-cutting concerns.

According to the 2025 IBM Institute for Business Value CEO Study, 50% of CEOs report that the pace of recent technology investments has resulted in disconnected systems, and 68% identify integrated enterprise-wide data architecture as critical for cross-functional collaboration.

Integration complexity has moved out of the IT department and onto the CEO’s agenda.

Why Data Integration Challenges Are Becoming More Complex

Integration was hard when the enterprise stack had a dozen systems. With two hundred SaaS tools, three cloud providers, and a growing number of real-time use cases, the complexity curve has bent sharply upward.

Several macro trends are driving this.

- Data sources have multiplied. Our typical enterprise engagements now span 150 to 300 distinct systems producing data, each with its own API, schema, and access pattern.

- Multi-cloud and hybrid environments have become the default rather than the exception, which means integration has to span networks, identity systems, and cost models that were not designed to work together.

- Real-time data expectations have moved from “nice to have” to baseline in customer-facing experiences, fraud detection, and operational dashboards.

- AI and analytics adoption has added an entirely new class of consumer that demands clean, joined, and complete data at volumes that legacy integration infrastructure was never built to handle.

The uncomfortable truth is that integration complexity is growing faster than most enterprises’ infrastructure maturity. The gap widens every year unless there is a deliberate investment in integration as a capability rather than a side effect of individual projects.

What You Should Do Next to Overcome Data Integration Challenges

The first step in solving integration challenges is to stop treating them as a backlog of one-off projects and start treating them as a capability that needs design, investment, and ownership.

We recommend a four-step approach:

1. Evaluate current integration gaps: Inventory the systems producing and consuming data, the flows between them, the known quality issues, and the freshness requirements. Most teams discover they have more integrations than they realized and less documentation than they assumed.

2. Define data flow requirements: For each consumption use case, map what data is needed, where it comes from, how fresh it needs to be, and what quality bar it has to clear. This creates the blueprint for prioritizing integration investment against actual business value.

3. Choose the right integration approach per flow: Not every integration needs the same pattern. ETL works for periodic analytical loads. APIs and middleware work for transactional integration. Streaming works for real-time flows. The mistake is picking one approach and forcing everything through it.

4. Prioritize real-time versus batch deliberately: Real-time introduces cost and complexity. Apply it where the business case justifies the tradeoff and stay with the batch where it does not. A thoughtful mix produces better outcomes than an all-real-time or all-batch architecture.

Cygnet.One’sData Engineering and Management service supports enterprises across this full journey, from integration assessment to pipeline development, schema governance, and data quality management, so teams can address root causes rather than treating symptoms one broken pipeline at a time.

Since integration at enterprise scale increasingly depends on cloud-native architecture and elastic compute, we work with clients through ourAWS partnership to design cloud data architectures that scale as data volumes grow and flex between real-time and batch patterns as use cases evolve.

Our focus is on helping teams move from fragmented systems toward a unified data ecosystem where integration is a solved capability rather than a recurring cost.

Conclusion

Enterprise data integration has moved from a plumbing concern to a strategic constraint on analytics, AI, and decision quality.

The organizations that treat it as infrastructure, with named owners, funded roadmaps, and measurable quality targets, consistently pull ahead of the ones that treat each integration as a one-off project delivered by whichever team had capacity that quarter.

The ten challenges above are the ones we see repeatedly. None of them is unsolvable, but they share a pattern. Each gets dramatically more expensive to fix the longer it is ignored, and each resists point solutions because the root causes span data, systems, and organizational design.

The enterprises that get ahead of this curve are the ones that invested early in a unified data architecture, standard integration patterns, observability by default, and a platform approach that scales as the ecosystem grows. The gap between them and the organizations still firefighting is widening year over year.

If your teams are struggling with integration complexity across fragmented systems, the path forward usually needs structured support across data engineering, cloud architecture, and integration strategy together rather than in isolation.

Cygnet.One’sData Analytics & AI combines all three into a single delivery capability.Book a demo to discuss how we can help you close the gap between the data you have and the insights you need.

The biggest data integration challenges we see across enterprise environments are:

1. Data quality degradation across systems

2. Schema drift between sources and consumers

3. Data silos created by disconnected tools

4. API reliability failures

5. Real-time synchronization latency

6. Scaling pipelines to handle growing data volumes

7. Interoperability between incompatible systems.

Enterprise data integration is difficult because the typical environment spans hundreds of systems, multiple cloud providers, legacy applications, and modern SaaS tools, each with different schemas, APIs, formats, and update cadences.

Common data engineering issues in integration include broken pipelines from schema changes, slow processing as data volumes grow, silent failures from weak observability, authentication complexity across heterogeneous APIs, data quality issues that propagate downstream, and mounting technical debt from point-to-point integrations that accumulate faster than they can be maintained.

Enterprises solve data integration challenges by treating integration as a platform capability rather than a per-project effort. This means standardizing on canonical data models, adopting event-driven patterns where real-time matters, and building observability and quality monitoring into every pipeline.

Common integration tools span several categories. ETL and ELT platforms like Fivetran, Matillion, and Informatica handle scheduled data movement. Streaming platforms like Apache Kafka and Amazon Kinesis handle real-time flows. Middleware platforms like MuleSoft and Dell Boomi handle API-driven integration. Observability tools like Monte Carlo and Datadog track pipeline health and data quality.

Real-time data integration is important because a growing number of use cases depend on fresh data to create value. Fraud detection, operational dashboards, personalization, inventory management, and customer-facing experiences all lose effectiveness when the underlying data is hours or days stale.