Most organizations experimenting with AI reach the same inflection point. The pilot worked initially, and the model performed well in a controlled environment. The data was clean, the scope was narrow, and the team was motivated.

Then the project moved toward production, and the complexity of integrating AI into real systems, with real data quality issues and real organizational dependencies, changed the equation. Scaling from pilot to enterprise deployment is where most AI programs slow or stop.

Enterprise AI implementation is the discipline of crossing that gap systematically. It covers the decisions, structures, and processes that separate organizations running productive AI at scale from those still iterating on proof-of-concept work.

This guide outlines a structured framework, the foundational components, and the governance considerations that enterprise AI programs need to sustain impact beyond the first deployment.

What is enterprise AI implementation?

Enterprise AI implementation is the systematic process of integrating artificial intelligence technologies into an organization’s core systems, workflows, and decision-making processes to generate measurable business outcomes.

It combines data infrastructure, trained models, business logic, and operational workflows into a unified system that functions reliably at scale.

The process covers four core activities:

- Defining an AI strategy aligned with business objectives

- Preparing data and infrastructure

- Developing and validating AI models, and

- Deploying those models into production with ongoing monitoring.

Unlike isolated AI experiments, enterprise implementation requires coordination across technology, operations, and leadership.

Organizations that execute it effectively reduce operational costs, accelerate decisions, and create compounding advantages that isolated pilots cannot replicate.

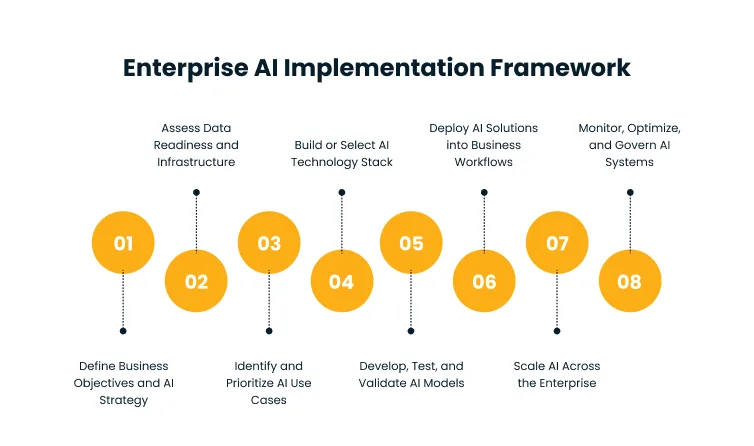

Enterprise AI implementation framework

Most AI initiatives fail because organizations underestimate the complexity of embedding AI into operating environments that were not designed with it in mind.

Organizations that move through the framework below deliberately and with attention to dependencies are far more likely to reach scalable AI deployment than those chasing speed.

Step 1: Define Business Objectives and AI Strategy

AI initiatives that begin with technology selection before problem definition routinely underdeliver. The reliable starting point is defining business outcomes by answering questions like:

- What specific problem does the organization need to solve?

- What would a measurable improvement look like?

- Where does AI have the most direct path to creating that improvement?

Identifying those answers means mapping the business functions where AI can reduce cost, improve speed, or surface patterns that are not visible through manual processes.

Once mapped, each AI initiative can be assigned clear KPIs such as processing time reduction, forecasting accuracy improvement, or customer resolution rate. These metrics establish whether AI is working before it scales and create accountability at the leadership level that keeps AI programs from drifting into experimentation without outcomes.

The strategic layer sits above individual use cases. It defines which AI capabilities the organization is building toward, which business units carry the highest opportunity, and how AI investment is sequenced across a multi-year horizon. Without that layer, each AI initiative becomes an isolated project rather than a component of a broader capability.

According to a 2024 Gartner Survey on Business Value of Generative AI, 30% of generative AI projects will be abandoned after proof of concept, with poor data quality, inadequate risk controls, and unclear business value cited as the primary causes.

The absence of a defined business case is not a minor oversight but a leading predictor of program failure.

Step 2: Assess Data Readiness and Infrastructure

AI models are only as reliable as the data used to train and run them. Before any model development begins, organizations need a clear picture of what data they have, where it lives, how clean it is, and whether it is accessible at the speed and volume that AI workloads require.

Data readiness assessment covers several dimensions, each of which determines whether the data environment is ready for production AI workloads:

- Completeness: Are the right data fields captured consistently across systems?

- Consistency: Does the same event get recorded the same way across different platforms?

- Timeliness: Is the data current enough to support real-time or near-real-time applications?

- Accessibility: Can the data pipeline serve model training and inference without bottlenecks?
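Two of these dimensions, completeness and timeliness, are straightforward to measure directly. The sketch below is a minimal illustration, not a full data quality tool: the record layout, the field names (`customer_id`, `region`, `updated_at`), and the one-day freshness window are all assumptions made for the example.

```python
from datetime import datetime, timedelta, timezone

def completeness(records, required_fields):
    """Share of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(rec.get(field) not in (None, "") for field in required_fields)
        for rec in records
    )
    return complete / len(records)

def timeliness(records, timestamp_field, max_age, now):
    """Share of records whose timestamp falls within max_age of now."""
    if not records:
        return 0.0
    fresh = sum(
        1
        for rec in records
        if rec.get(timestamp_field) is not None
        and now - rec[timestamp_field] <= max_age
    )
    return fresh / len(records)

# Hypothetical sample data; a real assessment would read from source systems.
now = datetime(2025, 1, 10, tzinfo=timezone.utc)
records = [
    {"customer_id": "C1", "region": "EU", "updated_at": now - timedelta(hours=2)},
    {"customer_id": "C2", "region": "", "updated_at": now - timedelta(days=3)},
    {"customer_id": "C3", "region": "US", "updated_at": now - timedelta(hours=20)},
    {"customer_id": None, "region": "US", "updated_at": now - timedelta(days=9)},
]

completeness_score = completeness(records, ["customer_id", "region"])
timeliness_score = timeliness(records, "updated_at", timedelta(days=1), now)
print(f"completeness={completeness_score:.0%}, timeliness={timeliness_score:.0%}")
```

Scores like these are most useful when tracked per source system over time, so that gaps are visible before model development depends on the data.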

Infrastructure assessment runs alongside data review. The compute environment, storage architecture, and integration layer all affect whether AI models can run in production at the required scale.

According to the 2025 Gartner Survey on Data Management Practices for AI, organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026.

This reflects a structural problem: organizations that have not invested in data management practices before AI deployment consistently find that infrastructure gaps consume more time than model development itself.

Step 3: Identify and Prioritize AI Use Cases

Not every business problem is a good use case for AI, and organizations that try to apply AI broadly before they have the infrastructure or organizational readiness to support it tend to produce low-quality outputs across too many fronts.

Prioritization is a filtering discipline, and use cases should be evaluated on two dimensions:

1. Business impact: How significant is the outcome if AI works well here?

2. Feasibility: Do the data, infrastructure, and technical capability exist to make AI work in this area?

High-impact, high-feasibility use cases represent the right entry points. They deliver measurable ROI, build organizational confidence in AI, and generate data on implementation quality that informs how to approach more complex use cases later.

Quick wins are not about lowering ambition but sequencing correctly so that early deployment cycles produce learning and momentum rather than cost and frustration.
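The two-dimension filter can be made explicit with a simple scoring pass. This is a sketch under stated assumptions: the 1-to-5 scale, the cutoff of 4, the impact-times-feasibility ranking, and the example use cases are all hypothetical, and most organizations would weight the dimensions to suit their own context.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    name: str
    impact: int       # 1 (low) to 5 (high); hypothetical scale
    feasibility: int  # 1 (low) to 5 (high); hypothetical scale

def prioritize(use_cases, min_score=4):
    """Keep use cases that clear min_score on BOTH dimensions, best first."""
    shortlisted = [
        u for u in use_cases
        if u.impact >= min_score and u.feasibility >= min_score
    ]
    return sorted(shortlisted, key=lambda u: u.impact * u.feasibility, reverse=True)

# Illustrative candidates only.
candidates = [
    UseCase("invoice data extraction", impact=4, feasibility=4),
    UseCase("demand forecasting", impact=5, feasibility=4),
    UseCase("autonomous pricing", impact=5, feasibility=2),
    UseCase("meeting summaries", impact=2, feasibility=5),
]

entry_points = [u.name for u in prioritize(candidates)]
print(entry_points)
```

Requiring both scores to clear the cutoff, rather than ranking on the product alone, keeps a very high impact score from masking a feasibility gap.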

Step 4: Build or Select an AI Technology Stack

The AI technology stack spans multiple layers, including data ingestion and processing, model development environments, model serving infrastructure, and monitoring tooling.

Decisions made at this stage affect how quickly models can be deployed, how easily they can be maintained, and how scalable the AI program becomes as it expands.

Build versus buy is a real decision at each layer. For organizations with specialized requirements or proprietary data advantages, custom model development may be justified.

For most enterprise functions, existing AI platforms and pre-trained model foundations reduce time-to-deployment significantly without sacrificing performance on standard tasks.

The key evaluation criteria are:

- Integration capability with existing systems

- Scalability to support growing workloads, and

- The vendor’s track record with enterprise deployments of comparable complexity.

Organizations that select platforms before defining use cases often find themselves adapting their problems to fit the tools they chose.

Step 5: Develop, Test, and Validate AI Models

Model development in enterprise contexts differs from research environments in one critical way: production constraints apply from the beginning.

A model that performs well on test data but cannot handle the volume, latency, or data distribution of a live production environment is not ready, regardless of benchmark performance.

Development follows an iterative cycle:

- Build an initial model

- Test it against real-world data samples

- Identify failure modes

- Adjust and retest.

Validation must go beyond accuracy metrics. It should encompass:

- Reliability testing: Does the model perform consistently under varied conditions?

- Fairness testing: Does it produce systematically different outcomes for different groups in ways not justified by the underlying task?

- Stress testing: Does it maintain performance as data volumes increase?

Model acceptance criteria should be defined before development begins. Testing against predefined thresholds rather than discovering requirements during validation prevents scope creep and accelerates deployment decisions.
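One way to make predefined acceptance criteria concrete is to record them as data and check measured results against them automatically. The metric names and threshold values below are illustrative assumptions, not recommended targets.

```python
# Agreed before development begins; values here are purely illustrative.
ACCEPTANCE_CRITERIA = {
    "accuracy": (">=", 0.90),
    "p95_latency_ms": ("<=", 200),
    "error_rate_at_peak_load": ("<=", 0.01),
}

def evaluate(measured, criteria):
    """Return a list of failure descriptions; an empty list means accepted."""
    failures = []
    for metric, (op, threshold) in criteria.items():
        value = measured.get(metric)
        if value is None:
            failures.append(f"{metric}: not measured")
        elif (op == ">=" and value < threshold) or (op == "<=" and value > threshold):
            failures.append(f"{metric}: measured {value}, required {op} {threshold}")
    return failures

# Hypothetical validation results for one candidate model.
measured = {"accuracy": 0.93, "p95_latency_ms": 240, "error_rate_at_peak_load": 0.004}
failures = evaluate(measured, ACCEPTANCE_CRITERIA)
print("accepted" if not failures else failures)
```

In this example the model clears the accuracy bar but fails the latency requirement, which is exactly the kind of gap that benchmark performance alone would hide.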

Step 6: Deploy AI Solutions into Business Workflows

A model that works in isolation may not integrate cleanly with the CRM, ERP, or customer support system it is supposed to enhance.

Deployment requires careful attention to API design, data flow between systems, latency requirements, and fallback behavior when the model is unavailable.
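Fallback behavior is the easiest of these to overlook. The sketch below shows one possible pattern, assuming a rule-based default is acceptable when the model cannot respond; the function names, the `days_overdue` feature, and the 30-day rule are hypothetical.

```python
def score_with_fallback(features, model_call, fallback_rule):
    """Try the model first; on failure, use the rule and record which path ran."""
    try:
        return {"score": model_call(features), "source": "model"}
    except (TimeoutError, ConnectionError):
        # The workflow keeps running, and the source flag lets downstream
        # systems and auditors see that this result did not come from the model.
        return {"score": fallback_rule(features), "source": "fallback"}

def unavailable_model(features):
    """Stand-in for a model endpoint that is down (assumption for the demo)."""
    raise TimeoutError("model endpoint did not respond")

def simple_rule(features):
    """Hypothetical business rule used when the model is unavailable."""
    return 1.0 if features["days_overdue"] > 30 else 0.0

result = score_with_fallback({"days_overdue": 45}, unavailable_model, simple_rule)
print(result)
```

Tagging each result with its source matters as much as the fallback itself, because it tells teams how often they are acting on rule-based output rather than model output.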

Teams need to understand how the AI output informs their decisions and where they retain judgment. AI that produces outputs no one trusts or uses is not deployed AI. It is an unused system that consumes infrastructure costs.

Change management, training, and clear protocols for how teams interact with AI-driven recommendations are essential components of successful deployment.

Cygnet.One’s Business Analytics and Embedded AI service addresses this integration challenge by embedding analytics and AI outputs directly into existing enterprise platforms, including ERP, CRM, and industry-specific systems, so that teams can act on AI recommendations within the workflows they already use rather than navigating between separate tools.

Step 7: Scale AI Across the Enterprise

Successful AI pilots are proof of concept, not proof of scale. Moving from one productive deployment to organization-wide adoption requires standardizing the practices that made the pilot work and removing the obstacles that would prevent other teams from replicating it.

MLOps is the operational infrastructure that makes scale possible. It covers the automated pipelines for retraining models as data distributions shift, the deployment frameworks that allow teams to roll out new model versions without manual intervention, and the monitoring systems that surface performance degradation before it affects business outcomes.

Organizations that try to scale AI without MLOps infrastructure find themselves doing by hand what needs to be systematized.

According to McKinsey’s “State of AI in 2025: Agents, Innovation, and Transformation” Report, nearly two-thirds of organizations have not yet begun scaling AI across the enterprise.

The gap between pilot success and enterprise-wide adoption is wide, and it is primarily organizational and operational rather than technical.

Cultural adoption requires intentional effort at the department level. Teams that were not involved in the pilot need on-ramps that match their workflows and roles. Mandating AI adoption without addressing the practical friction individual teams face produces surface compliance instead of operational integration.

Step 8: Monitor, Optimize, and Govern AI Systems

AI models are not stable artifacts. They degrade over time as the data they were trained on diverges from current business conditions, a phenomenon called model drift.

An AI model that predicted customer churn accurately in one market environment may become unreliable as customer behavior, product pricing, or competitive dynamics change.

Monitoring for drift and retraining on updated data is a continuous operational responsibility.
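One common way to quantify drift in a single input feature is the Population Stability Index (PSI), which compares the distribution seen at training time with the distribution seen in production:

$$\mathrm{PSI} = \sum_i (a_i - e_i)\,\ln\frac{a_i}{e_i}$$

where $e_i$ and $a_i$ are the shares of baseline and current values falling in bin $i$. The sketch below is a minimal standard-library implementation; the equal-width binning, the bin count, and the sample data are assumptions for illustration, and PSI is only one of several drift measures a monitoring system might use.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a current sample.

    Both samples must be non-empty. Bins are equal-width over the baseline
    range; current values outside that range are counted in the edge bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at eps so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: training-time baseline vs. shifted production data.
baseline = [i / 100 for i in range(100)]
shifted = [v + 0.3 for v in baseline]

print(f"no drift: {psi(baseline, baseline):.3f}")
print(f"shifted:  {psi(baseline, shifted):.3f}")
```

A frequently cited rule of thumb treats values above roughly 0.25 as a significant shift, but that threshold is a convention; each team should calibrate alert levels against what actually degrades its own models.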

Optimization goes beyond accuracy. As AI systems run in production, organizations collect operational data on where models underperform, which predictions are most consequential, and which failure modes carry the highest risk. That data should drive continuous improvement cycles.

Governance defines the rules under which AI systems operate, such as:

- What decisions can AI make autonomously?

- What decisions require human review?

- How are model outputs audited?

- What happens when a model produces an outcome that causes harm?
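The first three questions can be encoded as an explicit policy that every model output passes through. The sketch below assumes a simple scheme in which each decision type is either eligible for autonomous action above a confidence threshold or always routed to a person; the decision types, thresholds, and log format are hypothetical.

```python
# Hypothetical policy: which decision types may run autonomously, and when.
POLICY = {
    "ticket_routing": {"autonomous_allowed": True, "min_confidence": 0.80},
    "credit_limit_change": {"autonomous_allowed": False, "min_confidence": 1.0},
}

def route_decision(decision_type, confidence, policy):
    """Return 'autonomous' or 'human_review' for a single model output."""
    rule = policy.get(decision_type)
    # Decision types with no policy entry default to human review.
    if rule is None or not rule["autonomous_allowed"]:
        return "human_review"
    if confidence < rule["min_confidence"]:
        return "human_review"
    return "autonomous"

# Every routing outcome is recorded so model outputs can be audited later.
audit_log = []
for decision_type, confidence in [
    ("ticket_routing", 0.92),
    ("ticket_routing", 0.55),
    ("credit_limit_change", 0.99),
    ("refund_approval", 0.97),
]:
    outcome = route_decision(decision_type, confidence, POLICY)
    audit_log.append((decision_type, confidence, outcome))

for entry in audit_log:
    print(entry)
```

Defaulting unknown decision types to human review is a deliberate design choice here: a new use case gains autonomy only after someone adds it to the policy.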

Regulatory compliance requirements are expanding across industries and jurisdictions, and organizations that build governance frameworks proactively are better positioned to adapt as regulations evolve than those that treat compliance as a constraint to manage after deployment.

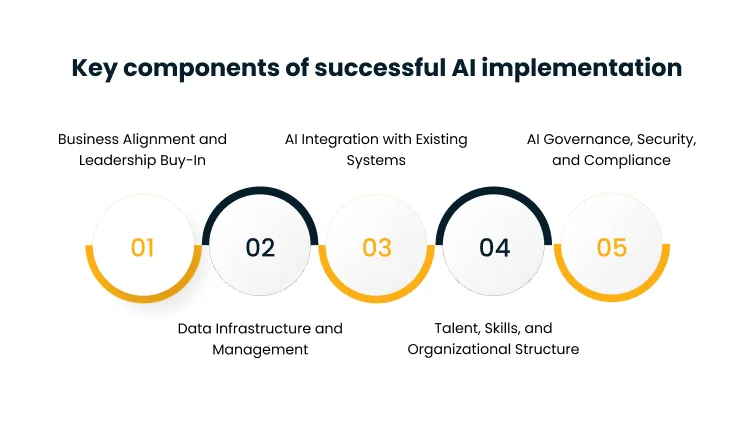

Key components of successful AI implementation

The five components below determine whether the implementation framework produces results at all:

1. Business Alignment and Leadership Buy-In

AI implementation is a business program with a technology component, but most teams treat it as a technology program with a business component. The difference in understanding leads to misaligned accountability and the wrong measures of success.

When AI initiatives are owned by technology functions without strong business sponsorship, they tend to optimize for technical quality metrics that do not translate directly into the outcomes business leaders care about.

Executive sponsorship ensures that AI programs receive sustained investment, maintain strategic priority when competing with other initiatives, and have the authority to drive adoption across functions that may resist changing established workflows.

Without that sponsorship, AI initiatives tend to stall after initial deployment, because the organizational change required to make AI operational goes beyond what a project team can mandate.

Business alignment also means that every AI initiative is connected to a specific business outcome with a measurable definition of success. Programs without clear outcome metrics are vulnerable to being deprioritized when business conditions change, because there is no evidence base to defend their continued investment.

2. Data Infrastructure and Management

The relationship between data infrastructure and AI performance is direct. The quality, availability, and accessibility of data determine the ceiling on what any AI model can achieve.

Organizations with fragmented data environments, inconsistent data standards, or poor data governance cannot compensate through model sophistication because the constraint is upstream.

Building AI-ready data infrastructure means establishing reliable data pipelines that move data from source systems to the environments where AI workloads run, governance policies that define data ownership and access controls, and storage architectures that can support both model training and real-time inference.

Cygnet.One’s Data Engineering and Management service helps enterprises build these foundations systematically, addressing fragmented data systems through pipeline development, data governance frameworks, and data quality management practices that reduce the downstream risk AI programs face when data is inconsistent or inaccessible.

3. AI Integration with Existing Systems

Most enterprise AI applications do not replace existing systems. Rather, they sit alongside them, reading data from them and writing outputs back to them.

The integration layer between AI models and existing business systems is where implementation complexity often concentrates.

Systems built over the years to manage ERP, CRM, supply chain, or customer operations have data structures, API patterns, and update frequencies that were not designed with AI integration in mind.

Making AI work within those environments requires careful mapping of data flows, handling of schema differences, management of latency requirements across systems with different performance profiles, and coordination of update cadences between the AI model and the systems it interacts with.

Poor integration undermines adoption, because teams that have to reconcile AI outputs with system data manually will abandon the AI tool before they abandon the manual process.

4. Talent, Skills, and Organizational Structure

Enterprise AI requires a combination of skills that rarely exist in full within any single team.

- Data engineering builds and maintains pipelines.

- Data science develops and validates models.

- Machine learning engineering manages production deployments.

- Domain expertise ensures that model outputs are interpreted and applied correctly in business contexts.

Most organizations address this through a mix of internal hiring, upskilling existing staff, and strategic partnerships.

The question of whether to build centralized AI teams or distribute AI capability across business units is answered differently depending on organizational size, the diversity of AI use cases, and how closely AI applications need to be tied to specific domain knowledge.

Clear role definitions and collaboration structures between technical teams and business units are as important as the specific skills acquired. AI programs that produce excellent models but cannot translate them into workflow changes fail at the last mile.

5. AI Governance, Security, and Compliance

As AI systems take on more consequential roles in business decision-making, governance structures that define accountability, ensure fairness, and maintain transparency become operationally necessary rather than aspirational.

Governance failures in AI are often more visible and harder to contain than failures in traditional software systems, because AI errors can compound across large volumes of decisions before they are detected.

Security considerations specific to AI include:

- Protecting training data from manipulation (data poisoning)

- Ensuring that model outputs cannot be exploited by adversarial inputs, and

- Managing access to models trained on proprietary or sensitive data.

These are not purely technical concerns: they require policy frameworks that define what is and is not acceptable AI behavior and who is responsible for enforcing those boundaries.

According to the 2026 Gartner Survey on Global AI Regulations, organizations that have deployed AI governance platforms are 3.4 times more likely to achieve high effectiveness in AI governance than those that have not.

The investment in formal governance infrastructure delivers a return that extends well beyond compliance. It determines whether AI programs can be trusted to scale.

Cygnet.One’s Governance, Risk Management, and Compliance service helps enterprises establish the compliance frameworks, audit processes, and risk controls needed to operate AI systems within regulatory and security boundaries, including support for ISO 27001, SOC 2, and sector-specific compliance requirements.

Conclusion

Enterprise AI implementation is a capability organizations build incrementally, with each successful deployment adding to the infrastructure, talent base, and organizational readiness that make the next deployment faster and more effective.

The organizations that sustain AI programs across multiple years consistently treat AI as a business discipline with the same rigor they apply to financial management or supply chain operations, not as a technology experiment that runs alongside the real business.

Implementation success is determined less by the sophistication of the first model deployed and more by whether the organization builds the foundations that allow AI to compound in impact over time.

For most enterprises, the gap between AI ambition and AI performance is a process and governance gap, and it closes through structured execution rather than experimentation.

If your organization has proven AI in pilots but is navigating the path to enterprise-wide deployment, Cygnet.One’s Business Analytics and Embedded AI service works with enterprises to close the gap between AI potential and operational reality.

Book a demo to discuss how Cygnet.One can support your AI implementation roadmap.

Enterprise AI implementation is the process of integrating artificial intelligence technologies into an organization’s core systems, workflows, and decision-making processes to generate measurable business outcomes. It spans strategy definition, data preparation, model development, deployment, and ongoing governance across the enterprise.

Successful AI implementation requires aligning AI initiatives with specific business objectives, ensuring data readiness before model development begins, prioritizing high-impact and feasible use cases, and building MLOps infrastructure to support scaling. Executive sponsorship and clear outcome metrics are essential throughout.

The most common challenges are poor data quality and accessibility, difficulty integrating AI with legacy systems, insufficient organizational readiness to adopt AI-driven workflows, and the absence of governance frameworks. Scaling beyond a successful pilot is where most enterprise AI programs encounter the most friction.

Initial pilots typically take 2 to 3 months. Full-scale enterprise implementation spans several months to over a year, depending on the complexity of use cases, the state of data infrastructure, and the degree of organizational change required.

The prerequisites for getting started are clear business objectives tied to measurable outcomes, quality data with accessible pipelines, an initial use case with strong feasibility, and a cross-functional team that includes both technical and domain expertise. Executive sponsorship is also critical to sustain the program beyond the initial deployment.

MLOps (machine learning operations) is the operational infrastructure that manages model deployment, monitoring, and retraining at scale. It includes automated pipelines for updating models as data distributions shift and monitoring systems that detect performance degradation before it affects business outcomes. Without MLOps, scaling AI across an enterprise requires manual intervention at every stage, which limits how broadly and reliably AI can be deployed.