Analytics and operations teams usually notice something is wrong long before anyone labels it a platform issue. Reports that once ran quickly begin to stall. Dashboards load, but the numbers do not line up across teams. Over time, more logic is added to keep things moving, until the warehouse becomes difficult to change without breaking something else. Most of these systems were built for an earlier stage of the business.

Data warehouse modernization reshapes how organizations manage and use their data. It goes beyond upgrading tools and focuses on how data supports everyday decisions and ongoing operations. A modern warehouse turns data into a reliable foundation for a modern data architecture, one that can adapt as business needs evolve.

What Does Data Warehouse Modernization Involve?

Data warehouse modernization = architectural renewal + practical engineering updates.

Most modernization efforts focus on these core elements:

Data Restructuring

Redesigning schemas to support flexible queries and analytics. This includes decomposing monolithic models into modular structures that are easier to maintain and optimized for performance.
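
As a rough illustration of decomposing a monolithic model, the sketch below splits a wide, denormalized order table into a customer dimension and an order fact table, a star-schema style split. The table and column names are hypothetical, and a real migration would usually express this in SQL or a transformation tool rather than in application code.

```python
# Hypothetical wide legacy rows: order facts mixed with repeated customer attributes.
LEGACY_ORDERS = [
    {"order_id": 1, "customer_name": "Acme", "customer_region": "EU", "amount": 120.0},
    {"order_id": 2, "customer_name": "Globex", "customer_region": "US", "amount": 80.0},
    {"order_id": 3, "customer_name": "Acme", "customer_region": "EU", "amount": 45.5},
]


def split_into_star(rows):
    """Split denormalized rows into a customer dimension and an order fact table."""
    keys = {}  # (name, region) -> surrogate key
    dim_customer, fact_orders = [], []
    for row in rows:
        natural_key = (row["customer_name"], row["customer_region"])
        if natural_key not in keys:
            # First time we see this customer: assign the next surrogate key.
            keys[natural_key] = len(keys) + 1
            dim_customer.append({
                "customer_id": keys[natural_key],
                "name": row["customer_name"],
                "region": row["customer_region"],
            })
        # The fact row keeps only the measure and a reference to the dimension.
        fact_orders.append({
            "order_id": row["order_id"],
            "customer_id": keys[natural_key],
            "amount": row["amount"],
        })
    return dim_customer, fact_orders


dim_customer, fact_orders = split_into_star(LEGACY_ORDERS)
print(len(dim_customer), len(fact_orders))  # 2 3
```

Customer attributes now live in one place, so a change to a customer's region touches a single dimension row instead of every order.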

Cloud Shift

Migrating data and compute workloads to cloud platforms that support the separation of storage and compute. These platforms allow elastic scaling and make it possible to align cost with usage.

Schema Updates

This involves reviewing and revising table design so that analytics queries run more efficiently. Partitioning and indexing strategies are then adjusted to improve performance without placing unnecessary load on the underlying platform.
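
To make partitioning concrete, here is a small in-memory model of partition pruning: rows are grouped by a date key, and a date-range query touches only the partitions it needs. It is a simplified sketch that assumes a hypothetical `event_date` partition column; real engines perform pruning themselves using table metadata.

```python
from collections import defaultdict
from datetime import date

# Hypothetical event rows; in a warehouse these would sit in a table partitioned by event_date.
EVENTS = [
    {"event_date": date(2024, 1, 1), "user": "a"},
    {"event_date": date(2024, 1, 1), "user": "b"},
    {"event_date": date(2024, 1, 2), "user": "a"},
    {"event_date": date(2024, 1, 3), "user": "c"},
]


def partition_by_date(rows):
    """Group rows into partitions keyed by event_date."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[row["event_date"]].append(row)
    return dict(partitions)


def query_range(partitions, start, end):
    """Scan only partitions whose key falls in [start, end]; return rows and partitions scanned."""
    selected = [key for key in partitions if start <= key <= end]
    rows = [row for key in selected for row in partitions[key]]
    return rows, len(selected)


parts = partition_by_date(EVENTS)
rows, scanned = query_range(parts, date(2024, 1, 2), date(2024, 1, 3))
print(len(rows), "rows from", scanned, "of", len(parts), "partitions")
```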

ETL Modernization

This work redesigns ETL logic so that data is processed incrementally and jobs run more efficiently. As a result, pipelines become easier to maintain.
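
One common way to process data incrementally is the high-watermark pattern: each run extracts only the rows changed since the last recorded timestamp, then merges them into the target. The sketch below assumes the source exposes a reliable `updated_at` column (a hypothetical name); sources without one often need change data capture instead.

```python
from datetime import datetime

# Hypothetical source rows carrying an updated_at column used as the watermark.
SOURCE = [
    {"id": 1, "updated_at": datetime(2024, 5, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2024, 5, 1, 12, 0)},
    {"id": 3, "updated_at": datetime(2024, 5, 2, 8, 30)},
]


def incremental_extract(rows, last_watermark):
    """Return rows changed after last_watermark and the new watermark to persist."""
    changed = [r for r in rows if r["updated_at"] > last_watermark]
    new_watermark = max((r["updated_at"] for r in changed), default=last_watermark)
    return changed, new_watermark


def upsert(target, rows):
    """Merge rows into the target keyed by id (insert new, overwrite existing)."""
    for r in rows:
        target[r["id"]] = r


target = {}
watermark = datetime.min
batch, watermark = incremental_extract(SOURCE, watermark)  # first run loads everything
upsert(target, batch)

SOURCE.append({"id": 2, "updated_at": datetime(2024, 5, 3, 10, 0)})  # a later update
batch, watermark = incremental_extract(SOURCE, watermark)  # second run picks up only the change
upsert(target, batch)
print(len(batch), len(target), watermark)
```

The second run reads one row instead of four, which is the saving that full reloads give up.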

Data Lake Modernization

This approach brings data lakes into the environment so that unstructured and semi-structured data can live alongside core warehouse tables. Doing so reduces data silos and makes it easier to support a wider range of analytics work.
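
Semi-structured data usually has to be projected into columns before it can sit next to warehouse tables. The sketch below flattens nested JSON records into dotted column names and fills absent fields with `None`; the payloads and the dotted naming convention are assumptions for illustration, and many warehouse platforms can also query nested data natively.

```python
import json

# Hypothetical semi-structured event payloads, as they might land in a lake.
RAW = [
    '{"id": 1, "user": {"name": "Ana", "country": "PT"}, "tags": ["new"]}',
    '{"id": 2, "user": {"name": "Ben"}, "tags": []}',
]


def flatten(obj, prefix=""):
    """Flatten nested dicts into dotted column names; lists are kept as JSON strings."""
    flat = {}
    for key, value in obj.items():
        column = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{column}."))
        elif isinstance(value, list):
            flat[column] = json.dumps(value)
        else:
            flat[column] = value
    return flat


rows = [flatten(json.loads(line)) for line in RAW]
columns = sorted({c for row in rows for c in row})
table = [[row.get(c) for c in columns] for row in rows]  # missing fields become None
print(columns)
```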

Each of these steps addresses a direct flaw in legacy setups and positions data platforms for extensibility. Together, they prepare teams for the challenges that arise during migration planning and execution.

What Limits Legacy Data Warehouses?

Legacy data warehouses often reveal their limitations when load grows or reporting requirements broaden. These limitations stem from design assumptions that made sense when the systems were first built but stopped matching workloads as they evolved.

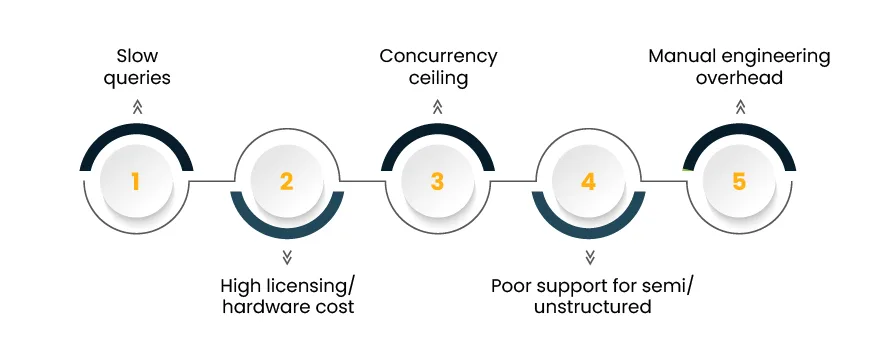

Common limitations include:

- Slow analytical queries that block business decisions

- High licensing and hardware costs

- Scalability ceilings that prevent growth in concurrent users

- Limited support for semi‑structured or unstructured data

- Manual processes that require ongoing engineering effort

These issues create operational bottlenecks. For example:

- Slow queries reduce agility in decision cycles

- High costs restrict experimentation

- Rigid schemas prevent integration of new data sources

Because these constraints are structural, teams often spend considerable effort managing workarounds rather than building new capabilities.

When these bottlenecks become frequent friction points, teams begin looking for modern architectures that handle load reliably while enabling agile analytics and broad adoption.

What Modern Architectures Replace Legacy Warehouses?

Architectures and tools that commonly support modernization include:

Cloud‑Native Data Warehouses

These platforms provide dynamic scaling and separation of storage and compute:

- Snowflake

- Google BigQuery

- Amazon Redshift

These platforms allow teams to allocate resources as needed and reduce idle infrastructure.
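
A back-of-the-envelope comparison shows why reducing idle infrastructure matters. The sketch below uses made-up hourly rates and usage hours, not vendor prices, and assumes the same hourly rate for both models; in practice rates, minimum billing increments, and auto-suspend behavior differ by platform.

```python
# Hypothetical figures for illustration only; real pricing varies by vendor, region, and contract.
HOURS_IN_MONTH = 730
PROVISIONED_RATE = 4.0   # cost per hour for an always-on cluster sized for peak load
ON_DEMAND_RATE = 4.0     # cost per hour of compute, billed only while it runs
BUSY_HOURS = 200         # hours per month the workload actually needs compute


def monthly_costs(hours_in_month, provisioned_rate, on_demand_rate, busy_hours):
    """Compare an always-on cluster with compute billed only while running."""
    always_on = hours_in_month * provisioned_rate
    usage_based = busy_hours * on_demand_rate
    return always_on, usage_based


always_on, usage_based = monthly_costs(HOURS_IN_MONTH, PROVISIONED_RATE, ON_DEMAND_RATE, BUSY_HOURS)
print(f"always-on: {always_on:.0f}, usage-based: {usage_based:.0f}, "
      f"idle share: {1 - BUSY_HOURS / HOURS_IN_MONTH:.0%}")
```

Under these assumptions the always-on cluster sits idle for roughly three quarters of the month, and that idle time is what usage-based billing stops paying for.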

ETL Modernization Frameworks

Modern ETL relies on orchestration and modular pipelines instead of monolithic scripts. Tools range from orchestrators such as Apache Airflow to managed ingestion services such as Fivetran and transformation frameworks such as dbt. The goal is for data to flow reliably, with incremental updates rather than full reloads.
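
Orchestrators such as Apache Airflow model a pipeline as a directed acyclic graph (DAG) of small tasks. The sketch below is not Airflow code; it uses Python's standard-library `graphlib` (Python 3.9+) to show the core idea of resolving a run order from declared dependencies. The task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical modular pipeline: each task lists the tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "stage_orders": {"extract_orders"},
    "stage_customers": {"extract_customers"},
    "build_sales_mart": {"stage_orders", "stage_customers"},
}


def run_order(dag):
    """Return an execution order in which every task runs after its dependencies."""
    return list(TopologicalSorter(dag).static_order())


order = run_order(PIPELINE)
print(order)
```

Because each step is a separate node, a failed `stage_orders` can be rerun on its own instead of restarting one monolithic script.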

Data Lake Modernization

Data lakes built on cloud storage hold large volumes of data in diverse formats. Open table formats such as Delta Lake and Apache Iceberg add ACID transactions and consistent table semantics on top of that storage, which makes lake data dependable enough for analytics workloads.

Hybrid Approaches

Some organizations adopt a hybrid pattern that combines a data lake with a cloud data warehouse. In this setup, raw or semi-structured data stays in the lake, while curated tables live in the warehouse. This approach balances flexibility with performance.

Modern setups demand careful data migration planning to avoid disruptions. For systems to perform reliably after the move, the transition typically involves:

- Rethinking pipelines

- Validating data lineage

- Adjusting governance
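
Validating the move often starts with reconciling source and target: matching row counts, then comparing per-row fingerprints to find records that changed in transit. The sketch below uses hypothetical in-memory extracts; in practice values need consistent normalization (types, float formatting, time zones) before hashing, or identical data can produce different hashes.

```python
import hashlib

# Hypothetical extracts of the same table from the legacy and the new platform.
LEGACY = [(1, "Acme", 120.0), (2, "Globex", 80.0), (3, "Initech", 45.5)]
MIGRATED = [(3, "Initech", 45.5), (1, "Acme", 120.0), (2, "Globex", 81.0)]


def row_hash(row):
    """Stable hash of a row's values, so rows can be compared regardless of order."""
    return hashlib.sha256("|".join(map(str, row)).encode("utf-8")).hexdigest()


def compare(source, target, key_index=0):
    """Return whether row counts match and the keys whose contents differ or are missing."""
    src = {row[key_index]: row_hash(row) for row in source}
    tgt = {row[key_index]: row_hash(row) for row in target}
    mismatched = sorted(k for k in src.keys() | tgt.keys() if src.get(k) != tgt.get(k))
    return len(source) == len(target), mismatched


counts_match, mismatched = compare(LEGACY, MIGRATED)
print(counts_match, mismatched)  # True [2]
```

Matching counts alone would have hidden the changed amount on key 2, which is why the row-level comparison is worth the extra cost.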

How Do You Assess and Plan Your Migration?

Planning for modernization begins with a data platform readiness assessment of the current environment. It requires examining both technical and business aspects of data operations.

A typical readiness assessment includes:

Inventory of Data Sources

List existing systems, formats, and interfaces.

Usage Patterns

Identify who uses what data and how frequently.
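
Usage patterns can often be derived from the warehouse's own query history. Assuming you can export (user, table) pairs from that history (the export format here is hypothetical), a short aggregation shows which tables are queried most and how widely they are used, which helps decide what to migrate first.

```python
from collections import Counter

# Hypothetical query-log entries: (user, table) pairs extracted from warehouse history.
QUERY_LOG = [
    ("ana", "sales.orders"),
    ("ben", "sales.orders"),
    ("ana", "sales.orders"),
    ("cara", "finance.ledger"),
    ("ben", "marketing.campaigns"),
]


def usage_summary(log):
    """Map each table to (query count, distinct users), most-queried first."""
    query_counts = Counter(table for _, table in log)
    users = {}
    for user, table in log:
        users.setdefault(table, set()).add(user)
    return {table: (count, len(users[table])) for table, count in query_counts.most_common()}


summary = usage_summary(QUERY_LOG)
print(summary)
```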

Quality Evaluation

Measure data accuracy, consistency, and completeness.
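
Completeness is the easiest of these to quantify. The sketch below scores each column by the share of records with a usable value, treating `None` and empty strings as missing; that rule and the sample records are assumptions, and accuracy and consistency checks usually need business rules on top.

```python
# Hypothetical sample of customer records pulled during the assessment.
RECORDS = [
    {"id": 1, "email": "a@example.com", "country": "PT"},
    {"id": 2, "email": None, "country": "US"},
    {"id": 3, "email": "c@example.com", "country": None},
    {"id": 4, "email": "", "country": "US"},
]


def completeness(records, columns):
    """Share of records with a non-null, non-empty value for each column."""
    total = len(records)
    return {
        col: sum(1 for r in records if r.get(col) not in (None, "")) / total
        for col in columns
    }


scores = completeness(RECORDS, ["id", "email", "country"])
print(scores)  # {'id': 1.0, 'email': 0.5, 'country': 0.75}
```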

Compliance Review

Document regulatory and privacy requirements.

Performance Baselines

Capture response times and resource usage for key queries.
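
A baseline only needs to be repeatable. The sketch below times a query function several times and records median and 95th-percentile latency with the standard library. `sample_query` is a hypothetical stand-in for a call through your own warehouse client, and client-side timing includes network overhead that server-side query history would exclude.

```python
import statistics
import time


def measure(query_fn, runs=20):
    """Time repeated calls of query_fn; return median and 95th-percentile latency in ms."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        query_fn()
        samples.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) returns 19 cut points; index 18 is the 95th percentile.
    p95 = statistics.quantiles(samples, n=20)[18]
    return {"median_ms": statistics.median(samples), "p95_ms": p95}


def sample_query():
    """Hypothetical stand-in for a real dashboard query."""
    return sum(i * i for i in range(10_000))


baseline = measure(sample_query)
print(baseline)
```

Storing these numbers per key query gives a concrete target to compare against after the migration.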

The results of this assessment feed into scoped planning, which may take the form of a phased timeline or structured migration plan.

Here is a simple planning table for reference:

| Activity | Description | Target Outcome |
| --- | --- | --- |
| Data Cataloging | Document all data sources | Comprehensive inventory |
| Schema Mapping | Align current schema with target model | Clear transformation logic |
| Pipeline Audit | Review current ETL flows | Identify modernization candidates |
| Policy Assessment | Evaluate governance requirements | Compliance alignment |
| Performance Baseline | Measure query and job behavior | Target for improvement |
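
Schema mapping is easiest to review when the transformation logic is explicit. The sketch below records, for each legacy column, its target name and a conversion function, and rejects rows with unmapped columns so gaps surface early. The column names and formats (such as `YYYYMMDD` dates) are hypothetical.

```python
from datetime import datetime

# Hypothetical mapping: legacy column -> (target column, conversion function).
SCHEMA_MAP = {
    "CUST_NM": ("customer_name", str.strip),
    "ORD_DT": ("order_date", lambda v: datetime.strptime(v, "%Y%m%d").date()),
    "ORD_AMT": ("amount", float),
}


def transform(legacy_row, schema_map):
    """Rename and convert one legacy row; fail loudly on unmapped columns."""
    unmapped = set(legacy_row) - set(schema_map)
    if unmapped:
        raise KeyError(f"no mapping for columns: {sorted(unmapped)}")
    return {target: convert(legacy_row[source])
            for source, (target, convert) in schema_map.items()}


row = transform({"CUST_NM": " Acme ", "ORD_DT": "20240115", "ORD_AMT": "120.50"}, SCHEMA_MAP)
print(row)
```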

With a solid plan in hand, you can determine the right migration approach that aligns with your organization’s priorities.

What Migration Approaches Work Best?

Several approaches support modernization. Each has its place depending on business needs, risk profile, and resource availability.

Lift and Shift

This approach moves existing structures to a modern platform without redesigning them immediately. It delivers quick baseline improvements and reduces operational risk in the short term.

Rebuild

Rebuild involves:

- Redesigning schemas

- Restructuring pipelines

- Adopting new patterns

This method offers performance gains and long‑term flexibility but requires careful planning.

Hybrid Method

A hybrid method combines lift‑and‑shift for low‑risk components with targeted rebuild for critical paths. It balances speed and long‑term value.
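
The hybrid split can be written down as an explicit rule so the plan is easy to review. The sketch below assumes each component carries two hypothetical attributes from the readiness assessment, whether it sits on a critical path and a coarse risk rating, and sends anything that is neither critical nor low-risk to a manual review bucket rather than guessing.

```python
# Hypothetical inventory; "critical_path" and "risk" would come from the readiness assessment.
COMPONENTS = [
    {"name": "archive_tables", "critical_path": False, "risk": "low"},
    {"name": "finance_close_pipeline", "critical_path": True, "risk": "high"},
    {"name": "ad_hoc_reports", "critical_path": False, "risk": "low"},
    {"name": "legacy_stored_procs", "critical_path": False, "risk": "high"},
]


def plan_hybrid(components):
    """Rebuild critical paths, lift-and-shift low-risk components, flag the rest for review."""
    plan = {"rebuild": [], "lift_and_shift": [], "review": []}
    for c in components:
        if c["critical_path"]:
            plan["rebuild"].append(c["name"])
        elif c["risk"] == "low":
            plan["lift_and_shift"].append(c["name"])
        else:
            plan["review"].append(c["name"])
    return plan


plan = plan_hybrid(COMPONENTS)
print(plan)
```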

Here is a comparison of these approaches:

| Approach | Risk | Time | Outcome Focus |
| --- | --- | --- | --- |
| Lift and Shift | Low | Short | Stability first |
| Rebuild | Medium | Long | Optimized and flexible |
| Hybrid | Medium | Medium | Incremental value |

These tactics deliver clear gains in cost and performance when they are guided by informed decisions and a phased execution strategy.

What Cost and Performance Benefits Follow?

Modernization yields measurable benefits that affect both operational efficiency and business outcomes.

Some outcomes seen after successful modernization include:

- Faster Query Performance

Users spend less time waiting for insights.

- Elastic Cost Control

Pay for use instead of pre‑allocated hardware.

- Improved Concurrency

More users can access systems without contention.

- Support for Diverse Analytics

Real‑time and historical analytics coexist.

For example, companies that adopt cloud data warehouses often see improved response times for key dashboards and reduced resource waste due to auto‑scaling.

To sustain these wins, organizations must adopt long‑term practices that maintain performance and control costs through ongoing evaluation and refinement.

How Do You Maintain Modernized Warehouses Long‑Term?

Maintenance is a continuous engineering practice. Just like application code requires updates, data platforms require monitoring, governance, validation, and adaptation as usage evolves.

Key practices include:

- Monitoring and Alerts

Track pipeline health and query performance in real time.

- Governance Frameworks

Apply consistent policies for access, lineage, and traceability.

- Cost Audits

Schedule periodic reviews to detect inefficiency or wasteful patterns.

- Schema Evolution Practices

Plan schema changes so that downstream systems are unaffected.

- Performance Benchmarks

Compare current performance against historical patterns to detect drift.
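
Comparing against historical patterns can start as simply as a z-score: flag a query when its latest latency sits far above its recent mean, and route that flag into the same alerting used for pipeline health. The three-standard-deviation threshold and the sample history are assumptions; seasonal workloads usually need a baseline per weekday or hour rather than one global mean.

```python
import statistics

# Hypothetical daily p95 latencies (ms) for one dashboard query.
HISTORY = [820, 790, 845, 810, 800, 835, 815]
TODAY = 1120


def detect_drift(history, current, threshold=3.0):
    """Flag `current` when it sits more than `threshold` standard deviations above the mean."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Perfectly flat history: treat any increase as drift.
        z = 0.0 if current <= mean else float("inf")
    else:
        z = (current - mean) / stdev
    return z > threshold, round(z, 1)


drifted, z_score = detect_drift(HISTORY, TODAY)
print(drifted, z_score)
```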

Data teams should treat these activities as part of their regular operational rhythm instead of sporadic exercises.

Here is a simple maintenance checklist:

| Activity | Purpose |
| --- | --- |
| Observability Setup | Track system behavior |
| Governance Policies | Maintain trust and compliance |
| Cost Review | Optimize spend |
| Performance Testing | Prevent regressions |
| Documentation Updates | Keep knowledge current |
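
Part of the cost review can be automated. Assuming you can export billed and busy hours per compute cluster or virtual warehouse (hypothetical fields here), the sketch below flags resources that spend most of their billed time idle; these are usually the first candidates for auto-suspend or downsizing. The 50% threshold is an arbitrary starting point.

```python
# Hypothetical per-warehouse usage exported for a monthly cost review.
USAGE = [
    {"warehouse": "etl_wh", "billed_hours": 310, "busy_hours": 290},
    {"warehouse": "bi_wh", "billed_hours": 500, "busy_hours": 150},
    {"warehouse": "sandbox_wh", "billed_hours": 120, "busy_hours": 12},
]


def flag_idle(usage, max_idle_share=0.5):
    """Return (warehouse, idle share) for resources idle more than max_idle_share of billed time."""
    flagged = []
    for u in usage:
        idle_share = 1 - u["busy_hours"] / u["billed_hours"]
        if idle_share > max_idle_share:
            flagged.append((u["warehouse"], round(idle_share, 2)))
    return flagged


print(flag_idle(USAGE))  # [('bi_wh', 0.7), ('sandbox_wh', 0.9)]
```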

Start your project with this checklist in mind. It helps organizations move from reactive maintenance to proactive improvement.

Key Takeaways and What to Do Next

Data warehouse modernization is an evolutionary process that changes how data supports decisions and innovation. Modern systems deliver flexibility and cost efficiency that legacy platforms cannot sustain.

To begin your journey:

- Assess your current state with clear criteria.

- Choose the migration approach that suits your needs.

- Embrace post‑migration practices that embed performance and clarity into your operations.

Use the readiness assessment above to evaluate where your team stands and determine the next steps. With thoughtful planning and continuous engineering attention, your modernized data warehouse can become a lasting source of business value.