Most enterprises that have invested in AI over the past five years have something to show for it, such as a proof of concept, a working prototype, or a model deployed in a controlled environment.

What many of them do not have is a scalable AI capability that delivers measurable business value across the organization.

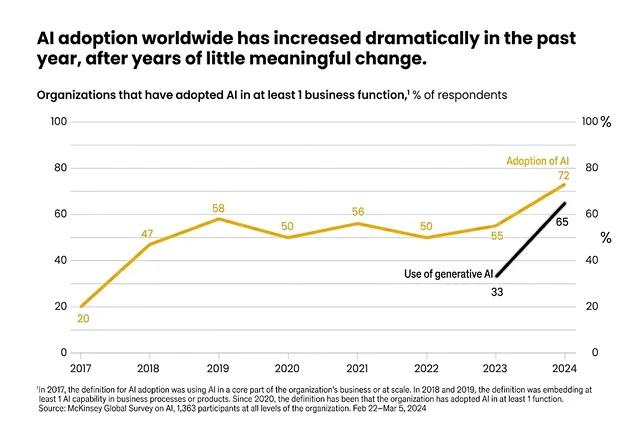

According to the 2024 McKinsey State of AI Report, 72% of organizations had adopted AI in at least one business function, up from the roughly 50% level where adoption had hovered for the previous six years.

Adoption, however, does not equal impact. The organizations that move from adoption to outcome are those that built their AI programs on a structured strategy, grounded in clear business goals and executed in the right sequence.

An AI strategy roadmap is the structure that makes that difference. This guide walks through the seven steps and key components required to build an effective AI strategy roadmap that works beyond the pilot stage, from defining business goals through governance of production systems.

Step-by-Step AI Strategy Roadmap Framework

Organizations that treat AI as a sequence of isolated experiments rarely reach scale. A roadmap addresses this by sequencing decisions correctly: business goals before technology selection, validated use cases before infrastructure investment, and controlled pilots before organization-wide deployment.

Each step below creates the conditions that make the next step executable, and skipping any step typically forces a return to it later at greater cost.

Step 1: Define Business Goals & AI Vision

AI strategy begins with defining business goals. Technology selection comes later, once the specific business problems AI is meant to solve have been identified at a level of detail that allows them to be measured.

The first step is establishing a direct connection between each AI initiative and the corresponding strategic objective, like revenue growth, cost reduction, customer experience improvement, or operational efficiency.

Vague goals produce unaccountable outcomes. A target like “reduce customer churn by 15%” or “automate 30% of manual invoice processing workflows” creates a measurable baseline against which AI performance can be evaluated throughout implementation, and gives business owners a clear accountability metric that extends beyond technical model performance.
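A measurable goal can be captured as a small record that pairs a baseline with a target and a named business owner. The sketch below is illustrative only: the `KpiTarget` class, the owner title, and the 20% baseline churn rate are assumptions, not figures from any real program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiTarget:
    """A measurable business goal tied to one AI initiative (hypothetical structure)."""
    name: str
    owner: str        # accountable business owner, not the model team
    baseline: float   # value measured before the initiative starts
    target: float     # value the initiative is expected to reach

    def progress(self, current: float) -> float:
        """Fraction of the baseline-to-target gap closed so far."""
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap


# "Reduce customer churn by 15%", assuming a baseline churn rate of 20%:
# a 15% relative reduction puts the target at 17%.
churn = KpiTarget("monthly churn rate", "VP Customer Success", 0.20, 0.17)
print(f"{churn.progress(0.185):.0%} of the way to target")
```

Recording the baseline alongside the target is what lets the same metric be reported at every stage of implementation, independent of technical model performance.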

AI vision is distinct from near-term goals. Goals are specific and time-bound. Vision articulates the role AI will play in the organization over a three-to-five-year horizon and guides decisions about where to invest, what to build internally, and what to acquire or partner for.

Without a defined vision, AI strategy defaults to a sequence of tactical responses to individual business unit requests and loses the coherence required to build durable organizational capability over time.

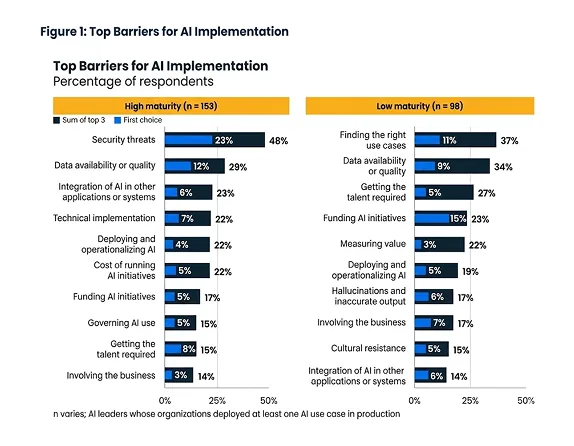

Step 2: Assess AI Maturity

Before committing to a roadmap timeline or a technology stack, organizations need an honest assessment of where they currently stand across three dimensions:

- Data

- Technology

- Talent

A beginner-stage organization typically has fragmented data across multiple systems, limited internal data science capability, and no established model deployment infrastructure.

An intermediate-stage organization has functional data pipelines and some in-house AI experience but lacks the governance and scaling mechanisms to move pilots into production reliably.

An advanced-stage organization has integrated data infrastructure, operational MLOps capability, and established governance frameworks for responsible AI deployment.
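One way to make the assessment repeatable is to score each dimension and let the weakest one set the stage. The sketch below assumes a 1-to-5 self-assessment scale and cut-off values that are purely illustrative; the "weakest dimension wins" rule is also an assumption, chosen because strength in one area cannot compensate for gaps in another.

```python
from enum import Enum


class Stage(Enum):
    BEGINNER = "beginner"
    INTERMEDIATE = "intermediate"
    ADVANCED = "advanced"


DIMENSIONS = ("data", "technology", "talent")


def assess_maturity(scores: dict) -> Stage:
    """Classify maturity from 1-5 self-assessment scores per dimension.

    The lowest-scoring dimension determines the stage (illustrative rule).
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    weakest = min(scores[d] for d in DIMENSIONS)
    if weakest >= 4:       # hypothetical cut-off
        return Stage.ADVANCED
    if weakest >= 3:       # hypothetical cut-off
        return Stage.INTERMEDIATE
    return Stage.BEGINNER


# Strong tooling does not offset fragmented data.
print(assess_maturity({"data": 2, "technology": 4, "talent": 3}).value)
```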

According to the 2025 Gartner AI Maturity Survey, 45% of leaders in high-maturity organizations keep AI in production for at least three years, compared to 20% in low-maturity organizations.

That gap in production longevity reflects the payoff of investing in the right foundational capabilities before scaling.

Organizations that skip this assessment often invest in advanced AI capabilities before their foundational systems can support them. In those cases the models themselves rarely fail; the data pipelines, integration layers, and talent structures they depend on do.

Setting realistic expectations early prevents over-investment in solutions that the organization is not yet ready to operate.

Step 3: Identify High-Impact Use Cases

Not all AI use cases merit the same priority. The ones that belong on an early roadmap are those that satisfy two criteria simultaneously:

- Clear ROI potential

- Accessible data

Clear ROI potential means the business value of the outcome is measurable and attributable to the AI solution.

Customer support automation that reduces average handling time, demand forecasting that reduces inventory carrying costs, and fraud detection that reduces financial loss exposure are all examples where value can be quantified and tracked over time.

Accessible data means the data required to train and validate the model already exists in a structured, usable form. Use cases that require extensive data collection, labeling, or transformation before a model can be trained extend timelines and increase early-stage risk significantly.

Use cases that score high on both criteria should anchor the early roadmap. Complex projects with unclear ROI or sparse data should be deferred until the organization has validated its delivery process on simpler, higher-confidence use cases.
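The two-criteria filter can be sketched as a simple scoring pass. Everything here is an assumption for illustration: the 1-to-5 scales, the threshold of 4, and the scores given to each candidate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    roi_potential: int       # 1 (unclear) to 5 (measurable and attributable)
    data_accessibility: int  # 1 (must be collected) to 5 (structured and ready)


def prioritize(use_cases, threshold=4):
    """Split use cases into an early roadmap and a deferred list.

    A use case is 'early' only if it meets the threshold on BOTH criteria.
    """
    early = [u for u in use_cases
             if u.roi_potential >= threshold and u.data_accessibility >= threshold]
    deferred = [u for u in use_cases if u not in early]
    # Rank early candidates by their weaker criterion, then by total score.
    early.sort(key=lambda u: (min(u.roi_potential, u.data_accessibility),
                              u.roi_potential + u.data_accessibility),
               reverse=True)
    return early, deferred


candidates = [
    UseCase("support automation", 4, 5),
    UseCase("demand forecasting", 5, 4),
    UseCase("fraud detection", 5, 5),
    UseCase("generative product design", 2, 2),
]
early, deferred = prioritize(candidates)
print("early:", [u.name for u in early])
print("deferred:", [u.name for u in deferred])
```

Requiring both scores to clear the threshold, rather than averaging them, keeps a high-ROI use case with inaccessible data off the early roadmap.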

Demonstrating value early is more strategically valuable than attempting ambitious projects that stall before reaching production.

Step 4: Build Data & Infrastructure Foundation

AI systems perform at the level of the data they are trained on. An organization can have the right use cases, the right talent, and the right tools and still produce unreliable models if the underlying data quality is poor.

Building the data foundation involves three parallel tracks:

- Data pipelines that ingest, clean, and transform data consistently

- Storage systems that make data accessible to both technical and non-technical teams

- Governance frameworks that enforce data quality standards, access controls, and lineage tracking
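As a minimal sketch of what enforcing a data quality standard can look like inside a pipeline, the check below flags fields whose share of missing values exceeds a limit. The function name, the sample invoice records, and the 10% limit are all hypothetical.

```python
def validate_records(records, required_fields, max_null_rate=0.05):
    """Return {field: null_rate} for required fields that exceed max_null_rate."""
    if not records:
        raise ValueError("no records to validate")
    failures = {}
    for field in required_fields:
        # Treat None and empty strings as missing values.
        nulls = sum(1 for r in records if r.get(field) in (None, ""))
        rate = nulls / len(records)
        if rate > max_null_rate:
            failures[field] = rate
    return failures


# Hypothetical sample data for illustration.
invoices = [
    {"invoice_id": "A1", "amount": 120.0, "vendor": "Acme"},
    {"invoice_id": "A2", "amount": None, "vendor": "Acme"},
    {"invoice_id": "A3", "amount": 75.5, "vendor": ""},
    {"invoice_id": "A4", "amount": 10.0, "vendor": "Globex"},
]
print(validate_records(invoices, ["invoice_id", "amount", "vendor"], max_null_rate=0.10))
```

A check like this would typically run before data reaches model training, so that quality failures block the pipeline instead of surfacing later as unreliable model outputs.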

Infrastructure choices at this stage should account for scale. Cloud platforms provide the compute elasticity and storage flexibility that AI workloads require as they grow beyond initial pilots.

Organizations that build data foundations on legacy on-premises infrastructure encounter capacity and connectivity constraints when attempting to scale, often requiring a costly re-architecture that delays the roadmap.

Data governance at this stage is a technical requirement. Models trained on poorly governed data produce outputs that are unreliable, difficult to audit, and potentially non-compliant with applicable regulations.

Getting governance in place before the first model reaches production is significantly less costly than retrofitting it after live systems are affected.

Step 5: Develop & Pilot AI Solutions

The pilot phase is where roadmap assumptions meet operational reality. Rather than attempting a full production deployment, organizations should begin with small, controlled pilots that test a specific hypothesis in a defined scope, such as a single business process, a single data source, or a single team.

Minimum viable models are the right output for pilots. The goal is to generate enough evidence to validate the use case and the approach before committing the resources required for full deployment.

A pilot that produces evidence of value creation, however modest, is worth more than a comprehensive model that spent six months in development without stakeholder validation.

Stakeholder buy-in is a practical outcome of well-executed pilots. When business users can see AI working on their actual data and actual processes, the organizational resistance that often slows AI scaling decreases.

Documenting pilot results in business terms (cost savings, time reduction, error rate improvement) rather than model performance metrics is the most effective way to build the internal support the scaling phase requires.

Step 6: Scale AI Across the Organization

Scaling AI is as much an organizational challenge as a technical one. The processes, governance structures, and change management approaches required to move AI from one business unit to the entire organization are more demanding than anything required in the pilot phase.

Technically, scaling requires MLOps practices, such as standardized model training and deployment pipelines, automated monitoring, version control, and continuous retraining protocols that keep models performing as business conditions change.

Without MLOps, each new deployment becomes a manual project that consumes engineering resources disproportionate to the value it delivers.

Organizationally, scaling requires cross-functional collaboration. AI solutions that integrate into core business workflows, such as order management, customer service, and financial reporting, cross team boundaries by definition.

The business units that own the workflows, the data engineering teams that manage the underlying data, and the AI teams that build the models must operate from a shared understanding of timelines, integration requirements, and success criteria.

Scaling without that shared plan produces a fragmented AI estate: many individual models with varying quality, documentation, and governance levels, creating the kind of maintenance overhead that erodes the efficiency gains AI was designed to deliver.

Step 7: Monitor, Optimize & Govern AI Systems

Deploying an AI model marks the beginning of an operational commitment. Production AI systems require active management across performance, compliance, and ethics dimensions that persist for as long as the model is in use.

Model performance degrades over time as real-world data distributions shift away from the training data on which the model was built. This is model drift, and it is inevitable in any AI system operating in a dynamic environment.

Monitoring systems must track model outputs against performance baselines and trigger retraining or recalibration when drift exceeds acceptable thresholds.
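A drift check of this kind can be sketched with the Population Stability Index (PSI), one commonly used drift statistic. The choice of PSI, the ten bins, and the 0.2 retraining threshold are assumptions for illustration; the appropriate statistic and threshold depend on the model and the use case.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a recent sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if lo == hi:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def needs_retraining(baseline, recent, threshold=0.2):
    """Flag drift when PSI exceeds the (illustrative) threshold."""
    return psi(baseline, recent) > threshold


baseline = [i / 100 for i in range(100)]        # feature values at training time
shifted = [0.5 + i / 200 for i in range(100)]   # recent values, shifted upward
print(needs_retraining(baseline, baseline), needs_retraining(baseline, shifted))
```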

According to Gartner’s 2024 Analysis of GenAI Project Failures, at least 50% of generative AI proof-of-concept projects were abandoned by the end of 2024 due to poor data quality, inadequate risk controls, or unclear business value, which are problems that robust governance frameworks are specifically designed to prevent.

Governance frameworks define the rules under which AI systems operate:

- What data they can access

- How their decisions are documented

- Who is accountable for outcomes

- How compliance with applicable regulations is maintained

Establishing governance after deployment creates a retroactive compliance challenge more disruptive than building it into the deployment process from the start.

Bias monitoring deserves explicit attention alongside performance monitoring. AI systems can produce systematically unfair outcomes without intent, and without active monitoring, those outcomes may persist undetected for extended periods, creating regulatory and reputational exposure that compounds over time.
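One simple form of bias monitoring compares positive-outcome rates across groups. The sketch below computes a disparate impact ratio (lowest group rate divided by highest) on made-up decisions; the group labels, the data, and the 0.8 alert threshold are illustrative assumptions, and a real program would select fairness metrics to match its regulatory context.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, approved) pairs -> approval rate per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, approved in outcomes:
        totals[group] += 1
        positives[group] += int(bool(approved))
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(outcomes):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives positive outcomes, so rates are equal
    return min(rates.values()) / highest


# Hypothetical decisions: group A approved 60 of 100, group B approved 42 of 100.
decisions = ([("A", True)] * 60 + [("A", False)] * 40
             + [("B", True)] * 42 + [("B", False)] * 58)
ratio = disparate_impact_ratio(decisions)
if ratio < 0.8:  # illustrative alert threshold
    print(f"fairness alert: ratio {ratio:.2f}")
```

Running a check like this on a schedule, alongside performance monitoring, is what keeps unfair outcomes from persisting undetected.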

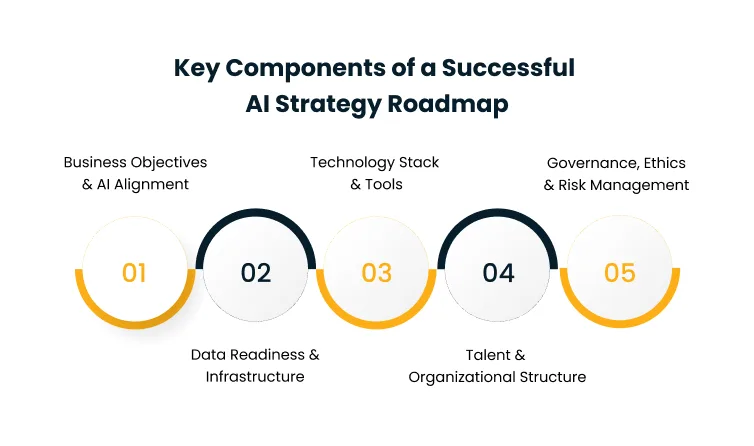

Key Components of a Successful AI Strategy Roadmap

A step-by-step framework answers how to sequence AI adoption. The components below address what structural conditions must be in place for that sequence to produce durable results.

These components function less like project deliverables and more like operating conditions: their presence or absence determines whether the roadmap produces lasting organizational AI capability or a temporary lift that fades as conditions change.

1. Business Objectives & AI Alignment

AI investment that is not anchored to a specific business objective has no reliable mechanism for evaluating whether it is working.

Without a defined objective, AI projects tend to be measured against technical metrics, like model accuracy and inference latency, rather than business metrics like revenue impact, cost reduction, or customer retention.

Leadership alignment must exist before this component can function. When senior leadership has not explicitly committed to AI as a strategic priority and defined the outcomes it is expected to produce, AI teams operate without a clear mandate. Individual projects may succeed technically while the organization fails to extract the strategic value the investment was intended to create.

Defining KPIs before implementation and assigning accountability for those KPIs to business owners is the mechanism that keeps AI aligned with business objectives as the program scales.

2. Data Readiness & Infrastructure

The quality of an AI system’s outputs is bounded by the quality of its training data. Organizations consistently underestimate the effort required to bring data to a state where AI systems can use it effectively.

Data readiness requires accuracy: data that reflects the current state of the business and has been validated for correctness. It requires consistency: data that follows uniform formats and definitions across systems and business units.

It requires accessibility: data that can be retrieved by the teams and systems that need it, at the latency the use case demands.

Infrastructure readiness means the compute and storage systems that will train, deploy, and serve AI models can handle the workloads they generate, both at initial deployment and as usage scales.

Investing in data engineering capabilities, storage architecture, and governance tooling before AI projects begin is consistently more cost-effective than retrofitting these foundations after models are in production.

Cygnet.one’s Data Engineering and Management Services help enterprises establish the pipelines, governance frameworks, and storage architecture required to support production-grade AI workloads before the first model reaches deployment.

3. Technology Stack & Tools

Technology selection for AI is a common source of overinvestment and misalignment. Organizations that select tools based on industry attention rather than what matches their actual use cases and team capabilities end up with underutilized platforms, integration complexity, and skill gaps that slow delivery.

The relevant decisions cover machine learning platforms, model serving infrastructure, data pipeline tooling, and monitoring and observability systems. Each choice has implications for cost, deployment speed, and the skills required to operate the stack.

The build-versus-buy decision runs through each layer. Organizations with smaller AI teams and well-defined use cases typically benefit from managed cloud AI services that reduce operational complexity.

Those with larger teams and more differentiated requirements may justify custom solutions where additional control delivers measurable value.

Both are defensible choices, but making either without assessing team capability and use case requirements against cost and complexity produces predictable mismatches.

4. Talent & Organizational Structure

AI execution depends on human capability. The core technical roles, such as data scientists, machine learning engineers, and data engineers, are in high demand and short supply.

Organizations that approach talent strategy reactively, hiring only when a specific project creates an immediate need, consistently encounter delivery delays that compress timelines and inflate costs.

Beyond technical roles, AI teams require domain experts who understand the business processes the models are meant to improve. A demand forecasting model built without input from the supply chain team it is intended to serve will optimize for the wrong variables.

Cross-functional collaboration between AI practitioners and business domain experts is a structural requirement, not an optional enhancement.

The organizational design question, centralized AI center of excellence versus distributed AI capability across business units, involves genuine trade-offs.

Centralized models build expertise faster and standardize practices more effectively. Distributed models develop closer alignment with individual business unit needs.

Many organizations adopt a hybrid approach as AI capability matures, starting with centralization and distributing execution once standards and infrastructure are established.

5. Governance, Ethics & Risk Management

AI governance functions as a design requirement that shapes how AI systems are architected, trained, monitored, and decommissioned. Organizations that treat it as a post-deployment compliance check consistently face more disruptive and expensive problems than those that build governance in from the start.

Transparency requirements define what documentation must exist for each model:

- What data it was trained on

- What objectives it was optimized for

- What limitations apply to its outputs

These serve both regulatory compliance and internal accountability purposes.
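The documentation requirements above can be sketched as a structured record that is checked for completeness before a model is approved for deployment. The `ModelRecord` class, its field names, and the example values are hypothetical, not a standard schema.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class ModelRecord:
    """Minimal transparency record for one model version (hypothetical schema)."""
    name: str
    version: str
    training_data: list   # datasets the model was trained on
    objective: str        # what the model was optimized for
    limitations: list = field(default_factory=list)  # known limits on its outputs
    owner: str = ""       # person accountable for outcomes

    def missing_fields(self):
        """Names of fields that are still empty and block sign-off."""
        return [key for key, value in asdict(self).items() if not value]


record = ModelRecord(
    name="invoice-classifier",
    version="0.1.0",
    training_data=["2023 invoice archive"],
    objective="minimize misrouted invoices",
)
print(record.missing_fields())
```

Treating an incomplete record as a deployment blocker is one way to make transparency a design requirement instead of a post-deployment check.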

Fairness and bias requirements define how models must be evaluated before deployment and how monitoring must continue after.

Regulations in financial services, healthcare, and employment contexts increasingly require that AI systems demonstrate fairness across demographic groups and that organizations can produce evidence of that assessment.

According to the 2026 Gartner Analysis of AI Projects in Infrastructure and Operations, 57% of leaders whose AI initiatives failed said they had expected too much, too fast. Governance and risk management frameworks are specifically designed to prevent that outcome by establishing realistic checkpoints at each stage of deployment.

Risk management connects governance requirements to organizational accountability, defining who is responsible for each obligation and what remediation process applies when a threshold is exceeded.

Conclusion

AI capability compounds when it is built deliberately.

Organizations that invest in the foundational elements first, such as aligned business objectives, data readiness, appropriate technology, capable teams, and proactive governance, find that successive AI initiatives are faster to execute and more likely to produce the business outcomes the roadmap was built to achieve.

Moving from AI strategy to production-grade capability requires both a clear framework and the technical expertise to execute it at each phase.

Cygnet.one’s Data Analytics & AI Services support enterprises through every stage of AI adoption, from maturity assessment and use case prioritization to model development, scaling, and governance.


Frequently Asked Questions

What is an AI strategy roadmap?

An AI strategy roadmap is a structured plan that outlines how an organization adopts, implements, and scales AI in alignment with its business goals. It sequences the decisions, from goal-setting and maturity assessment to use case selection, infrastructure development, and governance, required to move AI from experimentation to production value.

Why do organizations need an AI strategy?

Without a defined strategy, AI investments tend to be misaligned with business priorities, use cases are selected without clear ROI criteria, and organizations build capabilities in the wrong sequence. A strategy ensures AI initiatives are evaluated against measurable objectives, resources are deployed where they generate the highest return, and governance is established before compliance exposure becomes a problem.

How long does it take to implement an AI strategy roadmap?

Initial results from well-scoped pilot projects are typically visible within two to three months. Full-scale implementation across multiple business functions generally takes six to eighteen months, depending on workload complexity, the organization’s starting level of data readiness, and the extent of infrastructure investment required. Enterprise-wide programs that include significant legacy system integration can take longer.

What are the most common challenges in implementing an AI strategy?

The most commonly cited challenges are poor data quality, a shortage of skilled AI talent, unclear business objectives, and difficulty moving successful pilots into production at scale. Governance and compliance requirements add complexity in regulated industries. Organizations that underestimate the maturity assessment step often discover these challenges only after committing significant resources to use cases their current infrastructure cannot support.

How is the success of an AI strategy measured?

AI success is measured through the business metrics defined before implementation began: revenue growth, cost savings, operational efficiency improvements, or reduction in error rates. Technical model performance metrics such as accuracy and latency are relevant inputs but are not the primary measure of strategic success. Programs that track business impact consistently from pilot through scale produce more defensible ROI evidence than those that measure model performance in isolation.