Every enterprise has data. Very few can reliably use it. Raw data accumulates across dozens of disconnected systems, inconsistently formatted, poorly documented, and accessible only to the engineers who know where to look.

The gap between data collected and data usable is an engineering problem. Analytics initiatives stall on unreliable inputs. AI projects collapse under poor data quality or incomplete pipelines. Reporting teams rebuild the same transformations manually because there is no shared infrastructure underneath them.

Data engineering services close that gap. They design and build the technical systems that transform raw organizational data into clean, structured, reliably flowing inputs that analytics, BI, and AI teams can actually depend on.

For enterprises managing growing data volumes across distributed environments, the question is not whether to invest in data engineering but whether the architecture is designed well enough to scale.

This guide covers what data engineering services involve, the types available, how to choose the right provider, and why modern enterprises treat data infrastructure as a strategic priority.

What is a data engineering service?

Data engineering services are the practices, tools, and processes used to design, build, and maintain the technical infrastructure that transforms raw data into accessible, structured formats for analytics and business use.

These services span the full data lifecycle, which includes ingestion from multiple sources, transformation through ETL and ELT processes, storage in data warehouses or lakes, and pipeline orchestration to keep data flowing reliably.

For enterprises managing large volumes of data across distributed systems, data engineering services form the operational foundation that makes analytics, reporting, and AI initiatives viable at scale.

What are the types of data engineering services?

The category of data engineering services covers a wide range of technical capabilities, and different organizations need different combinations depending on data volumes, source complexity, latency requirements, and business goals.

Understanding what each type of service involves and where it is best applied helps organizations make sound infrastructure decisions rather than defaulting to the most visible option.

The three models below represent the most common service types and the contexts where each performs best.

1. Batch vs Real-Time Data Processing

Batch and real-time processing represent two approaches to data movement, and most enterprise architectures depend on both.

Batch processing collects and handles data in scheduled intervals, such as end-of-day financial reconciliation, weekly reporting aggregations, or monthly analysis runs.

Data accumulates over a defined period and is processed as a group. This model is efficient and cost-effective for workloads where timing is flexible and the exact latency of results does not affect business outcomes.

Real-time processing handles data as it arrives. Event streams are ingested continuously and processed immediately, and results are available within seconds.

This model is necessary for workloads where latency has direct business consequences, including fraud detection on payment transactions, live operational dashboards, and recommendation engines that personalize user experiences in the moment.

The right choice depends on what downstream systems actually require. Most production environments combine both models, routing high-frequency operational data through streaming pipelines while batch jobs handle historical aggregation and archival workloads.
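
The split between the two models can be sketched in a few lines of Python. This is a minimal illustration rather than a production design: the event fields (`account`, `amount`, `day`), the `FRAUD_THRESHOLD` value, and both function names are assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List

# Hypothetical threshold, chosen only for illustration.
FRAUD_THRESHOLD = 10_000.0


def batch_daily_totals(events: List[dict]) -> Dict[str, float]:
    """Batch path: process an accumulated set of events as one group."""
    totals: Dict[str, float] = defaultdict(float)
    for event in events:
        totals[event["day"]] += event["amount"]
    return dict(totals)


def stream_flag_large_payments(events: Iterable[dict]) -> Iterator[dict]:
    """Streaming path: inspect each event as it arrives and emit alerts at once."""
    for event in events:
        if event["amount"] >= FRAUD_THRESHOLD:
            yield {"account": event["account"], "amount": event["amount"]}


events = [
    {"account": "A1", "amount": 250.0, "day": "2025-01-01"},
    {"account": "B2", "amount": 12_500.0, "day": "2025-01-01"},
    {"account": "A1", "amount": 80.0, "day": "2025-01-02"},
]

# The batch job sees the whole list; the stream consumer sees one event at a time.
daily_totals = batch_daily_totals(events)
alerts = list(stream_flag_large_payments(iter(events)))
```

The batch function needs the full set of events before it can return anything, while the generator produces an alert as soon as a qualifying event passes through it. In a real deployment the generator would read from a message broker rather than a list.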

2. Cloud Data Engineering Services

Cloud platforms have fundamentally shifted how enterprises approach data infrastructure. AWS, Azure, and Google Cloud each offer managed data engineering primitives, including serverless ETL services, managed data warehouses, and scalable storage layers.

Cloud data engineering services build production-grade pipelines on top of these foundations, handling the configuration, optimization, and operational complexity that raw cloud tooling alone does not resolve.

The business case is strongest for organizations with variable data volumes, teams that need to move quickly, or businesses that want to avoid on-premise infrastructure investment.

Scalability becomes a configuration change rather than a procurement cycle. According to a 2024 Gartner forecast on public cloud end-user spending, worldwide public cloud spending is projected to reach $723 billion in 2025, reflecting the scale at which enterprises are committing workloads to cloud-native architectures.

3. Big Data Engineering Solutions

Big data engineering addresses workloads that are too large, too fast, or too varied for conventional database systems to handle.

Frameworks like Apache Hadoop and Apache Spark enable distributed computation across clusters of commodity hardware, allowing organizations to process petabyte-scale datasets without centralizing all compute in a single system.

The distinguishing characteristic is design philosophy. Big data architectures are built for horizontal scalability, parallel reads across large data partitions, and fault tolerance embedded at the infrastructure level. These are decisions made at the architecture stage.

Relevant use cases include the following.

- Processing clickstream or event data from consumer applications with billions of daily interactions

- Running computational models across large genomic, financial, or scientific datasets

- Aggregating and analyzing telemetry from IoT device networks distributed across locations

Organizations do not always need big data engineering. Where data volumes are manageable through standard cloud warehousing, introducing distributed computing adds complexity without proportional benefit. Knowing when this class of solution applies is part of what a qualified provider brings.

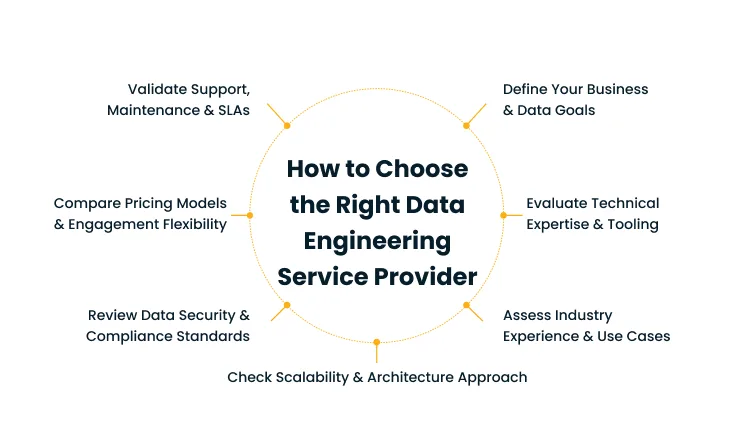

How to choose the right data engineering service provider

Choosing a data engineering service provider is an architectural decision with long-term infrastructure implications. The systems a provider builds will shape the data capabilities available to the organization for years, and reversing poor choices requires significant time, cost, and operational disruption.

1. Define Your Business and Data Goals

Before evaluating any provider, define what the engagement needs to achieve in concrete terms. Vague goals produce loosely scoped proposals that cannot be meaningfully compared. Specific objectives create clearer evaluation criteria.

Working through these questions before any provider conversation sharpens the scope considerably.

- Which data sources need to be connected, and what is the current reliability of each?

- What downstream consumers will use the data, and what latency do they require?

- Is the primary objective to consolidate existing infrastructure, build new capabilities, or both?

- What in-house data engineering capacity exists today, and where does it fall short?

Providers who ask these questions during scoping are worth paying attention to. Providers who skip to tooling recommendations without understanding the use case are demonstrating something about how they approach engagements.

2. Evaluate Technical Expertise and Tooling

The data engineering stack is wide, and the depth of expertise varies considerably across providers. A firm with solid SQL and basic ETL experience delivers different outcomes than one with hands-on production experience in distributed compute, streaming pipelines, and cloud-native orchestration.

Specific areas to evaluate include the following.

- Apache Spark, Flink, or Beam for complex transformation and large-scale batch processing

- Airflow, Dagster, or Prefect for production pipeline orchestration and scheduling

- Snowflake, Databricks, BigQuery, or Redshift as cloud data platform environments

- Terraform and CI/CD frameworks for infrastructure automation and pipeline testing

Ask for specific architecture examples. A provider who can describe the trade-offs in a past orchestration tool migration has real operational experience. A provider who lists tools without specifics may not.

3. Assess Industry Experience and Use Cases

Data engineering requirements vary by sector. Financial services organizations face different data governance and latency requirements than healthcare systems or retail platforms. A provider with industry-specific experience brings knowledge of those constraints that purely technical expertise does not cover.

Ask for case studies from the same sector. If none exist, ask how the provider has managed analogous constraints in adjacent industries. Absence of direct experience is a risk to account for in project planning, not an automatic disqualifier. Knowing the risk allows the organization to offset it with appropriate oversight.

4. Check Scalability and Architecture Approach

The systems built today need to handle the data volumes and use cases of three years from now. Providers who design for present requirements without headroom for growth produce architectures that require rebuilds sooner than expected.

Useful indicators of a scalability-first approach include the following.

- Modular designs that separate ingestion, transformation, and serving layers

- Infrastructure sized with appropriate headroom rather than optimized purely for current load

- Prior experience scaling systems through significant volume increases or expanding to multi-cloud environments
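
The first indicator, separating ingestion, transformation, and serving, can be sketched as three functions that only communicate through plain data. This is a simplified illustration under assumed names: the `sku,qty` input format and the functions `ingest`, `transform`, and `serve` are hypothetical.

```python
from typing import Dict, Iterable, List


def ingest(raw_lines: Iterable[str]) -> List[dict]:
    """Ingestion layer: parse raw input into rows, with no business logic."""
    rows = []
    for line in raw_lines:
        sku, qty = line.split(",")
        rows.append({"sku": sku.strip(), "qty": int(qty)})
    return rows


def transform(rows: List[dict]) -> Dict[str, int]:
    """Transformation layer: aggregate quantities per SKU."""
    totals: Dict[str, int] = {}
    for row in rows:
        totals[row["sku"]] = totals.get(row["sku"], 0) + row["qty"]
    return totals


def serve(totals: Dict[str, int], min_qty: int) -> List[str]:
    """Serving layer: answer a consumer query against the transformed data."""
    return sorted(sku for sku, qty in totals.items() if qty >= min_qty)


raw = ["A-1, 2", "B-7, 5", "A-1, 4"]
top_skus = serve(transform(ingest(raw)), min_qty=5)
```

Because each layer depends only on the shape of the data handed to it, any one of them can be replaced or scaled without rewriting the others.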

Architecture decisions made early are significantly more expensive to reverse than to make correctly from the start.

5. Review Data Security and Compliance Standards

Data infrastructure handles sensitive assets, and the security posture of the provider’s design carries regulatory and operational consequences. Compliance frameworks in finance, healthcare, and government impose constraints that need to be met before deployment.

Key areas to assess include the following.

- Encryption standards applied at rest and in transit

- Role-based access control implemented at the data layer

- Data masking and anonymization approaches for sensitive attributes

- Audit logging and lineage tracking for regulatory review

- Alignment with relevant frameworks such as GDPR, HIPAA, or SOC 2
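
Two of these controls, role-based access and masking of sensitive attributes, can be illustrated with a short Python sketch. Everything named here is an assumption for the example: the `ROLE_COLUMNS` policy, the `SENSITIVE_COLUMNS` set, and the hard-coded `PSEUDONYM_KEY`, which in practice would come from a secrets manager. Note that a keyed hash is pseudonymization, not full anonymization.

```python
import hashlib
import hmac

# Hypothetical secret, hard-coded only to keep the example self-contained.
PSEUDONYM_KEY = b"example-key-not-for-production"

# Hypothetical role-to-column policy for a customer table.
ROLE_COLUMNS = {
    "analyst": {"customer_id", "country", "email"},
    "support": {"customer_id", "email", "phone"},
}
SENSITIVE_COLUMNS = {"email", "phone"}


def pseudonymize(value: str) -> str:
    """Replace a sensitive value with a keyed hash so equal inputs still match."""
    digest = hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def view_for_role(record: dict, role: str, unmask: bool = False) -> dict:
    """Return only the columns a role may see, masking sensitive ones by default."""
    allowed = ROLE_COLUMNS.get(role, set())
    visible = {}
    for column, value in record.items():
        if column not in allowed:
            continue
        if column in SENSITIVE_COLUMNS and not unmask:
            value = pseudonymize(value)
        visible[column] = value
    return visible


record = {"customer_id": 42, "country": "DE", "email": "a@example.com", "phone": "+49-000"}
analyst_view = view_for_role(record, "analyst")
```

The point of the sketch is that the policy lives at the data layer: an unknown role sees nothing, and a permitted role sees masked values unless unmasking is explicitly requested.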

A provider that treats security as a configuration layer added at the end of a project is creating risk that the organization carries long after the engagement closes.

6. Compare Pricing Models and Engagement Flexibility

Three pricing models are standard in data engineering engagements.

1. Fixed-scope project delivery: Defined output, timeline, and cost. Works when requirements are stable and fully understood upfront.

2. Time-and-materials: Billed by effort consumed. Suited to exploratory work, evolving requirements, or ongoing pipeline enhancements.

3. Dedicated team engagement: An embedded team operating as an extension of the in-house data function. Appropriate when data engineering is a sustained organizational capability rather than a one-time build.

Each model carries distinct risks. Fixed-scope engagements are exposed to scope creep. Time-and-materials engagements require active cost oversight. Dedicated teams require the organization to manage an external group with the same clarity it applies to internal staff. The right model depends on how well requirements are defined and how long the engagement needs to run.

7. Validate Support, Maintenance, and SLAs

Production data pipelines fail. The question is how quickly the team responsible for those systems identifies failures and restores service. SLA terms should reflect the criticality of the systems in scope, because a four-hour gap in a fraud detection feed carries materially different consequences than a delayed weekly report.

Before committing to a provider, verify the following.

- Defined response SLAs for critical and non-critical pipeline failures

- Whether monitoring is proactive or only activated on escalation

- Availability of 24/7 on-call coverage for production environments

- The handover and documentation process for pipeline knowledge transfer
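
Tying SLA terms to criticality can be expressed as a simple freshness check. The sketch below is illustrative only: the tier names and the staleness limits in `MAX_STALENESS` are hypothetical values, not recommended targets.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical staleness limits per criticality tier.
MAX_STALENESS = {
    "critical": timedelta(minutes=15),
    "standard": timedelta(hours=24),
}


def sla_breached(last_success: datetime, tier: str, now: datetime) -> bool:
    """Return True when a pipeline's last successful run is older than its tier allows."""
    return now - last_success > MAX_STALENESS[tier]


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
four_hours_ago = now - timedelta(hours=4)

# The same four-hour gap breaches a critical feed but not a standard report.
fraud_feed_breached = sla_breached(four_hours_ago, "critical", now)
weekly_report_breached = sla_breached(four_hours_ago, "standard", now)
```

A proactive monitoring setup would run a check like this on a schedule and page the on-call engineer, rather than waiting for a consumer to notice stale data.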

Why data engineering services matter for modern enterprises

Enterprise data environments have grown significantly more complex over the past decade. Organizations now produce data continuously across dozens of systems, including CRM platforms, transactional databases, IoT devices, third-party APIs, and customer-facing applications.

Without the infrastructure to collect, process, and distribute this data reliably, it accumulates as raw noise rather than converting into business insight. Data engineering services provide the technical foundation that makes the difference between the data the organization talks about and the data it can actually use.

1. Handling Rapid Data Growth Across Systems

The volume and variety of enterprise data grow faster than most organizations’ ability to manage it effectively. New data sources are added continuously. Mobile applications, payment platforms, supply chain sensors, and marketing automation tools each produce streams that need to be captured, normalized, and routed to the right downstream systems.

The problem extends beyond volume. Data from different sources arrives in different formats, with different schemas, at different frequencies.

Without a consistent ingestion and transformation layer, teams spend a disproportionate share of their time cleaning and reconciling data rather than using it.

Data engineering services create the infrastructure that absorbs new data sources without requiring manual intervention each time one is added.

2. Enabling Scalable Data Pipelines

A pipeline that works for a hundred thousand records may not hold up at a hundred million. The infrastructure decisions made during initial build determine whether scaling is an operational task or a fundamental rebuild.

Well-designed pipelines are built with explicit scalability assumptions. Partitioning data for parallel processing, designing idempotent ingestion layers that handle duplicate records without downstream errors, and separating compute from storage so each can scale independently are foundational choices made at the architecture stage.
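
Two of these choices, partitioning and idempotent ingestion, can be sketched together. This is a minimal in-memory illustration: `IdempotentStore`, `partition_for`, and the partition count are assumptions for the example, standing in for a keyed table in a real warehouse or lake.

```python
import hashlib
from typing import Dict, List

NUM_PARTITIONS = 4  # hypothetical partition count


def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Stable hash partitioning: the same key always lands in the same partition."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


class IdempotentStore:
    """In-memory stand-in for a table keyed by record ID."""

    def __init__(self, num_partitions: int = NUM_PARTITIONS) -> None:
        self.partitions: List[Dict[str, dict]] = [{} for _ in range(num_partitions)]

    def upsert(self, record: dict) -> None:
        """Write keyed by ID, so replaying a record overwrites instead of duplicating."""
        pid = partition_for(record["id"], len(self.partitions))
        self.partitions[pid][record["id"]] = record

    def count(self) -> int:
        """Total number of distinct records across all partitions."""
        return sum(len(partition) for partition in self.partitions)


store = IdempotentStore()
batch = [{"id": "order-1", "total": 10}, {"id": "order-2", "total": 25}]
for record in batch + batch:  # simulate a retried delivery of the same batch
    store.upsert(record)
```

Because writes are keyed on the record ID, delivering the same batch twice leaves two records in the store, not four. The stable hash also means each partition can be processed by a separate worker without coordination.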

The business consequence of poorly designed pipelines extends beyond the data team. Delayed analytics outputs, degraded reporting, and blocked AI initiatives all trace back to an infrastructure that cannot sustain the load placed on it.

Cygnet.one’s data engineering and management practice designs scalable pipeline architectures with governance frameworks that prioritize data accuracy, compliance, and role-based accountability, combining advisory, architecture, and operational services adapted to the organization’s specific data ecosystem.

3. Powering Advanced Analytics and AI Initiatives

Machine learning models and advanced analytics are only as good as the data they operate on. Inconsistent inputs, schema drift, and missing values at the data layer undermine model accuracy, inflate retraining cycles, and erode business confidence in analytical outputs.

According to a 2024 Gartner survey on data and analytics leadership, 61% of organizations are evolving or rethinking their data and analytics operating model because of AI.

The infrastructure change driving that evolution is data engineering. Clean, well-governed data delivered by reliable pipelines is the prerequisite for AI systems that produce useful outputs at scale.

Cygnet.one’s data analytics and AI Service delivers the data integration, warehousing, and analytics infrastructure that connects raw enterprise data to forecasting, business intelligence, and AI capabilities, with over 20 years of enterprise delivery experience across retail, healthcare, finance, and manufacturing.

4. Improving Data Quality and Reliability

Decisions made on bad data produce bad outcomes regardless of the analytical sophistication applied. Data quality is an engineering discipline with tangible business consequences, not a governance aspiration.

Data engineering services implement quality checks at multiple points in the pipeline. Validation at ingestion catches malformed or out-of-range values before they propagate downstream.

Schema enforcement prevents structural drift from breaking downstream consumers. Anomaly detection surfaces unexpected changes in volume, distribution, or freshness that signal upstream system problems.
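
The three kinds of checks can be sketched in plain Python. This is a simplified illustration, assuming a hypothetical `EXPECTED_SCHEMA`, an arbitrary valid range for `amount`, and a basic z-score rule for volume; production pipelines typically rely on a dedicated data quality framework instead.

```python
from statistics import mean, stdev
from typing import List, Optional

# Hypothetical expected schema: column name -> required Python type.
EXPECTED_SCHEMA = {"order_id": str, "amount": float, "currency": str}


def schema_errors(record: dict) -> List[str]:
    """Schema enforcement: report missing, mistyped, or unexpected columns."""
    errors = []
    for column, expected_type in EXPECTED_SCHEMA.items():
        if column not in record:
            errors.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            errors.append(f"wrong type for {column}")
    for column in record:
        if column not in EXPECTED_SCHEMA:
            errors.append(f"unexpected column: {column}")
    return errors


def range_error(record: dict) -> Optional[str]:
    """Validation at ingestion: reject out-of-range amounts (limits are assumed)."""
    amount = record.get("amount")
    if isinstance(amount, float) and not (0.0 <= amount <= 1_000_000.0):
        return "amount out of range"
    return None


def volume_is_anomalous(history: List[int], today: int, z_threshold: float = 3.0) -> bool:
    """Anomaly detection: flag a row count far from the historical mean."""
    if len(history) < 2:
        return False
    spread = stdev(history)
    if spread == 0:
        return today != history[0]
    return abs(today - mean(history)) / spread > z_threshold


good = {"order_id": "o-1", "amount": 19.99, "currency": "EUR"}
drifted = {"order_id": "o-2", "amount": "19.99", "region": "EU"}
row_counts = [1000, 1020, 980, 1010, 990]
```

Here `drifted` fails on three counts: `amount` arrives as a string, `currency` is missing, and `region` is a column no consumer expects. A sudden drop in daily row count relative to `row_counts` would be flagged by the volume check even if every individual record is valid.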

According to a 2025 Gartner study on AI-ready data, by 2026 organizations will abandon 60% of AI projects that lack AI-ready data infrastructure. Poor data quality at the pipeline level surfaces as a strategic problem once organizations move beyond basic reporting into machine learning and advanced analytics.

5. Breaking Down Data Silos

Organizational data is typically fragmented. Sales systems do not connect to finance data. Customer support logs exist independently of product usage records. Marketing attribution operates from a different source than the one used by growth analytics.

Silos produce conflicting reports, slow cross-functional decision-making, and prevent organizations from building unified views across customer, operational, and financial data. They are architectural problems with organizational consequences.

Data engineering services build the integration layer that connects these systems. This requires establishing clear data contracts between sources, creating shared canonical data models, and building pipelines that make data from one system reliably available where it is needed across the organization.
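
A data contract and a canonical model can be sketched as a mapping plus a shared record type. The example below is hypothetical throughout: the `crm` and `billing` source names, their field names, and the `CanonicalCustomer` shape are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalCustomer:
    """Shared canonical model that every downstream consumer reads."""
    customer_id: str
    email: str
    country: str


# Hypothetical contracts: canonical field -> field name in each source system.
CONTRACTS = {
    "crm": {"customer_id": "ContactId", "email": "Email", "country": "CountryCode"},
    "billing": {"customer_id": "cust_ref", "email": "billing_email", "country": "country"},
}


def to_canonical(source: str, record: dict) -> CanonicalCustomer:
    """Map a source record to the canonical model, failing loudly on contract breaks."""
    mapping = CONTRACTS[source]
    missing = [field for field in mapping.values() if field not in record]
    if missing:
        raise ValueError(f"{source} record violates contract, missing: {missing}")
    return CanonicalCustomer(
        customer_id=str(record[mapping["customer_id"]]),
        email=record[mapping["email"]].strip().lower(),
        country=record[mapping["country"]].upper(),
    )


crm_row = {"ContactId": 1001, "Email": " Ana@Example.com ", "CountryCode": "pt"}
billing_row = {"cust_ref": "1001", "billing_email": "ana@example.com", "country": "PT"}

from_crm = to_canonical("crm", crm_row)
from_billing = to_canonical("billing", billing_row)
```

Both source rows resolve to the same canonical customer despite different field names, ID types, and casing. A record that breaks its contract raises an error at the integration layer instead of silently producing a conflicting report downstream.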

A unified data layer does not require centralizing everything in one place. It requires making data findable, consistent, and accessible.

6. Enhancing Operational Efficiency

Manual data workflows introduce multiple failure points. Human error increases with repeated transformation steps.

Delays compound when workloads exceed manual capacity. Knowledge about how data flows becomes concentrated in specific individuals, creating operational fragility when those individuals are unavailable.

Automation addresses all three. Scheduled and event-triggered pipelines execute transformations consistently without human involvement.

Monitoring systems catch failures before they propagate. Version-controlled pipeline code means institutional knowledge about data flows is captured in the system rather than held by specific team members.

The efficiency benefit extends beyond the data team. When analysts spend less time managing data preparation and more time on analysis, and when engineers are not repeatedly debugging the same manual processes, the productivity return from the same resource base increases materially.

Conclusion

The maturity of an organization’s data engineering capability increasingly determines the ceiling on what its analytics, AI, and operational systems can deliver.

Organizations with well-designed data infrastructure find that building analytics products, training ML models, and generating operational intelligence all become faster and more reliable over time.

Those without it spend a disproportionate share of capacity managing the symptoms of a weak foundation.

The provider selection decision matters more than most organizations assume. The architecture built in the next engagement shapes what the data function can deliver for years.

The criteria covered in this guide, from scalability and data quality to security posture and SLA terms, are worth applying carefully before committing to a direction.

Data engineering is infrastructure. Getting it right the first time is an investment. Rebuilding it later is a cost.

If your organization is working through a data infrastructure build or modernization, Cygnet.one’s data engineering team can help you scope the right architecture for your current systems and future requirements.

Book a consultation to explore how we design scalable, cloud-native data pipelines and enterprise data platforms built around your specific analytics and AI goals.

Data engineering services are used to design and maintain the infrastructure that collects, transforms, and delivers data across an organization. They support analytics and business intelligence by building reliable data pipelines, and they enable AI and machine learning by ensuring clean and structured data inputs.

Data engineering focuses on building and maintaining the systems that store, move, and transform data. Data science focuses on analyzing data to generate insights, predictions, and models.

Common data engineering tools include Apache Spark and Apache Flink for large-scale data transformation, Apache Airflow, Dagster, and Prefect for pipeline orchestration, and cloud platforms such as Snowflake, Databricks, BigQuery, and AWS Redshift for storage and querying.

Cloud-based data engineering services are accessible to organizations of most sizes. Cloud platforms like AWS, Azure, and Google Cloud allow smaller businesses to access enterprise-grade data infrastructure without upfront hardware investment or large internal engineering teams. Services can be scoped to a specific use case and scaled as data volumes grow, making them practical for companies at an early stage of data maturity.

Implementation timelines depend on the complexity of the data environment, the number of sources to integrate, and the maturity of existing infrastructure. A focused pipeline build for a single use case can be completed in four to eight weeks. A full enterprise data platform migration with multiple source systems, data warehouse configuration, and governance frameworks typically takes several months to implement in phases.