Choosing a managed IT services provider used to be a procurement decision driven mostly by price and headcount substitution.

It is no longer that simple. The provider you select now sits inside the operational core of the business: it owns the response when systems fail, holds privileged access to your data, and decides how quickly the IT environment scales when growth or compliance pressure changes the rules.

The selection mistake most enterprises make is treating MSP evaluation as a feature comparison. Two vendors with similar service catalogs can deliver dramatically different outcomes once the contract is signed, and the differences only surface during incidents, audits, or scaling events when switching cost is highest.

According to the 2025 Gartner Forecast on Worldwide IT Spending, worldwide IT services spending will exceed $1.87 trillion in 2026, surpassing software and communications categories for the first time, with managed services and IaaS as the leading growth contributors.

The category is large enough that selection discipline now matters as much as the underlying technology choice.

In this guide, we walk through a structured framework for evaluating a managed IT services provider, the comparison methodology that separates surface-level pitches from deliverable capability, and the recurring mistakes we see in stalled MSP relationships.

How To Evaluate A Managed IT Services Provider Step By Step

A structured evaluation framework helps avoid the two most common failure modes in MSP selection.

The first is signing with the wrong-fit provider because the discovery happened in sales conversations rather than against documented requirements. The second is signing with a technically capable provider that does not match the organization’s operational requirements, governance posture, or growth plan.

Step 1: Define Your IT Scope And Business Requirements

The single most expensive evaluation mistake is starting vendor conversations before the internal scope is documented.

Without a scope document, every provider sounds capable, every demo looks impressive, and the actual fit only becomes visible after onboarding.

Defining scope upfront forces internal stakeholders to align on what the MSP is being hired to do and gives the evaluation a stable yardstick that does not shift with each vendor pitch.

A complete scope document covers the dimensions below.

- Service domains in scope: Infrastructure management, cloud operations, end-user support, cybersecurity, application managed services, or a defined subset.

- Coverage windows: 24×7, business hours plus on-call, or follow-the-sun, with named time zones.

- Technology stack: Current ERP, CRM, cloud platforms, network, endpoint, and any specialized systems the provider must support.

- Compliance scope: Regulatory frameworks (ISO 27001, SOC 2, HIPAA, GDPR, sector-specific) under which the provider must operate.

- Internal retained capabilities: What stays in-house versus what transfers, including governance, security oversight, and architecture decisions.

- Business outcomes: Uptime, time-to-resolution, cost predictability, audit readiness, or specific transformation goals tied to the engagement.
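
One way to keep the scope document honest is to hold it as structured data and flag any dimension that is still empty before vendor conversations begin. The sketch below is illustrative only: the `ScopeDocument` class, its field names, and the sample values are assumptions mapped from the six dimensions above, not a standard template.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ScopeDocument:
    """Hypothetical container for the six scope dimensions listed above."""
    service_domains: list[str] = field(default_factory=list)
    coverage_windows: list[str] = field(default_factory=list)
    technology_stack: list[str] = field(default_factory=list)
    compliance_scope: list[str] = field(default_factory=list)
    retained_capabilities: list[str] = field(default_factory=list)
    business_outcomes: list[str] = field(default_factory=list)

    def missing_dimensions(self) -> list[str]:
        """Return the names of dimensions that have no entries yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Sample values are invented for illustration.
draft = ScopeDocument(
    service_domains=["infrastructure management", "end-user support"],
    coverage_windows=["24x7, follow-the-sun"],
    technology_stack=["SAP", "Azure", "Microsoft 365"],
    compliance_scope=["ISO 27001", "SOC 2"],
)

# Lists the dimensions stakeholders still need to agree on.
print(draft.missing_dimensions())
```

In this draft, the retained capabilities and business outcomes are still undefined, which is the signal to pause outreach until stakeholders have filled them in.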

Step 2: Evaluate Service Capabilities And Specialization

Service catalogs look similar across MSPs at the marketing level. Capability differences only show up when the conversation goes one layer deeper: how each service is delivered, which platforms the provider holds certified expertise on, and where the team’s actual case experience concentrates.

A provider that has supported five SAP environments in regulated industries will deliver a different engagement than one that has primarily run Microsoft 365 helpdesks for SMBs, even if both list “infrastructure management” in their service catalog.

The dimensions worth probing in capability evaluation include the following.

- Depth of certified expertise on your specific platforms (AWS, Azure, GCP, SAP, Oracle, Salesforce, ServiceNow).

- Specialization in your industry vertical and the regulatory or operational nuances that come with it.

- Track record on engagement profiles similar to yours by size, complexity, and geographic footprint.

- Coverage of adjacent capabilities the engagement may need over the contract term, such as cloud modernization, cybersecurity, or application managed services.

Step 3: Assess Service Level Agreements (SLAs) And Response Times

SLAs are where vendor commitment becomes legally measurable. The discipline at this step is to read the SLA document, not the SLA summary slide.

Headline numbers like “99.99% uptime” and “15-minute response” can hide significant carve-outs in the fine print, including how downtime is calculated, which incident severities are covered, and what the financial penalty actually is when the SLA is breached.

The SLA review checklist we apply covers the following.

- Uptime guarantee: The percentage and the calculation method, including planned-maintenance exclusions.

- Response time by severity: Separate commitments for P1, P2, P3, P4, with documented severity criteria.

- Resolution time: Committed time to fix versus time to acknowledge, which are very different things.

- Service credits or penalties: What the provider pays when an SLA is missed, and the cap on cumulative liability.

- Reporting cadence: Frequency, format, and depth of SLA performance reports delivered to your team.

- Escalation paths: Named on-call leads, escalation tiers, and the contractual right to invoke executive escalation when an incident is not progressing.
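
To see why the calculation method matters as much as the headline percentage, it helps to convert uptime figures into minutes. The sketch below assumes a 30-day month and assumes that excluded minutes (for example, planned maintenance) are removed from both the counted outage and the measurement window. Real SLA documents define this differently, and the function names and sample figures are hypothetical.

```python
MINUTES_PER_MONTH = 30 * 24 * 60  # 43,200 minutes in a 30-day month


def allowed_downtime_minutes(uptime_pct: float) -> float:
    """Monthly downtime budget implied by an uptime percentage."""
    return MINUTES_PER_MONTH * (1 - uptime_pct / 100)


def reported_uptime_pct(outage_minutes: float, excluded_minutes: float = 0.0) -> float:
    """Uptime as it would be reported once carve-outs are applied.

    Assumption: excluded minutes are subtracted from both the counted
    outage and the measurement window.
    """
    counted_outage = outage_minutes - excluded_minutes
    measured_window = MINUTES_PER_MONTH - excluded_minutes
    return 100 * (1 - counted_outage / measured_window)


for target in (99.9, 99.99):
    budget = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows {budget:.2f} minutes of downtime per month")

# Hypothetical month: 240 minutes of outage, 236 of them inside maintenance windows.
print(f"No exclusions:   {reported_uptime_pct(240):.3f}%")
print(f"With exclusions: {reported_uptime_pct(240, excluded_minutes=236):.3f}%")
```

In this invented month, the same four hours of outage reads as roughly 99.44% uptime without exclusions and as above 99.99% once the maintenance carve-out is applied.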

Step 4: Review Security, Compliance, And Risk Management Approach

The provider you choose holds privileged access to your environment, which means their security posture becomes your security posture by extension.

According to the 2024 IBM Cost of a Data Breach Report, the global average cost of a data breach reached $4.88 million in 2024, a 10% increase over 2023 and the largest spike since the pandemic.

The figure compounds when the breach traces back to a third-party provider, because the regulatory and reputational exposure includes both the breach itself and the due-diligence question of why the vendor was selected.

The security and compliance review must cover the following areas.

- Active certifications and attestations, and the audit reports behind them (ISO 27001, SOC 2 Type II, HIPAA, PCI DSS, sector-specific frameworks).

- Identity and access management approach, including least-privilege enforcement, MFA on all privileged accounts, and access review cadence.

- Incident response process, including detection, containment, customer notification timelines, and post-incident reporting.

- Data handling and residency policies, including encryption standards, data location, and rules around cross-border data movement.

- Subprocessor management, including which third parties handle your data and what their security posture is.

- Insurance coverage for cybersecurity incidents and the claims experience the provider has had.

Cygnet.One’s Cybersecurity practice covers this layer end to end with advisory and strategy, identity and access management, application and infrastructure security, data and network security, and advanced threat protection across ISO 27001, SOC 2, and sector-specific frameworks, so the security posture is a built-in element of the managed services engagement rather than a separate workstream the buyer has to assemble.

Step 5: Analyze Pricing Models And Cost Transparency

Pricing models for managed IT services vary significantly, and each one creates different incentive alignment with the customer.

The model that fits depends on workload predictability, growth trajectory, and how much variability the buyer can absorb in the monthly run-rate.

The four common pricing models break down as follows.

- Fixed-fee per period: Predictable monthly cost regardless of ticket volume, which works well for stable environments with known support load.

- Per-device or per-user: Scales linearly with the asset count under management, which fits environments where headcount and device count drive demand.

- Usage-based or consumption-based: Billed against actual incidents, hours, or compute consumption, which fits variable workloads but introduces month-to-month unpredictability.

- Hybrid or tiered: Fixed core fee plus variable charges for incidents above a threshold, which balances predictability with consumption fairness.
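
A quick way to compare the four models is to price the same quiet month and busy month under each one. The sketch below does that with invented rates; every fee, rate, and volume is a hypothetical placeholder, not a market benchmark.

```python
def fixed_fee(monthly_fee: float) -> float:
    """Same cost regardless of ticket volume."""
    return monthly_fee


def per_unit(units: int, rate_per_unit: float) -> float:
    """Scales with users or devices under management."""
    return units * rate_per_unit


def usage_based(incidents: int, rate_per_incident: float) -> float:
    """Billed against actual incident volume."""
    return incidents * rate_per_incident


def hybrid(core_fee: float, incidents: int, included: int, overage_rate: float) -> float:
    """Fixed core fee plus a charge for incidents above the included threshold."""
    return core_fee + max(0, incidents - included) * overage_rate


def monthly_costs(users: int, incidents: int) -> dict[str, float]:
    # All rates below are illustrative assumptions.
    return {
        "fixed": fixed_fee(30_000),
        "per_user": per_unit(users, 55),
        "usage": usage_based(incidents, 300),
        "hybrid": hybrid(20_000, incidents, included=80, overage_rate=250),
    }


quiet = monthly_costs(users=500, incidents=40)
busy = monthly_costs(users=500, incidents=120)

for model in quiet:
    swing = busy[model] - quiet[model]
    print(f"{model:9} quiet={quiet[model]:>8,.0f} busy={busy[model]:>8,.0f} swing={swing:>8,.0f}")
```

With these placeholder numbers, the usage-based model is the cheapest in the quiet month and the most expensive in the busy one, which is the variability a buyer has to decide whether they can absorb.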

Beyond the model itself, the cost transparency questions worth asking include what is excluded from the base fee, what triggers an out-of-scope charge, how price changes are communicated and capped over the contract term, and what the offboarding cost looks like if you choose to switch providers later.

Step 6: Evaluate Scalability And Flexibility

The IT environment you have today will not be the one the MSP supports three years from now. Scalability evaluation has two dimensions, change handling and raw volume capacity, and both matter equally.

A provider that can double headcount on demand but cannot adapt their delivery model when you migrate from on-prem to cloud is not actually scalable for your needs.

The scalability dimensions worth assessing include the following:

- Volume scalability: Capacity to support significant growth in users, devices, transactions, or geographic footprint without service degradation.

- Technology adaptability: Track record of supporting platform migrations and modernization beyond pure steady-state operations.

- Operating model flexibility: Willingness to adjust coverage windows, escalation paths, or service composition as your business changes.

- Geographic coverage: Presence in regions where your business is expanding, including local language support and time zone alignment.

- Contract flexibility: Ability to add, modify, or remove service lines mid-contract without punitive renegotiation.

Step 7: Review Tools, Automation, And Monitoring Capabilities

A provider running modern monitoring, automation, and AI-assisted operations sees issues before they become incidents and resolves them with less labor cost. A provider running on email and ticket queues finds out about issues when users complain.

According to the 2024 IBM Cost of a Data Breach Report, organizations using AI and automation extensively in security prevention reduced average breach costs by $2.2 million compared to those that did not.

The pattern extends beyond security into the broader operations layer. The capabilities worth verifying directly include the following:

- 24×7 monitoring across infrastructure, applications, network, and endpoint, with documented mean-time-to-detect targets.

- Automated remediation for known incident classes, with the percentage of tickets resolved without human intervention as a measurable KPI.

- AI-assisted operations for log analysis, anomaly detection, and capacity forecasting.

- Self-service customer portal with real-time visibility into ticket status, SLA performance, and asset inventory.

- Integration with your ITSM, identity, and security tooling, so the MSP operates inside your stack rather than running a parallel one.

Cygnet.One’s Infrastructure Management practice combines 24×7 monitoring, AI-driven anomaly detection, automated remediation across multi-cloud environments, and tiered helpdesk support with capacity forecasting, so proactive resolution becomes the default operating mode rather than an aspiration.

Step 8: Validate Vendor Experience And Client Success

A provider that can produce three referenceable clients in your industry, who will speak candidly about the engagement, is dramatically more credible than one with a glossy case study deck and no live references.

The validation steps that produce a signal include the following:

- Request three referenceable clients in your industry or with comparable engagement profiles, and conduct the calls directly.

- Ask references about specific incidents, including how the provider performed under pressure rather than in a steady state.

- Verify that the team proposed for your engagement is the same team that delivered the referenced work.

- Probe the engagement journey end to end, including onboarding speed, SLA performance over the first six months, and how renegotiations were handled.

- Look for documented post-incident reviews and continuous improvement evidence beyond surface-level satisfaction scores.

How To Compare MSP Vendors And Choose The Right Partner

Individual vendor evaluations only become useful when they roll up into a structured comparison.

Without that structure, the final decision tends to favor the provider whose presentation was most recent or whose sponsor inside the buying organization is loudest. The four practices below are how we keep MSP selection grounded in evidence and aligned across the stakeholders the engagement will impact.

1. Create A Vendor Comparison Matrix

A vendor comparison matrix turns the eight evaluation steps above into a single scoring instrument that every shortlisted provider gets graded against.

The matrix should weight categories by the strategic importance for your organization, not weight everything equally. For an enterprise where security is the highest-stakes dimension, the matrix should reflect that with a higher weight on security than on the pricing model, so the final score lands where the organization’s actual priorities sit.

The matrix at minimum should include columns for each provider, weighted scores per evaluation category, qualitative notes on differentiators and red flags, and a final composite score.

The discipline of forcing each evaluator to score against the same matrix surfaces disagreements early, when they can be debated, rather than late, when one stakeholder’s preferred provider is already informally selected.
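
As a rough illustration of how weighting changes the outcome, the sketch below scores two providers on the eight evaluation categories using a 1 to 5 scale. The weights, category keys, provider names, and scores are all invented for the example. The only point carried over from the text is that security is weighted above the pricing model.

```python
# Weights in percentage points; they must sum to 100. Values are illustrative.
WEIGHTS = {
    "scope_fit": 10,
    "capabilities": 15,
    "sla": 15,
    "security": 25,
    "pricing": 10,
    "scalability": 10,
    "tooling": 10,
    "references": 5,
}
assert sum(WEIGHTS.values()) == 100

# Hypothetical scores on a 1 (weak) to 5 (strong) scale.
SCORES = {
    "Provider A": {"scope_fit": 4, "capabilities": 4, "sla": 3, "security": 2,
                   "pricing": 5, "scalability": 4, "tooling": 3, "references": 3},
    "Provider B": {"scope_fit": 4, "capabilities": 3, "sla": 4, "security": 5,
                   "pricing": 2, "scalability": 3, "tooling": 3, "references": 3},
}


def composite(scores: dict[str, int]) -> float:
    """Weighted composite score on the same 1 to 5 scale."""
    return sum(WEIGHTS[cat] * scores[cat] for cat in WEIGHTS) / 100


def unweighted(scores: dict[str, int]) -> float:
    """Plain average, shown only for comparison."""
    return sum(scores.values()) / len(scores)


for name, scores in SCORES.items():
    print(f"{name}: weighted={composite(scores):.2f} unweighted={unweighted(scores):.3f}")
```

With these made-up scores, Provider A leads on a plain average while Provider B leads once security carries a quarter of the weight, which is the kind of reversal the weighting is meant to expose.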

2. Run Pilot Projects Or Proof Of Concept

Pilots are how diligence converts from documented capability to demonstrated capability. A two-to-six-week pilot on a contained scope, with explicit entry and exit criteria, validates whether the provider’s actual delivery matches their pitch.

Pilots also surface the operational fit questions that vendor evaluation cannot answer on paper, such as how the provider’s team communicates with yours, how onboarding feels in practice, and how quickly issues are diagnosed under real conditions.

For example, a pilot could cover one business unit’s endpoint management, a single application’s monitoring and incident response, or a focused cybersecurity assessment with deliverables.

The exit criteria should be defined before the pilot begins, including which KPIs the provider needs to demonstrate, by how much, and what each outcome means for the broader engagement decision.
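
Exit criteria are easier to enforce when they are written down as explicit thresholds before the pilot starts. The sketch below is one hypothetical way to do that. The KPI names, targets, and the mapping from failed criteria to a decision are assumptions for illustration, and each organization would set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCriterion:
    kpi: str
    target: float
    higher_is_better: bool

    def met(self, observed: float) -> bool:
        """True when the observed value satisfies the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Hypothetical criteria agreed before the pilot begins.
CRITERIA = [
    ExitCriterion("p1_response_minutes", 15, higher_is_better=False),
    ExitCriterion("first_contact_resolution_pct", 70, higher_is_better=True),
    ExitCriterion("mean_time_to_detect_minutes", 10, higher_is_better=False),
]


def pilot_decision(observed: dict[str, float]) -> str:
    """Map pilot results to a decision agreed in advance (assumed rule set)."""
    failed = [c.kpi for c in CRITERIA if not c.met(observed[c.kpi])]
    if not failed:
        return "proceed"
    if len(failed) == 1:
        return f"extend pilot: {failed[0]}"
    return "stop"


results = {
    "p1_response_minutes": 12,
    "first_contact_resolution_pct": 64,
    "mean_time_to_detect_minutes": 8,
}
print(pilot_decision(results))
```

Because the rule set is fixed up front, a near miss on one KPI leads to an agreed next step rather than a negotiation after the fact.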

3. Align Stakeholders Across IT, Security, And Business Teams

MSP selection touches more functions than the IT team that runs the procurement. Security needs visibility into the provider’s posture and access controls.

Finance needs to validate the pricing model against budget volatility tolerance. Business owners need to understand what changes for end users when the new provider takes over. Skipping any of these stakeholder loops produces decisions that look right inside IT but get overruled or quietly resisted once the engagement starts.

The alignment practice that works is a steering committee with named representatives from IT, Security, Finance, Compliance, and the most affected business units, meeting at each gate of the evaluation.

Each representative signs off explicitly at shortlisting, pilot completion, and final selection. Disagreements caught at the steering committee are cheap. Disagreements caught after contract signature are expensive.

4. Score Vendors Based On Business Impact

Feature-by-feature scoring tends to favor providers with broad catalogs over providers with deep delivery on the capabilities that matter most.

The correction is to score each provider on the business outcomes they will measurably move, rather than on the number of services they list. A provider with a tighter catalog but a stronger track record on uptime and incident response in your industry will usually outperform a broader provider that is spread thin across more areas.

The outcome dimensions worth weighting most heavily include operational reliability, security posture, cost predictability, scalability fit with your three-year plan, and the strength of the cultural and governance alignment.

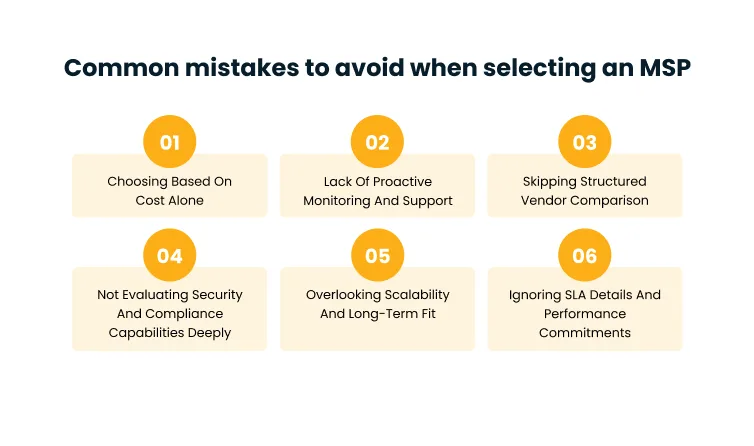

Common Mistakes To Avoid When Selecting An MSP

Even with a structured framework, certain mistakes recur often enough across MSP engagements that we treat them as failure patterns rather than one-off errors.

The six below are the ones we see most often in stalled or repeatedly renegotiated relationships, often becoming the reason organizations switch providers within the first two years.

1. Choosing Based On Cost Alone

Price-led MSP selection tends to produce the worst total cost of ownership over the contract term, because the underbid provider typically recovers margin through scope reductions, slower response, or change orders that surface mid-contract.

The selection conversation needs to anchor on outcomes per dollar rather than dollars in isolation. A slightly higher headline rate from a provider with stronger SLAs and proactive monitoring often beats a lower rate from a provider whose true cost lands above the contract value once incidents and scope creep are tallied.

2. Ignoring SLA Details And Performance Commitments

Buyers routinely accept SLA documents without reading the calculation methodology behind them. The pattern shows up later as a dispute: the provider reports SLA compliance for a quarter in which the customer experienced multiple outages.

The fix is to negotiate the SLA document line by line during selection, including how downtime is measured, which severity levels are covered, what the credits look like at each breach threshold, and what the cumulative liability cap is.

SLAs that cannot be enforced practically are not SLAs, regardless of what the headline numbers say.
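
To make the credit and cap discussion concrete, the sketch below applies a tiered credit schedule with a monthly cap. The tiers, the cap, and the fee are hypothetical numbers chosen for illustration; an actual SLA would define its own thresholds and may cap liability per year rather than per month.

```python
# (minimum measured uptime %, credit as % of monthly fee), checked top down.
# All values are illustrative assumptions.
CREDIT_TIERS = [
    (99.99, 0),
    (99.9, 5),
    (99.0, 10),
    (0.0, 25),
]
MONTHLY_CAP_PCT = 15  # assumed cap on credits as a share of the monthly fee


def monthly_credit(uptime_pct: float, monthly_fee: float) -> float:
    """Credit owed for one month, after applying the cap."""
    for floor, credit_pct in CREDIT_TIERS:
        if uptime_pct >= floor:
            return monthly_fee * min(credit_pct, MONTHLY_CAP_PCT) / 100
    raise ValueError("uptime_pct must be between 0 and 100")


fee = 50_000
for uptime in (99.995, 99.95, 99.5, 98.0):
    print(f"uptime {uptime}% -> credit {monthly_credit(uptime, fee):,.0f}")
```

In this example the worst tier nominally pays 25% of the fee, but the cap limits the payout to 15%, which is the kind of detail that only appears when the document is read line by line.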

3. Not Evaluating Security And Compliance Capabilities Deeply

Surface-level security evaluation is one of the most expensive shortcuts in MSP selection. Asking “are you SOC 2 certified?” gets a yes from most providers.

Asking “show me the SOC 2 Type II report and walk me through the exceptions” produces a much more honest conversation. The same depth applies to identity and access controls, incident response procedures, and subprocessor disclosure.

The cost of a third-party-driven breach is high enough that this evaluation deserves the same rigor as the underlying contract negotiation.

4. Overlooking Scalability And Long-Term Fit

MSPs that fit perfectly for the current state often become the bottleneck eighteen months later, when the business adds a region, migrates to a new platform, or absorbs an acquisition.

Selecting against a three-year fit rather than a current-state fit avoids the disruption of switching providers exactly when stability matters most.

Scalability evaluation should include both volume scaling and capability adaptability, with weight on whether the provider has demonstrably handled comparable transitions for prior clients.

5. Lack Of Proactive Monitoring And Support

Reactive MSPs find out about incidents when users open tickets. Proactive MSPs see anomalies in monitoring data before users notice and resolve them in the background.

The difference shows up in user experience, executive visibility, and ultimately in the length of the contract relationship.

The discriminator during selection is to ask for the provider’s mean-time-to-detect data, the percentage of incidents resolved before user impact, and the automation coverage on routine tasks.

Providers without those numbers are running reactive operations regardless of what the marketing says.
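
The three figures above are simple to compute when a provider can export incident records. The sketch below shows one possible calculation. The `Incident` fields and the sample records are assumptions for illustration, not a real ITSM export format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    started: datetime       # when the fault began
    detected: datetime      # when monitoring or a user raised it
    user_impacted: bool     # did users notice before resolution?
    auto_remediated: bool   # resolved without human intervention?


def mean_time_to_detect(incidents: list[Incident]) -> timedelta:
    """Average gap between fault start and detection."""
    total = sum((i.detected - i.started for i in incidents), timedelta())
    return total / len(incidents)


def pct_resolved_before_user_impact(incidents: list[Incident]) -> float:
    return 100 * sum(not i.user_impacted for i in incidents) / len(incidents)


def automation_coverage_pct(incidents: list[Incident]) -> float:
    return 100 * sum(i.auto_remediated for i in incidents) / len(incidents)


# Invented sample: detection delays of 2, 4, 6, and 20 minutes.
t0 = datetime(2025, 1, 1, 9, 0)
sample = [
    Incident(t0, t0 + timedelta(minutes=2), user_impacted=False, auto_remediated=True),
    Incident(t0, t0 + timedelta(minutes=4), user_impacted=False, auto_remediated=True),
    Incident(t0, t0 + timedelta(minutes=6), user_impacted=False, auto_remediated=False),
    Incident(t0, t0 + timedelta(minutes=20), user_impacted=True, auto_remediated=False),
]

print("MTTD:", mean_time_to_detect(sample))
print("Resolved before user impact (%):", pct_resolved_before_user_impact(sample))
print("Automation coverage (%):", automation_coverage_pct(sample))
```

A provider that can hand over data in roughly this shape, for a real quarter, is demonstrating the measurement discipline that proactive operations depend on.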

6. Skipping Structured Vendor Comparison

The fastest way to make a poor MSP selection is to skip the comparison matrix and rely on relative impressions of each vendor.

Without a structured framework, decisions get anchored to whichever vendor presented most recently, whichever sponsor inside the buying organization is most influential, or whichever proposal had the cleanest design.

The comparison matrix is what protects the decision from those biases. Skipping it consistently produces selections that look defensible at the time and become hard to justify within twelve months.

Conclusion

MSP selection is a multi-year operational decision dressed up as a procurement event. The provider you choose owns the response when systems fail, holds the keys to your environment, and shapes how quickly your IT operations adapt to whatever growth, compliance, or transformation pressure shows up next.

Treating the selection with the rigor of an operational decision, rather than the speed of a procurement one, is what separates engagements that compound value from engagements that get renegotiated within a year.

According to the 2024 Deloitte Global Outsourcing Survey, 80% of executives plan to maintain or increase investment in third-party outsourcing, with the dominant rationale shifting from cost reduction to capability access and strategic capacity.

The implication for MSP buyers is that selection criteria need to evolve alongside that shift, weighting strategic fit, security, and adaptability over the price-led comparisons that defined the last generation of outsourcing decisions.

If you are scoping a managed IT services partnership, the conversation we usually start with covers your retained-capability scope, your SLA and security requirements, and the operational outcomes the engagement needs to move.

Book a demo with our team to walk through how we run enterprise MSP engagements end to end, from infrastructure and cloud management to cybersecurity and managed application services.

FAQs

What is a managed IT services provider?

A managed IT services provider, or MSP, is a third-party partner that takes ongoing operational responsibility for some or all of an organization’s IT environment under defined service agreements.

How do you choose the right managed IT services provider?

Use a structured eight-step evaluation framework that covers scope definition, service capabilities, SLAs, security and compliance, pricing models, scalability, tools and automation, and vendor experience. Combine the evaluation with a vendor comparison matrix, a contained pilot, and stakeholder alignment across IT, security, and business teams.

What services do managed IT services providers typically offer?

Most managed IT services providers cover infrastructure management, cloud services across AWS, Azure, and GCP, IT service management, managed cybersecurity, disaster recovery and business continuity, and IT process automation.

How much do managed IT services cost?

Costs vary by service scope, environment scale, and pricing model. Common models include fixed-fee per period, per-device or per-user pricing, usage-based pricing, and hybrid or tiered models. Engagements typically run from a few thousand dollars per month for focused mid-market scopes to mid-six figures monthly for full-stack enterprise arrangements.

What factors matter most when selecting an MSP?

The key factors are service scope alignment, SLA structure and enforceability, security and compliance posture, pricing transparency, scalability across volume and technology change, tooling and automation depth, and validated client success. Beyond the individual factors, the biggest predictor of engagement success is structured comparison across providers and stakeholder alignment across IT, security, finance, and the business teams the MSP will serve.