Most organizations have more data than they know what to do with. Sales teams pull customer information from one system. Finance pulls it from another. Marketing has its own dashboard.

By the time anyone tries to answer a simple question across the business, the answer is buried under three reconciliation spreadsheets and a week of back-and-forth.

This is where data engineering does the work. It is the practical discipline of building reliable pipelines that move data from where it is generated to where it is needed, in a shape that is actually usable. Without it, analytics teams spend most of their time cleaning data instead of analyzing it, and AI projects stall because the models cannot be trained on broken inputs.

This post walks through the most common data engineering use cases, breaks them down by processing type, and shows how to identify and implement the ones that will actually move your business forward.

What Is Data Engineering, and Why Does It Matter for Modern Businesses

Data engineering is the discipline of designing, building, and maintaining the systems that move and prepare data for analysis. It sits between the source systems where data is created and the tools where it gets used.

Data Engineering Explained in Simple Terms

At its core, data engineering does three things. It takes raw data from many sources, transforms it into a consistent and usable format, and stores it somewhere that analysts and AI models can access reliably.

The work happens through three main components:

- Ingestion pipelines that pull data from databases, applications, APIs, sensors, and third-party platforms

- Transformation processes that clean the data, fix inconsistencies, apply business rules, and convert it into shapes that make sense for analysis

- Storage layers such as data warehouses for structured reporting and data lakes for raw or semi-structured data

The outcome is straightforward. Data becomes accessible, accurate, and ready to answer questions instead of staying locked in the systems that produced it.
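The three components above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a production design: the CSV sample, the `orders` table, and the function names `ingest`, `transform`, and `store` are all assumptions made for the example.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system (assumed for illustration).
RAW_CSV = """customer_id,email,amount
1, Alice@Example.com ,120.50
2,bob@example.com,80
2,bob@example.com,80
"""

def ingest(raw_text):
    """Ingestion: read rows from a source (here, CSV text)."""
    return list(csv.DictReader(io.StringIO(raw_text)))

def transform(rows):
    """Transformation: normalize types and casing, drop exact duplicates."""
    seen = set()
    cleaned = []
    for row in rows:
        record = (
            int(row["customer_id"]),
            row["email"].strip().lower(),
            float(row["amount"]),
        )
        if record not in seen:
            seen.add(record)
            cleaned.append(record)
    return cleaned

def store(records, conn):
    """Storage: load cleaned records into a queryable table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(customer_id INTEGER, email TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse
store(transform(ingest(RAW_CSV)), conn)
total = conn.execute("SELECT SUM(amount) FROM orders").fetchone()[0]
```

Here the duplicate order for customer 2 is dropped during transformation, so the stored total reflects two orders rather than three.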

Role of Data Engineering in Analytics and AI

Analytics and AI both depend on data engineering, though in slightly different ways.

For analytics and business intelligence, data engineering builds the pipelines that feed dashboards and reports. When a sales leader looks at quarterly performance or a CFO checks cash flow, the numbers they see have moved through engineered pipelines that pulled them from source systems, harmonized them, and loaded them into a reporting layer.

For AI and machine learning, data engineering provides the structured datasets that models need for training and inference. A fraud detection model is only as good as the transaction data it learns from. A recommendation engine is only as accurate as the customer behavior data feeding it.

According to a 2025 IBM Think report on data integration challenges, more than half (53%) of surveyed executives said difficulties integrating AI infrastructure with legacy systems derailed target outcomes. The pipelines, in other words, often decide whether AI projects succeed or stall.

The downstream impact shows up in two places: the accuracy of insights improves because the underlying data is consistent, and analysts spend less time cleaning data and more time interpreting it.

Why Businesses Rely on Data Engineering for Decision-Making

Decisions made on stale or inconsistent data tend to be the wrong decisions. A retailer that bases inventory orders on last week’s sales data instead of yesterday’s ends up with the wrong stock. A bank that relies on overnight batch processing to flag fraud will not catch fraud that happens at 10 a.m. until the following day.

Data engineering changes the equation by delivering insights faster and keeping data consistent across teams. When marketing, finance, and operations are all looking at the same numbers, debates shift from “whose data is right” to “what should we do about it.”

Two outcomes follow from this:

- Operational efficiency: Manual data wrangling drops, and teams act on insights instead of debating their accuracy.

- Better planning: Forecasts and strategy decisions get built on data that reflects what is actually happening in the business right now.

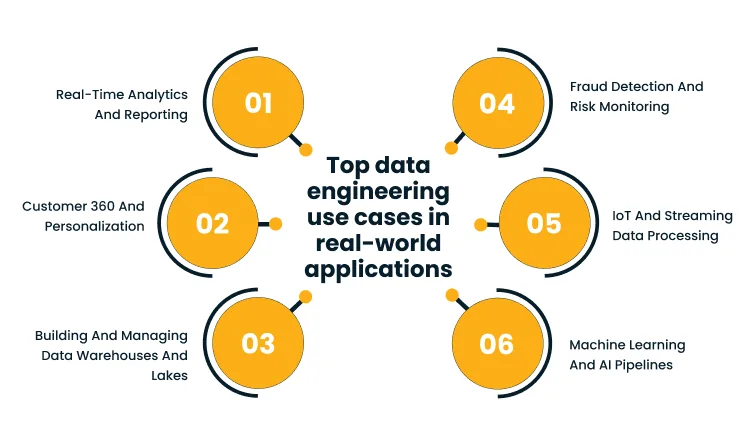

Top Data Engineering Use Cases in Real-World Applications

Data engineering shows up across nearly every industry and function. The use cases below are the ones that consistently deliver value, regardless of company size or sector.

1. Real-Time Analytics and Reporting

Real-time analytics processes data the moment it is generated, so dashboards, alerts, and reports reflect what is happening right now rather than what happened yesterday.

Common applications include:

- KPI dashboards that update continuously as transactions and events flow through the business

- User behavior tracking on websites and apps, where clicks and conversions get reflected in analytics within seconds

- Operational monitoring for logistics, manufacturing, and supply chain visibility

The value is speed. Decisions get made when they can still affect the outcome. A pricing team that sees a demand spike as it happens can adjust prices before competitors react. An operations team that spots a delivery delay can reroute before the customer notices.

2. Building and Managing Data Warehouses and Lakes

Data warehouses and data lakes are the storage backbone for enterprise analytics. Warehouses hold structured, query-ready data optimized for reporting. Lakes hold raw and semi-structured data that can be processed later for different uses.

In practice, the use case looks like this:

- An enterprise data warehouse consolidates data from finance, sales, HR, and operations into a single source of truth for reporting

- A data lake captures everything from server logs to social media data to IoT sensor readings, ready to be processed for whatever question comes up next

The benefit is unified access at scale. Storage is cheap and elastic, and teams across the business can pull from the same well instead of building duplicate copies of data in spreadsheets and shadow databases.

3. Customer 360 and Personalization

A customer 360 view brings together everything a business knows about a customer into one profile. That includes purchase history, support interactions, marketing engagement, account preferences, and product usage.

Applications include:

- Unified customer profiles that combine CRM, transactional, and behavioral data

- Personalized product recommendations based on individual purchase patterns

- Targeted marketing campaigns that respond to actual customer behavior rather than broad segments

The payoff shows up in engagement and retention.

According to the 2023 McKinsey report on the economic potential of generative AI, leaders are vying for their share of up to $17.7 trillion in value potential from data and analytics, with customer-facing use cases consistently among the highest-impact opportunities. Customers respond better to experiences that feel relevant, and businesses get sharper insights into what actually drives loyalty.
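To make the idea of a unified profile concrete, here is a small sketch that merges three extracts into one record per customer. It assumes every system already shares a clean `customer_id`, which is rarely true in practice, and the sample data and the `build_profiles` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical extracts from three systems, keyed by a shared customer_id.
crm = [{"customer_id": "c1", "name": "Dana", "segment": "SMB"}]
orders = [
    {"customer_id": "c1", "amount": 40.0},
    {"customer_id": "c1", "amount": 60.0},
]
web_events = [
    {"customer_id": "c1", "event": "viewed_pricing"},
    {"customer_id": "c1", "event": "opened_email"},
]

def build_profiles(crm, orders, web_events):
    """Combine CRM, transactional, and behavioral rows into one profile each."""
    profiles = defaultdict(
        lambda: {"total_spend": 0.0, "order_count": 0, "events": []}
    )
    for row in crm:
        profiles[row["customer_id"]].update(
            name=row["name"], segment=row["segment"]
        )
    for row in orders:
        profile = profiles[row["customer_id"]]
        profile["total_spend"] += row["amount"]
        profile["order_count"] += 1
    for row in web_events:
        profiles[row["customer_id"]]["events"].append(row["event"])
    return dict(profiles)

profiles = build_profiles(crm, orders, web_events)
```

The hard part in a real project is usually the step this sketch skips: matching records that refer to the same person under different identifiers.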

4. Fraud Detection and Risk Monitoring

Fraud detection works by spotting patterns that look unusual compared to normal activity. The challenge is that fraud is rare, fast, and constantly evolving, so the underlying data pipelines need to handle high volumes with very low latency.

Common applications include:

- Transaction monitoring in banking and payments, where every transaction gets scored against fraud models in real time

- Account takeover detection that flags unusual login patterns or device changes

- Risk analysis for credit, insurance, and compliance, where engineered data feeds into both rules-based systems and machine learning models

The value is direct: financial losses go down, and compliance with regulations like AML and KYC becomes easier to evidence.
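As a rough illustration of "unusual compared to normal activity", the sketch below scores a transaction by how far it sits from a customer's past spending. This simple z-score check is a stand-in chosen for the example; real fraud systems typically combine rules with trained models. The function names and the threshold of 3.0 are assumptions.

```python
from statistics import mean, stdev

def anomaly_score(history, amount):
    """Return how many standard deviations `amount` is from the past mean."""
    if len(history) < 2:
        return 0.0  # not enough history to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts identical: any different amount is maximally unusual.
        return 0.0 if amount == mu else float("inf")
    return abs(amount - mu) / sigma

def is_suspicious(history, amount, threshold=3.0):
    """Flag a transaction whose score exceeds an (assumed) threshold."""
    return anomaly_score(history, amount) > threshold

# Hypothetical past transaction amounts for one customer.
history = [20.0, 25.0, 22.0, 19.0, 24.0]
```

With this history, `is_suspicious(history, 500.0)` flags the transaction while `is_suspicious(history, 23.0)` does not. The pipeline's job is to make sure `history` is complete and current at the moment each transaction is scored.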

5. IoT and Streaming Data Processing

IoT devices generate continuous streams of data, often in massive volumes. Manufacturing sensors, connected vehicles, smart meters, and medical devices all produce telemetry that needs to be processed as it arrives.

Applications include:

- Predictive maintenance, where sensor data from machinery flags potential failures before they happen

- Energy and utilities monitoring, where smart meter data enables real-time grid management

- Connected vehicle and fleet analytics, where location and performance data drive routing and safety decisions

The outcome is operational efficiency at a level that batch processing cannot deliver. Issues get flagged and acted on as they emerge, not after the damage is done.
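A simplified version of the predictive maintenance idea: process readings one at a time and raise an alert when the rolling average drifts past a limit. The window size, the limit, and the temperature values are hypothetical, and a real system would read from a message stream rather than a list.

```python
from collections import deque

def monitor(readings, window=3, limit=80.0):
    """Yield (index, rolling_mean) whenever the rolling mean exceeds `limit`."""
    recent = deque(maxlen=window)  # keeps only the last `window` readings
    for i, value in enumerate(readings):
        recent.append(value)
        if len(recent) == window:
            rolling_mean = sum(recent) / window
            if rolling_mean > limit:
                yield i, rolling_mean

# Hypothetical temperature telemetry from one machine.
temps = [70.0, 72.0, 71.0, 79.0, 85.0, 91.0]
alerts = list(monitor(temps))
```

Averaging over a window rather than alerting on single readings is a design choice that trades a little latency for fewer false alarms from sensor noise.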

6. Machine Learning and AI Pipelines

ML and AI models depend on engineered data pipelines for both training and inference. Training pipelines prepare large historical datasets in the right shape. Inference pipelines deliver fresh data to deployed models so they can make predictions on current inputs.

In practice:

- Training datasets get assembled from multiple sources, cleaned, labeled where needed, and versioned

- Feature stores hold reusable, pre-computed inputs that multiple models can draw from

- Inference pipelines deliver real-time data to deployed models for scoring

The benefit is models that perform better and ship faster. When the pipeline is solid, data scientists can focus on improving models instead of fixing broken inputs.
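The feature store idea can be shown with a toy in-memory version. The `FeatureStore` class, the feature names, and the two consuming models are all hypothetical; the point is only that features are computed once and read by several models.

```python
class FeatureStore:
    """In-memory stand-in for a feature store (illustrative only)."""

    def __init__(self):
        self._features = {}  # (entity_id, feature_name) -> value

    def put(self, entity_id, name, value):
        """Write a pre-computed feature value for an entity."""
        self._features[(entity_id, name)] = value

    def get_vector(self, entity_id, names, default=0.0):
        """Read the requested features in order, filling gaps with `default`."""
        return [self._features.get((entity_id, n), default) for n in names]

feature_store = FeatureStore()
feature_store.put("c1", "orders_30d", 4)
feature_store.put("c1", "avg_basket", 52.5)
feature_store.put("c1", "days_since_login", 2)

# Two hypothetical models draw on overlapping features without recomputing them.
churn_inputs = feature_store.get_vector("c1", ["orders_30d", "days_since_login"])
recs_inputs = feature_store.get_vector("c1", ["orders_30d", "avg_basket"])
```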

Data Engineering Use Cases by Processing Type

Beyond use cases, data engineering can also be split by how data gets processed. Most enterprises end up using a mix of all three approaches below, depending on the latency the use case demands.

1. Batch Data Processing Use Cases

Batch processing handles data in scheduled chunks, usually overnight or hourly. It is the most cost-efficient way to process large volumes when latency is not critical.

Typical applications:

- Daily and weekly business reports

- ETL processes that move and reshape data overnight for next-day reporting

- Monthly billing, payroll, and financial close processes

The benefits are cost and reliability. Batch jobs run on predictable schedules, can process huge datasets efficiently, and use compute resources during off-peak hours.
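A batch job in its simplest form is a function that processes one scheduled slice of data. The sketch below aggregates a single day's transactions per store; the field names and sample rows are assumptions, and in practice a scheduler or orchestrator would call the function with the run date.

```python
from collections import defaultdict
from datetime import date

def run_daily_batch(transactions, run_date):
    """Aggregate one day's transactions into per-store totals."""
    totals = defaultdict(float)
    for tx in transactions:
        if tx["date"] == run_date:
            totals[tx["store"]] += tx["amount"]
    return dict(totals)

# Hypothetical transaction rows spanning two days.
transactions = [
    {"date": date(2024, 1, 1), "store": "north", "amount": 100.0},
    {"date": date(2024, 1, 1), "store": "north", "amount": 50.0},
    {"date": date(2024, 1, 1), "store": "south", "amount": 30.0},
    {"date": date(2024, 1, 2), "store": "north", "amount": 75.0},
]
report = run_daily_batch(transactions, date(2024, 1, 1))
```

Because the job is keyed by `run_date`, it can be re-run for a past day if a source system delivers late data.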

2. Real-Time Data Processing Use Cases

Real-time processing handles data as it is generated, with end-to-end latency measured in seconds or milliseconds.

Applications include:

- Live operational dashboards

- Fraud detection and security monitoring

- Real-time pricing, inventory, and personalization engines

The benefit is responsiveness. Decisions and actions happen while the event is still relevant.
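One building block behind live dashboards and monitoring is a time-based sliding window. This sketch counts events in the last 60 seconds as each one arrives. The `SlidingWindowCounter` class is hypothetical, and it assumes timestamps arrive in order, which real streams do not always guarantee.

```python
from collections import deque

class SlidingWindowCounter:
    """Count events that fall inside a trailing time window."""

    def __init__(self, window_seconds):
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def add(self, timestamp):
        """Record an event and return the count in the window ending now."""
        self._timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Evict events that are no longer inside the window.
        while self._timestamps and self._timestamps[0] <= cutoff:
            self._timestamps.popleft()
        return len(self._timestamps)

counter = SlidingWindowCounter(window_seconds=60)
# Hypothetical event times in seconds.
counts = [counter.add(t) for t in [0, 10, 30, 65, 100]]
```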

3. Data Transformation and Pipeline Automation

Data transformation converts raw data into structured, analysis-ready formats. Pipeline automation handles the orchestration, so this work runs reliably without manual intervention.

Common applications:

- Automated data cleansing that fixes formatting issues, removes duplicates, and standardizes values

- Pipeline orchestration tools that schedule jobs, manage dependencies, and recover from failures

- Continuous integration for data, where changes to schemas or transformations get tested before they hit production

The benefit is fewer broken pipelines and fewer manual fire drills. According to the 2023 McKinsey article on the evolution of the data-driven enterprise, legacy technology and high computational demands mean only a fraction of available business data gets ingested, processed, and analyzed in real time, which leaves significant value on the table. Automation across the pipeline is what closes that gap.
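Testing data before it reaches production can start with very small checks. The sketch below validates a batch of rows for missing required fields and duplicate keys; the `validate` function and its rules are illustrative assumptions rather than a reference to any particular tool.

```python
def validate(rows, required, unique_key):
    """Return a list of problems found; an empty list means the batch passes."""
    problems = []
    seen = set()
    for i, row in enumerate(rows):
        for field in required:
            if row.get(field) in (None, ""):
                problems.append(f"row {i}: missing {field}")
        key = row.get(unique_key)
        if key in seen:
            problems.append(f"row {i}: duplicate {unique_key}={key}")
        seen.add(key)
    return problems

# Hypothetical batch with one bad row.
batch = [
    {"id": 1, "email": "a@example.com"},
    {"id": 1, "email": ""},
]
issues = validate(batch, required=["id", "email"], unique_key="id")
```

A pipeline run can then fail fast when `issues` is non-empty, instead of loading the bad rows and surfacing the problem in a dashboard days later.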

How to Identify the Right Data Engineering Use Cases

Not every use case is worth building. The ones worth pursuing share three traits: they solve a real business problem, the data infrastructure can support them, and the impact is large enough to justify the work.

1. Aligning Use Cases With Business Goals

Start with the problem, not the technology. A use case that does not connect to a business outcome will not get adopted, no matter how technically elegant the pipeline is.

The approach is straightforward:

- Identify the business problems where slow, missing, or fragmented data is causing pain

- Focus on outcomes that can be measured, such as revenue, cost, customer retention, or risk reduction

- Get sign-off from the business owner who will actually use the output

2. Evaluating Data Infrastructure and Readiness

Some use cases need infrastructure that does not exist yet. A real-time fraud detection system cannot run on a warehouse that refreshes once a day. Knowing what you have and what you need prevents projects from getting stuck halfway through.

Factors to assess:

- Data availability: Is the data being generated? Is it accessible? Is the quality good enough?

- Scalability: Can current systems handle growing volumes, or will the use case break them?

- Tooling and skills: Does the team have the platforms and expertise to build and operate the pipeline?

3. Prioritizing High-Impact Use Cases

Once a list of viable use cases exists, the next question is which ones to build first. The honest answer usually comes down to impact divided by effort.

Useful priority categories:

- Revenue impact: Use cases that directly drive sales, retention, or pricing decisions

- Efficiency gains: Use cases that cut manual work or reduce operational costs

- Risk reduction: Use cases that prevent fraud, ensure compliance, or improve security

A common pattern is to start with one or two high-impact, lower-complexity use cases to build momentum, then move to harder ones once the team has wins under its belt.
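The "impact divided by effort" heuristic is easy to write down. In the sketch below, the use cases and their 1 to 10 scores are invented for illustration; the scoring itself is a judgment call that teams would make together.

```python
def prioritize(use_cases):
    """Sort use cases by impact-to-effort ratio, highest first."""
    return sorted(
        use_cases, key=lambda u: u["impact"] / u["effort"], reverse=True
    )

# Hypothetical candidates with made-up scores on a 1-10 scale.
candidates = [
    {"name": "real-time fraud scoring", "impact": 9, "effort": 8},
    {"name": "automated weekly sales report", "impact": 6, "effort": 2},
    {"name": "customer 360 profile", "impact": 8, "effort": 5},
]
ranked = [u["name"] for u in prioritize(candidates)]
```

With these scores, the automated report ranks first even though fraud scoring has the highest raw impact, which matches the pattern of starting with lower-complexity wins.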

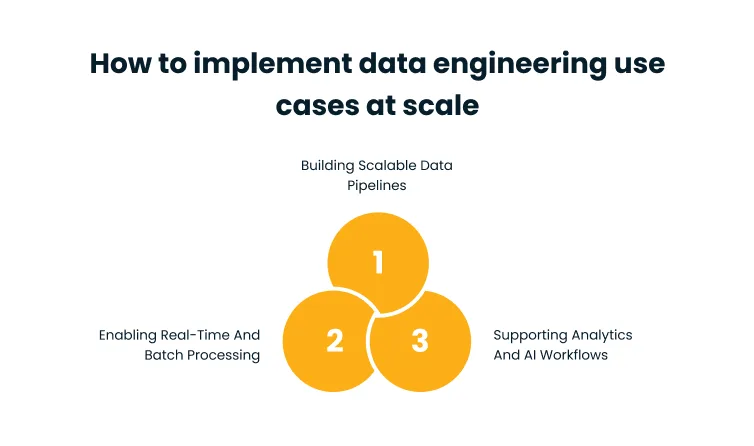

How to Implement Data Engineering Use Cases at Scale

Building one pipeline is straightforward. Building dozens that work reliably across the enterprise is a different problem. Scale requires modular architecture, automation, and integration with the rest of the data and AI stack.

1. Building Scalable Data Pipelines

Pipelines that handle today’s volume often break under tomorrow’s. Designing for scale from the start saves a lot of rework later.

The practical approach:

- Modular design: Build pipelines as composable components rather than monoliths, so individual pieces can be updated or replaced without rebuilding everything

- Cloud-based systems: Use elastic compute and storage so capacity scales up and down with demand

- Observability: Build in monitoring and alerting from day one, so issues get caught before they affect downstream users
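Modular design can be illustrated by composing small, independent steps into one pipeline. This is a sketch under simple assumptions: the step functions and the `pipeline` helper are hypothetical, and each step takes and returns a list of rows.

```python
from functools import reduce

def pipeline(*steps):
    """Compose independent steps into one callable, applied left to right."""
    return lambda data: reduce(lambda acc, step: step(acc), steps, data)

def drop_nulls(rows):
    """Remove rows with no amount."""
    return [r for r in rows if r.get("amount") is not None]

def only_paid(rows):
    """Keep only rows whose status is 'paid'."""
    return [r for r in rows if r.get("status") == "paid"]

def to_cents(rows):
    """Add an integer amount in cents alongside the original amount."""
    return [{**r, "amount_cents": round(r["amount"] * 100)} for r in rows]

clean_orders = pipeline(drop_nulls, only_paid, to_cents)
result = clean_orders([
    {"amount": 12.5, "status": "paid"},
    {"amount": None, "status": "paid"},
    {"amount": 3.0, "status": "refunded"},
])
```

Because each step has the same shape, one can be replaced or tested on its own without touching the others.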

Cygnet.One’s Data and AI services help organizations design and deploy modular, cloud-native pipelines that grow with the business rather than getting in its way.

2. Enabling Real-Time and Batch Processing

Most enterprises need both batch and real-time processing, often for different parts of the same use case. A hybrid architecture handles both without forcing teams to choose.

The approach is to treat batch and streaming as complementary:

- Use streaming for low-latency needs like fraud, monitoring, and personalization

- Use batch for high-volume, less time-sensitive workloads like financial reporting and historical analysis

- Build a unified data layer that both approaches feed into, so downstream consumers see consistent data regardless of how it arrived

3. Supporting Analytics and AI Workflows

Pipelines do not exist in isolation. They feed BI tools, ML platforms, and, increasingly, generative AI applications. Tight integration with these downstream systems is what turns a pipeline into a business outcome.

Practical steps:

- Connect pipelines to BI tools so reports refresh automatically with current data

- Feed feature stores and ML platforms so data scientists can train and deploy models without rebuilding inputs

- Apply governance and lineage tracking so teams know where data came from and how it was transformed
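Lineage tracking, at its most basic, means recording what each step read, what it wrote, and how many rows passed through. The decorator below is a hypothetical sketch: the `track_lineage` name, the in-memory log, and the table names are assumptions, and a real setup would write to a data catalog or metadata store.

```python
import functools

# Hypothetical in-memory lineage log, for illustration only.
lineage_log = []

def track_lineage(source, target):
    """Decorator that records where a step read from and wrote to."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(rows):
            result = func(rows)
            lineage_log.append({
                "step": func.__name__,
                "source": source,
                "target": target,
                "rows_in": len(rows),
                "rows_out": len(result),
            })
            return result
        return wrapper
    return decorator

@track_lineage(source="crm.contacts", target="warehouse.dim_customer")
def dedupe_contacts(rows):
    """Keep one row per email address (the last one seen wins)."""
    return list({r["email"]: r for r in rows}.values())

contacts = dedupe_contacts([
    {"email": "a@example.com"},
    {"email": "a@example.com"},
    {"email": "b@example.com"},
])
```

After this call, the last entry in `lineage_log` shows that three rows went in and two came out, which is the kind of trail that helps when someone asks why a report's numbers changed.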

Conclusion

Data engineering use cases are how organizations turn raw data into something the business can actually use. The use cases that matter most will vary by industry and company, but the underlying logic is consistent: identify the business problems where better data would make a real difference, build the pipelines to deliver it, and design for scale from the start.

The next steps are practical. Pick two or three use cases where slow or fragmented data is causing measurable pain. Audit the infrastructure to confirm you can support what you want to build. Then start with a focused pilot, prove the value, and expand from there.

Cygnet.One’s Data and AI services help enterprises move from scattered data to scalable pipelines, with real-time processing, analytics integration, and AI-ready outputs built in. To see how this could apply to your organization, book a demo.

FAQs

What are some beginner-friendly data engineering use cases?

Beginner-friendly use cases include simple ETL pipelines, automated reporting dashboards, and consolidating data from two or three source systems into a single view. These projects deliver visible value quickly without requiring streaming infrastructure or advanced ML pipelines, and they help teams build confidence with the tooling before tackling harder problems.

How do data engineering use cases differ across industries?

The fundamentals stay the same, but the priorities shift. Retail focuses on customer analytics and inventory optimization. Financial services prioritize fraud detection and regulatory reporting. Healthcare emphasizes patient data integration and compliance. Manufacturing relies heavily on IoT and predictive maintenance. Each industry shapes its data engineering investment around its biggest operational and regulatory challenges.

What tools are commonly used for data engineering?

Common tools include Apache Spark for large-scale processing, Apache Kafka for streaming, Airflow or Dagster for workflow orchestration, dbt for transformation, and cloud platforms like AWS, Azure, or Google Cloud for storage and compute. The right mix depends on existing infrastructure, team skills, and the latency requirements of the use cases being built.

How does data engineering support a data-driven culture?

Reliable, accessible data is the foundation of a data-driven culture. When teams can trust that the numbers they see are accurate and current, they stop debating data and start using it. Data engineering removes the friction that pushes people back to gut-feel decisions, which, over time, shifts how the organization operates.

What are the biggest challenges in implementing data engineering use cases?

The biggest challenges are usually data quality, integration complexity, and skills gaps. Pulling data from legacy systems is often harder than expected. Maintaining quality at scale takes ongoing investment. And hiring engineers with the right cloud and streaming experience is competitive. Infrastructure costs and security requirements add further constraints.

How do you measure the success of data engineering use cases?

Technical metrics include pipeline reliability, data freshness, query performance, and reduction in manual data handling. Business metrics matter more in the long run: faster decision-making, improved operational efficiency, increased adoption of analytics across teams, and direct outcomes like reduced fraud losses or higher conversion rates. The strongest measures combine both.