Most enterprises today have an AI initiative running somewhere. Many have several. What far fewer have is a clear answer to which of those initiatives is actually changing how the business operates, and which are still demos waiting for a budget cycle to expire.

The gap between activity and outcome defines the current AI moment. Investment is no longer the constraint; direction is. Use cases that look promising on a strategy deck often run into messy data, unclear ownership, or KPIs nobody agreed to in advance, and they quietly stall in the space between proof-of-concept and production.

According to the 2025 McKinsey State of AI Report, 78% of organizations now report using AI in at least one business function, up from 72% in early 2024 and 55% the year before.

Adoption has crossed the threshold where the question is no longer whether to use AI, but where it pays back fastest and which use cases earn the right to scale across the organization.

In this guide, we walk through the AI use cases in business that consistently deliver measurable value across industries, the underlying categories of AI applications that anchor them, and the framework we apply to separate high-impact opportunities from expensive experiments.

Top AI use cases in business across industries

AI use cases in business look different in every sector because the underlying constraints differ. A hospital optimizing radiologist throughput is solving a different problem than a bank scoring credit applicants in real time, even when the underlying machine learning techniques overlap.

The strongest enterprise AI programs anchor each use case in an industry-specific pain point, such as diagnostic accuracy, equipment uptime, fraud loss, or conversion rate, rather than in the technology itself.

The five categories below are where we see the highest density of production AI today and the clearest line from model output to business impact.

1. AI in Healthcare

Healthcare AI is concentrated in clinical decision support and operational efficiency. On the clinical side, models trained on imaging data flag suspected pathologies in radiology, ophthalmology, and dermatology with sensitivity that meaningfully shifts triage workflows.

Early-warning models score patient deterioration risk in ICUs hours before vital signs degrade visibly, changing how nursing teams allocate attention across a ward.

Risk-stratification models for chronic disease populations identify which patients are most likely to be readmitted, enabling targeted intervention before a costly admission becomes inevitable.

On the operational side, AI is rewriting how hospitals plan capacity. Length-of-stay prediction, no-show forecasting, and staffing optimization models reduce the gap between admitted demand and available beds.

Revenue cycle automation handles claims coding, denial prediction, and prior authorization volumes that used to absorb significant administrative headcount.

The reason these use cases scale faster than clinical models is that they operate on structured operational data the hospital already owns, and they sit upstream of revenue, which makes the business case unambiguous.

According to the 2025 Deloitte Agentic AI in Healthcare Survey, 59% of early-adopter health systems expect cost savings above 20% within two to three years, compared with only 13% of organizations still in a watch-and-wait posture.

The gap is a leading indicator of where the productivity dividend will land. Health systems that built foundational data infrastructure early are now compounding returns across clinical, operational, and revenue cycle use cases simultaneously.

2. AI in Manufacturing

Manufacturing was one of the first sectors where AI moved past pilots, largely because the data sources (sensor streams, MES logs, and vision feeds) already existed in usable form.

Predictive maintenance is the headline use case: vibration, temperature, and acoustic models predict component failure days or weeks before it happens, converting unscheduled downtime into planned maintenance windows where parts and labor can be staged ahead of time.

Quality inspection is the second pillar, with computer vision models catching surface defects, dimensional deviations, and assembly errors that human inspectors miss at line speed.

Production optimization is now where most of the operational lift is concentrated. Models that tune setpoints in real time across furnaces, mixers, filling lines, and CNC equipment reduce yield loss and energy use simultaneously.

Demand-aligned production scheduling reduces work-in-progress inventory and shortens lead times to customers. Energy optimization models, increasingly important as power costs become volatile, cut consumption without reducing throughput by orchestrating equipment cycles against tariff windows.

According to a 2025 McKinsey study on rewiring maintenance with gen AI, one industrial operator using a gen AI maintenance copilot cut unscheduled downtime by as much as 90%, with maintenance labor costs falling by a third and technicians gaining 40% more capacity.

Once predictive maintenance is reliable, planners can shorten safety stocks, finance can release tied-up working capital, and reliability engineers can shift from firefighting to root-cause analysis on the small set of failures that still surprise the model.

3. AI in Retail and E-commerce

Retail AI sits closest to the customer, which is why the use cases are denser and the feedback loops are faster. Personalized recommendations on product, search, and merchandising drive conversion uplift that compounds over millions of sessions.

Demand forecasting tightens inventory planning across SKUs and stores, reducing the twin costs of stockouts and end-of-season markdowns.

Dynamic pricing models adjust to competitor moves, weather signals, inventory positions, and elasticity curves within hours instead of weeks, reclaiming margin that static pricing leaves on the table.

Customer sentiment models read reviews, support transcripts, and social channels to flag product issues before they reach a churn cliff or a regulatory complaint.

Returns prediction models identify which orders are likely to be sent back and prompt either a different product recommendation or a sizing intervention before the package ships, attacking one of the largest hidden cost lines in e-commerce.

Visual search and conversational shopping experiences are now reshaping discovery itself, particularly in fashion and home categories where verbal queries underrepresent what shoppers actually want.

The retailers seeing the strongest results are not the ones with the most models, but the ones who connect those models to a single decision layer across assortment, pricing, promotion, and replenishment, so the recommendations actually change what shoppers see and what stores stock.

Without that connective tissue, individual models produce dashboards that planners read and ignore.

4. AI in BFSI

Banking, financial services, and insurance run on probabilistic decisions at scale, which makes them a natural fit for AI.

Fraud detection is the most mature use case: transaction-level models flag anomalous patterns in real time, blocking suspicious activity within milliseconds while keeping false positives low enough to preserve customer experience at the point of sale.

Credit scoring extends beyond traditional bureau data into cash-flow patterns, transactional history, and alternative signals, expanding the addressable population without raising default risk in segments that traditional underwriting could not serve confidently.

Risk and compliance use cases are where AI is now compounding hardest. Models that triage AML alerts, classify suspicious activity reports, and screen transactions for sanctions exposure are cutting investigative cycle times sharply while improving the precision of escalation.

Insurance carriers are deploying claims triage models that route low-severity claims to straight-through processing and reserve adjuster attention for cases that genuinely require it.

Customer service automation across voice bots, chat assistants, and intelligent routing is reducing handle time on the high-volume, low-complexity tail of inbound traffic without degrading resolution rates.

The integration pattern matters as much as the model in BFSI. Predictions that do not flow back into core banking, policy administration, or case management systems sit in dashboards that frontline staff bypass under volume pressure.

Cygnet.One’s Business Analytics and Embedded AI service is built around this constraint, embedding model outputs directly into the ERP, CRM, and core platforms business users already work in, so AI becomes a native part of the decision rather than a separate tool somebody has to remember to open.

5. AI in Marketing and Sales

Marketing and sales teams use AI to do three things faster: segment, target, and personalize.

Lookalike modeling identifies high-conversion audiences from first-party data, replacing broad demographic targeting with behavioral precision. Lead scoring ranks the pipeline by conversion likelihood so sales effort lands on accounts where it actually changes outcomes, rather than being distributed evenly across a list.

Content personalization tailors landing pages, emails, product feeds, and recommendations to individual session signals, lifting conversion without proportional content production cost.

Campaign optimization closes the loop. Bid algorithms allocate spend across channels in real time, attribution models reconcile multi-touch journeys to identify which interactions actually drove revenue, and lifetime-value models reshape acquisition strategy around long-term return rather than first-purchase economics.

Generative AI is now collapsing the cost of variant production for ad copy, subject lines, and landing pages, which lets marketing teams test orders of magnitude more combinations than the manual process supported.

The marketing teams getting the most from AI are the ones who treat it as a measurement and decisioning layer first and a content factory second. Generation without measurement produces volume that erodes brand consistency and trains audiences to ignore you.

Measurement without generation produces analytical capacity that no creative pipeline can keep up with. The combination, sequenced correctly, is where the compounding return lives.

Types of AI applications in business

Underneath the industry use cases, AI applications cluster into a small number of recurring archetypes.

Recognizing the archetype matters because each one has a different data requirement, a different deployment pattern, a different talent profile, and a different measurement framework.

Treating personalization like automation, or decision intelligence like forecasting, is one of the most common reasons enterprise AI programs underperform their business case.

1. Automation and Process Optimization

Automation is the entry point for most enterprise AI programs because it has the clearest line to cost savings and the lowest organizational change burden.

Document-heavy workflows such as invoice processing, claims intake, KYC onboarding, and trade finance documentation combine OCR, NLP, and rule-based extraction to convert unstructured paperwork into structured data without manual touchpoints.

Intelligent routing models decide which tickets, applications, or transactions need human review and which can be straight-through processed, which is where the volume gains actually come from.
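One way to picture intelligent routing is a confidence gate in front of straight-through processing. The sketch below is a minimal illustration, not a description of any particular product: the `ExtractedDocument` type, the field names, and the 0.95 threshold are all hypothetical, and a real threshold would be tuned against the error rate the business can tolerate.

```python
from dataclasses import dataclass

# Hypothetical threshold; tune it against the tolerable error rate.
STP_CONFIDENCE_THRESHOLD = 0.95


@dataclass
class ExtractedDocument:
    doc_id: str
    field_confidences: dict  # field name -> extraction confidence in [0, 1]


def route(doc: ExtractedDocument, threshold: float = STP_CONFIDENCE_THRESHOLD) -> str:
    """Send a document straight through only if every field clears the threshold."""
    if not doc.field_confidences:
        return "human_review"  # nothing was extracted, so never auto-process
    weakest = min(doc.field_confidences.values())
    return "straight_through" if weakest >= threshold else "human_review"


clean = ExtractedDocument("inv-001", {"vendor": 0.99, "amount": 0.97, "due_date": 0.96})
smudged = ExtractedDocument("inv-002", {"vendor": 0.99, "amount": 0.71, "due_date": 0.98})

print(route(clean))    # straight_through
print(route(smudged))  # human_review
```

Gating on the weakest field rather than the average is a deliberate choice in this sketch: one badly read amount is enough to make an invoice unsafe to auto-post.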

Process mining sits alongside automation, identifying where bottlenecks actually live in a workflow before automation is applied. The pairing matters because automating a broken process just produces the same defects faster.

Workflow orchestration, increasingly powered by agentic patterns that chain together multiple AI calls and structured tools, is where automation moves from single-task to multi-step.

The use cases that scale combine all three:

- Process mining to find what to automate

- Intelligent extraction to handle the unstructured inputs, and

- Orchestration to handle the cross-system coordination.

Cygnet.One’s Hyperautomation practice approaches this end-to-end, combining process mining, intelligent document processing with up to 97% accuracy, and RPA across functions like accounts payable, customer service, and procurement, so automation is sequenced where it actually compounds rather than applied to whichever process the loudest stakeholder nominated.

2. Predictive and Forecasting Applications

Forecasting models translate historical patterns into probabilistic views of the future. Demand forecasting drives inventory and production planning across retail, manufacturing, and consumer goods.

Churn prediction identifies which customers are leaving before they leave, giving retention teams a window to act with offers or service interventions. Equipment failure prediction underpins predictive maintenance. Cash-flow forecasting reshapes how treasury teams plan working capital across short and long horizons.

The reliability of these models depends on data depth more than algorithmic sophistication. Two years of clean historical data with consistent labels almost always outperforms five years of fragmented data run through a more complex model.

The use cases that scale are the ones where forecast accuracy is tied to a specific operational decision (a reorder quantity, a retention offer, a maintenance ticket, a hedging position), so the model’s output is consumed automatically by a downstream system rather than read in a dashboard and discounted by the planner.
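As an illustration of tying a forecast to a decision, the sketch below turns a demand forecast into a reorder quantity that a purchasing system could consume directly. The function name, the parameters, and the rules (whole cases only, a per-order cap) are assumptions chosen for the example, not a prescribed replenishment policy.

```python
import math


def reorder_quantity(forecast_units: float, on_hand: int, safety_stock: int,
                     case_size: int, max_order: int) -> int:
    """Convert a demand forecast into an order quantity, in units."""
    shortfall = forecast_units + safety_stock - on_hand
    if shortfall <= 0:
        return 0  # stock already covers forecast demand plus the buffer
    cases_needed = math.ceil(shortfall / case_size)  # order in whole cases only
    cases_allowed = max_order // case_size           # cap, rounded down to whole cases
    return min(cases_needed, cases_allowed) * case_size


# Forecast of 430 units, 120 on hand, 50 held as safety stock.
print(reorder_quantity(430.0, on_hand=120, safety_stock=50, case_size=24, max_order=480))
# 360
```

The point of the sketch is the shape of the output: an integer the ordering system can act on, rather than a forecast a planner has to interpret.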

3. Customer Experience and Personalization

Personalization use cases turn behavioral data into individualized experiences at the moment of interaction. Recommendation engines surface relevant products and content in product detail pages, search results, and email.

Conversational AI across voice assistants, chatbots, and in-app copilots handles inbound queries with context drawn from CRM, transaction history, and prior session signals.

Next-best-action models prompt sales and service representatives with the right offer, response, or escalation path in real time, converting tacit experience into a system-level capability that scales with headcount.

Personalization that feels intrusive or irrelevant erodes trust faster than no personalization at all, and the failure modes are easy to miss because they show up in slow-burn churn rather than immediate complaints.

The teams getting this right anchor every model output to a clear customer signal, such as recency, intent, or sentiment, rather than to a generic propensity score, and they monitor for personalization fatigue with the same rigor they apply to click-through. Personalization works best when it feels like service, rather than surveillance.

4. Decision Intelligence and Insights

Decision intelligence sits one layer above predictive models. It combines forecasts, business rules, optimization constraints, and contextual data to recommend or trigger specific actions rather than just predict outcomes.

Pricing optimization, network planning, supply chain orchestration, workforce scheduling, and capital allocation are common applications. The output is not a number but a decision (a price, a route, a shift assignment, a credit limit) that flows directly into an operational system and changes what happens next.

Real-time analytics is the substrate that makes this work. Streaming data pipelines, low-latency feature stores, and embedded BI surfaces let decisions happen at the cadence the business actually moves rather than at the cadence a quarterly review enables.

Cygnet.One’s Insights Driven Business Transformation approach builds this decisioning layer on top of integrated data sources, self-service BI, and reporting dashboards across BFSI, retail, manufacturing, and healthcare, so analytics translates into operational change rather than slide content for the next steering committee.

How to identify the right AI use cases in business

Identifying the right AI use cases in business is where most programs either compound or stall. The temptation is to start with the technology (a new model, a new platform, an internal LLM) and look for problems that fit it.

However, the best practice is to start with the business problem, audit the data that supports it, and only then ask which AI technique earns its place.

The framework below is the one we apply with enterprise teams when sequencing an AI portfolio, followed by the failure patterns we see most often when teams skip steps.

Step-by-Step Framework

Skipping any one of the steps below almost always forces a return to it later, usually after capital has been committed to a use case that the data or the organization is not ready to support. Each step creates the conditions that make the next step executable.

1. Define a clear business problem and objective:

Begin with a measurable goal that a CFO can write into a budget line and a business owner can be held to. For example:

- Reduce SMB churn by 15% within twelve months

- Cut invoice processing time by 60% in accounts payable

These are objectives that survive contact with reality. Vague goals like “improve operations with AI” or “explore generative AI” produce unaccountable outcomes and pilots nobody can call successful or unsuccessful with conviction.

The objective should be specific enough that we can identify the operational decision the model will inform, the metric that decision moves, and the baseline against which improvement will be measured throughout deployment.

Without that specificity, every status review becomes a debate about scope rather than a conversation about progress.

2. Identify and evaluate available data sources:

Audit data availability, quality, lineage, and access for the candidate use case before any technical design begins.

Most enterprises discover at this step that the data they assumed they had is either inaccessible behind another team, insufficient in volume, structured in ways that do not support the intended model, or only partially labeled.

Use cases die more often from data gaps than from model limitations, which is why this audit happens before architecture decisions rather than after.

The output of this step is a data readiness assessment that tells us whether the use case can launch on existing data, needs focused engineering to make data usable, or should wait until upstream investments mature. Honest answers here save quarters of rework.
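A data readiness assessment can start as something very small. The sketch below classifies a candidate dataset into the three outcomes described above; the function name, the field names, and every threshold (minimum row count, completeness, label coverage) are hypothetical placeholders that each team would set for its own use case.

```python
def assess_readiness(rows, required_fields, label_field,
                     min_rows=1000, min_completeness=0.95, min_label_coverage=0.80):
    """Classify a dataset as 'launch', 'engineer', or 'wait'."""
    if len(rows) < min_rows:
        return "wait"  # not enough history; upstream investment has to mature

    def filled_share(field):
        # Share of rows where the field is present and non-empty.
        return sum(1 for r in rows if r.get(field) not in (None, "")) / len(rows)

    completeness = min(filled_share(f) for f in required_fields)
    label_coverage = filled_share(label_field)
    if completeness >= min_completeness and label_coverage >= min_label_coverage:
        return "launch"   # usable as-is
    return "engineer"     # enough volume, but needs focused data work first


# Toy churn dataset: every third row is missing its label.
rows = [
    {"customer_id": i, "tenure_months": 12, "churned": (i % 2 == 0) if i % 3 else None}
    for i in range(1200)
]
print(assess_readiness(rows, ["customer_id", "tenure_months"], "churned"))  # engineer
```

A real audit would also cover lineage and access, which do not reduce to a ratio; this only shows how the volume and labeling checks can be made explicit and repeatable.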

3. Determine where AI adds the most value:

Focus on problems where pattern recognition, prediction, or personalization outperforms what a rule-based system, a well-designed report, or a workflow tool would accomplish.

Some problems are better served by simpler automation, and recognizing that early avoids a six-month engagement to reproduce what a SQL query and a workflow tool would have delivered in two weeks.

The signal that AI is the right tool is when the problem involves high-dimensional patterns humans cannot reason about manually, prediction at a scale or cadence humans cannot match, or personalization at a volume that breaks template-based approaches.

If the problem fails all three tests, the right answer is usually not AI, and saying so protects the budget for the use cases that genuinely need it.

4. Choose the right AI technique or model:

Match the technique to the problem rather than to the team’s current excitement. Tabular forecasting typically calls for gradient-boosted models. Document extraction typically calls for vision-language models. Conversational use cases call for fine-tuned LLMs or retrieval-augmented systems. Computer vision calls for purpose-trained networks.

The cost difference between techniques is significant: a gradient-boosted model costs orders of magnitude less to run at scale than an LLM doing the same tabular task.

Matching the technique to the problem prevents over-engineering a solution that a simpler approach would have solved at a fraction of the inference cost, and frees the LLM budget for the use cases that genuinely need generative capability.

5. Assess feasibility, cost, and ROI potential:

Estimate the build-and-run cost honestly, including data preparation, integration, monitoring, retraining, inference compute, and the change management required for adoption.

Compare against the realistic value the use case will return in year one and year two. Most early business cases overstate value because they ignore adoption friction and understate cost because they ignore ongoing operations.

A useful discipline is to model two scenarios:

- An optimistic case where adoption hits the target in six months, and

- A realistic case where it takes twelve.

If the use case still pays back in the realistic case, it deserves the next step. If only the optimistic case clears the bar, the assumptions need pressure testing before any commitment.
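The two-scenario discipline is easy to put into numbers. The sketch below computes the month in which cumulative net value first covers the build cost, under a linear adoption ramp. The ramp shape, the function name, and all figures are illustrative assumptions, not benchmarks.

```python
from typing import Optional


def months_to_payback(build_cost: float, monthly_run_cost: float,
                      monthly_value_at_target: float, months_to_target: int,
                      horizon_months: int = 24) -> Optional[int]:
    """First month where cumulative net value is non-negative, else None."""
    cumulative = -build_cost
    for month in range(1, horizon_months + 1):
        adoption = min(month / months_to_target, 1.0)  # assumed linear ramp to target
        cumulative += monthly_value_at_target * adoption - monthly_run_cost
        if cumulative >= 0:
            return month
    return None  # does not pay back within the horizon


# Hypothetical use case: 280k to build, 10k/month to run, 60k/month value at full adoption.
optimistic = months_to_payback(280_000, 10_000, 60_000, months_to_target=6)
realistic = months_to_payback(280_000, 10_000, 60_000, months_to_target=12)
print(optimistic, realistic)  # 9 13
```

In this made-up example, doubling the adoption ramp pushes payback from month 9 to month 13. If the approval bar were payback within twelve months, only the optimistic case would clear it, which is the signal to pressure-test the assumptions.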

6. Prioritize use cases based on impact vs effort:

Plot the candidate portfolio on an impact-effort grid. High-impact, low-effort use cases anchor the early roadmap, not because they are the most ambitious, but because they build the political capital and operational muscle the program needs for the harder use cases that come later.

Ambitious projects with sparse data or unproven integration paths wait their turn. Demonstrating value early is more strategically valuable than attempting flagship projects that stall before reaching production, because each visible win improves the data quality, the talent retention, and the executive willingness to fund the next investment.

Early wins also reduce the cost of subsequent use cases by exercising the integration patterns once.
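The impact-effort grid can be kept as a small, sortable structure rather than a slide. In the sketch below, the 1-to-5 scoring scale, the quadrant labels, the midpoint, and the example use cases are all hypothetical; the scores themselves still have to come from the business owner and the delivery team.

```python
from dataclasses import dataclass

QUADRANT_ORDER = ["quick win", "strategic bet", "fill-in", "deprioritize"]


@dataclass
class UseCase:
    name: str
    impact: int  # 1 (low) to 5 (high)
    effort: int  # 1 (low) to 5 (high)


def quadrant(uc: UseCase, midpoint: int = 3) -> str:
    """Place a use case in one of four impact-effort quadrants."""
    high_impact = uc.impact >= midpoint
    low_effort = uc.effort < midpoint
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "strategic bet"
    if low_effort:
        return "fill-in"
    return "deprioritize"


def prioritize(portfolio):
    """Order by quadrant, then by higher impact, then by lower effort."""
    return sorted(
        portfolio,
        key=lambda uc: (QUADRANT_ORDER.index(quadrant(uc)), -uc.impact, uc.effort),
    )


portfolio = [
    UseCase("Dynamic pricing", impact=5, effort=5),
    UseCase("Invoice extraction", impact=4, effort=2),
    UseCase("Internal FAQ bot", impact=2, effort=1),
    UseCase("Churn prediction", impact=4, effort=3),
    UseCase("Custom vision research", impact=2, effort=5),
]

for uc in prioritize(portfolio):
    print(f"{quadrant(uc):<14} {uc.name}")
```

Here the quick win (invoice extraction) anchors the roadmap, and the high-effort bets follow in order of impact.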

7. Develop a pilot or proof of concept:

Build a contained version of the use case on a single business unit, region, or product line with explicit entry and exit criteria.

The pilot exists to validate assumptions about data, model performance, integration complexity, user adoption, and operational readiness before the investment scales.

Pilots without exit criteria become permanent science projects that consume budget without ever being graded, and the longer they run without a verdict, the harder it becomes to either kill or scale them.

The exit criteria should be defined before the pilot starts and include:

- Which KPIs the model needs to move

- By how much

- Over what time window, and

- What happens at each outcome, including the option to stop.
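Exit criteria are easier to honor when they are written down as data before the pilot starts. The sketch below is one possible encoding: the `ExitCriterion` type, the KPI names, the figures, and the scale/extend/stop rule (including the 50% cutoff) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ExitCriterion:
    kpi: str
    baseline: float
    target_change_pct: float  # negative means the KPI should fall
    window_weeks: int         # agreed measurement window (recorded, not evaluated here)


def progress(c: ExitCriterion, observed: float) -> float:
    """Fraction of the targeted change actually achieved (1.0 means target met)."""
    actual_change_pct = (observed - c.baseline) / c.baseline * 100
    return actual_change_pct / c.target_change_pct


def verdict(criteria, observed: dict, stop_below: float = 0.5) -> str:
    """'scale' if every KPI hit target, 'stop' if any is far off, else 'extend'."""
    scores = [progress(c, observed[c.kpi]) for c in criteria]
    if all(s >= 1.0 for s in scores):
        return "scale"
    if any(s < stop_below for s in scores):
        return "stop"
    return "extend"


criteria = [
    ExitCriterion("avg_handle_time_sec", baseline=480, target_change_pct=-25, window_weeks=12),
    ExitCriterion("stp_rate", baseline=0.40, target_change_pct=50, window_weeks=12),
]

print(verdict(criteria, {"avg_handle_time_sec": 350, "stp_rate": 0.52}))  # extend
```

In the example, handle time beat its target but the straight-through rate reached only part of its goal, so the pre-agreed outcome is to extend rather than scale. The stop branch is written in from the start, which keeps that option available.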

8. Measure results and refine the approach:

Track the KPIs defined in step one against the pilot baseline rather than against an arbitrary “success” threshold defined after the fact.

Refine the model, the data pipeline, the user interface, and the integration layer based on what the pilot surfaces.

Most pilots reveal at least one assumption that was wrong, whether about data quality, user behavior, integration latency, or the operational workflow the model is supposed to support.

Treating those revisions as part of the plan rather than as setbacks keeps the program moving and prevents the “back to the drawing board” cycle that consumes the second half of stalled AI engagements.

9. Scale successful AI use cases across the organization:

Move from pilot to production with a clear scaling plan that addresses governance, model monitoring, drift detection, retraining cadence, and change management across business units that did not participate in the pilot.

The operational disciplines that turn a working model into a durable capability are easy to underestimate from inside a successful pilot and expensive to retrofit after the fact.

Scaling also surfaces standardization decisions (what platform, what feature store, what observability stack) that affect every future use case.

Making those decisions deliberately at the first scale-out is significantly cheaper than reconciling incompatible choices across multiple business units two years in.
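Drift detection is one of the scaling disciplines that can be prototyped in a few lines. The sketch below uses the population stability index (PSI), which is one common choice among several and is not named in the framework above. The bin count and the small floor for empty bins are assumptions, and the often-quoted reading of PSI above roughly 0.25 as significant drift is a rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, bins: int = 10) -> float:
    """Population stability index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Baseline feature values from training time versus a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [min(v + 0.3, 0.99) for v in baseline]

print(psi(baseline, baseline))        # 0.0
print(psi(baseline, shifted) > 0.25)  # True
```

Run on a schedule per feature, a check like this gives the retraining cadence a trigger instead of a calendar date.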

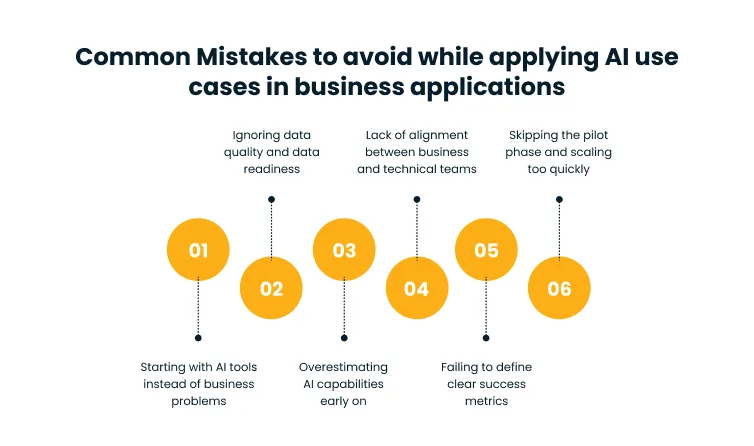

Common mistakes to avoid

The failure patterns we see in stalled AI programs are remarkably consistent across industries and company sizes. According to a 2025 Gartner press release on AI-ready data, through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data, a finding that maps directly to the mistakes below.

1. Starting with AI tools instead of business problems:

Tool-led initiatives produce demos rather than outcomes. A team excited about a new model, platform, or vendor pitch reaches for a use case to justify the investment, and the resulting project lacks a defined business problem to anchor it.

Without that anchor, success is undefined, the business owner is missing, and adoption stalls at the moment the pilot needs to convert into a production system that somebody is accountable for.

The best practice is to start with the operational problem worth solving, define the measurable outcome, and only then evaluate which technique fits. Tools that survive that filter earn their budget. Tools that do not, however impressive the demo, do not.

2. Ignoring data quality and data readiness:

Poor data produces unreliable models, and most enterprises underestimate how much of an AI program is actually a data engineering program.

Inconsistent labels, missing values, fragmented systems, and undocumented lineage create defects that surface late in development, when correcting them is expensive and demoralizing.

Teams routinely discover data problems six weeks into a model build, lose another twelve weeks fixing them, and emerge with a pilot that runs but never scales because the underlying foundation is not durable.

Cygnet.One’s Data Engineering and Management services address this upfront with maturity assessments, data quality automation, and AI-ready pipelines, so use cases launch on a foundation that supports them rather than one they have to renegotiate mid-flight.

3. Overestimating AI capabilities early on:

Expecting transformative results in the first quarter sets up disappointment that is structurally hard to recover from.

AI models compound value over multiple iterations, and the gap between a working pilot and a production system that delivers measurable business impact is often six to twelve months of integration, change management, and refinement work that is invisible from the demo.

The failure mode is rarely that the model underperforms. The failure is that executive expectations were calibrated to the demo rather than to the production trajectory.

Setting realistic expectations early, including the trough between pilot success and operational return, protects the program through the period where every successful AI program looks like it might be failing.

4. Lack of alignment between business and technical teams:

When the business owner and the data science team disagree on what success looks like, the model gets built but never gets deployed because nobody owns the integration, the change management, or the operational handover.

Alignment failures rarely show up at kickoff, where everyone is enthusiastic and the framing is intentionally broad. They surface at the handoff between proof-of-concept and production, which is the most expensive moment to discover them.

The correction is to write the success definition jointly at project start, with both the business owner and the technical lead signing off, and to revisit it at every gate. Disagreements caught early are cheap. Disagreements caught at handoff usually mean the project restarts from scratch.

5. Failing to define clear success metrics:

Without baseline KPIs, there is no way to prove a model worked or justify the next round of investment. “Improve customer experience” is not a metric. “Reduce average handle time by 25% on tier-one support tickets while keeping CSAT above 4.2” is.

The discipline of defining success up front, in numbers that a business leader recognizes and the operational system can already measure, is what converts an AI program from an R&D line item into a budgeted capability.

The corollary matters equally: the metric should be measurable in the existing reporting infrastructure, not require a six-month BI build to track.

Metrics that depend on a parallel measurement system rarely get measured.

6. Skipping the pilot phase and scaling too quickly:

Direct-to-production deployments without a contained validation step amplify failure modes that a pilot would have surfaced cheaply.

Edge cases that did not appear in development, integration issues that emerge at full data volume, latency problems that show up under peak load, and adoption resistance that scales with organizational reach all hit at once and at full cost.

The pilot phase is not a delay imposed by cautious leadership; it is the lowest-cost insurance an AI program can buy.

Skipping it is rarely a deliberate strategic choice. It usually happens because the program lost momentum and a stakeholder pushed for “real” deployment to demonstrate progress, which is exactly the moment when the pilot’s discipline is most needed.

Conclusion

The enterprises pulling ahead on AI are not the ones with the most models or the largest data science teams.

They are the ones who picked a small set of high-leverage use cases, sequenced them against data and organizational readiness, and treated each one as an operational change program rather than a technology delivery.

The compounding curve favors patience over breadth, and the gap between disciplined and undisciplined AI programs widens every quarter.

The next eighteen months will widen that gap further. Organizations that have moved AI into the operating layer will keep extending the lead because each successful use case improves the data, the talent, and the institutional muscle the next one depends on.

The decision worth making now is not which model to build, but which three use cases will earn the right to scale first and which discipline the program will use to get them there.

If you are scoping the AI use cases that should anchor your roadmap, the conversation we usually start with is your data readiness, your prioritized portfolio, and the operational changes each use case will trigger.

Book a demo with our team to walk through how we sequence enterprise AI from problem definition to production-grade scale.

FAQs

What are the most common AI use cases in business?

The most common AI use cases in business include personalized customer recommendations, demand and sales forecasting, fraud detection, predictive maintenance, intelligent document processing, marketing campaign optimization, and conversational customer support.

Which industries have the most AI in production today?

Healthcare, manufacturing, retail and e-commerce, BFSI, and marketing-driven businesses see the highest concentration of production AI today.

How do we prioritize AI use cases?

We start by anchoring each candidate use case to a measurable business problem, then audit data availability and quality, estimate ROI honestly, and plot the portfolio on an impact-versus-effort grid. High-impact, low-effort use cases anchor the early roadmap. Ambitious projects with sparse data or unproven integration paths are deferred until the foundation supports them.

What are the recurring challenges in implementing AI?

The recurring challenges are poor data quality, missing data engineering capability, weak alignment between business and technical owners, undefined success metrics, and scaling pilots without validation.

How long does it take to implement an AI use case?

A focused pilot typically runs eight to twelve weeks. A full production deployment with integration, monitoring, and retraining infrastructure usually takes three to six months for moderate-complexity use cases, and longer for use cases that require new data pipelines or significant change management. Scope and infrastructure maturity drive the timeline more than model complexity.