What if your biggest AI risk is not the model you choose, but the data engineering company you trust to prepare the foundation beneath it?

Most enterprises already have the data. The harder problem is making that data usable across cloud platforms, legacy systems, SaaS tools, and business applications that were never built to work together. Without the right engineering layer, even strong analytics and AI investments can get slowed down by unreliable pipelines, inconsistent definitions, weak governance, and fragmented infrastructure.

The urgency is real. According to Business Research Insights, only 31% of firms say their data is ready for AI. That means many enterprises are not blocked by ambition. They are blocked by the quality, scalability, and reliability of their data foundation.

This guide compares the top data engineering companies to consider in 2026 and explains how to choose the right partner for your environment.

What Is a Data Engineering Company?

A data engineering company helps organizations design, build, and manage scalable data systems that enable analytics, AI, and business intelligence. These firms specialize in data pipelines, modern data architectures, and cloud-based platforms that turn raw, fragmented data into reliable, queryable infrastructure.

The work sits upstream of everything else. Before a BI dashboard can surface insights, before a machine learning model can train, before a report can be trusted, the data must be collected, cleaned, structured, and made accessible. A data engineering company builds and maintains the systems that make all of that possible.

The best ones don’t just execute technical tasks. They design architectures that scale with your business, adapt to new data sources, and don’t need to be rebuilt every time your requirements change.

Key services include:

- Data pipeline development and orchestration

- Data architecture design (lakehouse, warehouse, mesh)

- Big data processing and real-time analytics

- Cloud data platform implementation

- Data governance and quality management

Why Enterprises Need a Data Engineering Partner Today

Most enterprises don’t have a data shortage. They have a fragmentation problem. Data sits in separate systems that were never designed to talk to each other, and the gap between collecting data and actually using it keeps widening.

The Shift from Siloed Data to Unified Data Platforms

The typical enterprise picture looks like this: CRM data in Salesforce, operational data in an ERP, clickstream data in a lake nobody fully governs, product telemetry somewhere else. None of it connects cleanly, and the result is a fragmented picture that makes both reporting and AI adoption unreliable.

A unified data platform fixes this by creating a single source of truth — ingesting from all sources, transforming consistently, and serving a single governed layer to analysts, data scientists, and business users. Building that requires engineering, not just tooling.

Business Impact of Strong Data Engineering

The commercial case is well-documented. According to McKinsey’s “Insights to Impact” report, companies running data-driven sales growth engines report EBITDA increases in the range of 15 to 25 percent. That kind of impact doesn’t come from buying the right software; it comes from having the infrastructure to actually use data when decisions need to be made.

Real-time analytics, AI deployment, and automation all depend on the same thing: data that is clean, accessible, and current. Without a strong data engineering foundation, these capabilities stall at the pilot stage and rarely make it to production.

Common Challenges Enterprises Face

Most enterprises don’t struggle with vision; they struggle with execution. The recurring blockers are:

- Lack of scalable platforms that hold up as data volumes grow

- Poor visibility across systems, making it hard to trust any single report

- Legacy infrastructure not designed for cloud workloads, real-time processing, or AI

- Inability to operationalize AI because model inputs are inconsistent or unavailable

These aren’t technology problems in isolation. They’re engineering problems, and the core reason enterprises turn to external partners rather than trying to build this entirely in-house.

What Services Do Top Data Engineering Companies Offer?

Top data engineering companies cover six core service areas. The scope varies by provider, but these are the capabilities that matter most when evaluating a partner.

Data Pipeline Development and Orchestration

A data pipeline moves data from where it originates to where it is needed, transforming and validating it along the way so it arrives ready for use. Building one sounds straightforward until you’re managing dozens of sources, handling schema changes, and ensuring pipelines don’t silently fail.

Top providers design for both batch and real-time use cases:

- Batch pipelines process large volumes of historical data on a schedule, useful for overnight reporting and model retraining

- Real-time pipelines process data as it arrives, essential for fraud detection, live dashboards, and event-driven applications

Common tools include Apache Airflow for orchestration, Kafka for high-throughput streaming, and dbt (data build tool) for transformation logic within the warehouse. The difference between a well-engineered pipeline and a fragile one is rarely the tools. It’s the design decisions and operational discipline behind them.
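To make the point about schema changes and silent failures concrete, here is a minimal sketch in plain Python, not tied to Airflow, Kafka, or dbt. The `orders` feed, its column names, and the `SchemaDriftError` class are hypothetical, chosen only for illustration. The idea is that a load step checks each record against an expected schema and raises instead of quietly passing bad data downstream.

```python
class SchemaDriftError(Exception):
    """Raised when an incoming record does not match the expected schema."""


# Hypothetical expected schema for an "orders" feed: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "customer_id": int, "amount": float}


def check_schema(record: dict, expected: dict = EXPECTED_SCHEMA) -> None:
    """Fail loudly if columns are missing, unexpected, or of the wrong type."""
    missing = sorted(expected.keys() - record.keys())
    unexpected = sorted(record.keys() - expected.keys())
    wrong_type = sorted(
        name for name, typ in expected.items()
        if name in record and not isinstance(record[name], typ)
    )
    if missing or unexpected or wrong_type:
        raise SchemaDriftError(
            f"missing={missing} unexpected={unexpected} wrong_type={wrong_type}"
        )


def load_batch(records: list[dict]) -> list[dict]:
    """Validate every record before anything is written downstream."""
    for record in records:
        check_schema(record)
    return records
```

In an orchestrated pipeline, a check like this would typically run as its own task, so a failed check stops the run and triggers an alert rather than producing a quietly wrong report.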

Data Architecture Design (Warehouse, Lake, Lakehouse, Mesh)

The architecture choice is one of the most consequential decisions in any data engineering engagement. Each model serves a different purpose:

- Data warehouse: stores structured, processed data optimized for querying and BI. Best when you have defined schemas and well-understood analytical needs. Snowflake, BigQuery, and Redshift are the dominant platforms.

- Data lake: stores raw data at scale in its native format, structured and unstructured. Flexible and cost-efficient, but hard to govern without careful management.

- Data lakehouse: combines lake storage scale with warehouse query performance and governance. Now the dominant architecture for organizations running both analytics and AI workloads. Typically built on open table formats such as Delta Lake or Apache Iceberg, or on platforms such as Databricks.

- Data mesh: a decentralized approach where domain teams own and publish their own data products. Architecturally complex, but scales well in large enterprises with many independent business units.

Choosing the right one isn’t purely technical. It depends on team maturity, compliance requirements, and how the data will be used downstream.

Big Data Processing and Distributed Systems

When data volumes exceed what a single server can handle, distributed processing takes over. Apache Spark is the industry standard for large-scale batch processing. It parallelizes computation across clusters and handles petabyte-scale workloads. For high-velocity event data in real time, frameworks like Kafka Streams and Apache Flink are the standard choice.

The engineering challenge isn’t running these systems. It’s tuning them for performance, managing cluster resources efficiently, and building pipelines that hold up under production load, not just in a test environment.

Cloud Data Platform Implementation

Most enterprises build on AWS, Azure, or GCP. Each has its own ecosystem of managed data services:

- AWS: Glue for ETL, Redshift for warehousing, S3 for storage, Kinesis for streaming

- Azure: Synapse Analytics, Data Factory, ADLS

- GCP: BigQuery, Dataflow, Pub/Sub

Choosing between serverless and provisioned managed services involves trade-offs between cost, control, and operational complexity. An experienced data engineering partner helps navigate those trade-offs based on actual workload patterns, not what’s easiest to implement.

Data Governance, Quality, and Observability

Data that nobody trusts is data nobody uses. Governance, quality, and observability are what make data reliable at scale:

- Governance: defines ownership, access rights, classification, and retention policies

- Quality frameworks: validate formats, ranges, and completeness, and alert when something breaks

- Lineage tracking: shows where each data point came from and how it was transformed, critical for debugging and regulatory audits
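As a rough illustration of what a quality framework checks, the sketch below validates completeness, format, and range for each row, and raises an alert flag when too many rows fail. The field names, the simple email pattern, and the 5% threshold are assumptions made for this example, not a recommended standard.

```python
import re

# Deliberately simple pattern; real email validation is more involved.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_row(row: dict) -> list[str]:
    """Return a list of human-readable problems found in one row."""
    problems = []
    # Completeness: required fields must be present and non-empty.
    for field in ("customer_id", "email", "age"):
        if row.get(field) in (None, ""):
            problems.append(f"{field}: missing")
    # Format: a non-empty email must look like an address.
    email = row.get("email")
    if email and not EMAIL_PATTERN.match(email):
        problems.append("email: bad format")
    # Range: a numeric age must be plausible.
    age = row.get("age")
    if isinstance(age, (int, float)) and not 0 <= age <= 120:
        problems.append("age: out of range")
    return problems


def quality_report(rows: list[dict], max_failure_rate: float = 0.05) -> dict:
    """Summarize failures and flag an alert when the failure rate is too high."""
    failures = {}
    for index, row in enumerate(rows):
        problems = validate_row(row)
        if problems:
            failures[index] = problems
    rate = len(failures) / len(rows) if rows else 0.0
    return {"failure_rate": rate, "alert": rate > max_failure_rate, "failures": failures}
```

A production framework would also record which pipeline run and source each failure came from, which is where quality checks and lineage tracking meet.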

Poor data quality has real financial consequences. One industry estimate puts the average cost at $15 million per year per organization, a figure that compounds as more systems depend on the same unreliable inputs.

Cygnet.One’s Data Analytics & AI services include governance and quality frameworks built for enterprise compliance requirements, with lineage tracking and monitoring embedded across the platform.

MLOps and AI Data Pipelines

AI doesn’t usually fail because of a bad model. It fails because of bad data. MLOps is the discipline of building and maintaining the data infrastructure that keeps models reliable in production. That means:

- Versioning training datasets so model inputs are traceable

- Building feature stores that serve consistent inputs at both training and inference time

- Monitoring for data drift before it affects model performance

- Automating retraining pipelines when performance degrades

Data engineering companies with MLOps depth don’t just build the initial pipeline. They design systems that stay accurate over time without constant manual intervention.
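One common way to monitor for data drift is the Population Stability Index (PSI), which compares how a feature is distributed in a baseline sample (for example, the training data) against a recent sample. The sketch below is a minimal, standard-library version under stated assumptions: equal-width bins derived from the baseline, and a small floor to avoid empty bins. It is one possible approach, not the method any vendor in this guide is known to use.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for constant features

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps empty bins from causing log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = proportions(baseline), proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs are conventions rather than guarantees. Whatever threshold triggers an alert or an automated retraining run should be tuned per feature.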

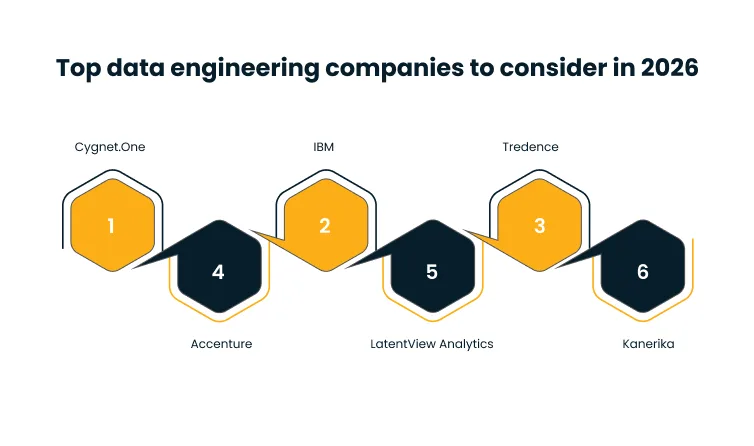

Comparison Table: Top Data Engineering Companies in 2026

Use this table to shortlist vendors quickly. For early filtering, focus on cloud expertise and engagement model. For deeper evaluation, compare industry experience and key differentiators.

| Company | Core Specialization | Key Services | Cloud Expertise | Industry Focus | Engagement Model | Ideal Size | Key Differentiator | Pricing |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Cygnet.One | AI-first data engineering | Pipelines, lakehouse architecture, governance, MLOps, cloud migration | AWS (Advanced Partner), Azure, GCP | BFSI, retail, manufacturing, healthcare | Project, dedicated, hybrid | Mid-market to enterprise | AI-ready architectures built from the ground up; end-to-end digital transformation | Custom |
| Accenture | Global technology consulting | Full-stack data engineering, AI, cloud migration, analytics | AWS, Azure, GCP | Finance, health, retail, energy | Large-scale project, managed | Large enterprise | Scale and global delivery capability | Premium |
| IBM | Enterprise data and AI platforms | Data fabric, governance, hybrid cloud, Watson integration | IBM Cloud, AWS, Azure | Finance, government, healthcare | Project, managed services | Large enterprise | Deep governance and regulatory compliance frameworks | Premium |
| LatentView Analytics | Analytics-led engineering | Data pipelines, BI, consumer analytics, cloud platforms | AWS, Azure, GCP | CPG, retail, technology | Project, dedicated | Mid-market to enterprise | Analytics-first engineering with strong consumer insights capability | Mid-range |
| Tredence | AI and advanced analytics | ML engineering, data platforms, supply chain analytics | AWS, Azure | Retail, CPG, manufacturing | Project, dedicated | Mid-market to enterprise | Domain-specific AI solutions with industry accelerators | Mid-range |
| Kanerika | Hyperautomation and data integration | ETL, data integration, RPA, cloud data platforms | AWS, Azure, GCP | Finance, healthcare, logistics | Project, hybrid | Mid-market | Hyperautomation-first approach combining data engineering with RPA | Mid-range |

Top Data Engineering Companies to Consider in 2026

Each profile below follows the same structure, so you can compare vendors consistently. The goal is to give you enough technical and commercial detail to shortlist with confidence, not just a surface-level overview.

1. Cygnet.One

Cygnet.One is a global technology company with a dedicated Data Analytics & AI practice, working primarily with mid-market and enterprise organizations across BFSI, retail, manufacturing, and healthcare. Its core positioning is AI-ready data infrastructure: pipelines and architectures designed from the start to support machine learning workloads, not just analytics.

As an AWS Advanced Tier Partner, its cloud engineering runs deepest on AWS but extends across Azure and GCP.

Key technical capabilities:

- End-to-end data platform development: ingestion, transformation using dbt (data build tool) and Spark, lakehouse storage on Delta Lake and Apache Iceberg, and the analytics layer on top

- Real-time pipeline engineering: streaming pipelines using Kafka and Apache Flink for fraud detection, operational dashboards, and IoT data processing

- Cloud-native architecture design: AWS (Glue, Redshift, S3, Kinesis), Azure (Synapse, Data Factory, ADLS), and GCP (BigQuery, Dataflow, Pub/Sub); serverless vs. managed trade-offs based on actual workload patterns

- MLOps and AI pipelines: feature stores, automated model retraining, dataset versioning, and data drift monitoring in production

- Governance and quality: lineage tracking, data contracts, schema validation, and observability monitoring for enterprise compliance

- Industry accelerators: pre-built solution patterns for BFSI and retail with domain-specific compliance logic already embedded

Pros:

- Single partner across the full data lifecycle, ingestion through to analytics and AI deployment

- AI-first architecture means less rework when initiatives scale beyond pilot

- AWS Advanced Tier partnership is verified expertise, not just platform familiarity

Cons:

- Less suited to purely advisory engagements without implementation

- Custom pricing requires a scoping conversation before budget estimation

- Deepest track record in BFSI and retail; validate domain experience for other verticals

Best for: Mid-market and large enterprises modernizing legacy infrastructure for analytics and AI; organizations that need one partner across data engineering, analytics, and deployment; cloud-first companies building on AWS.

2. Accenture

Accenture runs one of the largest data engineering and AI practices globally. Its scale means it can staff and manage multi-year, multi-region transformation programs that smaller providers cannot, across the full spectrum of enterprise industries and geographies.

Key technical capabilities:

- Full-stack data engineering: ingestion, transformation, warehousing, and orchestration across AWS, Azure, and GCP using both proprietary accelerators and open-source tooling

- Cloud migration frameworks: structured patterns for moving legacy on-premise infrastructure to cloud-native architectures across common enterprise stacks

- AI and ML platform development: model training pipelines, feature engineering infrastructure, and MLOps frameworks at enterprise scale

- Governance and compliance: lineage, access controls, and regulatory compliance tooling across multi-jurisdiction environments

- Managed analytics services: ongoing platform management, monitoring, and optimization post-implementation

Pros:

- Delivery scale few providers can match, with simultaneous workstreams across geographies

- Certified expertise across AWS, Azure, and GCP

- Proprietary accelerators reduce build time on common implementation patterns

Cons:

- Premium pricing puts it out of reach for most mid-market organizations

- Large program structures can slow decision cycles

- Less suited to organizations that need a focused, lean engineering team

Best for: Global enterprises running multi-year, multi-region data transformation programs that require large program management capability.

3. IBM

IBM’s position is built around its data fabric architecture, a unified approach that connects distributed data sources, enforces governance policies, and makes data accessible across hybrid environments without requiring full migration to a single platform.

Key technical capabilities:

- Data fabric implementation: connects data across on-premise, private cloud, and public cloud using a unified metadata and governance layer, without physical data consolidation

- Hybrid cloud engineering: data architectures spanning IBM Cloud, AWS, and Azure, with strength in environments where sovereignty or regulation prevents full public cloud migration

- Watson integration: embeds IBM’s AI platform directly into pipelines for organizations already running Watson workloads

- Enterprise governance: lineage, policy enforcement, privacy controls, and compliance tooling across complex multi-system environments

- Data virtualization: query across distributed sources without physical data movement, reducing replication overhead and latency

Pros:

- Data fabric approach suits organizations that cannot consolidate data into a single platform

- Strong governance tooling for finance and government sectors

- Hybrid cloud flexibility for sovereignty-constrained environments

Cons:

- IBM-heavy implementations create platform dependency that is costly to unwind

- Less suited to fully cloud-native, open-source-first approaches

- Implementation complexity can extend timelines in large hybrid environments

Best for: Large enterprises in regulated industries with significant existing IBM infrastructure; organizations with data sovereignty requirements that prevent full public cloud migration.

4. LatentView Analytics

LatentView operates at the intersection of analytics consulting and data engineering. Its engineering work is tightly coupled to its analytics capability, which means it excels when the primary objective is BI and consumer insights rather than AI infrastructure.

Key technical capabilities:

- Pipeline development: batch and near-real-time ingestion using Airflow, Spark, and dbt (data build tool) for transformation, primarily on AWS and Azure

- BI and analytics engineering: semantic layers, data models, and self-service BI tooling built on top of the data infrastructure

- Consumer analytics data products: customer segmentation, demand forecasting, and campaign attribution for CPG and retail use cases

- Cloud platform implementation: AWS (Redshift, Glue, S3), Azure (Synapse, Data Factory), and GCP (BigQuery)

Pros:

- Analytics-first engineering means infrastructure is built with the end use case in mind

- Strong CPG and retail domain expertise reduces scoping time and implementation risk

- Mid-market pricing without large consulting firm overhead

Cons:

- Narrower MLOps and AI infrastructure depth than engineering-first providers

- Limited regional coverage outside North America and India

- Less suited to organizations whose primary goal is AI operationalization

Best for: Mid-market CPG and retail organizations where BI and consumer analytics are the primary drivers; teams that want engineering and analytics from a single provider.

5. Tredence

Tredence focuses on AI-driven analytics and data engineering in retail, CPG, and manufacturing. Its library of industry-specific accelerators (pre-built data models, pipeline templates, and ML use case frameworks) is its primary differentiator for organizations in those verticals.

Key technical capabilities:

- ML engineering and AI pipelines: feature engineering, model training on AWS SageMaker and Azure ML, and inference pipeline management

- Lakehouse architecture: Delta Lake and Apache Iceberg on AWS and Azure, with Spark-based processing for large-scale batch workloads

- Supply chain analytics: data infrastructure for demand forecasting, inventory optimization, and supplier performance analytics

- Industry accelerators: pre-built data models and pipeline templates with domain-specific logic validated in production

- DataOps: CI/CD pipelines for data, automated testing, and production monitoring

Pros:

- Industry accelerators genuinely reduce implementation time for retail and CPG

- ML infrastructure was considered from the start, not added later

- Focused delivery model without large program overhead

Cons:

- Narrow vertical coverage; validate domain experience carefully outside retail, CPG, and manufacturing

- Less suited to governance-heavy data infrastructure requirements

Best for: Retail and CPG enterprises operationalizing AI with industry-specific data infrastructure; manufacturing organizations building supply chain analytics capability.

6. Kanerika

Kanerika combines data engineering with robotic process automation (RPA) and intelligent process automation (IPA) in the same engagement. This makes it well-suited to organizations that need to modernize data infrastructure and automate business processes simultaneously, without running separate workstreams with separate vendors.

Key technical capabilities:

- ETL pipeline development: batch and near-real-time pipelines using Airflow and dbt (data build tool) across AWS, Azure, and GCP

- Enterprise data integration: connects ERP, CRM, and operational systems using API-based integration and change data capture (CDC) patterns

- RPA and process automation: UiPath and Automation Anywhere workflows are integrated directly with data pipelines, so data outputs trigger automated business process actions

- Hyperautomation architecture: end-to-end automation combining data engineering, RPA, and workflow automation into a single system rather than disconnected point solutions

Pros:

- Data engineering and hyperautomation in a single engagement reduce vendor coordination

- Delivery model calibrated for mid-market organizations without large internal data teams

- Competitive pricing relative to the breadth of capability

Cons:

- MLOps depth is narrower than engineering-first providers

- Less suited to organizations whose primary need is pure data platform engineering at scale

Best for: Mid-market organizations combining data modernization with business process automation; organizations with significant manual process overhead downstream of data outputs.

Data Engineering Company vs. Data Consulting Firm: What’s the Difference?

One builds, the other advises. In practice, the line blurs, but understanding the distinction helps you avoid hiring the wrong type of partner for where you actually are.

Execution vs. Strategy Focus

A consulting firm’s core output is a document: a roadmap, an architecture recommendation, or a gap analysis. That work has genuine value early in a transformation. But it doesn’t build anything.

A data engineering company’s core output is working infrastructure: pipelines that run, platforms that scale, and architectures that are live and tested. Engineering firms may do advisory work, but execution is their primary accountability.

When You Need a Data Engineering Partner

You need an engineering partner when you’ve moved past “what should we do” and into “we need to build this.” Specifically:

- You’re implementing a new cloud data platform or lakehouse architecture

- You’re migrating from on-premise systems to a modern data stack

- Your current pipelines are failing, slow, or don’t scale

- You’re deploying AI, and the underlying data infrastructure isn’t ready

When Consulting Alone Is Not Enough

A strategy without an execution partner stalls. The pattern is common: an organization commissions a data strategy, receives a well-reasoned document, then discovers that implementing it requires engineering capability that neither the internal team nor the consulting firm has. The document sits.

Engineering partners are accountable for the system working, not just for the recommendation.

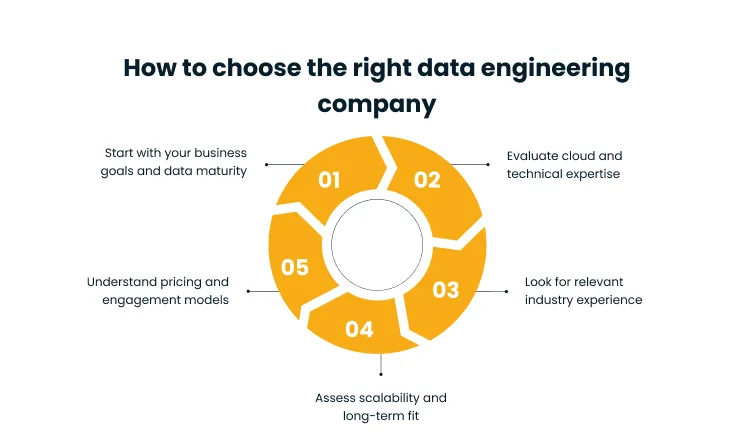

How to Choose the Right Data Engineering Company

The right partner depends on where your data environment is today, not just where you want it to go. These five criteria give you a structured way to evaluate any provider before committing.

1. Start with Your Business Goals and Data Maturity

Be honest about where you are before evaluating where you want to go. The right partner for an organization just beginning to centralize its data is not the right partner for an enterprise already running a lakehouse and moving toward AI operationalization.

Early-stage organizations need partners who set strong architectural foundations without over-engineering for scale they don’t have yet. More mature organizations need partners who can work within complex existing systems and build toward advanced analytics without breaking what’s already working.

2. Evaluate Cloud and Technical Expertise

Verify cloud platform expertise; don’t accept claims at face value. Confirm that any shortlisted partner has hands-on experience with your cloud of choice, and ask about the specific managed services, native integrations, and cost optimization approaches they use on that platform. Beyond cloud, ask about modern data stack experience: Airflow, Spark, Kafka, Delta Lake, dbt (data build tool).

3. Look for Relevant Industry Experience

Domain knowledge reduces implementation risk in ways that are hard to quantify until something goes wrong. A partner with financial services experience understands reconciliation workflows, auditability requirements, and transaction data volumes. One with healthcare experience understands HIPAA and the complexity of integrating clinical and claims data.

Industry experience shows up in three places: the accelerators they’ve already built, the compliance frameworks they come in with, and the questions they ask during scoping that a generalist firm wouldn’t think to ask.

4. Assess Scalability and Long-Term Fit

An architecture that works at current data volumes may not hold up at 5x or 10x. A pipeline that handles three sources cleanly may break when you add fifteen. Evaluate how a partner thinks about scalability as a design constraint from day one, not something to address later.

Three questions worth asking in any evaluation:

- How do you handle schema evolution when upstream sources change?

- How do you build for observability so failures surface before they affect downstream users?

- What is your approach to technical debt on data infrastructure?

5. Understand Pricing and Engagement Models

Data engineering engagements typically come in three forms:

- Fixed-scope projects: work well when requirements are clearly defined upfront

- Dedicated team models: better suited to environments where needs evolve continuously, and ongoing platform management is required

- Hybrid models: combine project-based delivery with retained support, useful when you need initial build capacity alongside longer-term optimization

The right model depends on how your data environment is changing and how much internal ownership you want to build over time. No single model is inherently better; the fit depends on your team’s maturity and how stable your requirements are.

Why Choosing the Right Data Engineering Partner Matters

The partner you choose shapes what your organization can do with data for the next several years, not just the next project. Three factors make this decision more consequential than most technology investments.

It Becomes the Foundation for AI and Automation

AI initiatives fail at the data layer more often than the model layer. Models trained on inconsistent inputs produce inconsistent outputs. Pipelines that break silently take automation down with them. The data infrastructure built today will either support or constrain every AI initiative that follows.

It Directly Impacts Decision-Making Speed and Accuracy

Fragmented data systems force decisions onto stale exports, disconnected reports, and whichever system a particular team happens to have access to. Well-engineered infrastructure compresses that cycle — real-time pipelines surface current information, and unified platforms ensure every team works from the same source of truth. The organizations that close that gap consistently outperform those that don’t.

It Future-Proofs Your Data Strategy

Architectures built on open formats, modular components, and cloud-native services adapt as data technology evolves. Those built on proprietary tooling or monolithic designs create the next problem while solving the current one.

Why Cygnet.One Is the Right Data Engineering Partner for Enterprises

Choosing a data engineering company is ultimately about finding a partner that can carry the work beyond the initial build, into the analytics, AI, and automation layers that create business value. Here is what Cygnet.One brings to that engagement.

AI-First Data Engineering Approach

Cygnet.One designs data platforms with AI readiness as a structural requirement, not an afterthought. Pipelines are built to support feature engineering, model training workflows, and real-time inference from the start, which means organizations avoid the costly rework that happens when AI initiatives outgrow infrastructure that wasn’t designed for them.

Strong AWS and Cloud Expertise

As an AWS Advanced Tier Partner, Cygnet.One has verified, hands-on expertise across the AWS data ecosystem: Glue for ETL, Redshift for warehousing, S3 for storage, Kinesis for streaming, and SageMaker for ML workloads. That partnership status reflects demonstrated technical capability and delivery track record, not just platform familiarity. Cygnet.One also works across Azure and GCP, giving organizations running multi-cloud environments a consistent engineering partner across all three.

Proven Experience with Global Enterprises

Cygnet.One has delivered data engineering engagements for large, complex organizations across BFSI, retail, manufacturing, and healthcare. That depth of experience shows up practically, in how engagements are scoped to account for multi-system integration, in compliance frameworks that come pre-built for regulated industries, and in an understanding of the organizational dynamics that determine whether a data transformation actually gets adopted.

End-to-End Capability Across the Data Lifecycle

Data engineering is the foundation, not the finish line. Cygnet.One covers the full journey through its Data Analytics & AI practice, from raw ingestion and pipeline development through to analytics, BI, and AI deployment. Organizations working with Cygnet.One don’t have to manage handoffs between an engineering partner, an analytics partner, and an AI partner. One team carries the work end-to-end.

Scalable, Secure, and Future-Ready Architectures

Cygnet.One builds on open table formats such as Delta Lake and Apache Iceberg, alongside cloud-native managed services, which means architectures don’t create vendor lock-in and can evolve as data volumes grow and new technologies emerge. Governance and compliance requirements are embedded at the architecture level, not bolted on after the fact.

Conclusion

Most enterprises don’t struggle with the decision to invest in data engineering; they struggle with knowing where to start and which partner to trust with the infrastructure that everything else will depend on.

The comparison table gives you a starting point for shortlisting based on cloud expertise, industry experience, and engagement model. The evaluation criteria in each section give you the right questions to pressure-test any provider before committing. Start with a clearly scoped engagement (a single domain, a specific pipeline, or a bounded platform component) and validate delivery capability before scaling the relationship.

The organizations building strong data foundations now will be the ones positioned to move faster on analytics, AI, and automation as those capabilities mature. The infrastructure decision is not optional; only the timing is.

If you’re ready to evaluate what the right data engineering architecture looks like for your environment, book a demo with the Cygnet.One team.

FAQs

A data engineering company designs, builds, and manages systems that move data from source to destination, covering pipelines, storage, transformation, and governance. The output is infrastructure that makes data consistently available for analytics, AI, and business decision-making.

Costs range from $20K for small projects to over $200K for enterprise implementations, depending on data volume, system complexity, and integrations. Pricing models include fixed-scope projects, time-based billing, and dedicated team arrangements.

Data engineering builds the infrastructure that makes data accessible and reliable. Data analytics uses that infrastructure to generate insights. Engineers work on pipelines and architecture. Analysts work on reports, dashboards, and business recommendations.

Small projects take 4–8 weeks. Mid-sized implementations run 2–4 months. Large enterprise transformations take 6–12 months or more, typically delivered in phases to manage risk and maintain momentum.

Not always early on, but the need grows as data volume and complexity increase. Companies scaling rapidly or moving toward AI adoption benefit from external expertise before infrastructure decisions become difficult to reverse.