Every enterprise evaluating AI consulting providers has navigated a version of the same situation. A vendor demonstrates impressive models, presents case studies from adjacent industries, and proposes a roadmap that sounds exactly right.

Six months later, the engagement hasn’t delivered what was promised, the model isn’t in production, and the internal team understands less about why than they should.

According to the 2025 Gartner Forecast on Worldwide GenAI Spending, worldwide GenAI spending is projected to reach $644 billion in 2025, up 76.4% from 2024. The investment is accelerating, but the ability to evaluate consulting partners rigorously enough to protect that investment is not.

In this blog, we cover what AI consulting services actually include, when they generate the most business value, and how to evaluate providers against criteria that predict delivery performance rather than presentation quality.

By the end, you’ll have a specific framework for selecting an AI consulting partner and a concrete reference point for evaluating Cygnet.One against it.

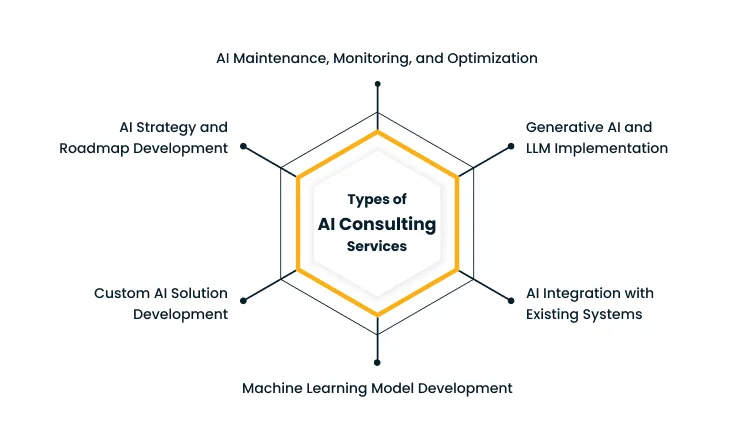

Types of AI Consulting Services

AI consulting covers a wide range of engagement types, from a four-week strategy sprint to a multi-year managed AI service. Understanding the types helps enterprise buyers scope an engagement around the capability gap they actually have, instead of contracting for capabilities they do not need or missing ones they do.

1. AI Strategy and Roadmap Development

Strategy engagements answer the questions an enterprise should resolve before any model is built. For example:

- Which business problems are worth solving with AI?

- Which are better served by simpler automation?

- Where does the data already exist, and where will it need to be sourced?

- What sequence of deployments will produce compounding returns over the next two to three years?

Typical deliverables include:

- A scored use case prioritization matrix

- A 12 to 24-month phased roadmap

- A capability gap assessment

- An investment envelope

- KPI definitions per use case, and

- A target operating model for AI governance.
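
A scored prioritization matrix can be as simple as a weighted sum per use case. The sketch below is illustrative only: the criteria, weights, use case names, and scores are all hypothetical, and a real engagement would agree them with business stakeholders.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights (they sum to 1.0); not taken from any real engagement.
# For "risk", a higher score means lower delivery risk.
WEIGHTS = {"business_value": 0.4, "data_readiness": 0.3, "feasibility": 0.2, "risk": 0.1}


@dataclass
class UseCase:
    name: str
    scores: dict  # criterion -> score from 1 (poor) to 5 (strong)


def weighted_score(use_case: UseCase) -> float:
    """Return the weighted sum of a use case's criterion scores."""
    return sum(WEIGHTS[c] * use_case.scores[c] for c in WEIGHTS)


def prioritize(use_cases):
    """Sort use cases from highest to lowest weighted score."""
    return sorted(use_cases, key=weighted_score, reverse=True)


# Illustrative candidates with made-up scores.
candidates = [
    UseCase("invoice classification",
            {"business_value": 4, "data_readiness": 5, "feasibility": 4, "risk": 4}),
    UseCase("churn prediction",
            {"business_value": 5, "data_readiness": 3, "feasibility": 3, "risk": 3}),
    UseCase("pricing optimization",
            {"business_value": 5, "data_readiness": 2, "feasibility": 2, "risk": 2}),
]

for uc in prioritize(candidates):
    print(f"{uc.name}: {weighted_score(uc):.2f}")
```

With these made-up inputs, the use case with the best data readiness ranks first even though its business value score is lower, which is the kind of sequencing trade-off a roadmap should make explicit.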

Engagement length is usually four to eight weeks. The deliverables should be detailed enough that a CFO can fund the program and a CTO can begin execution without further scoping work.

According to the 2026 Gartner Study on Data and Analytics Foundations for AI, organizations with successful AI initiatives invest up to four times more (as a percentage of their revenue) in their data quality, governance, AI-ready people, and change management than those whose initiatives stall.

A roadmap that sequences foundational investment ahead of model development is what produces this kind of compounding return over a multi-year horizon.

2. Custom AI Solution Development

Off-the-shelf AI products solve generic problems. Custom development is for use cases that depend on proprietary data, specific business logic, or workflows that no vendor’s pre-built model accommodates.

Examples include recommendation engines tuned to a specific retailer’s customer behavior, fraud detection models trained on a bank’s transaction patterns, and predictive maintenance systems calibrated to a manufacturer’s specific equipment profile.

A typical custom development engagement runs three to six months from kickoff to first production deployment, with discovery in weeks one to four, model development in weeks five to twelve, and integration plus user acceptance testing in the remainder.

Deliverables should include the trained model, the data pipelines, the inference infrastructure, monitoring dashboards, retraining playbooks, and the documentation that lets internal teams maintain the system after handover.

Cygnet.One’s Business Analytics and Embedded AI service approaches custom AI delivery with embedded integration as a default, building model outputs directly into the ERP, CRM, and industry-specific platforms business users already work in, rather than as standalone tools they have to switch into.

3. Machine Learning Model Development

Machine learning model development is the technical core of most AI engagements. It covers data preparation, feature engineering, model selection, training, validation, and production deployment.

Consultants here bring expertise in choosing the right algorithm for the problem, structuring the training pipeline so models can be retrained as data shifts, and building the monitoring infrastructure that catches performance degradation before it affects business outcomes.

Ask any prospective partner to walk through a recent ML engagement from data ingestion through production monitoring.

The answer should cover their feature store choices, validation thresholds, deployment cadence, and how they handle rollback when a new model underperforms in production. A team that cannot describe these specifics is not delivering production ML at scale.

4. AI Integration with Existing Systems

Most of the difficulty in enterprise AI comes from making the model work inside a stack that was designed years before AI was on the roadmap.

ERP systems, CRM platforms, supply chain tools, and customer support systems each have their own data structures, APIs, and update cadences that were not built with AI integration in mind.

Integration consulting covers:

- Data mapping between source systems and the AI inference layer

- Schema reconciliation across systems with conflicting field definitions

- Latency management for synchronous predictions inside transactional flows, and

- Workflow design that surfaces AI outputs inside the systems business users already work in.

Sample deliverables include the integration architecture, the API contracts, the fallback design for when the model is unavailable, and the user acceptance test plan that validates AI outputs against existing system data before go-live.
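
To make the fallback design concrete, here is a minimal sketch of one possible pattern: try the model, and if it is unavailable, return a deterministic rule-based answer while recording which path responded. The exception class, the ticket fields, and the rule itself are hypothetical and stand in for whatever the real integration uses.

```python
import logging

logger = logging.getLogger("ai_integration")


class ModelUnavailableError(Exception):
    """Raised by the (hypothetical) inference client when the model cannot be reached."""


def rule_based_priority(ticket: dict) -> str:
    """Deterministic fallback: an assumed rule the business used before the model existed."""
    return "high" if ticket.get("customer_tier") == "enterprise" else "normal"


def predict_priority(ticket: dict, model_call) -> dict:
    """Try the model first; fall back to the rule and record which path answered."""
    try:
        return {"priority": model_call(ticket), "source": "model"}
    except (ModelUnavailableError, TimeoutError) as exc:
        logger.warning("Model unavailable, using fallback rule: %s", exc)
        return {"priority": rule_based_priority(ticket), "source": "fallback"}


def broken_model(ticket: dict) -> str:
    # Stand-in for an inference call that fails, for demonstration only.
    raise ModelUnavailableError("inference endpoint timed out")


print(predict_priority({"customer_tier": "enterprise"}, broken_model))
```

Tagging each response with its source lets the acceptance test plan measure how often the fallback path fires and whether its answers diverge from the model's.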

Cygnet.One’s Hyperautomation service combines RPA, AI, and workflow orchestration to integrate AI outputs across enterprise platforms such as SAP, Salesforce, and Oracle, with intelligent document processing and process mining bundled as part of the integration toolkit.

5. Generative AI and LLM Implementation

Generative AI engagements have shifted from proof-of-concept work to production deployment over the past 18 months.

Common patterns include:

- Retrieval-augmented generation systems that ground LLM outputs in proprietary documentation

- Agentic workflows that chain LLM calls with structured tools to complete multi-step tasks, and

- Domain-specific models fine-tuned on enterprise data for higher accuracy and lower cost than general-purpose APIs.

Evaluate prospective partners on the GenAI-specific capabilities below:

- A working evaluation harness for LLM outputs, with concrete metrics for accuracy, hallucination rate, and latency

- Experience with at least two model providers (OpenAI, Anthropic, AWS Bedrock, Azure OpenAI, Google Vertex) and the ability to recommend across them based on the use case

- Cost optimization track record, including prompt compression, caching strategies, and tiered model routing

- Guardrail design for prompt injection, output safety, and PII handling
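
As a rough illustration of what an evaluation harness measures, the sketch below runs a stubbed model over a small test set and reports accuracy, a crude hallucination rate, and latency. Everything here is an assumption for illustration: the test cases are invented, `stub_model` stands in for a real provider client, and the substring check is a deliberately simple proxy for the grounding checks a production harness would use.

```python
import time
from statistics import mean

# Hypothetical test cases: a question, the expected answer, and the source
# text the answer must be grounded in.
CASES = [
    {"question": "What is the refund window?", "expected": "30 days",
     "context": "Refunds are accepted within 30 days of purchase."},
    {"question": "Who approves discounts?", "expected": "regional manager",
     "context": "Discounts above 10% need regional manager approval."},
]


def stub_model(question: str, context: str) -> str:
    """Stand-in for a real LLM call; replace with the provider client under test."""
    if "refund" in question.lower():
        return "30 days"
    return "the CEO"  # deliberately wrong and ungrounded


def evaluate(model, cases):
    """Run every case and report accuracy, a crude hallucination rate, and latency."""
    correct, ungrounded, latencies = 0, 0, []
    for case in cases:
        start = time.perf_counter()
        answer = model(case["question"], case["context"])
        latencies.append(time.perf_counter() - start)
        if case["expected"].lower() in answer.lower():
            correct += 1
        # Crude proxy: an answer that does not appear in the source text is
        # counted as ungrounded. Real harnesses use stronger checks.
        if answer.lower() not in case["context"].lower():
            ungrounded += 1
    n = len(cases)
    return {
        "accuracy": correct / n,
        "hallucination_rate": ungrounded / n,
        "mean_latency_s": mean(latencies),
    }


report = evaluate(stub_model, CASES)
print(report)
```

Because the model is passed in as a function, the same cases can be run against two or more providers, which is one way a partner could back up a cross-provider recommendation with numbers.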

Cygnet.One’s AWS Generative AI service covers production deployment of foundation models through Amazon Bedrock, custom fine-tuning through SageMaker, and agentic workflows through Bedrock AgentCore, with managed infrastructure that handles security, compliance, and scaling beyond pilot.

6. AI Maintenance, Monitoring, and Optimization

AI models in production are not static. Data distributions shift, business conditions change, and prediction accuracy drifts away from baseline performance over time.

Maintenance engagements cover the operational responsibilities that keep AI systems trustworthy and reliable in production. These include:

- Monitoring for model drift and data quality issues

- Scheduled retraining on updated data

- Inference cost optimization as usage scales, and

- Incident response when model outputs cause business impact.

A maintenance engagement is typically structured as a monthly retainer with defined SLAs around model performance, retraining cadence, and incident response time.

Without this discipline, the AI system that worked at launch becomes a liability within 12 to 18 months as the data the model was trained on diverges from current business reality.
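
One common way to quantify that divergence is the Population Stability Index (PSI), which compares how a feature is distributed in the training baseline versus current data. The sketch below is a minimal pure-Python version under stated assumptions: equal-width buckets over the baseline range, synthetic sample data, and a 0.25 alert threshold that is a widely used rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a current sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for a constant baseline

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge buckets.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration: a baseline and a copy shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.3 + i / 100 for i in range(100)]

ALERT_THRESHOLD = 0.25  # rule-of-thumb assumption; tune per feature

for name, sample in [("unchanged", baseline), ("shifted", shifted)]:
    score = psi(baseline, sample)
    status = "review for retraining" if score > ALERT_THRESHOLD else "stable"
    print(f"{name}: PSI={score:.3f} -> {status}")
```

A check like this, run on a schedule per input feature, is the kind of signal a retainer's SLAs on model performance and retraining cadence can be tied to.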

How AI Consulting Services Help Businesses Grow

The justification for hiring a consulting partner is the difference in business outcomes between a self-led AI program and one that benefits from focused expertise. The four growth levers below are where well-executed AI consulting engagements consistently produce measurable returns.

1. Automating Business Processes

The clearest return on AI consulting comes from automating high-volume, rule-based work that has historically required human judgment.

Document processing, invoice classification, customer ticket routing, fraud screening, and quality inspection are common targets.

Consultants design the automation so the AI handles high-confidence cases automatically and routes ambiguous cases to human reviewers, which protects accuracy without sacrificing the volume gains.
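
The routing logic described above can be sketched in a few lines. This is an illustration under assumptions: the 0.90 threshold, the document IDs, and the prediction format are hypothetical, and a real threshold would be tuned against samples that human reviewers have already checked.

```python
AUTO_THRESHOLD = 0.90  # hypothetical cutoff; tune against reviewed samples


def route(prediction: dict, threshold: float = AUTO_THRESHOLD) -> str:
    """Send confident predictions straight through and the rest to a person."""
    return "auto" if prediction["confidence"] >= threshold else "human_review"


# Made-up model outputs for three invoices.
predictions = [
    {"doc_id": "inv-001", "label": "utilities", "confidence": 0.97},
    {"doc_id": "inv-002", "label": "travel", "confidence": 0.62},
    {"doc_id": "inv-003", "label": "software", "confidence": 0.91},
]

queues = {"auto": [], "human_review": []}
for p in predictions:
    queues[route(p)].append(p["doc_id"])

print(queues)
```

Raising the threshold sends more cases to reviewers and protects accuracy. Lowering it increases automation volume. That trade-off is what the design work has to settle.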

Outcomes typically include a 30 to 60% reduction in manual processing time, faster cycle times for downstream workflows, and lower cost-per-transaction in operations that scale with revenue.

2. Improving Decision-Making with Data

Most enterprises have more data than they use. AI consulting engagements turn that data into models that surface patterns analysts cannot see manually.

Demand forecasting that is accurate enough to drive inventory decisions, customer churn models that flag accounts to retain before they cancel, and pricing optimization that reflects real-time competitive dynamics each move decision quality from intuition to evidence.

The business impact compounds because better decisions today produce better data for the next round of model training, which is why the second and third deployments in an AI program tend to deliver faster than the first.

3. Enhancing Customer Experience

Customer experience improvements from AI come from two directions:

- Faster response times through conversational AI handling routine support volume around the clock, with escalation paths to human agents for complex cases.

- More relevant interactions through recommendation systems that personalize product discovery based on individual behavior patterns.

Sentiment analysis on support transcripts surfaces friction points that would otherwise stay invisible to product teams.

Consultants help select which customer journey moments are worth instrumenting with AI and which are better served by simpler design changes, which avoids spending AI budget on problems that do not need AI.

4. Reducing Costs and Increasing Efficiency

AI engagements that reduce costs do so by attacking specific operational line items. Predictive maintenance lowers unplanned downtime in manufacturing.

Energy optimization reduces utility costs in data centers and facilities. Demand-driven workforce scheduling cuts labor costs in retail and hospitality.

Each of these requires the kind of domain-specific modeling and integration work that consultants are built to deliver.

The savings are quantifiable and recurring, which makes the engagement easy to justify to a CFO who wants to see the payback period before the next budget cycle.
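
The payback calculation itself is simple arithmetic: engagement cost divided by recurring monthly savings. The sketch below uses invented figures purely for illustration, and it ignores ongoing run costs and discounting, which a real business case would include.

```python
def payback_months(engagement_cost: float, monthly_savings: float) -> float:
    """Months until cumulative savings cover the one-time engagement cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive to reach payback")
    return engagement_cost / monthly_savings


# Illustrative numbers only: a 300,000 engagement saving 25,000 per month.
print(payback_months(300_000, 25_000))  # 12.0
```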

When Should You Hire an AI Consulting Services Company?

Internal teams can build AI capability over time, and many should. The question is when external expertise accelerates the program enough to justify the cost. The signals below are the most reliable indicators that a consulting engagement will produce better outcomes than another internal hiring round.

1. When You Have Data but Lack Actionable Insights

The signal here is that BI dashboards exist and are well-maintained, but business decisions are still being made without the data inputs the dashboards surface.

Reports describe what happened. AI models predict what will happen and prescribe what to do about it.

A consulting engagement focused on use case identification and rapid model development can convert dormant data infrastructure into operational impact within a single quarter, particularly when the underlying data is already clean and accessible.

2. When Manual Processes Are Slowing Down Operations

The signal is that transaction volumes are growing but throughput is flat or declining. Examples include underwriters reviewing applications by hand, support teams triaging tickets one at a time, and finance teams reconciling invoices manually.

Each has an AI-augmented version that scales without proportional headcount. Consulting partners bring the experience of having automated similar processes in comparable enterprises, which compresses the discovery and design phase from quarters to weeks and lowers the risk of building automation that the business ultimately cannot adopt.

3. When You Want to Implement AI but Lack In-House Expertise

Hiring senior AI talent in-house is competitive, expensive, and slow. The alternative is a consulting engagement that brings senior practitioners onto the program immediately, with a structured upskilling track for internal staff as the engagement progresses.

This pattern works particularly well for enterprises with strong domain expertise but no existing ML team. The consulting partner contributes the technical depth, the internal team contributes domain context, and knowledge transfer happens as part of the delivery rather than as a separate workstream after launch.

According to the 2026 Gartner CFO Survey on AI and Digital Talent, acquiring and developing AI and digital talent is the top near-term challenge cited by CFOs.

The shortage shows up in salary inflation, extended time-to-hire, and high attrition once AI engineers are onboarded, all of which favor an engagement model where senior practitioners arrive on day one rather than 9 to 12 months in.

4. When Scaling AI Solutions Across the Organization

A successful AI pilot is a different problem from an enterprise-wide AI program. Scaling requires standardized MLOps infrastructure, consistent model governance, reusable data pipelines, and change management across business units that did not participate in the pilot.

Consultants who have done this elsewhere bring playbooks for the standardization work, which is largely operational and easy to underestimate from the inside. The risk of skipping this expertise is fragmentation.

Different teams build incompatible solutions, and the cost of consolidating them later exceeds the cost of doing it right the first time.

5. When Existing AI Initiatives Are Not Delivering ROI

Some AI programs reach production but fail to produce the financial returns the business case promised. The reasons range from inadequate model accuracy and incomplete integration to poor adoption or a miscalibrated original business case.

A four to six-week diagnostic engagement with an external partner can identify which of these is the root cause and recommend whether to fix the existing system, redirect the use case, or sunset it and reallocate the budget.

According to the 2026 Gartner Survey on AI in Infrastructure and Operations, only 28% of AI use cases in infrastructure and operations fully succeed and meet ROI expectations, while 20% fail outright, with 57% of leaders attributing failure to expecting too much, too fast. Initiatives stuck between those two outcomes are expensive to keep running, and an external review is an inexpensive way to surface them.

6. When You Need a Clear AI Strategy and Roadmap

Enterprises with multiple uncoordinated AI initiatives running in parallel often discover that none connect to a coherent strategy.

A roadmap engagement consolidates the in-flight work, prioritizes against business value, sequences future initiatives, and establishes the governance structure that prevents future fragmentation.

This is high-leverage work because every downstream investment becomes more efficient once the strategy is in place. Most enterprises see the strategy engagement pay for itself within the first two AI investments it shapes.

According to the 2025 Gartner Survey on AI Maturity in Organizations, 45% of high-maturity organizations keep AI initiatives operational for at least three years, compared to only 20% in low-maturity organizations.

The maturity gap is largely structural rather than technical, which is why a strategy engagement disproportionately pays back over the long horizon.

7. When Time-to-Market Is Critical for AI Adoption

Some AI initiatives have a hard window. A competitor launches a feature, a regulator imposes a deadline, or a major customer demands a capability, and an internal build cycle of 12 to 18 months is too slow.

Consulting partners with relevant prior implementations can compress that timeline meaningfully, sometimes by 50% or more, by reusing architectural patterns and avoiding the discovery loops that internal teams have to work through from scratch.

The math of paying for acceleration usually favors the partner when time-to-market itself drives the business case.

How to Choose the Right AI Consulting Services Provider

The criteria below cover the dimensions that separate a partner who will deliver from one whose pitch outperforms their delivery:

1. Evaluate Industry Experience and Proven Case Studies

A provider that has solved a problem similar to yours, in your industry, with measurable outcomes, is dramatically more likely to deliver than one with generic AI credentials.

- Ask for case studies that include the specific business metric improved, the technical approach taken, and the post-deployment results 12 to 18 months later

- Request reference calls with two or three named clients in your industry

- Verify that the team proposed for your engagement is the same team that delivered the case study work

Red flag: vague references to “Fortune 500 clients” without specifics, or a refusal to provide referenceable contacts.

2. Assess Technical Expertise and AI Capabilities

The depth of the technical bench is the constraint on what a partner can actually deliver. Look for credible expertise across the full stack, including data engineering, classical machine learning, MLOps, generative AI, and the cloud platforms your environment runs on.

- Ask about specific frameworks, model architectures, and deployment patterns the team has worked with in the last 12 months

- Request resumes of the specific senior practitioners assigned to lead your engagement, with their direct project history

- Test technical depth in the evaluation by asking the proposed lead to walk through a deployment from data ingestion to monitoring

Red flag: heavy use of generic AI terminology without concrete project specifics, or a proposed lead who deflects technical questions to other team members.

3. Understand Their AI Development and Deployment Process

A defined, repeatable delivery process is what separates predictable engagements from open-ended ones.

Ask the provider to walk through their methodology end-to-end, covering:

- How they scope use cases and validate business value before development begins

- How they handle data discovery and quality assessment

- How they validate models against production criteria before deployment

- How they manage staged rollouts, monitoring, and rollback

- How they structure handover and knowledge transfer at engagement close

The answer should sound rehearsed in a good way, like a process they have run many times. A provider improvising the answer in real time is improvising on your engagement too.

4. Check Their Approach to Data Security and Compliance

Enterprise AI engagements involve sensitive data, including customer records, financial transactions, proprietary IP, and regulated information. The provider’s security and compliance posture is a hard requirement.

- Verify certifications relevant to your environment (SOC 2 Type II, ISO 27001, HIPAA, GDPR readiness, sector-specific frameworks)

- Ask about their data handling policies, including whether they work in your environment or extract data into theirs

- Confirm their approach to model training data isolation, especially for GenAI engagements, where prompt and output logs can leak sensitive information

- Review their incident response process and notification timelines

Red flag: missing or expired certifications, vague answers about where your data lives during the engagement, or no documented incident response process.

Cygnet.One’s Governance, Risk Management, and Compliance service helps enterprises build the certifications, audit processes, and risk controls that AI engagements increasingly require, including ISO 27001, SOC 2, and sector-specific regulatory frameworks.

5. Review Communication, Transparency, and Collaboration Model

The day-to-day experience of working with a consulting partner is largely determined by how they communicate.

- Confirm cadence and format of status reporting, with named owners on both sides

- Verify that technical leads are accessible directly, not gated by an account manager

- Request examples of how they have surfaced bad news in past engagements and how they handled the recovery

- Confirm collaboration tooling integrates with your existing Slack, Jira, GitHub, or equivalent stack

Red flag: weekly slide decks as the primary status mechanism, limited direct access to technical leads, or pushback on transparency around in-flight work.

6. Compare Pricing Models and Engagement Flexibility

Pricing models for AI consulting range from fixed-price project work to time-and-materials engagements to outcome-based contracts. Each fits different scenarios.

- Fixed-price: works for tightly scoped engagements with clear requirements, like a strategy sprint or a defined POC

- Time-and-materials: works for exploratory engagements where scope evolves during discovery, like first-of-its-kind deployments or diagnostic work

- Outcome-based or hybrid: aligns incentives well but requires both sides to agree on measurable outcomes and verification methodology upfront

- Managed service or retainer: works for ongoing maintenance, monitoring, and optimization after initial deployment

The right partner offers multiple models and is transparent about the tradeoffs of each.

Red flag: a single pricing model offered regardless of engagement type, or pricing that anchors high during pitch and adjusts opportunistically once scope is locked in.

Conclusion

AI consulting has matured into a procurement decision that buyers can evaluate against real outcomes from prior enterprise implementations.

The enterprises that get the most from these engagements treat them as multi-year capability-building partnerships, with each deliverable explicitly designed to leave the internal team better equipped to manage the next phase.

The risk in these decisions sits less in the spending itself and more in selecting a partner whose capabilities, process, and commercial incentives are misaligned with the business outcomes the program needs to deliver.

The criteria above convert a long shortlist into a defensible decision, and the diligence they require pays back across every subsequent engagement the partner runs.

Selecting an AI consulting partner shapes whether enterprise AI delivers measurable returns or stalls in pilot mode.

Cygnet.One’s Data Analytics & AI service combines strategy, custom development, integration, and managed AI operations across the full enterprise AI lifecycle.

Book a demo to discuss how Cygnet.One can support your AI roadmap and deployment priorities.