The average global cost of a data breach hit $4.88 million in 2024, according to the 2024 IBM Cost of a Data Breach Report.

A significant share of that exposure starts in a place most organizations overlook.

QA and staging systems routinely hold copies of production data, often without masking, access controls, or audit trails. That turns every unprotected test database into a compliance liability.

At the same time, QA teams depend on realistic data to catch real defects. When they work with incomplete, stale, or poorly controlled datasets, defects slip through, automation breaks, and releases get delayed for reasons that have nothing to do with the code.

Test data management (TDM) addresses both problems. It gives teams a structured way to create, secure, provision, and maintain data that is realistic enough for thorough testing and protected enough for regulatory compliance.

This guide covers what TDM involves, why it matters, its key components, common challenges, practical strategies, and how to evaluate the right tools.

What Is Test Data Management?

Test data management is the practice of creating, preparing, securing, provisioning, and maintaining the data that software testing depends on. It covers the full lifecycle of test data across functional, regression, integration, performance, and automation testing.

A mature TDM process allows QA teams to validate real-world scenarios without relying on uncontrolled production data, while also supporting regulatory compliance through techniques like data masking, anonymization, and synthetic data generation.

The data itself can take many forms, including masked production records, synthetic datasets, subsetted databases, edge-case data, role-based user profiles, and transactional histories. When TDM is handled properly, testing becomes more reliable, more repeatable, and significantly more secure.

Despite how straightforward TDM sounds in principle, many organizations underinvest in it until a compliance audit flags unmasked data in staging, or a production defect traces back to a test that ran on stale records. By that point, the cost is already real.

Why Test Data Management Matters for QA and Compliance

Test data management matters because poor data quality directly undermines software quality. When the data feeding your test suites is missing, stale, inconsistent, or unrealistic, QA teams struggle to test critical workflows, edge cases, and business rules the way they need to.

For QA teams specifically, a solid TDM process improves:

- Test coverage by providing datasets that represent the full range of user journeys, business rules, and edge cases an application needs to handle.

- Defect detection by surfacing bugs that only appear with specific data combinations, such as null values, boundary conditions, or invalid inputs.

- Automation reliability is achieved by keeping test data consistent across runs so that scripts produce stable, repeatable results instead of false failures.

- Regression testing speed is improved by making pre-validated datasets available on demand instead of requiring manual data preparation before each cycle.

- Environment readiness by ensuring that QA, staging, and performance environments have the right data loaded before testing begins.

- Release confidence by giving teams evidence that the software has been tested against realistic, production-representative scenarios.

These gains translate directly into fewer production incidents, shorter release cycles, and less time spent debugging issues that should have been caught earlier.

For compliance teams, the stakes are different but equally serious. Non-production environments frequently contain copies of production data. That means personal information, financial records, healthcare data, and business-sensitive details often sit in QA and staging systems with far less protection than the production databases they were copied from.

Without masking, anonymization, access controls, and audit trails, these environments can become a compliance risk. TDM helps organizations reduce that exposure by applying protections before data ever reaches a test system.

TDM helps organizations balance both needs at once: realistic data for better testing and protected data for safer compliance.

But TDM is not a single tool or a one-time setup. It is a set of coordinated practices, each responsible for a different stage of the data lifecycle, from identifying where sensitive data lives to controlling who can access it in a test environment.

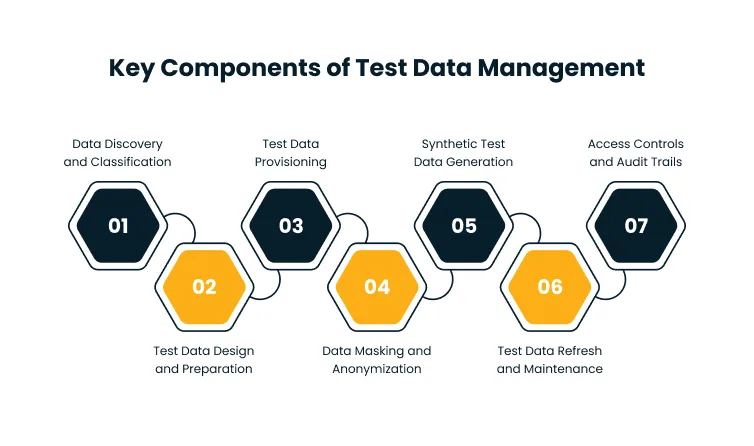

Key Components of Test Data Management

A test data management process is made up of several connected activities. Each one serves a specific purpose, and skipping any of them creates gaps that affect either testing quality, compliance posture, or both.

Together, these components form the foundation of test data governance, giving organizations a structured way to control how data is created, secured, and used across testing environments. Here is what a complete TDM process includes.

Data Discovery and Classification

The 2024 IBM Cost of a Data Breach Report found that 40% of breaches involved data stored across multiple environments, including public cloud, private cloud, and on-premises systems. When data is scattered like this, discovery and classification become important for preventing exposure in test environments.

Data discovery is where TDM starts. Before teams can provision or protect test data, they need to understand what data exists, where it lives, which systems contain sensitive information, and what data types are required for testing.

Classification takes that a step further. It helps teams separate sensitive data (like personal identifiers, financial records, and healthcare information) from non-sensitive data so they can apply the right protections to each type. Without this step, teams cannot mask, subset, or provision data effectively because they do not know what they are working with.

Test Data Design and Preparation

Test data design ensures that datasets are tied to real test scenarios, user journeys, and business rules rather than being random or ad hoc. Instead of pulling generic data and hoping it covers enough ground, QA teams prepare specific datasets for positive, negative, boundary, and edge-case testing based on what the application actually needs to validate.

Consider an insurance application. Meaningful test data for that system would include active policies, expired policies, rejected claims, high-value claims, and cases with missing customer documents. Each of those scenarios needs specific data characteristics to test properly.

Well-designed test data helps QA teams catch defects that generic datasets would miss entirely.

Test Data Provisioning

Test data provisioning is the process of delivering prepared data to the right testing environment at the right time. It helps QA, development, automation, and performance testing teams access data without waiting for manual database refreshes or one-off data requests.

Provisioning can be manual, automated, self-service, or integrated directly into CI/CD pipelines. The goal is always the same: make test data available quickly and consistently so that testing does not stall while teams wait for environments to be ready.

Data Masking and Anonymization

Data masking and anonymization protect sensitive production data before it reaches test environments. Masking replaces real values with realistic but safe alternatives, while anonymization removes or transforms identifiers so individuals cannot be recognized.

For example, real customer names, phone numbers, bank account details, and Social Security numbers get replaced with values that preserve the data’s structure and format without exposing actual identities. The masked data still behaves like production data in tests, but it carries none of the compliance risk.

This is one of the most important TDM components for regulated industries like banking, healthcare, insurance, and finance.

Synthetic Test Data Generation

Synthetic test data is artificially generated data that mimics real-world patterns without using actual production records. It is especially useful when teams need privacy-safe data, rare test scenarios, or large data volumes for automation and performance testing.

Synthetic data can help QA teams test situations that may not appear often in production, such as failed payments, unusual user behavior patterns, invalid inputs, or high-risk transaction sequences. It also eliminates the dependency on production data entirely for certain test types, which simplifies both provisioning and compliance.

Test Data Refresh and Maintenance

Test data has a shelf life. Over time, test environments accumulate duplicate, outdated, and inconsistent records that reduce testing accuracy and cause automation failures.

Regular refresh and maintenance help teams remove obsolete records, reset data after test runs, keep automation scripts stable, maintain consistency across environments, and reduce false test failures. Without a defined refresh cadence, QA teams spend more time troubleshooting data issues than validating application behavior.

Access Controls and Audit Trails

Access controls define who can view, modify, provision, or approve test data. Audit trails record how data is accessed, changed, and used across environments.

Together, these controls reduce unauthorized access, support compliance reviews, and create accountability across QA, development, DevOps, security, and compliance teams. They are especially important in organizations subject to regulations like GDPR, HIPAA, PCI-DSS, and SOX, where the ability to demonstrate data handling practices is a regulatory requirement.

On paper, these components are straightforward. In practice, test data responsibilities are often split across QA, DevOps, security, and database teams that operate on different timelines and priorities, which is where most TDM efforts start to break down.

Common Test Data Management Challenges

Many organizations struggle with test data because it sits across multiple systems, environments, and owners. Without a structured TDM process, QA teams often spend more time finding or fixing data than actually testing the application.

The most common challenges include:

- Lack of realistic test data. QA teams may not have data that reflects real business workflows, user behavior, or edge cases. Generic datasets miss the scenarios that matter most.

- Sensitive data exposure in test environments. Production data gets copied into QA or staging without masking or access controls, creating compliance risk that many teams are not even aware of.

- Slow or inconsistent test data provisioning. Testers wait days for data requests, database refreshes, or environment setup. This bottleneck delays testing cycles and pushes back releases.

- Complex data dependencies across systems. Enterprise applications often depend on connected systems like ERP, CRM, payment gateways, APIs, and legacy databases. Getting test data right across all of them is a coordination challenge.

- Stale or duplicate data. Old or reused datasets cause unreliable results and automation failures that are difficult to diagnose.

- Limited ownership and accountability. No clear team is responsible for maintaining, securing, and refreshing test data. Without defined ownership, data quality degrades over time.

These challenges slow down testing, increase compliance risk, and reduce confidence in the software being released.

The most effective TDM programs do not try to solve all of these at once. They start with the highest-risk gaps, like unmasked production data in QA or manual provisioning bottlenecks, and expand from there as processes mature.

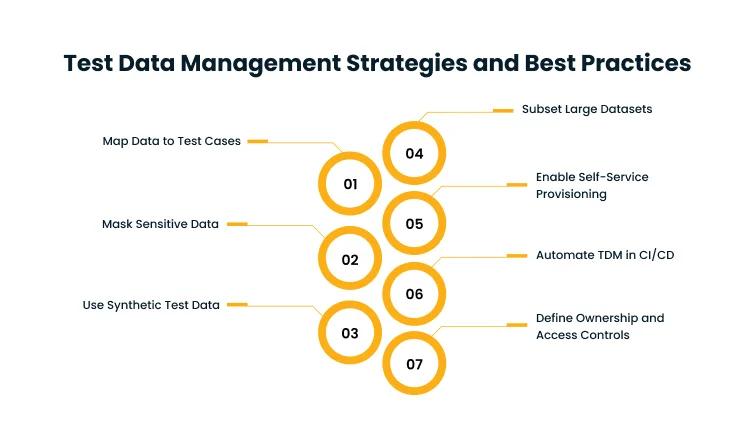

Test Data Management Strategies and Best Practices

Effective test data management requires both the right strategy and disciplined execution. The goal is to make test data realistic enough for QA, secure enough for compliance, and available enough to keep up with modern delivery speeds.

Whether teams build these capabilities internally or rely on dedicated TDM solutions, the following practices consistently make the biggest difference.

Map Test Data to Test Cases and Business Rules

Test data should be planned around specific test scenarios, not generated randomly. Each dataset should support a particular user journey, business rule, validation, or edge case.

For a payment application, that means including test data for successful payments, failed payments, refunds, expired cards, duplicate transactions, and high-value transactions. A clear mapping should define the test scenario, required data fields, data source, sensitivity level, preparation method, and expected result.

This kind of deliberate data design is what separates thorough testing from testing that looks thorough but misses critical paths.

Use Data Masking for Sensitive Production Data

When QA teams need realistic, production-like data but cannot expose sensitive values, data masking is the right approach. Masking preserves the format and behavior of the data while replacing confidential details with safe alternatives.

This is particularly important for regulated industries like banking, healthcare, insurance, retail, and finance, where test environments frequently contain personal or transactional information that falls under data protection regulations.

Use Synthetic Data for Controlled Test Scenarios

Synthetic data works best when teams need privacy-safe, controlled, or hard-to-find test scenarios. It is useful for testing new features, edge cases, negative scenarios, and large-scale automation runs.

It is especially valuable when production data is unavailable, restricted, incomplete, or too risky to use. Synthetic data generation tools can create large volumes of structurally realistic data without any dependency on actual production records.

Use Data Subsetting to Reduce Test Data Volume

Data subsetting creates smaller, representative datasets from larger databases. Instead of copying an entire production database into a test environment, teams extract only the data needed for specific tests.

This reduces storage costs, improves provisioning speed, and limits unnecessary data exposure. For organizations running hundreds of test environments, subsetting can also significantly reduce infrastructure spend.

Enable Self-Service Test Data Provisioning

Self-service provisioning allows QA and development teams to request or generate test data without filing tickets or waiting for database teams to process manual requests.

This improves testing speed and helps teams run regression, automation, and integration tests more efficiently. Strong access controls should still govern the process to prevent misuse, but the provisioning itself should not be a bottleneck.

Automate Test Data Management in CI/CD Pipelines

Automating TDM within CI/CD pipelines ensures that test data is created, provisioned, validated, and reset as part of every build and deployment cycle. This is especially important for automated regression testing, API testing, integration testing, and performance testing.

Automation removes the manual effort of preparing data before each test run and makes test execution more repeatable and consistent. For teams practicing continuous delivery, this is not optional. It is a prerequisite for keeping testing in sync with the pace of development.

According to the 2023 Gartner Press Release on Data Quality, Gartner estimated that through 2024, 50% of organizations would adopt modern data quality solutions to better support their digital business initiatives. Test data management automation is a key part of that shift.

Define Ownership, Access Controls, and Audit Trails

Every TDM process needs clear ownership. Without it, test data quality degrades, compliance gaps go unnoticed, and provisioning becomes a recurring source of delays.

A strong ownership model includes QA owners for test data requirements, data owners for source data approval, security teams for access and masking rules, compliance teams for regulatory alignment, and DevOps teams for provisioning workflows.

When everyone knows their role, test data management runs as a coordinated process rather than a series of ad hoc requests.

These strategies work at a small scale with manual effort, but they break down quickly as environments multiply, teams grow, and compliance requirements tighten. That is where purpose-built tooling becomes necessary.

TDM Tools and Selection Criteria

TDM tools help teams automate and manage activities like data discovery, masking, synthetic data generation, provisioning, subsetting, and audit tracking. The right tool depends on the organization’s testing maturity, compliance needs, system complexity, and automation goals.

Common TDM tool capabilities include:

- Data discovery and classification to scan connected systems, identify where sensitive data lives, and tag it by type and risk level before any provisioning happens.

- Data masking and anonymization to replace sensitive values with realistic alternatives that preserve data structure without exposing real identities or records.

- Synthetic test data generation to create privacy-safe datasets that simulate production patterns, including edge cases and high-volume scenarios that real data may not cover.

- Test data provisioning to deliver prepared datasets to the right environment at the right time, either through manual requests, self-service portals, or automated pipelines.

- Data subsetting to extract smaller, representative slices of production databases so teams avoid copying entire datasets when only a fraction is needed for testing.

- CI/CD integration to embed data preparation, validation, and cleanup directly into build and deployment workflows so test data is always in sync with the release cycle.

- Role-based access control and audit logging to restrict who can view or modify test data and maintain a record of every access event for compliance reporting.

- Support for cloud, on-premises, and hybrid environments to work across the mix of infrastructure most enterprise teams operate in today.

When evaluating TDM tools, teams should consider:

- Data source compatibility. Does the tool connect to your databases, cloud platforms, APIs, and legacy systems without requiring custom adapters for each one?

- Pre-QA data protection. Can it mask or anonymize production data before it ever reaches a test environment, not just after provisioning?

- Technique coverage. Does it support masking, synthetic generation, and subsetting together, or do you need separate tools for each approach?

- On-demand provisioning. Can QA teams request or generate test data themselves, or does every dataset require a manual handoff from a database team?

- DevOps integration. Does it plug into your CI/CD pipelines, test automation frameworks, and container orchestration tools without significant custom configuration?

- Compliance readiness. Does it produce audit trails, access logs, and reporting that satisfy your regulatory requirements (GDPR, HIPAA, PCI-DSS, SOX)?

- Enterprise scale. Can it handle the volume and complexity of data across multiple applications, business units, and connected systems without performance degradation?

For organizations where quality engineering spans functional, regression, performance, and automation testing across complex enterprise applications, the TDM solution needs to match that breadth. The best tool is not the one with the most features. It is the one that fits your QA process, data privacy requirements, and compliance obligations.

Conclusion

Test data management is essential for modern software testing. QA teams need reliable, realistic data to catch defects early and keep releases on track. Compliance teams need confidence that sensitive information is not sitting unprotected in test environments. A strong TDM approach delivers both.

By combining data discovery, masking, synthetic data generation, provisioning, access controls, and clear ownership, organizations can make testing faster, safer, and more reliable. The result is better test coverage, fewer data-related delays, more stable automation, and lower compliance risk across non-production environments.

The organizations that get TDM right spend less time fighting data issues and more time validating the software their customers depend on.

Want to improve QA outcomes without increasing data privacy risk? Book a demo with Cygnet.One to assess your current test data practices, identify compliance gaps, and build a practical TDM approach that supports secure, reliable testing at scale.

Test Data Management FAQs

The main purpose of test data management is to provide accurate, secure, and usable data for software testing. It helps QA teams test applications effectively using realistic datasets while protecting sensitive information through masking, anonymization, and access controls. TDM ensures that the right data is available in the right environment at the right time.

TDM supports QA by improving test coverage, reducing data-related test failures, enabling repeatable automation, and helping teams validate real-world scenarios more accurately. When test data is well-managed, QA teams spend less time troubleshooting data issues and more time testing application behavior.

TDM supports compliance by reducing the use of exposed production data in test environments. It applies practices like data masking, anonymization, synthetic data generation, access controls, and audit trails to ensure that sensitive information stays protected throughout the testing lifecycle.

Test data provisioning is the process of delivering prepared test data to QA, staging, automation, or performance testing environments when needed. It can be manual, automated, or self-service, and the goal is to eliminate delays caused by waiting for data access or environment setup.

Synthetic test data is artificially generated data that looks and behaves like real production data but does not contain actual records or sensitive personal information. It is useful for privacy-safe testing, edge-case scenarios, and high-volume automation runs where production data is unavailable or restricted.

Test data management is the broader process of managing all aspects of data used for testing, from discovery and design through provisioning and maintenance. Data masking is one technique within TDM that replaces sensitive values with safe alternatives before data is used in test environments.