Most infrastructure failures are not sudden. They accumulate from monitoring gaps that were tolerated, patch cycles that slipped, and capacity planning deferred to the next quarter. By the time a system goes down, the conditions that caused the failure have typically existed for weeks.

This pattern repeats across enterprises that have grown their IT footprint faster than their management practices could keep up. New cloud environments were added without governance. On-premise systems were extended without documentation. Security controls were implemented inconsistently as the stack grew more complex.

The infrastructure holds together until a single unmonitored dependency breaks the chain. IT Infrastructure Management is the discipline that addresses this systematically. It introduces structured monitoring, operational processes, security controls, and capacity planning to keep complex enterprise systems stable and aligned with business objectives.

This blog examines the infrastructure models organizations operate today, the management functions that keep them running, and the measurable outcomes that structured infrastructure management delivers.

What is IT Infrastructure Management?

IT Infrastructure Management is the process of administering, monitoring, and maintaining an organization’s core technology systems, including hardware, software, networks, and data storage.

Its purpose is to ensure that every technology component operates efficiently, remains secure, and can scale with organizational needs. This includes system monitoring, performance tuning, incident management, patch administration, and capacity planning.

Rather than responding to failures after they occur, IT Infrastructure Management takes a structured, proactive approach to keeping enterprise systems stable and aligned with business goals.

For mid-to-large enterprises, it represents the operational foundation that every other digital capability, from application delivery and cloud workloads to data pipelines and security systems, depends on to function reliably.

Why is IT Infrastructure Management critical for modern enterprises?

Modern enterprises run on continuous availability. A payment platform that goes down costs revenue by the minute. A manufacturing system that loses connectivity stops production lines.

The tolerance for downtime has reached near zero across industries, and that pressure lands squarely on IT infrastructure teams.

Availability is the most visible pressure, but three other forces make IT Infrastructure Management equally critical.

First, digital transformation is compressing timelines. Organizations are deploying new cloud workloads, onboarding SaaS platforms, and integrating AI capabilities faster than most IT teams were built to handle. Without structured infrastructure management, new deployments create unmonitored blind spots.

Systems go unowned, performance goes untracked, and security gaps compound over time.

Second, the attack surface has expanded significantly. Remote work, cloud adoption, and third-party integrations have introduced more entry points for attackers. Infrastructure management is the mechanism through which organizations enforce access controls, apply patches consistently, and detect anomalies before they escalate.

Third, cost efficiency has become a board-level concern. Cloud sprawl, idle compute resources, and over-provisioned storage quietly inflate IT budgets. Without visibility into how infrastructure is being utilized, organizations cannot optimize spend or make informed procurement decisions.

Organizations that approach infrastructure management as a strategic discipline rather than a maintenance function scale faster, recover more quickly, and spend less on corrective firefighting.

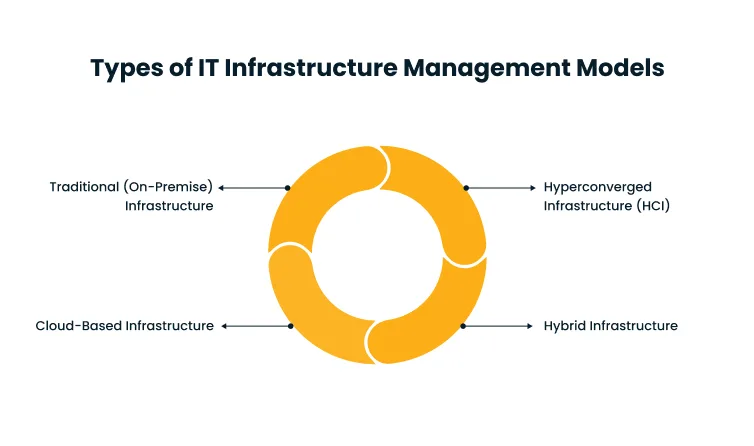

Types of IT Infrastructure Management Models

Organizations do not arrive at an infrastructure model by default. The choice is shaped by operational complexity, regulatory requirements, budget tolerance, and the pace at which the business needs to scale.

Each model carries distinct trade-offs, and for many large enterprises, the real question is not which model to choose but how to combine them intelligently as requirements evolve over time.

1. Traditional (On-Premise) Infrastructure

On-premise infrastructure means all computing resources, including servers, storage, and networking equipment, are physically located within the organization’s facilities and managed by its internal IT team.

The organization owns the hardware, controls the software stack, manages the network, and bears full responsibility for maintenance, security, and hardware refresh cycles.

The primary advantage is control. For industries with strict data sovereignty requirements, such as financial services, healthcare, and defense, keeping data on-premise can be a regulatory necessity rather than a design preference.

Organizations also avoid dependency on third-party availability and the variable costs that come with cloud billing models.

The trade-offs are real. Capital expenditure for on-premise infrastructure is high. Hardware depreciates, requires regular refresh cycles, and demands skilled personnel to maintain.

Scaling up means procuring and deploying new hardware, which introduces lead times that cloud environments largely eliminate. For enterprises with predictable, stable workloads and strong compliance requirements, on-premise infrastructure remains a defensible model.

For those prioritizing speed and flexibility, it introduces friction that compounds as the organization grows.

2. Cloud-Based Infrastructure

Cloud infrastructure shifts the physical layer to a third-party provider, with the organization consuming compute, storage, and networking as on-demand services. There is no hardware to own, no data center to operate, and no capital expenditure for baseline capacity.

The scalability case is straightforward. Cloud environments allow organizations to provision resources in minutes rather than months. A traffic spike, a new product launch, or a sudden increase in data processing load can be absorbed without pre-planned hardware procurement.

Pay-per-use billing converts infrastructure spend from a fixed cost to a variable one. According to Gartner's 2024 Study on Cloud Adoption, worldwide end-user spending on public cloud services is forecast to reach $723 billion in 2025, a 21.5% increase year-over-year.

The scale of adoption reflects how deeply cloud infrastructure has become embedded in enterprise operations globally.

The risks sit at a different layer. Cloud environments introduce dependency on provider uptime, create shared-responsibility security models that are frequently misunderstood, and can generate unexpected costs when usage is not governed properly.

Managing cloud infrastructure well requires governance frameworks, not just a billing account.

3. Hybrid Infrastructure

Hybrid infrastructure runs on-premise systems alongside cloud environments, connecting them through APIs, private networks, or purpose-built integration layers.

The underlying logic is workload placement: some data and applications belong on-premise for compliance or latency reasons, while others benefit from cloud scalability and managed services.

The operational complexity of hybrid infrastructure is higher than either model in isolation. IT teams must manage two distinct environments, maintain consistent security policies across both, and ensure that latency across the connecting layer does not degrade performance-sensitive applications.

Configuration drift between environments, where on-premise and cloud systems gradually diverge in security posture and operational standards, is one of the most common and costly problems hybrid teams encounter.
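Drift becomes manageable once it is measurable. As a minimal sketch, assume each environment's security settings can be exported as a flat key-value map and compared against a shared baseline; the setting names and the `find_drift` helper are hypothetical, not taken from any specific configuration management tool.

```python
from typing import Any


def find_drift(baseline: dict[str, Any], env: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return baseline settings where an environment differs.

    Each entry maps a setting name to (expected, actual). A setting that is
    missing from the environment is reported with actual=None. Extra settings
    that exist only in the environment are not checked.
    """
    drift = {}
    for key, expected in baseline.items():
        actual = env.get(key)
        if actual != expected:
            drift[key] = (expected, actual)
    return drift


# Hypothetical security baseline that both environments should match.
baseline = {"tls_min_version": "1.2", "ssh_password_auth": False, "log_retention_days": 90}

on_prem = {"tls_min_version": "1.2", "ssh_password_auth": True, "log_retention_days": 90}
cloud = {"tls_min_version": "1.0", "ssh_password_auth": False}

for name, env in {"on_prem": on_prem, "cloud": cloud}.items():
    for key, (expected, actual) in find_drift(baseline, env).items():
        print(f"{name}: {key} expected={expected!r} actual={actual!r}")
```

Running a check like this on a schedule, rather than only during audits, is what turns drift from a slow, invisible divergence into an actionable alert.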

Hybrid infrastructure is not a transitional state for most large enterprises. It is a permanent operating model. Legacy systems, regulatory constraints, and long-running vendor contracts keep portions of infrastructure on-premise for years, sometimes indefinitely.

Organizations that manage hybrid environments with deliberate workload governance and unified monitoring consistently outperform those that treat the two environments as independent problems.

4. Hyperconverged Infrastructure (HCI)

Hyperconverged infrastructure consolidates compute, storage, and networking into a single software-defined platform, typically running on commodity hardware. Rather than managing separate silos, IT teams operate a unified system through a single management interface.

The operational benefit is significant. Provisioning new capacity in an HCI environment is a software operation rather than a physical one. Scaling out means adding a node to the cluster rather than purchasing and integrating separate compute, storage, and networking components.

This simplification reduces the expertise required to operate the infrastructure and compresses the time from procurement to deployment considerably.

HCI is particularly suited to edge computing deployments, branch offices, and virtual desktop infrastructure environments, where dedicated hardware for each function is impractical. It is also a common starting point for organizations beginning their journey toward software-defined data centers.

The trade-off is performance density: at very large scale, purpose-built specialized hardware typically outperforms converged systems on raw throughput.

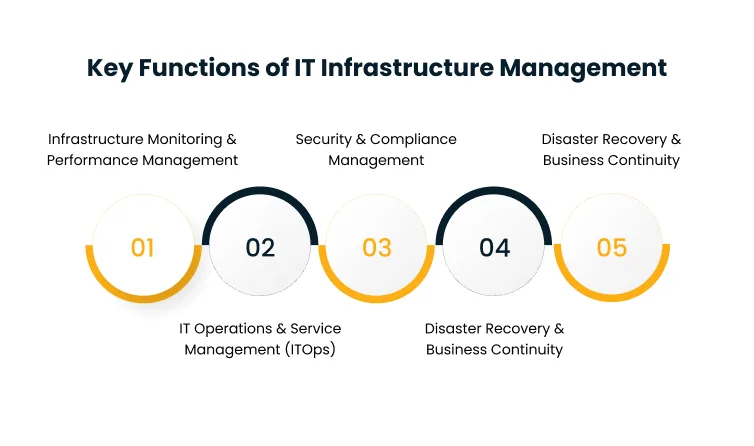

Key Functions of IT Infrastructure Management

Managing enterprise infrastructure is a collection of interconnected disciplines, each addressing a different dimension of system health, operational continuity, and organizational risk.

The functions below represent the core responsibilities that any structured IT infrastructure management practice must own, regardless of the underlying infrastructure model in use.

1. Infrastructure Monitoring and Performance Management

Monitoring is the function that gives infrastructure teams their operational awareness. Without it, performance degradation, resource saturation, and system failures remain invisible until they escalate into outages.

With it, teams can detect anomalies early, set thresholds that trigger alerts before users are affected, and accumulate the historical data needed to identify recurring failure patterns before they repeat.
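A common way to make thresholds useful is to require a sustained breach rather than a single spike, which reduces noisy alerts. The sketch below assumes a hypothetical disk-usage policy (warn when usage stays above 80% for three consecutive samples); the `ThresholdAlert` class is illustrative and not the API of any particular monitoring platform.

```python
from collections import deque


class ThresholdAlert:
    """Fire when a metric stays above a threshold for `window` consecutive samples."""

    def __init__(self, threshold: float, window: int) -> None:
        self.threshold = threshold
        # Keeps only the breach flags for the most recent `window` samples.
        self.recent = deque(maxlen=window)

    def observe(self, value: float) -> bool:
        """Record a sample; return True if every one of the last `window` samples breached."""
        self.recent.append(value > self.threshold)
        return len(self.recent) == self.recent.maxlen and all(self.recent)


# Hypothetical policy: warn when disk usage stays above 80% for 3 samples,
# well before the volume is actually full.
disk_alert = ThresholdAlert(threshold=80.0, window=3)
samples = [72.0, 85.0, 79.0, 81.0, 83.0, 86.0]
fired = [disk_alert.observe(v) for v in samples]
print(fired)  # [False, False, False, False, False, True]
```

The isolated spike to 85% does not page anyone; the sustained climb at the end does, while there is still headroom to act.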

Modern infrastructure monitoring spans more than server uptime checks. It covers application performance, network latency, storage I/O, database query times, and cloud resource utilization.

As infrastructure has grown more distributed, spanning on-premise data centers, cloud environments, and edge nodes, monitoring has evolved from agent-based tools collecting metrics from physical machines to observability platforms that correlate signals across the full stack in real time.

Performance management extends this further. Identifying a bottleneck is the first step. Determining whether it requires configuration tuning, resource scaling, or architectural change requires deeper analysis of usage patterns, historical baselines, and dependency maps.

Teams that treat monitoring as a detection mechanism and performance management as a diagnostic discipline build infrastructure that degrades gracefully rather than failing suddenly.

Cygnet.One’s infrastructure management service delivers 24/7 monitoring and AI-driven optimization across data center and multi-cloud environments, enabling IT teams to shift from reactive incident response to continuous operational visibility.

2. IT Operations and Service Management (ITOps)

IT Operations covers the daily execution layer of infrastructure management. This includes provisioning environments, responding to incidents, managing change, maintaining system configurations, and keeping service delivery aligned with SLA commitments.

It is the function most visible to the rest of the organization. When ITOps runs well, systems are available, tickets are resolved promptly, and changes are deployed without disrupting business processes.

The shift from reactive to proactive ITOps is where the real efficiency gains live. Reactive teams spend the majority of their time on incident response, chasing alerts, coordinating response calls, and applying fixes under pressure.

Proactive teams invest in automation, documented runbooks, and configuration management tooling that prevent common incidents from occurring or resolve them automatically before a human is paged.

ITSM frameworks, with ITIL being the most widely adopted, give ITOps teams a structured model for incident management, problem management, change management, and service request fulfillment.

These frameworks do not eliminate complexity, but they introduce process consistency that makes complexity manageable at scale. Organizations that align their ITOps practices with a mature ITSM framework typically see lower mean time to resolution (MTTR) and higher change success rates over time.
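Both metrics are simple to compute once incidents and changes are recorded consistently. As a minimal sketch, assume a hypothetical ticket export with an opened and resolved timestamp per incident, plus a success flag per change (a change counts as failed if it caused an incident or rollback):

```python
from datetime import datetime, timedelta


def mean_time_to_resolution(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (resolved - opened) across resolved incidents."""
    total = sum((resolved - opened for opened, resolved in incidents), timedelta())
    return total / len(incidents)


def change_success_rate(outcomes: list[bool]) -> float:
    """Fraction of changes that completed without causing an incident or rollback."""
    return sum(outcomes) / len(outcomes)


# Hypothetical ticket export: (opened, resolved) for three incidents.
incidents = [
    (datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 10, 30)),   # 90 min
    (datetime(2024, 5, 3, 14, 0), datetime(2024, 5, 3, 14, 45)),  # 45 min
    (datetime(2024, 5, 7, 22, 0), datetime(2024, 5, 8, 0, 15)),   # 135 min
]
changes = [True, True, False, True, True, True, True, True, False, True]

print(mean_time_to_resolution(incidents))     # 1:30:00
print(f"{change_success_rate(changes):.0%}")  # 80%
```

Tracking these figures month over month is what makes the effect of process improvements visible rather than anecdotal.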

3. Security and Compliance Management

Infrastructure is the primary target of most enterprise cyberattacks. Misconfigurations in cloud storage, unpatched server vulnerabilities, excessive access permissions, and unmonitored endpoints are among the most exploited vectors.

Security management is not a separate layer added on top of infrastructure operations. It is embedded in every layer of them and must be treated that way.

This means patch management must be systematic rather than ad hoc. Access controls must follow least-privilege principles, enforced through identity and access management tooling. Network segmentation must limit lateral movement in the event of a breach.

Security monitoring must surface anomalous behavior before it becomes a confirmed incident rather than after.
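Systematic patch management starts with a measurable policy. As a minimal sketch, assume a hypothetical inventory that records, for each host, the release date of the oldest critical patch it has not yet installed, and a hypothetical 14-day remediation window; `overdue_hosts` and the host names are illustrative, not drawn from any specific patching tool.

```python
from datetime import date, timedelta

# Hypothetical policy: critical patches must be applied within 14 days of release.
CRITICAL_PATCH_WINDOW = timedelta(days=14)


def overdue_hosts(pending: dict[str, date], today: date) -> list[str]:
    """Return hosts whose oldest unapplied critical patch is past the policy window.

    `pending` maps hostname -> release date of the oldest critical patch not yet
    installed on that host. Fully patched hosts are simply absent from the map.
    """
    return sorted(
        host for host, released in pending.items()
        if today - released > CRITICAL_PATCH_WINDOW
    )


pending = {
    "db-01": date(2024, 6, 1),
    "web-02": date(2024, 6, 20),
    "app-03": date(2024, 5, 15),
}
print(overdue_hosts(pending, today=date(2024, 6, 25)))  # ['app-03', 'db-01']
```

A report like this turns "patch regularly" into a list of named hosts with a deadline, which is what separates a systematic process from an ad hoc one.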

According to IBM's Cost of a Data Breach Report 2024, 40% of breaches involved data stored across multiple environments, including public cloud, private cloud, and on-premise systems.

These breaches cost more than $5 million on average and took 283 days to identify and contain. For enterprises managing hybrid infrastructure, this data underscores how a security gap in any single environment exposes the entire organization.

Compliance adds a second dimension. Regulated industries operate under frameworks such as PCI DSS, HIPAA, and ISO 27001 that mandate specific controls, audit trails, and reporting practices.

Infrastructure must be configured to meet those requirements, and that configuration must be demonstrably maintained over time. Cygnet.One’s Cybersecurity services cover identity and access management, application security, data protection, and real-time threat detection, helping enterprises maintain a consistent security posture across complex, multi-environment infrastructure.

4. Disaster Recovery and Business Continuity

Disaster recovery addresses a practical question: if a system fails, how quickly can operations resume, and how much data is lost in the process? Two metrics govern this.

Recovery Time Objective (RTO) is the maximum acceptable time before a system must be restored. Recovery Point Objective (RPO) is the maximum acceptable amount of data loss measured in time.

These two numbers define what recovery actually means for a given system and must be agreed upon by IT and business stakeholders before a disaster, not during one.
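RTO and RPO can be checked against how a system is actually protected. As a minimal sketch, assume periodic backups that are usable the moment they complete, so the worst-case data loss equals the interval between backups, and assume the restore time comes from the most recent recovery test. The `RecoveryPlan` class and the figures are hypothetical.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class RecoveryPlan:
    backup_interval: timedelta   # time between successful backups
    measured_restore: timedelta  # restore time observed in the last recovery test


def meets_objectives(plan: RecoveryPlan, rto: timedelta, rpo: timedelta) -> dict[str, bool]:
    # Worst case: failure strikes just before the next backup, so everything
    # written since the previous backup is lost (assumes backups are usable
    # the moment they complete).
    worst_case_loss = plan.backup_interval
    return {
        "rpo_met": worst_case_loss <= rpo,
        "rto_met": plan.measured_restore <= rto,
    }


# Hypothetical system: nightly backups, 3-hour restore in the last recovery test.
plan = RecoveryPlan(backup_interval=timedelta(hours=24), measured_restore=timedelta(hours=3))
print(meets_objectives(plan, rto=timedelta(hours=4), rpo=timedelta(hours=1)))
# {'rpo_met': False, 'rto_met': True}
```

Under these assumptions, a nightly backup cannot satisfy a one-hour RPO no matter how fast the restore is; meeting it would require more frequent backups or continuous replication.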

Achieving low RTO and RPO requires investment in redundancy: geographically distributed backup systems, automated failover mechanisms, and regularly tested recovery procedures.

Testing is the element organizations most frequently neglect. A recovery plan that has never been executed under realistic conditions is not a recovery plan. It is a document that may or may not hold together when it is most needed.

Business continuity extends this further to non-technical scenarios.

- What happens if a data center is physically unavailable?

- If key personnel are unreachable?

- If a supply chain disruption delays hardware replacement?

Organizations that treat business continuity as an IT problem alone typically under-prepare for the human and operational dimensions of recovery. Effective programs integrate infrastructure planning with broader organizational resilience frameworks that account for every dependency recovery relies on.

5. Capacity Planning and Scalability

Capacity planning ensures infrastructure has enough resources to handle current workloads while staying ahead of growth. Done well, it heads off two costly failure modes. Under-provisioning causes performance degradation under load. Over-provisioning wastes capital on idle resources that could be directed elsewhere.

The discipline has grown more complex with the adoption of cloud and hybrid environments. On-premise capacity planning was largely a hardware procurement cycle: forecast demand, buy servers, deploy. Cloud capacity planning requires continuous governance of resource consumption across multiple services, regions, and accounts.
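The forecasting step can start very simply. As a minimal sketch, the function below fits a least-squares straight line to hypothetical monthly peak utilization figures and estimates how many months remain before a planning threshold is crossed. Real workloads often have seasonality that a linear trend ignores, so treat this as an illustration of the idea rather than a forecasting method to rely on; `months_until_threshold` is a hypothetical helper.

```python
from typing import Optional


def months_until_threshold(usage: list[float], threshold: float) -> Optional[float]:
    """Fit a least-squares line to monthly utilization (%) and estimate how many
    months after the latest sample the trend crosses `threshold`.

    Requires at least two samples. Returns None if the trend is flat or
    declining, and 0.0 if the fitted trend is already past the threshold.
    """
    n = len(usage)
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(usage) / n
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, usage)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing_x = (threshold - intercept) / slope
    return max(0.0, crossing_x - (n - 1))


# Hypothetical monthly peak CPU utilization for a cluster, in percent.
usage = [52.0, 55.0, 58.0, 61.0, 64.0, 67.0]
months = months_until_threshold(usage, threshold=80.0)
print(f"{months:.1f} months until 80%")  # 4.3 months until 80%
```

If procurement or re-architecture lead times are longer than the headroom this reports, the plan is already behind.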

Auto-scaling helps absorb peak demand, but it does not replace intentional planning. Resources that scale up automatically without cost governance can produce end-of-month billing figures that negate the efficiency gains cloud environments are supposed to deliver.

Capacity planning is operational; scalability planning is forward-looking. Scalability planning asks whether the current infrastructure architecture can support the next order of magnitude of growth, not just next quarter’s workload increase.

Organizations expanding into new markets, launching digital products, or adopting AI workloads need to evaluate whether their infrastructure foundation is built to scale or will require re-architecture before growth targets become achievable.

Benefits of IT Infrastructure Management

The business case for investing in structured IT infrastructure management becomes clearest when organizations examine what poor infrastructure management actually costs.

Unplanned downtime, security incidents, compliance failures, and engineering teams stuck maintaining unstable systems rather than building new capabilities represent the real price of neglect.

The benefits below reflect what organizations gain when infrastructure management is executed with discipline and consistency.

1. Improved Uptime and Reliability

Proactive monitoring catches performance degradation before it escalates to outages. Automated failover mechanisms reduce recovery time when failures do occur.

Systematic patch management closes vulnerabilities before they are exploited in ways that cause downtime. Together, these practices raise infrastructure availability from a reactive outcome to an engineered one.

For enterprises operating customer-facing digital services, every percentage point of uptime improvement carries a measurable revenue impact. For internal operations, reliability means employees and business processes can depend on systems behaving consistently, which reduces the friction and overhead that accumulate when IT becomes a bottleneck rather than an enabler.
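Converting an availability target into a yearly downtime budget makes the stakes concrete. This sketch assumes a 365-day year and ignores planned maintenance windows:

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def annual_downtime_hours(availability_pct: float) -> float:
    """Maximum downtime per year permitted by an availability target."""
    return HOURS_PER_YEAR * (1 - availability_pct / 100)


for target in (99.0, 99.9, 99.99):
    print(f"{target}% -> {annual_downtime_hours(target):.2f} h/year")
# 99.0% -> 87.60 h/year
# 99.9% -> 8.76 h/year
# 99.99% -> 0.88 h/year
```

Moving from 99% to 99.9% availability cuts the annual downtime budget from roughly 87.6 hours to under 9 hours.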

The distinction between organizations with high uptime and those that struggle is rarely technology. It is discipline. The tools for achieving reliability are widely available. The difference lies in whether infrastructure management practices are consistently applied and continuously improved, or treated as a checklist to revisit only when something breaks.

2. Better Cost Control

Unmanaged infrastructure generates waste in predictable ways. Servers run at low utilization because provisioning was done conservatively and never revisited. Cloud resources are spun up for projects and never decommissioned.

Software licenses are renewed automatically without validating whether the seats are still in active use.

IT infrastructure management creates the visibility needed to identify and eliminate these inefficiencies. Regular utilization reviews surface over-provisioned resources. Cloud tagging and cost allocation practices make it possible to attribute spend to business units and create accountability for what they consume.

License management programs ensure organizations pay for what they actually use. This is not about cutting IT budgets. It is about deploying them precisely.
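Tag-based allocation and utilization reviews can run over the same billing data. As a minimal sketch, assume a hypothetical billing export with a monthly cost, a tag map, and average CPU utilization per resource, a hypothetical `business-unit` tag key, and a hypothetical 10% rightsizing cutoff:

```python
from collections import defaultdict

# Hypothetical billing export: (resource_id, monthly_cost_usd, tags, avg_cpu_pct)
resources = [
    ("vm-101", 420.0, {"business-unit": "payments"}, 61.0),
    ("vm-102", 380.0, {"business-unit": "payments"}, 4.0),
    ("vm-201", 250.0, {"business-unit": "analytics"}, 35.0),
    ("vm-999", 300.0, {}, 2.0),  # untagged, possibly orphaned
]

LOW_UTILIZATION_PCT = 10.0  # hypothetical rightsizing cutoff


def allocate(rows: list[tuple[str, float, dict[str, str], float]]) -> tuple[dict[str, float], list[str]]:
    """Sum spend per business unit and list resources below the utilization cutoff."""
    spend: dict[str, float] = defaultdict(float)
    idle: list[str] = []
    for resource_id, cost, tags, cpu in rows:
        # Untagged spend is grouped separately so it can be traced to an owner.
        spend[tags.get("business-unit", "UNTAGGED")] += cost
        if cpu < LOW_UTILIZATION_PCT:
            idle.append(resource_id)
    return dict(spend), idle


spend, idle = allocate(resources)
print(spend)  # {'payments': 800.0, 'analytics': 250.0, 'UNTAGGED': 300.0}
print(idle)   # ['vm-102', 'vm-999']
```

The untagged bucket is often the most useful output: spend that no business unit claims is the first place to look for resources that were never decommissioned.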

Organizations with mature infrastructure cost management practices can typically absorb workload growth without proportional budget increases, freeing resources to invest in new capabilities rather than maintaining existing excess capacity.

3. Enhanced Security

A well-managed infrastructure reduces the attack surface through consistency. Every endpoint is patched on schedule. Access privileges are reviewed and revoked when no longer needed.

Security monitoring covers the full stack rather than leaving blind spots at the perimeter. Compliance controls are enforced programmatically rather than through manual processes that degrade over time.
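Reviewing and revoking access can be driven by usage data rather than memory. As a minimal sketch, assume a hypothetical export of role grants with the date each grant was last used, and a hypothetical 90-day policy under which unused or never-used grants become revocation candidates:

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

STALE_AFTER = timedelta(days=90)  # hypothetical review policy


@dataclass
class Grant:
    principal: str
    role: str
    last_used: Optional[date]  # None means the grant has never been used


def stale_grants(grants: list[Grant], today: date) -> list[Grant]:
    """Grants never used, or unused for longer than STALE_AFTER, are revocation candidates."""
    return [
        g for g in grants
        if g.last_used is None or today - g.last_used > STALE_AFTER
    ]


grants = [
    Grant("alice", "db-admin", date(2024, 6, 1)),
    Grant("bob", "db-admin", date(2024, 1, 15)),
    Grant("ci-bot", "deploy", None),
]
for g in stale_grants(grants, today=date(2024, 6, 30)):
    print(f"review: {g.principal} -> {g.role}")
```

The output is a review queue, not an automatic revocation: a human still confirms each removal, but nobody has to remember which access was granted months ago.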

Most enterprise breaches do not involve novel attack techniques. They involve known vulnerabilities that were not patched, credentials that were not rotated, and access that was never revoked.

IT infrastructure management closes those gaps systematically rather than on a case-by-case basis. For regulated industries, consistent infrastructure security management also reduces the burden of audits.

When controls are automated and documented, demonstrating compliance becomes a reporting exercise rather than a remediation sprint in the weeks before an audit date.

4. Faster Innovation

Infrastructure is either a constraint on innovation or an enabler of it. Organizations with well-managed, modular infrastructure can provision new environments quickly, run experiments without risking production stability, and integrate new technologies into existing systems with predictable results.

Those with fragile, poorly documented infrastructure spend engineering cycles on maintenance rather than new capabilities.

The connection between infrastructure maturity and deployment velocity is most visible in software delivery. Organizations that have invested in infrastructure as code, automated provisioning, and continuous delivery pipelines deploy to production far more frequently and with significantly lower failure rates.

The infrastructure layer becomes predictable enough that development teams can move fast without requiring IT sign-off at every step.

This is where managed infrastructure shifts from an operational concern to a strategic one. The speed at which an organization can test, deploy, and scale new capabilities is shaped as much by infrastructure management decisions as by the technologies it chooses to adopt.

Conclusion

IT infrastructure is the operational layer that determines how fast an organization can move, how resilient it is under pressure, and how much of its technology generates forward value rather than just sustaining the current state.

Organizations that treat infrastructure management as a discipline, investing in monitoring, automating operations, enforcing security systematically, and planning capacity ahead of demand, build a compounding advantage over time.

Their systems are more available, their teams spend less time firefighting, and their infrastructure becomes a platform for growth rather than a source of operational drag.

The challenge for most enterprises is building or sourcing the operational depth to execute it consistently across increasingly complex, hybrid environments. The gap between intent and execution is where the real strategic risk accumulates.

If your infrastructure management practice is stretched across siloed tools, manual processes, and reactive response cycles, Cygnet.One’s IT Managed Services team can help you build the operational discipline to change that.

From 24/7 monitoring to proactive incident management and compliance governance, we work with enterprise IT teams to stabilize and optimize the infrastructure their business depends on.

Book a consultation with Cygnet.One’s Managed IT team.

Frequently Asked Questions

What are the main components of IT infrastructure?

IT infrastructure includes hardware such as servers, storage devices, and networking equipment; software covering operating systems, middleware, and applications; data storage systems; and cloud services. Networks connecting these components, including LAN, WAN, and cloud connectivity, are also part of the stack. Together, these elements form the technology foundation that all business operations and digital services run on.

What is the role of IT infrastructure management?

The role of IT infrastructure management is to ensure all technology systems operate efficiently, securely, and in alignment with business goals. This covers system monitoring, incident response, patch management, capacity planning, security enforcement, and disaster recovery planning. In practice, it is what keeps infrastructure from becoming either a source of operational risk or a constraint on the organization’s ability to grow.

What tools are used for IT infrastructure management?

Common categories include infrastructure monitoring platforms such as Datadog, Dynatrace, and Nagios, configuration management tools such as Ansible, Puppet, and Chef, and IT service management platforms such as ServiceNow and Jira Service Management. Cloud management consoles and SIEM systems for security monitoring also play central roles. Most enterprises use a combination of tools across different layers of their infrastructure stack.

How is IT infrastructure management different from IT operations?

IT operations focuses on the day-to-day execution layer, including managing incidents, processing service requests, deploying changes, and meeting SLA commitments. IT infrastructure management is broader, covering strategic planning, architecture decisions, capacity governance, and the long-term maintenance practices that determine how infrastructure is built and kept healthy. IT operations is one function within that larger discipline.

Why is scalability important in IT infrastructure?

Scalability determines whether infrastructure can absorb growth in users, data volume, transaction load, or geographic reach without requiring a full re-architecture. Systems built without scalability in mind force expensive, disruptive changes precisely when business momentum makes disruption most costly. Planning for scalability from the outset is significantly cheaper than retrofitting it under pressure.