Three months into a strategic analytics initiative, a retail enterprise discovered that 40% of the data feeding its dashboards was arriving with a 72-hour delay. The pipelines existed. The tools were modern. The architecture was an afterthought, built to move data but not to move it reliably at scale.

Data pipeline architecture is the structural foundation that determines whether an organization’s data systems can support the decisions its business needs to make. When that foundation is weak, everything built on top of it (reports, ML models, real-time applications) inherits the same fragility.

As data volumes grow and use cases diversify, the gap between pipelines built quickly and pipelines designed intentionally becomes increasingly difficult to close. Scalable data pipelines don’t emerge from accumulated tooling choices.

They result from deliberate architectural decisions like how data is ingested, how quality is enforced, how workloads are orchestrated, and how governance is embedded.

This guide examines what data pipeline architecture actually involves, why the design decisions at each stage matter, and how to approach building pipelines that perform at scale.

What is data pipeline architecture?

Data pipeline architecture is the structured design of systems that collect, process, and move data from multiple sources to storage or analytics platforms. It ensures raw data is transformed into usable insights through automated, scalable data workflows.

At its core, it involves three interconnected stages:

- Data ingestion: Collecting from diverse sources, including databases, APIs, event streams, and files

- Data transformation and processing: Cleaning, enriching, and structuring raw data into formats fit for consumption

- Storage and delivery: Persisting data in systems designed for the queries and use cases that depend on it

The architecture defines how the data moves reliably at scale, accounting for failures, schema changes, volume spikes, and latency requirements. Unlike ad hoc data movement scripts, a well-defined architecture treats data as a product with contracts, quality standards, and governance embedded from the start.

Why data pipeline architecture matters

Data pipeline architecture determines how efficiently, reliably, and securely data flows across an organization. A well-designed architecture ensures that data is always available, trustworthy, and ready for analysis, which directly impacts business performance and decision-making.

1. Enables Data-Driven Decision Making

Analytics is only as reliable as the data feeding it. When pipelines are poorly architected, stitched together with brittle scripts and manual handoffs, the data reaching dashboards and reports is often stale, incomplete, or inconsistent.

A well-designed architecture ensures that accurate, timely data is available when decisions need to be made, without requiring engineering intervention for every refresh cycle.

2. Supports Scalable Data Pipelines

Most organizations don’t build for scale until scale is already a problem, and by then, rearchitecting under load is expensive and risky.

Scalable data pipelines are built with distributed processing, partitioned storage, and modular pipeline components that allow individual stages to scale independently as demand grows. The result is a system that handles increasing data volumes and velocity without requiring a full rebuild.

3. Improves Data Reliability and Quality

Data quality degrades at every handoff point. Without schema validation, deduplication logic, and error handling embedded in the pipeline, bad data propagates downstream and surfaces as incorrect analytics, failed ML models, or compliance violations.

Structured pipelines enforce quality contracts at each stage, catching anomalies before they compound into broader problems. For organizations managing data across fragmented systems, embedding quality management at the pipeline level is what separates consistent analytics from unpredictable outcomes.

Cygnet.One’s data engineering and management practice integrates data quality management into pipeline architecture design, ensuring datasets contribute to trustworthy and consistent business outcomes.

4. Enhances Data Accessibility Across Teams

When data engineering and analytics operate on separate, disconnected systems, the result is duplicated effort, inconsistent metric definitions, and constant bottlenecks.

Centralized pipeline architecture creates a shared data layer, where engineering, analytics, and business teams work from the same source of truth. This removes the friction between data production and data consumption, and reduces the time data teams spend fielding questions about why numbers don’t match across reports.

5. Ensures Data Governance and Compliance

Regulations like GDPR and HIPAA don’t just require that data be protected. They require that organizations demonstrate how data moves, who accessed it, and under what conditions.

Pipeline architecture that treats governance as an afterthought creates audit risk. Architectures that enforce access controls, data lineage tracking, and retention policies at the infrastructure level make compliance a structural property rather than a manual process.

According to a 2024 Gartner Study on Data and Analytics Governance, 80% of data and analytics governance initiatives will fail by 2027, largely because organizations treat governance as a reactive process rather than a structural one.

Building governance into pipeline infrastructure, rather than layering it on afterward, is also significantly more cost-effective. Cygnet.One’s data engineering and management service delivers governance frameworks built around accuracy, compliance, and role-based accountability, with end-to-end control over data lineage, archival policies, and integration reliability.

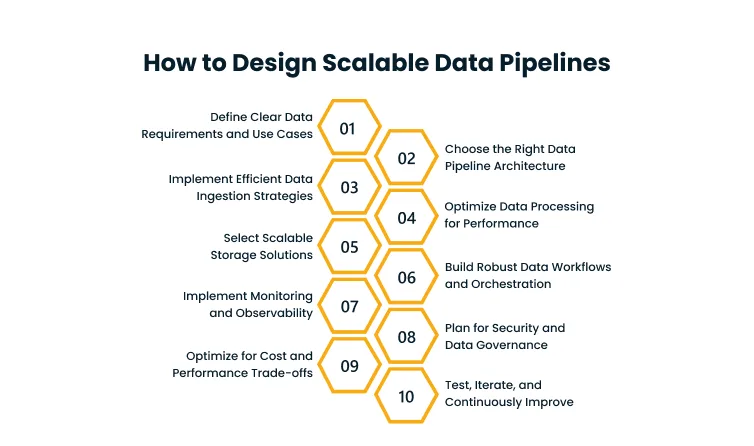

How to design scalable data pipelines

Designing scalable data pipelines is about building systems that can handle growing data volumes without sacrificing performance or reliability. It requires careful planning across architecture, processing, and storage to ensure pipelines remain efficient as complexity increases.

STEP 1: Define Clear Data Requirements and Use Cases

Before selecting tools or patterns, map the terrain.

- What data sources exist?

- What latency is acceptable (seconds, minutes, hours)?

- Who are the consumers, and what format do they need?

Starting with use cases rather than technology prevents over-engineering for requirements that don’t exist and under-designing for ones that do.

STEP 2: Choose the Right Data Pipeline Architecture

The choice between batch, streaming, or hybrid depends on latency and processing needs.

Batch architectures process large volumes of data at scheduled intervals. They suit reporting and analytics workloads where near-real-time is unnecessary and cost efficiency matters.

Streaming architectures process events as they arrive, suited for fraud detection, real-time monitoring, or customer-facing personalization. Hybrid architectures combine both, giving teams flexibility while adding operational complexity.

Choosing wrong creates irreversible technical debt. A streaming system built when batch would suffice adds cost and complexity for no gain. A batch system where real-time is required fails the business case entirely.

STEP 3: Implement Efficient Data Ingestion Strategies

Ingestion is where pipelines most frequently break. Sources change schemas without warning, volumes spike unexpectedly, and API rate limits create bottlenecks.

Scalable ingestion relies on message queues and event streaming platforms to decouple producers from consumers, absorbing volume spikes without cascading failures downstream.

Change Data Capture (CDC) is effective for database replication. API connectors handle SaaS sources. File-based ingestion covers bulk loads.

The design principle to embed from the start: ingestion should be idempotent, meaning safe to re-run without producing duplicate data.

STEP 4: Optimize Data Processing for Performance

Transformation logic is where most pipeline performance problems originate. Sequential, single-threaded processing doesn’t scale. Distributed processing frameworks allow transformation workloads to be parallelized across compute clusters, dramatically reducing processing time for large datasets.

Techniques like predicate pushdown, partition pruning, and incremental processing avoid full table scans and reprocessing data that has already been handled.

STEP 4: Select Scalable Storage Solutions

Storage selection determines what queries are possible and at what cost. Data lakes offer low-cost, flexible storage for raw and semi-structured data. Data warehouses deliver optimized query performance for structured analytical workloads.

The modern data lakehouse pattern combines both, storing data in open formats on object storage while supporting warehouse-style querying.

The principle: store once, query many times. Redundant storage across disconnected systems inflates cost and creates consistency problems.

STEP 5: Build Robust Data Workflows and Orchestration

Pipelines are not linear sequences. They are directed acyclic graphs of dependencies. A job that fails halfway through should not restart from the beginning. Orchestration tools manage scheduling, retry logic, dependency resolution, and alerting.

They provide visibility into pipeline state (which jobs ran, which failed, and why) without requiring engineers to piece together logs from disparate systems.

STEP 6: Implement Monitoring and Observability

A pipeline that runs silently is a pipeline that fails silently. Monitoring covers infrastructure metrics like throughput, latency, and error rates. Observability goes deeper, providing the ability to ask arbitrary questions about pipeline behavior using logs, traces, and metrics. Together, they reduce the mean time to detection and resolution when something goes wrong.

STEP 7: Plan for Security and Data Governance

Security at the pipeline level means encryption in transit and at rest, credential management through secret vaults rather than hardcoded values, and network segmentation. Governance means role-based access control, column-level masking for sensitive fields, and data lineage tracking that maps every field from source to consumer.

Both must be designed from the start. Retrofitting security into an existing pipeline architecture is significantly more expensive than building it correctly at the outset.

STEP 8: Optimize for Cost and Performance Trade-offs

Compute, storage, and data transfer all carry costs that scale with volume. Common inefficiencies include:

- Scanning full tables when partitioned reads would suffice

- Storing all data in hot-tier storage when cold-tier would serve most queries

- Running transformation workloads on oversized clusters

Cost optimization is a continuous process of profiling, right-sizing, and eliminating unnecessary data movement.

STEP 9: Test, Iterate, and Continuously Improve

Pipelines are not deployed once and forgotten. Data sources evolve, business requirements change, and scale assumptions break. Integration tests validate end-to-end behavior. Unit tests cover transformation logic.

Load tests surface bottlenecks before they surface in production. A feedback loop between monitoring data and pipeline refinement is what separates pipelines that degrade from ones that improve over time.

Best practices for building reliable data pipelines

Building reliable data pipelines requires more than just getting data from one place to another. It involves applying proven practices that ensure consistency, resilience, and long-term maintainability as data systems grow and evolve.

1. Data Quality & Validation

Quality checks must be embedded in the pipeline, not applied after the fact. Schema validation catches structural changes at ingestion. Row-count reconciliation detects data loss between pipeline stages. Deduplication logic prevents double-counting.

Data contracts (formal agreements between producers and consumers about data shape and semantics) prevent silent breaking changes from propagating downstream.

According to a 2025 Gartner Study on Data Analytics & Governance, 63% of organizations either do not have or are unsure whether they have the right data management practices for AI.

Poor data quality embedded in pipeline architecture is a primary driver of that gap.

2. Monitoring & Observability

Infrastructure metrics are necessary but not sufficient. Data-level observability matters:

- Are row counts within expected ranges?

- Are null rates for critical fields within tolerance?

- Is processing latency within SLA?

Automated alerts on anomalies catch problems before they reach downstream consumers. Dashboards should surface pipeline health, not just uptime.

3. Security & Compliance

Role-based access should be enforced at the data layer, not just the application layer. Column masking protects PII without requiring separate data copies. Audit logs capture who accessed what and when.

Retention policies automate the deletion of data that exceeds its regulatory window, removing the need for manual compliance reviews.

4. Cost Optimization Strategies

Storage tiering moves infrequently accessed data to cheaper storage classes, reducing cost without reducing availability.

Query optimization reduces compute consumption per analysis. Deduplicating data at ingestion avoids storing and processing the same record multiple times. Regularly auditing pipeline usage identifies scheduled workloads that are no longer consumed by any downstream system.

Conclusion

The way an organization designs its data pipeline architecture determines what it can actually do with data, not just at its current scale but as requirements grow more complex and volumes increase. A pipeline that performs well today without a design built for growth will fail at the worst possible time: when the business depends on it most.

The fundamentals remain consistent regardless of stack: ingest reliably, transform with quality controls, store for the queries that matter, orchestrate with visibility, and govern with intent. What changes is how those fundamentals are implemented as tools evolve and architectures modernize.

Organizations that treat pipeline architecture as infrastructure to build once and maintain minimally accumulate technical debt that eventually constrains their analytics and AI capability. According to a 2025 Gartner Study on Data Analytics & Governance, 60% of AI projects unsupported by AI-ready data will be abandoned. That’s not a modeling problem or a tooling problem. It’s a pipeline architecture problem, and it starts at design time.

Those that treat pipelines as a product, with continuous improvement built into the operating model, compound the value of their data over time.

Designing pipelines that scale, govern, and perform requires architecture decisions made with full visibility into your data environment. Cygnet.One’s Data Engineering and Management practice works with enterprises to assess, design, and implement data pipeline architectures built for scale, compliance, and AI readiness.

Book a demo to see how Cygnet.One approaches pipeline architecture for your specific data environment.