An enterprise signs a cloud contract, hires data scientists, and launches a generative AI pilot that impresses leadership in the demo. Six months later, the system hasn’t reached production.

The data infrastructure the model needs doesn’t exist in the right form. The engineering team is stretched across three other initiatives. No one has defined what “success” looks like clearly enough to get the deployment approved. This is the most common enterprise AI experience in 2026.

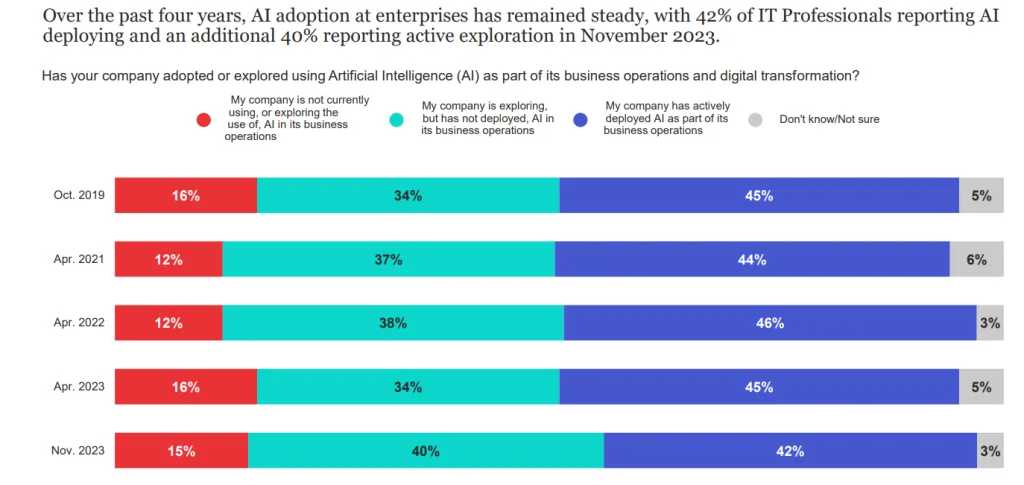

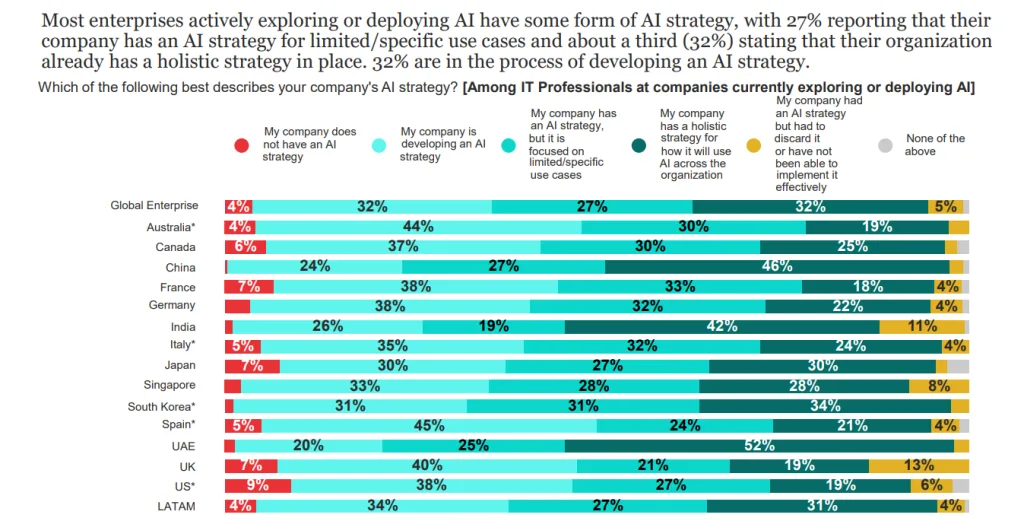

According to the 2024 IBM Global AI Adoption Index, 42% of large enterprises have actively deployed AI, while another 40% are still exploring or experimenting without having deployed.

The gap between those two groups is defined by a set of specific, recurring organizational barriers.

In this blog, we cover what those barriers are, why they tend to compound, and what organizations that have crossed them actually did differently.

By the end, you’ll have a clear picture of where AI adoption breaks down and a structured approach to addressing each failure point before it stalls your next initiative.

What Are the Top AI Adoption Challenges in 2026?

Enterprises across every sector are investing in AI, but the distance between a proof-of-concept and a production-grade system that delivers business value is wider than most planning exercises acknowledge.

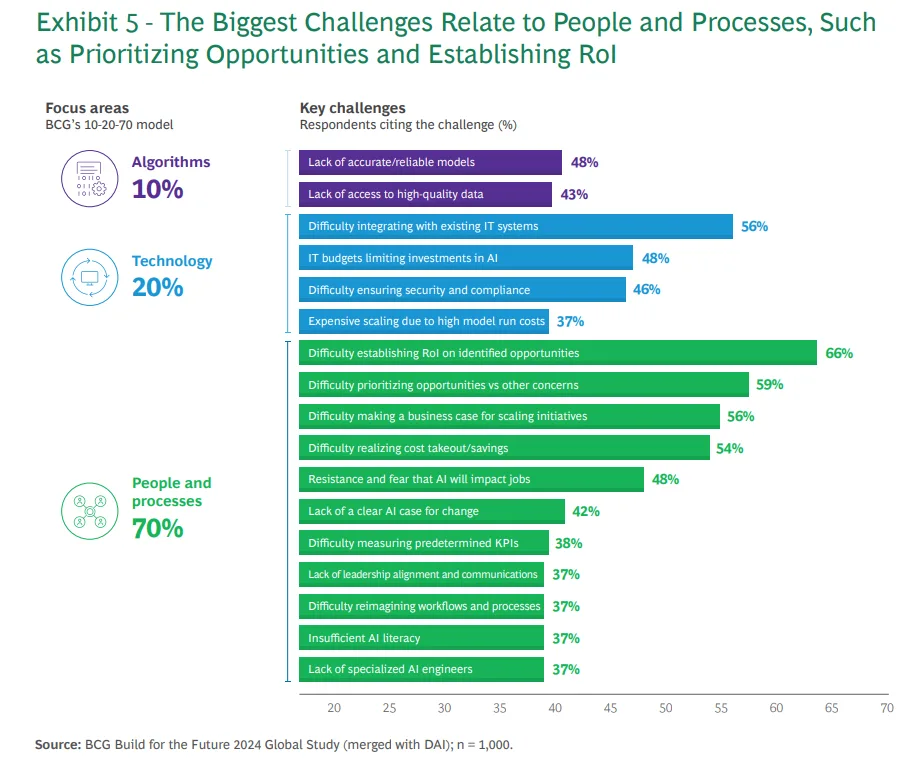

According to the 2024 BCG Report “Where’s the Value in AI?,” 74% of companies have yet to show tangible value from their AI investments.

The reasons cluster consistently around strategy, culture, data infrastructure, talent, and organizational readiness, and they tend to compound. An organization with weak data governance that also lacks AI talent will find that each problem amplifies the other.

1. Lack of Clear AI Strategy and Business Alignment

AI initiatives that begin without a defined business objective rarely produce useful outcomes. Teams run experiments, build models, and demonstrate technical capability, but without a clear connection to a business problem that matters, those outputs don’t generate decisions or economic value.

The result is a collection of disconnected AI pilots that each show promise in isolation but never graduate to production, because no one can articulate what success looks like at scale.

Business alignment requires a shared understanding across business and technology teams of which outcomes AI is expected to improve, how improvement will be measured, and what the acceptable timeline for results is.

Without that shared language, AI strategy becomes a technical roadmap that the business doesn’t recognize as its own, and projects stall at the handoff between proof-of-concept and deployment.

2. Poor Data Quality and Data Availability Issues

AI models learn from data, and the quality of a model’s output is bounded by the quality of its training data.

Incomplete records, inconsistent formatting across source systems, duplicate entries, missing labels, and stale data introduce systematic errors that are difficult to detect and expensive to correct once a model is deployed.

Data availability compounds the quality problem. Many enterprises hold their data in siloed systems across departments, business units, or acquired entities.

Accessing and consolidating that data for AI training requires data pipelines, governance policies, and often significant engineering work that wasn’t scoped into the original project estimate.

Organizations frequently discover that the data they assumed they had is either inaccessible, insufficient in volume, or structured in ways that don’t support the model they intended to build.

According to the 2025 Gartner Survey on Data Management Practices for AI, through 2026 organizations will abandon 60% of AI projects that are unsupported by AI-ready data. Data infrastructure is a precondition for AI that most teams don’t fully appreciate until a project is already in trouble.

3. Shortage of Skilled AI Talent

Data scientists, machine learning engineers, MLOps practitioners, and AI architects with production-grade experience are scarce, and competition for them across enterprises is intense.

Enterprises that do manage to hire AI talent often face a secondary challenge: retaining it in environments where model deployment is slow, data infrastructure is inadequate, and organizational support for AI is inconsistent.

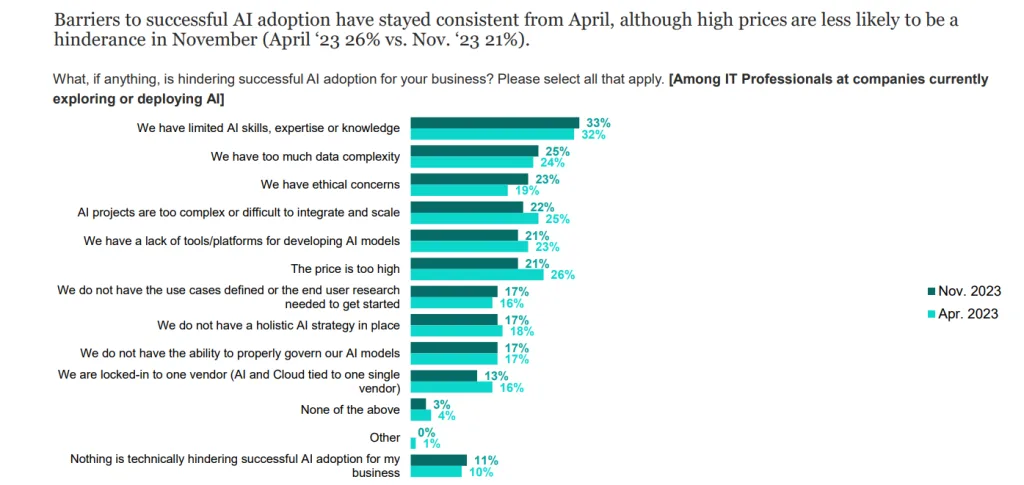

According to the 2023 IBM Global AI Adoption Index, 33% of enterprises cite limited AI skills and expertise as the top barrier to AI adoption, ranking ahead of data complexity (25%), ethical concerns (23%), and integration difficulty.

The shortage extends beyond technical roles. These roles are equally important and equally hard to find:

- Product managers who understand how to scope AI projects

- Business analysts who can translate operational problems into machine learning tasks

- Change management professionals who can drive the adoption of AI-assisted workflows

Organizations that think about the talent gap only in terms of engineering capacity tend to underinvest in the roles that connect AI systems to the business processes they’re meant to improve.

4. Integration with Legacy Systems

Most enterprises run core business processes on systems that were not designed with AI in mind. ERP platforms, CRM systems, custom-built operational tools, and databases on aging infrastructure lack the APIs, data formats, and latency characteristics that AI applications require.

Integrating an AI model into a workflow that runs on a 15-year-old system is possible, but it requires middleware development, data transformation pipelines, and often significant rearchitecting of the data layer.

AI systems that depend on data from legacy sources inherit the reliability characteristics of those sources. If an upstream system has data quality issues, experiences downtime, or changes its output format without notice, the AI application built on top of it degrades or fails.

Organizations that don’t map these dependencies explicitly before deployment tend to discover them during incidents rather than in testing.

Cygnet.One’s Enterprise Integration practice addresses this by providing middleware, API management, and event-driven architecture solutions for reliable data connections.

These solutions connect AI layers with legacy systems and include pre-built connectors for SAP, Oracle Dynamics, and Salesforce, reducing engineering overhead.
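To make the dependency problem concrete: one lightweight safeguard against an upstream system changing its output format without notice is a data contract check that runs before records reach the model. The following is a minimal sketch in Python, assuming records arrive as dictionaries; the field names in `EXPECTED_SCHEMA` and the `contract_violations` helper are hypothetical illustrations, not part of any product mentioned here.

```python
from typing import Any

# Hypothetical contract: fields and types an AI service expects from a legacy ERP export.
EXPECTED_SCHEMA: dict[str, type] = {
    "order_id": str,
    "customer_id": str,
    "amount": float,
    "status": str,
}


def contract_violations(row: dict[str, Any], schema: dict[str, type] = EXPECTED_SCHEMA) -> list[str]:
    """Return human-readable problems; an empty list means the row matches the contract."""
    problems = []
    for field_name, expected_type in schema.items():
        if field_name not in row:
            problems.append(f"missing field: {field_name}")
        elif not isinstance(row[field_name], expected_type):
            actual = type(row[field_name]).__name__
            problems.append(f"{field_name}: expected {expected_type.__name__}, got {actual}")
    # Fields the contract doesn't know about often signal a silent upstream change.
    for extra in sorted(set(row) - set(schema)):
        problems.append(f"unexpected field: {extra}")
    return problems


# Hypothetical row after an upstream release renamed "status" and started emitting amounts as text.
row = {"order_id": "A-17", "customer_id": "C9", "amount": "129.90", "state": "open"}
for problem in contract_violations(row):
    print(problem)
```

Running a check like this both in testing and at pipeline ingestion turns a silent format change into an explicit, logged failure. The type check is deliberately strict: an integer `amount` would also be flagged, which may or may not suit a given pipeline.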

5. Resistance to Change and Organizational Culture

Employees who understand that an AI system will change how their work is measured, structured, or evaluated, but who were not involved in that decision, respond with skepticism, workaround behavior, and selective engagement that effectively neutralizes the system’s intended effect.

The concerns driving resistance are often legitimate. Job displacement fears, loss of professional autonomy, and uncertainty about how AI-generated outputs will affect accountability structures are real organizational dynamics that don’t dissolve because leadership issues a communication about transformation.

AI adoption that doesn’t address these concerns directly, through transparent communication, involvement in design, and clear policies about how AI will and will not be used to evaluate individual performance, tends to stall at rollout regardless of how well-built the underlying system is.

Organizations that handle culture effectively treat it as a design constraint for the AI deployment.

They involve frontline employees in identifying use cases, use early pilots to demonstrate value without creating displacement anxiety, and build feedback loops that give employees genuine influence over how AI tools are refined.

6. Scalability and Infrastructure Limitations

Many AI projects perform well at pilot scale and fail to replicate those results in production. Pilot environments are controlled: data volumes are managed, edge cases are limited, and latency requirements are relaxed.

Production environments present different constraints entirely, and the gap between the two consistently exposes infrastructure assumptions that the pilot stage never tested.

Training and serving large AI models requires substantial compute capacity, often including GPUs for deep learning workloads.

Storing and processing the data volumes that production AI generates requires a scalable data infrastructure. Monitoring models in production for performance drift, data drift, and fairness issues requires MLOps tooling that many organizations have not yet put in place when the pilot begins.
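Data drift monitoring is one of the MLOps capabilities most often missing at the pilot stage. As a minimal sketch, assuming numeric features and equal-width bins, the Population Stability Index (PSI) compares the distribution a feature had at training time with the distribution the model sees in production. The sample values are made up, and the 0.2 alert threshold is a common rule of thumb rather than a standard.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a production sample.

    Both samples are bucketed into the same equal-width bins; a larger value
    means the production distribution has moved further from the baseline.
    """
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            counts[min(int((v - lo) / width), bins - 1)] += 1
        # Floor empty bins at a tiny share so the log term stays finite.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time baseline vs. a shifted production window.
baseline = [i / 100 for i in range(100)]
production = [0.5 + i / 200 for i in range(100)]

score = psi(baseline, production)
if score > 0.2:  # rule-of-thumb threshold; tune per feature
    print(f"drift alert: PSI={score:.2f}")
```

In production, a check like this would typically run on a schedule per feature, with alerts routed to the model’s owner; the scheduling and alerting are outside this sketch.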

Organizations that treat infrastructure as an afterthought during the pilot stage find that scaling AI becomes a separate engineering problem to be solved from scratch, rather than a continuation of what the pilot proved.

The cost of retrofitting infrastructure for production after a successful pilot is often several times what it would have cost to build it into the initial architecture.

How to Overcome AI Adoption Challenges

The barriers to AI adoption are not unique to any single organization. Every enterprise that has moved from AI experimentation to production-scale deployment has navigated some version of these same problems.

The organizations that succeed consistently do so because they approach each barrier with a structured response rather than treating it as an obstacle to route around. The strategies below address each major challenge at its root.

1. Build a Clear AI Strategy and Roadmap

An AI strategy is a documented set of business objectives that AI will be used to improve, the use cases selected to address those objectives, the success metrics that will determine whether each initiative is working, and the governance structure that will manage decisions about expansion, modification, or discontinuation of AI projects.

Building that strategy starts with business leadership. The technology team’s role is to assess feasibility and architect solutions.

Defining what business outcomes matter, and in what priority order, is a leadership conversation that requires input from operations, finance, customer experience, and risk functions.

Organizations that hand AI strategy entirely to the technology team tend to produce technically coherent plans that the business doesn’t own, and projects stall when they require cross-functional cooperation to move forward.

A useful AI roadmap maps use cases against two dimensions:

- Business value

- Implementation feasibility

High-value, high-feasibility use cases go first. Complex use cases with unclear value go last or not at all.
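The value-versus-feasibility grid can be expressed as a simple scoring rule. The following is a minimal sketch, assuming each use case has already been scored from 1 to 5 on both dimensions in a cross-functional review; the `UseCase` class, the cutoff of 3, the use-case names, and the label for the two mixed quadrants are hypothetical, since the roadmap approach above only fixes the first and last positions.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    value: int        # business value score, 1 (low) to 5 (high)
    feasibility: int  # implementation feasibility score, 1 (low) to 5 (high)


def bucket(uc: UseCase, cutoff: int = 3) -> str:
    """Place a use case on the value/feasibility grid."""
    high_value = uc.value >= cutoff
    high_feasibility = uc.feasibility >= cutoff
    if high_value and high_feasibility:
        return "go first"
    if not high_value and not high_feasibility:
        return "go last or not at all"
    return "sequence after the first wave"  # mixed quadrants: an assumption


def roadmap_order(cases: list[UseCase], cutoff: int = 3) -> list[UseCase]:
    """High-value, high-feasibility cases first; the rest by combined score, highest first."""
    def key(uc: UseCase) -> tuple[bool, int]:
        both_high = uc.value >= cutoff and uc.feasibility >= cutoff
        return (both_high, uc.value + uc.feasibility)
    return sorted(cases, key=key, reverse=True)


# Hypothetical portfolio with illustrative scores.
cases = [
    UseCase("internal wiki assistant", value=2, feasibility=4),
    UseCase("autonomous pricing", value=2, feasibility=1),
    UseCase("invoice triage", value=4, feasibility=5),
    UseCase("demand forecasting", value=5, feasibility=2),
]
for uc in roadmap_order(cases):
    print(f"{uc.name}: {bucket(uc)}")
```

The scores themselves carry the real judgment. The code only makes the ordering rule explicit and repeatable, so it can be revisited as data readiness or business priorities change.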

2. Invest in Data Quality and Data Infrastructure

Data quality improvement typically sits outside the scope of the AI project that depends on it. That is precisely why it gets deferred until a project is already in trouble.

The more effective approach is to treat data infrastructure as a prerequisite for AI investment rather than a parallel workstream. In practice, that means:

- Auditing existing data for completeness, consistency, and accessibility before selecting AI use cases.

- Building or procuring the data pipelines, cataloging tools, and governance processes that make data reliably available for model training and inference.

- Establishing data ownership at the organizational level, so that questions about data quality have accountable owners rather than diffusing across teams with no clear resolution path.
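A first-pass audit can be automated before any use case is selected. The following is a minimal sketch using only the Python standard library. It assumes records have already been extracted as dictionaries, and the `audit` helper, field names, and one-year staleness window are hypothetical choices. It measures three of the problems named earlier: incomplete records, duplicate entries, and stale data.

```python
from datetime import date
from typing import Any


def audit(records: list[dict[str, Any]], required: list[str], key: str,
          date_field: str, as_of: date, max_age_days: int = 365) -> dict[str, Any]:
    """Summarize completeness, duplication, and staleness for one extracted dataset."""
    n = len(records)
    # A record is incomplete if any required field is absent or empty.
    incomplete = sum(1 for r in records if any(r.get(f) in (None, "") for f in required))
    # Duplicate entries: rows that repeat an already-seen business key.
    keys = [r.get(key) for r in records]
    duplicates = len(keys) - len(set(keys))
    # Stale records: last update older than the allowed window (undated rows are skipped here).
    stale = sum(1 for r in records
                if r.get(date_field) is not None
                and (as_of - r[date_field]).days > max_age_days)
    return {
        "rows": n,
        "incomplete_share": incomplete / n if n else 0.0,
        "duplicate_keys": duplicates,
        "stale_share": stale / n if n else 0.0,
    }


# Hypothetical customer extract from one source system.
records = [
    {"customer_id": "C1", "email": "a@example.com", "updated": date(2025, 11, 1)},
    {"customer_id": "C2", "email": "", "updated": date(2023, 1, 15)},
    {"customer_id": "C1", "email": "a@example.com", "updated": date(2025, 11, 1)},
    {"customer_id": "C3", "email": "c@example.com", "updated": None},
]
report = audit(records, required=["email", "updated"], key="customer_id",
               date_field="updated", as_of=date(2026, 1, 1))
print(report)
```

Running the same audit across every candidate source gives the accountable data owners a shared, comparable baseline, which is easier to act on than anecdotal reports of “bad data.”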

Organizations that invest in data infrastructure before selecting AI use cases tend to have shorter development cycles, more reliable model performance, and lower total cost than those that try to solve data problems mid-project.

3. Upskill Teams and Partner with AI Experts

Addressing the talent gap through hiring alone is slow and expensive. A more effective approach combines targeted internal upskilling with strategic external partnerships that provide immediate capability while building internal knowledge alongside delivery.

Internal upskilling programs should be role-differentiated:

- Engineers need exposure to ML frameworks, data pipeline tooling, and model evaluation methodologies.

- Business analysts need enough AI literacy to scope projects accurately and recognize when a model’s output is unreliable.

- Leaders need a sufficient understanding of AI capabilities and limitations to make informed investment and governance decisions.

Generic AI awareness training that doesn’t map to how each role will actually engage with AI systems produces minimal behavioral change.

According to the 2024 Gartner Forecast on GenAI Workforce Requirements, generative AI will require 80% of the engineering workforce to upskill through 2027.

Cygnet.One’s Data Analytics and AI Services supports organizations at this intersection, providing AWS-certified specialists and data scientists who accelerate AI implementation.

They also build internal team capabilities alongside project delivery, drawing on 500+ completed data and AI engagements across industries.

4. Start with High-Impact Use Cases

The most effective way to build organizational confidence in AI is to deliver results quickly on a problem the business actually cares about.

High-impact use cases share a few consistent characteristics:

- The business problem is well-defined

- The data required is available and reasonably clean

- Success can be measured without ambiguity, and

- The output connects directly to a decision or action someone in the organization takes.

Organizations that have demonstrated AI value in one area find it easier to secure budget, talent, and cross-functional cooperation for the next initiative.

Those who start with technically interesting but business-peripheral problems tend to find that each subsequent project requires rebuilding the same organizational support from scratch.

A few markers help identify strong starting points:

- The problem currently consumes significant manual effort

- The outcome of the AI system is easily verifiable by the people using it

- Failure is recoverable and doesn’t carry customer-facing risk

5. Implement Strong AI Governance and Compliance

AI systems deployed without clear data usage policies, auditability requirements, bias testing protocols, and access controls create liability, regulatory risk, and the kind of high-profile failures that set AI programs back by years.

Effective AI governance establishes clear ownership for each deployed model, including:

- Who approved it

- What data it was trained on

- How its performance is monitored, and

- Under what conditions it will be retrained or retired

It defines the human oversight checkpoints for AI-assisted decisions, particularly in high-stakes domains like credit, hiring, healthcare, and legal compliance.

It also establishes a feedback mechanism, so that problems identified in production have a clear path to resolution rather than requiring the entire AI organization to mobilize each time.
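One way to make this ownership enforceable is to keep a governance record per model and block deployment while any field is empty. The following is a minimal Python sketch, assuming a simple in-code record rather than any particular model registry product. The `ModelRecord` fields mirror the questions above, and the `governance_gaps` helper and the example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModelRecord:
    model_id: str
    owner: str                         # accountable owner for the model
    approved_by: str                   # who approved it
    training_data: list[str]           # which datasets it was trained on
    monitoring: str                    # how its performance is monitored
    retrain_or_retire_when: list[str]  # conditions that trigger retraining or retirement
    high_stakes: bool = False          # e.g. credit, hiring, healthcare, legal compliance
    human_review_step: str = ""        # required oversight checkpoint for high-stakes use


def governance_gaps(rec: ModelRecord) -> list[str]:
    """List missing governance information; deploy only when the list is empty."""
    gaps = []
    for name in ("owner", "approved_by", "monitoring"):
        if not getattr(rec, name).strip():
            gaps.append(f"missing {name}")
    if not rec.training_data:
        gaps.append("missing training_data")
    if not rec.retrain_or_retire_when:
        gaps.append("missing retrain_or_retire_when")
    if rec.high_stakes and not rec.human_review_step.strip():
        gaps.append("high-stakes model without a human review checkpoint")
    return gaps


# Hypothetical credit model that has not yet been formally approved.
rec = ModelRecord(
    model_id="credit-risk-v3",
    owner="Risk Analytics",
    approved_by="",
    training_data=["loan_applications_2019_2024"],
    monitoring="weekly AUC and drift report",
    retrain_or_retire_when=["drift alert on any top-10 feature"],
    high_stakes=True,
)
print(governance_gaps(rec))
```

Wiring a check like this into the deployment pipeline makes the human oversight checkpoint a hard requirement for high-stakes models rather than a policy document that depends on memory.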

Organizations that build governance into AI projects from the start find that it accelerates adoption.

Teams are more willing to use AI systems they understand and trust, and regulators view proactive governance as a sign of organizational maturity rather than a liability to investigate.

Conclusion

The most common misdiagnosis in a failing AI program is treating an organizational readiness problem as a technology problem.

Teams that aren’t getting results from AI search for a better model, a more capable platform, or a more sophisticated algorithm, when the actual constraint is data that isn’t structured for AI, a governance process that doesn’t exist, or a strategy that hasn’t been tied to a business outcome anyone owns.

AI adoption challenges are often resolved by organizational decisions like:

- Who owns data quality?

- How are use cases selected and scoped?

- What does governance mean in practice for each deployed model?

Getting those decisions right before selecting technology is what separates organizations that extract durable value from AI from those that purchase tooling without results.

For organizations working to move AI initiatives from pilot to production, Cygnet.One’s Data Analytics and AI service provides the strategy, data infrastructure, and engineering expertise needed to close the gap between vision and deployment. Book a demo to see how Cygnet.One’s approach to AI adoption translates into measurable business outcomes.

FAQs

1. What are the main challenges in AI adoption?

The primary challenges of AI adoption are poor data quality, a shortage of skilled AI talent, a lack of a clear business-aligned strategy, integration difficulties with legacy systems, organizational resistance to change, and inadequate infrastructure for scaling.

2. Why do AI projects fail?

Most AI projects fail for one of three reasons:

- The data required to train reliable models isn’t ready

- The project lacks a defined business objective that connects technical output to operational decisions, or

- The infrastructure and talent needed to move from pilot to production weren’t in place when the project began.

Strategy gaps and culture gaps account for a significant share of failures that are often misattributed to the technology itself.

3. How can businesses overcome AI adoption challenges?

The most effective approach to overcoming AI adoption challenges combines a few specific steps, including:

- Define measurable business objectives before selecting technology

- Treat data infrastructure as a prerequisite rather than a parallel workstream

- Start with use cases where data is available and success is measurable

- Invest in role-differentiated upskilling, and

- Build AI governance into projects from the outset rather than retrofitting it after deployment.

4. Is AI adoption expensive for businesses?

Initial investment in AI adoption varies significantly depending on use case complexity, data readiness, and infrastructure requirements. Organizations that start with high-impact, well-scoped use cases typically see faster payback and lower overall cost than those that pursue broad, ambitious programs from the outset.

5. Do small businesses face AI adoption challenges?

Yes, often more acutely than large enterprises. Small businesses typically have smaller data volumes, fewer dedicated technical staff, and tighter budgets for infrastructure. They also face the same data quality and strategy alignment challenges. The mitigation strategy is similar: start with a single high-impact use case, partner with external specialists for capabilities the business doesn’t have internally, and build governance practices at a scale appropriate to the organization’s current size.