The fastest way to ruin a “modern” system is to split one big problem into twenty smaller problems that still behave like one big problem.

That is the heart of a distributed monolith. It looks like microservices. It deploys like microservices. It bills like microservices. But it fails like a monolith, only now the failure is harder to trace.

If you are doing AWS application modernization, this is the trap that sneaks in when teams rush from “one codebase” to “many services” without changing how boundaries, data, and validation work. This blog breaks down why a distributed monolith happens, how to spot it early, and how to design service boundaries and data ownership on AWS, so your system stays modular in real life, not just on a diagram.

What is Distributed monolith?

Let’s answer it plainly: what is a distributed monolith?

It is a system split into services that are still tightly coupled through synchronous calls, shared data, shared releases, or shared assumptions. You no longer have one deployment unit. You have many. But you still have one change unit. One “all-or-nothing” upgrade path. One cascading failure path.

Here’s a quick contrast.

Monolith

- One process, one deployment

- Internal calls are cheap and reliable

- Coupling is obvious

Distributed monolith

- Many deployments, many endpoints

- Calls go over the network

- Coupling is hidden until production

In AWS application modernization, a distributed monolith is usually created by copying the monolith’s internal boundaries into “services” without rethinking the seams.

A simple diagram you can use in reviews

Monolith (clear coupling)

[ UI + Orders + Payments + Inventory + Shipping ]

| one deploy | one DB |

Distributed monolith (hidden coupling)

UI -> Orders -> Payments -> Inventory -> Shipping

| | | |

DB? DB? DB? DB?

(or one shared DB behind all of them)

If that chain must succeed end-to-end for basic actions, you have a distributed monolith.

Why does it occur during modernization on AWS?

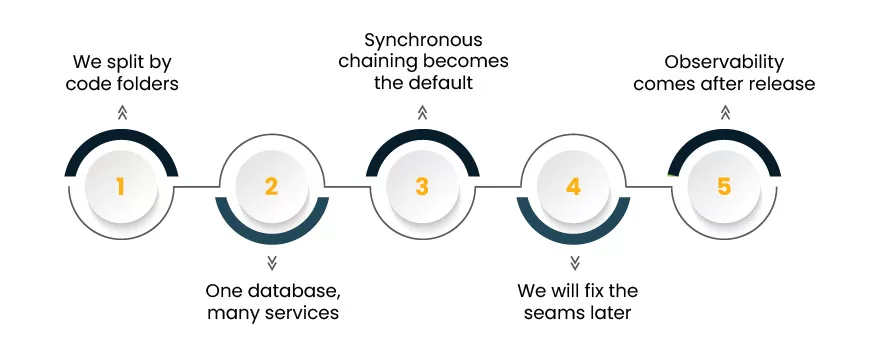

Most teams do not choose a distributed monolith. They drift into it through common monolith to microservices challenges.

1) “We split by code folders”

A service boundary based on package structure often mirrors old assumptions. That reproduces the same coupling, now with network calls.

2) One database, many services

Shared tables make services depend on each other’s schema. That is one of the most common cloud-native architecture mistakes.

3) Synchronous chaining becomes the default

One service calls the next, and the next, and the next. Latency piles up. Retries amplify load. Partial failures become full failures. These are classic microservices pitfalls AWS teams hit when they treat HTTP calls like in-process method calls.

4) “We will fix the seams later”

Later rarely comes. Once traffic and org structure settle, changing boundaries becomes expensive. This is where AWS application modernization needs discipline early.

5) Observability comes after release

Without tracing, dependency maps, and error budgets per interaction, coupling stays invisible. Another of the big cloud-native architecture mistakes.

Warning signs you are building a distributed monolith

Use this list in design reviews. A few items are normal. Many items together mean danger.

- Every user request triggers 6+ synchronous service calls.

- A “small” change needs coordinated releases across teams.

- Multiple services read and write the same tables.

- Schemas change weekly and break unrelated areas.

- One service outage takes down multiple user journeys.

- You rely on retries instead of resilience patterns.

- Testing requires a full environment to validate anything meaningful.

- Teams argue about ownership because boundaries are unclear.

If these feel familiar, pause and ask again: what is a distributed monolith in your context? It is not about service count. It is about change friction and failure spread.

Service boundary design that prevents tight coupling

A clean boundary is not “Orders Service because we have orders.” A clean boundary is a unit that can change without asking five other teams for permission.

In AWS application modernization, treat boundaries as “change boundaries” first, “deployment boundaries” second.

A practical boundary method: the “two lists”

For each candidate service, write two lists:

List A: What this service owns

- Business decisions it makes

- Data it is the system of record for

- Events it publishes

List B: What this service must not know

- Internal rules of other services

- Other services’ database structures

- UI-specific workflows

If List B is hard to write, your boundary is fuzzy. Fuzzy boundaries lead straight to a distributed monolith.

Design patterns that help on AWS

These reduce coupling without fancy theory.

- Event-first integration for key state changes (SNS, EventBridge)

- Asynchronous workflows for long-running steps (Step Functions)

- Queue-based buffering at boundaries (SQS)

- Clear contracts and versioning (API Gateway, schema registry approaches for events)

Many early mistakes in microservices design emerge when teams ignore cloud-native architecture concepts and benefits and default to synchronous chaining. Events and queues give you room to decouple.

A diagram of healthy boundaries

[Orders] –publishes–> (OrderPlaced event) –> [Payments]

| |

owns order state owns payment state

|

(OrderStatus API for reads, not internal rules)

This kind of design keeps a failure in Payments from corrupting Orders.

Data ownership: the part teams skip, then regret

A distributed monolith is often just “shared data with extra hops.”

If you want microservices that stay independent, you need strict data ownership. This is one of the hardest monolith to microservices challenges, and also the most important.

The rule that prevents 80% of shared-data coupling

Only one service is allowed to write a given business fact.

Examples:

- Only Orders writes “order state”

- Only Payments writes “payment state”

- Only Inventory writes “stock state”

Other services may read via:

- an API designed for consumers, or

- events that carry the minimum required facts, or

- a read model built from events

Common anti-patterns to avoid

These are repeated cloud-native architecture mistakes.

- Shared database as “integration.”

- Foreign keys across service boundaries.

- Direct reads of another service’s tables “just for reporting.”

- A single “common” schema used by all services.

What to do instead on AWS?

- For cross-service read needs, use purpose-built read models.

Example: A Customer Order Summary table built from OrderPlaced and PaymentCaptured events.

- For analytical needs, push events into a lake or warehouse pattern, rather than querying OLTP tables across teams.

This is still AWS application modernization, but now the goal is clear: fewer shared assumptions, fewer shared writes, fewer forced coordination moments.

Validation before growth: how to prove you did not create a distributed monolith

Most teams validate correctness. Fewer validate coupling. That is how a distributed monolith survives until traffic and business urgency expose it.

Below is a validation package you can run before you add more services, more regions, or more traffic paths.

1) Dependency map and “blast radius” review

Create a simple dependency map for top user journeys. Count synchronous hops. Identify where failures cascade.

Checkout journey

UI -> Orders -> Payments -> Inventory -> Shipping

Target: break the chain with async steps or compensations

UI -> Orders -> (workflow) -> Payments

|

Inventory via async reservation

If your journey needs a perfect chain, you are still in microservices pitfalls AWS territory.

2) Contract tests, not only integration tests

Integration tests require a full environment. Contract tests validate that service A can rely on service B’s interface without knowing internals.

- API contracts for REST endpoints

- Event schema contracts for messages

- Backward compatibility rules with versioning

This reduces the biggest monolith to microservices challenges: coordinated releases.

3) Data ownership tests

Automate checks that catch illegal access patterns early:

- No service writes to another service’s tables

- No “shared schema” migration without governance

- No hidden cross-service joins

This directly blocks a distributed monolith from forming through data shortcuts.

4) Resilience tests for partial failure

Ask these questions in game days:

- What happens if Payments is slow for 2 minutes?

- What happens if Inventory returns stale information?

- What happens if an event is delayed?

A system that only works when everything is healthy is a distributed monolith in waiting. This is also one of the most frequent cloud-native architecture mistakes in early microservices.

5) Operational validation: tracing and ownership

Make sure you can answer, in minutes:

- Which service caused the user-visible failure?

- Who owns the fix?

- Which dependency is the top source of latency?

If you cannot, you are living the hardest part of what is a distributed monolith: confusion during incidents.

A practical modernization path that avoids the trap

If you are actively modernizing workloads, following a structured AWS migration success path reduces architectural drift, here is a path that avoids “big bang microservices” and reduces microservices pitfalls AWS.

Step 1: Start with one boundary that already exists in the business

Pick an area with clear ownership and fewer cross-team dependencies. Not the most central workflow.

Step 2: Extract with a “strangler” approach

Route specific functionality to the new service while the rest stays in the monolith. Keep the interface stable.

Step 3: Move data ownership with the service

Do not extract the code and keep the tables shared. That is how a distributed monolith is born.

Step 4: Shift integrations to events where it makes sense

Not everything must be event-driven, but core state changes usually benefit from it.

Step 5: Add validation gates before adding more services

Run the coupling checks described above. This reduces monolith to microservices challenges later.

Quick checklist for design reviews

Use this as a short gate in architecture reviews.

- Does this service have a clear “system of record” dataset?

- Are there any shared tables or cross-service writes?

- Can this service deploy without coordinating with 3+ other teams?

- Are synchronous calls limited and purposeful?

- Are long-running workflows asynchronous with compensation?

- Do we have contract tests for APIs and events?

- Can we trace the top user journeys end-to-end?

- Do we know the incident owner for each dependency?

If you fail several items, revisit boundaries and data. Otherwise, the odds of a distributed monolith go up fast, and the clean-up becomes one of the toughest monolith to microservices challenges you will face.

FAQs

What is a distributed monolith, in one sentence?

What is a distributed monolith? It is a set of services that still behave like one tightly coupled system, where changes and failures spread across boundaries.

Are microservices always the answer on AWS?

No. Many microservices pitfalls AWS come from adopting services when the domain is not ready, or when teams lack clear ownership. Sometimes a modular monolith is the right intermediate step in AWS application modernization.

What is the quickest way to create a distributed monolith?

Shared data and synchronous call chains. Those two choices produce most cloud-native architecture mistakes tied to coupling.

What is a distributed monolith, and how do I confirm I have one?

What is a distributed monolith in practice? If a small change needs many coordinated releases, and incidents cascade across services, you likely have one. Map the top journeys and count synchronous hops.

Closing thoughts

A successful AWS application modernization effort is not judged by how many services you create. It is judged by how cleanly teams can change, test, and ship without stepping on each other.

If you keep boundaries tied to ownership, keep data tied to one system of record, and validate coupling early, you avoid the most expensive version of modern architecture: a distributed monolith that looks impressive, but behaves like the past.