Designing data contracts is a key part of modern data engineering services. A data contract is a clear agreement between the teams that produce data and the teams that consume it. It defines the schema structure, the data quality expectations, and the acceptable ways the data can change over time. When teams work with defined contracts, data behaves more consistently across systems.
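To make those three parts concrete, here is a minimal sketch of a contract expressed as Python dataclasses. The `orders` dataset, its fields, and the `FieldSpec` and `DataContract` classes are hypothetical illustrations. In practice, teams often write contracts in YAML or JSON Schema, or keep them in a schema registry, rather than in application code.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class FieldSpec:
    """Schema structure: one named, typed field (hypothetical class)."""
    name: str
    dtype: type
    required: bool = True
    description: str = ""


@dataclass
class DataContract:
    """Hypothetical container for the three parts of a contract."""
    dataset: str
    version: str
    fields: list[FieldSpec]
    # Data quality expectations: field name -> predicate each value must satisfy
    quality_rules: dict[str, Callable[[Any], bool]] = field(default_factory=dict)
    # Change policy: kinds of change allowed without a new major version
    allowed_changes: tuple[str, ...] = ("add_optional_field",)

    def required_fields(self) -> list[str]:
        return [f.name for f in self.fields if f.required]


# Hypothetical contract for an "orders" dataset
orders_v1 = DataContract(
    dataset="orders",
    version="1.0.0",
    fields=[
        FieldSpec("order_id", str, description="Unique order identifier"),
        FieldSpec("unit_price", float, description="Price per unit in store currency"),
        FieldSpec("quantity", int, description="Units ordered"),
        FieldSpec("promo_code", str, required=False, description="Promotion code, if any"),
    ],
    quality_rules={
        "unit_price": lambda v: v >= 0,
        "quantity": lambda v: v > 0,
    },
)

print(orders_v1.required_fields())  # ['order_id', 'unit_price', 'quantity']
```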

Most organizations already have pipelines, dashboards, and monitoring in place as part of their data analytics services. These layers help teams see what is happening, but they do not define how data is expected to behave. Reliability issues often begin where those expectations are unclear or undocumented.

Data contracts address this gap by establishing shared rules before data moves across teams. These rules guide how datasets are created and consumed. Moreover, changes become easier to track because they follow defined patterns rather than informal assumptions.

This clarity improves enterprise data reliability because teams operate with the same understanding of data structure and behavior. When expectations are defined early, teams spend less time validating outputs and more time using them with confidence.

To see why this matters, it helps to first understand where reliability issues begin inside teams.

Why does data break between teams even when pipelines are running?

Most data issues do not begin with a system failure. Pipelines continue to run, and jobs complete without errors. Dashboards refresh on schedule, which creates the impression that everything is working as expected. The problem usually appears when someone notices that the output no longer aligns with what they anticipated.

This situation often traces back to small changes that were never fully communicated. One team adjusts a dataset to improve clarity or performance. Another team continues using that dataset with the previous assumptions. The system remains functional, yet the shared understanding has already shifted.

This gap becomes easier to see when you look at how these changes typically appear in real environments:

- A field name is updated to match internal standards

- A data type changes to support a new use case

- A source system behaves differently across environments

Each of these changes makes sense on its own. Together, they create a pattern where expectations slowly drift apart. Over time, this drift introduces friction that affects how teams interact with data.
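The first change in the list shows how a pipeline can keep running while its output quietly goes wrong. In this hypothetical sketch, a consumer reads `unit_price` with a default value. After the producer renames the field to `price`, nothing raises an error, but revenue drops to zero. The field names and the `total_revenue` function are illustrative assumptions.

```python
# Hypothetical consumer code written when the producer's field was "unit_price".
def total_revenue(rows):
    # .get() with a default hides a missing field instead of failing loudly
    return sum(row.get("unit_price", 0.0) * row["quantity"] for row in rows)


before_rename = [{"order_id": "A1", "unit_price": 20.0, "quantity": 2}]
# The producer later renames "unit_price" to "price" to match internal standards
after_rename = [{"order_id": "A1", "price": 20.0, "quantity": 2}]

print(total_revenue(before_rename))  # 40.0
print(total_revenue(after_rename))   # 0.0, and no error is raised
```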

The impact becomes more visible when analysts start validating outputs more frequently. Engineers spend additional time investigating issues that are not clearly defined. This is the point where data contracts in analytics teams begin to feel necessary rather than optional.
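Once the expected structure is written down, this kind of drift can be detected mechanically instead of through repeated manual validation. The sketch below compares one record against an expected field-to-type mapping and reports missing fields, type changes, and unexpected fields. The `detect_drift` function and the `orders` fields are hypothetical.

```python
def detect_drift(expected: dict[str, type], record: dict) -> list[str]:
    """Compare one record against the contract's field -> type mapping."""
    issues = []
    for name, dtype in expected.items():
        if name not in record:
            issues.append(f"missing field: {name}")
        elif not isinstance(record[name], dtype):
            issues.append(
                f"type change: {name} expected {dtype.__name__}, "
                f"got {type(record[name]).__name__}"
            )
    # Fields the contract does not mention at all
    for name in record.keys() - expected.keys():
        issues.append(f"unexpected field: {name}")
    return sorted(issues)


expected = {"order_id": str, "unit_price": float, "quantity": int}
record = {"order_id": "A1", "price": 20.0, "quantity": "2"}
for issue in detect_drift(expected, record):
    print(issue)
# missing field: unit_price
# type change: quantity expected int, got str
# unexpected field: price
```

The `isinstance` check is deliberately strict. For example, an integer in a `float` field is reported as a type change, so a real implementation would need to decide which coercions the contract allows.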

How do data contracts create clarity and trust across teams?

The main value of data contracts comes from replacing assumptions with clear definitions. Instead of expecting teams to interpret data in the same way, contracts establish a shared understanding that everyone can rely on.

This does not remove complexity from the system. It reduces uncertainty around how that complexity behaves. When expectations are documented, teams have a reference point that helps them understand what has changed and why.

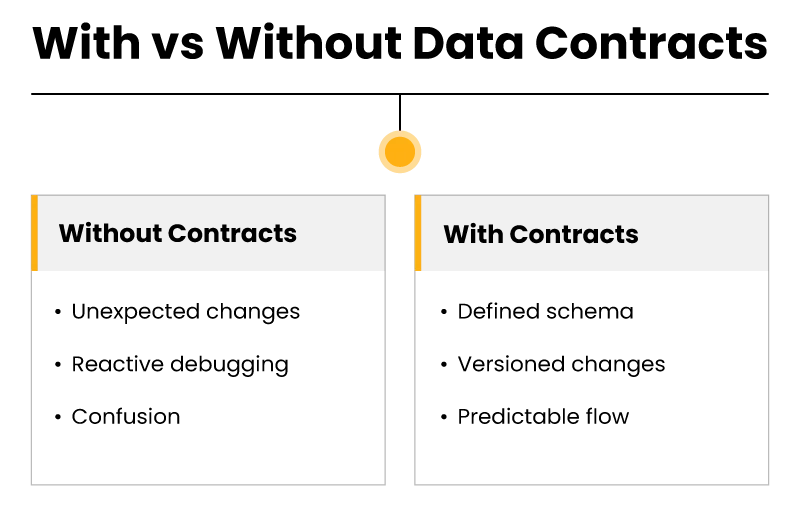

The difference becomes clearer when viewed side by side:

| Without Data Contracts | With Data Contracts |
| --- | --- |
| Data structure is implied | Data structure is defined |
| Changes appear unexpectedly | Changes follow agreed rules |
| Teams react to issues | Teams align before changes |

This shift changes how conversations happen between teams. Instead of asking what went wrong, teams begin discussing how to manage change more effectively. That change in focus reduces confusion and builds trust over time.

Trust does not come from eliminating every issue. It comes from knowing that issues will be visible and manageable when they occur. Once this level of clarity is established, the next step is applying it within real workflows.

How are data contracts implemented in real data pipelines and analytics teams?

The transition from concept to practice often starts at the source, where data is first created or ingested. This is where teams define the structure and behavior of datasets before they move through the pipeline.

Defining expectations at the source

The producing team sets clear expectations around what the dataset contains. This includes field names, data types, and required attributes. These definitions are not left open to interpretation.

- Fields are documented with consistent naming conventions

- Required attributes are identified early in the process

- Optional elements are introduced with clear context

This approach reduces ambiguity from the beginning. Downstream teams receive data that matches a known structure rather than an assumed one.
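A producing team could automate these source-side expectations with two small checks. The first lints the contract itself by checking for snake_case names and a description on every field. The second verifies that each record contains its required fields. This is a sketch that assumes snake_case as the naming convention. The `ORDERS_FIELDS` contract and both functions are hypothetical.

```python
import re

SNAKE_CASE = re.compile(r"^[a-z][a-z0-9_]*$")  # assumed naming convention

# Hypothetical contract for an "orders" dataset: name -> (required, description)
ORDERS_FIELDS = {
    "order_id": (True, "Unique order identifier"),
    "unit_price": (True, "Price per unit in store currency"),
    "quantity": (True, "Number of units ordered"),
    "promo_code": (False, "Promotion applied at checkout, if any"),
}


def lint_contract(fields):
    """Check the contract itself before publishing it."""
    problems = []
    for name, (_required, description) in fields.items():
        if not SNAKE_CASE.match(name):
            problems.append(f"{name}: not snake_case")
        if not description:
            problems.append(f"{name}: missing description")
    return problems


def check_required(fields, record):
    """Return required fields that are absent or None in a record."""
    return [
        name for name, (required, _) in fields.items()
        if required and record.get(name) is None
    ]


print(lint_contract(ORDERS_FIELDS))  # []
print(check_required(ORDERS_FIELDS, {"order_id": "A1", "unit_price": 3.0}))  # ['quantity']
```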

Managing schema evolution without disruption

As systems evolve, data structures need to change. This is where schema management in data pipelines becomes closely linked to contracts. Instead of allowing silent changes, contracts introduce a controlled way of managing evolution.

This approach helps keep improvements from creating unintended consequences. Changes become visible, and downstream teams have time to adjust before those changes reach production environments.
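One common way to make evolution controlled rather than silent is to classify each schema change and bump a version number accordingly. The sketch below uses one possible policy, written from the consumer's point of view. Removing a field, changing a type, or making a required field optional is treated as breaking. Adding a field is treated as additive. The version bump follows semantic-versioning style. The policy, the schema shape, and both functions are assumptions for illustration.

```python
# Each schema maps field name -> (type name, required?)
Schema = dict[str, tuple[str, bool]]


def classify_change(old: Schema, new: Schema) -> str:
    """Classify a schema change from the consumer's point of view."""
    common = old.keys() & new.keys()
    removed = old.keys() - new.keys()
    added = new.keys() - old.keys()
    retyped = {n for n in common if old[n][0] != new[n][0]}
    # A required field becoming optional means consumers may now see missing values
    relaxed = {n for n in common if old[n][1] and not new[n][1]}
    if removed or retyped or relaxed:
        return "breaking"
    if added:
        return "additive"
    return "none"


def next_version(version: str, change: str) -> str:
    """Semantic-versioning style bump: breaking -> major, additive -> minor."""
    major, minor, _patch = (int(p) for p in version.split("."))
    if change == "breaking":
        return f"{major + 1}.0.0"
    if change == "additive":
        return f"{major}.{minor + 1}.0"
    return version


v1 = {"order_id": ("str", True), "unit_price": ("float", True)}
v2 = {**v1, "discount_price": ("float", False)}                  # new optional field
v3 = {"order_id": ("str", True), "unit_price": ("str", True)}    # type change

print(classify_change(v1, v2), next_version("1.0.0", classify_change(v1, v2)))  # additive 1.1.0
print(classify_change(v1, v3), next_version("1.0.0", classify_change(v1, v3)))  # breaking 2.0.0
```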

Supporting analytics teams with stable inputs

Analytics teams depend on consistent data to produce reliable insights. When upstream data changes unexpectedly, it disrupts reporting workflows and creates additional effort.

This is where data contracts in analytics teams play a practical role in improving AI data analytics outcomes. They reduce the frequency of unexpected changes and provide a stable foundation for analysis.

Instead of repeatedly validating data, analysts can focus on interpreting results. Reports become more stable, and decision-making becomes faster.
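On the consumer side, an analytics job can record the contract version it was built against and refuse to refresh when the published version is not compatible. This sketch assumes contracts carry semantic version numbers in which only a major-version change signals a breaking change. The article does not prescribe a versioning scheme, and `PINNED_CONTRACT` and `run_revenue_report` are hypothetical names.

```python
def parse(version: str) -> tuple[int, int, int]:
    major, minor, patch = (int(p) for p in version.split("."))
    return major, minor, patch


def is_compatible(pinned: str, published: str) -> bool:
    """True if a report built against `pinned` can safely read `published`:
    same major version, and not older than what the report was built for."""
    p, q = parse(pinned), parse(published)
    return q[0] == p[0] and q >= p


PINNED_CONTRACT = "1.2.0"  # hypothetical version the revenue report was built against


def run_revenue_report(published_version: str, rows: list[dict]) -> float:
    # Stop before producing numbers from data whose contract changed incompatibly
    if not is_compatible(PINNED_CONTRACT, published_version):
        raise RuntimeError(
            f"orders contract {published_version} is not compatible with "
            f"{PINNED_CONTRACT}; review the change before refreshing the report"
        )
    return sum(r["unit_price"] * r["quantity"] for r in rows)


print(is_compatible("1.2.0", "1.3.0"))  # True
print(is_compatible("1.2.0", "2.0.0"))  # False
```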

Maintaining flexibility while enforcing structure

Contracts introduce structure, yet they should not limit necessary change. Teams still need the ability to evolve their systems as requirements change.

Some organizations handle this by adding validation checks within pipelines. Others rely on versioning strategies that allow gradual transitions. The exact approach varies, but the principle remains the same.

Contracts should make change predictable rather than restrictive.
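As an example of the validation-check approach, a pipeline step can split incoming rows into those that meet the contract and those held back for review, instead of failing the whole run or loading bad data silently. This is a minimal sketch. The rules, rule names, and the `validation_gate` function are hypothetical, and quarantining is only one of several possible responses to a violation.

```python
from typing import Any, Callable

Rule = Callable[[dict[str, Any]], bool]

# Hypothetical quality rules for an "orders" dataset
ORDER_RULES: dict[str, Rule] = {
    "order_id is present": lambda r: bool(r.get("order_id")),
    "unit_price is a non-negative number": lambda r: (
        isinstance(r.get("unit_price"), (int, float)) and r["unit_price"] >= 0
    ),
    "quantity is a positive integer": lambda r: (
        isinstance(r.get("quantity"), int) and r["quantity"] > 0
    ),
}


def validation_gate(rows, rules: dict[str, Rule] = ORDER_RULES):
    """Split rows into ones that meet the contract and ones held back for review."""
    valid, quarantined = [], []
    for row in rows:
        failed = [name for name, rule in rules.items() if not rule(row)]
        if failed:
            quarantined.append((row, failed))
        else:
            valid.append(row)
    return valid, quarantined


rows = [
    {"order_id": "A1", "unit_price": 10.0, "quantity": 2},
    {"order_id": "", "unit_price": -1, "quantity": 2},
]
valid, quarantined = validation_gate(rows)
print(len(valid), len(quarantined))  # 1 1
print(quarantined[0][1])  # ['order_id is present', 'unit_price is a non-negative number']
```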

How do data contracts improve enterprise data reliability over time?

The impact of data contracts becomes more noticeable as teams begin to rely on them consistently. This does not happen immediately; it usually develops through repeated use.

At first, teams notice fewer unexpected issues. Then they begin to trust their data more. Over time, this trust changes how decisions are made across the organization.

| Before Contracts | After Contracts |
| --- | --- |
| Data issues appear frequently | Pipelines behave more predictably |
| Teams question outputs often | Confidence in data improves |
| Resolution takes longer | Issues are understood faster |

This shift affects more than technical workflows. It influences how teams plan and act. When data feels reliable, teams move with greater confidence and less hesitation.

This is where enterprise data reliability becomes part of everyday operations rather than a separate goal. Reliable data supports decision-making without constant validation, which allows teams to focus on outcomes rather than troubleshooting.

What does this look like in a real organization?

To make this more concrete, consider a retail company that relies on multiple data sources for inventory and sales reporting. The engineering team updates a dataset to include a new pricing field. The change improves internal reporting, but it is not clearly communicated to the analytics team.

The analytics team continues using the dataset with the previous assumptions. Reports begin showing unexpected variations in revenue calculations. No alerts are triggered because the pipeline is still functioning.

Without data contracts, the issue takes time to identify. Teams need to trace the change manually and adjust their logic accordingly.

With data contracts, the change would follow a defined process. The new field would be introduced with clear documentation, and downstream teams would be aware of the update before it affects reporting.

This example highlights how small changes can create larger impacts when expectations are not aligned. It also shows how contracts reduce that risk by making changes visible.
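In the retail scenario, one lightweight way to enforce the "defined process" is a publish step that refuses data containing fields the contract does not yet document. The producer then has to describe the new pricing field, which gives downstream teams something to review, before it ships. The `sales_contract`, the `promo_price` field, and both functions are hypothetical illustrations, not a specific tool's behavior.

```python
# Hypothetical contract for the retail "sales" dataset: field name -> description
sales_contract = {
    "order_id": "Unique order identifier",
    "unit_price": "List price per unit",
    "quantity": "Units sold",
}


def undeclared_fields(contract: dict[str, str], rows: list[dict]) -> set[str]:
    """Fields that appear in the data but are not documented in the contract."""
    seen: set[str] = set()
    for row in rows:
        seen.update(row.keys())
    return {name for name in seen if not contract.get(name)}


def publish(contract: dict[str, str], rows: list[dict]) -> list[dict]:
    missing = undeclared_fields(contract, rows)
    if missing:
        raise ValueError(f"Document these fields in the contract first: {sorted(missing)}")
    return rows  # a real pipeline would write to the shared table here


new_rows = [{"order_id": "A1", "unit_price": 20.0, "quantity": 2, "promo_price": 15.0}]
print(undeclared_fields(sales_contract, new_rows))  # {'promo_price'}

# Documenting the new field is what allows the publish step to succeed
sales_contract["promo_price"] = "Discounted unit price when a promotion applies"
publish(sales_contract, new_rows)
```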

Why are data contracts becoming a standard practice?

As organizations scale their data systems, the number of interactions between teams increases. Each interaction introduces the possibility of misalignment. Without a structured way to manage expectations, these interactions become harder to control.

This is why data contracts are increasingly seen as part of modern data architecture. They provide a way to manage complexity without slowing down development.

They also support collaboration between teams. When expectations are shared, teams can work more independently without creating unexpected dependencies.

Over time, this approach becomes part of how systems are designed. Contracts are no longer added later. They are considered from the beginning.

FAQs

What are data contracts in simple terms?

They are agreements that define how data should look and behave between teams.

Why does data break between teams?

It usually breaks because expectations are not clearly defined or communicated.

How do data contracts improve reliability?

They reduce ambiguity and make changes predictable, which improves trust in data.

Where are data contracts used in analytics teams?

They are used to ensure that upstream data remains consistent for reporting and analysis.

How do data contracts relate to schema management?

They help control how schemas change so that pipelines remain stable.