Introduction

Deploying agentic AI for customer engagement is a fundamentally different challenge from adding a chatbot or enabling a single AI feature. Where conversational AI responds to prompts, agentic systems act autonomously — orchestrating multi-step workflows across live CRM, ERP, and support data while coordinating LLMs, workflow engines, escalation logic, and governance controls simultaneously.

When implementation is done poorly, the consequences are severe. Fragmented data pipelines produce inconsistent agent decisions. Uncontrolled agent actions violate policies or regulatory requirements. Poor escalation handoffs leave customers stranded between automation and human support. These failures erode customer trust quickly, and they put the project itself at risk: Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls.

This guide covers each deployment stage — from readiness assessment and prerequisites through step-by-step rollout, validation, and common failure points. It's written for enterprise technology leaders and CX heads planning a production-grade implementation.

TL;DR

- Agentic AI autonomously orchestrates multi-step customer workflows end-to-end—well beyond what chatbots can do

- Three preconditions matter: clean data, integration-ready CRM/ERP/support platforms, and a tightly scoped pilot use case

- Use a phased roadmap: narrow pilot first, validate thoroughly, then scale deliberately

- Track resolution time, escalation rate, first-contact resolution, and CSAT from day one

- Most failures trace back to underestimating data and organizational readiness before go-live

Before You Begin: Prerequisites for Agentic AI Implementation

Agentic AI is only as effective as the data and systems it can access. Before implementation begins, four elements must be in place: use case clarity, data readiness, system integration capability, and a governance and escalation framework.

Use Case Clarity and Business Objective Alignment

Starting without a defined, scoped use case is the most common reason pilots fail. The best initial use cases are high-volume, predictable workflows where the AI agent can handle 70% or more of interactions end-to-end without human input. Examples include:

- Order status inquiries and tracking

- Billing and payment support

- Returns and refund processing

- Appointment and field-service scheduling

- Basic account updates (address, phone, password resets)

Define success metrics in advance: target resolution time, acceptable escalation ceiling, and minimum CSAT threshold. Avoid complex, judgment-heavy interactions for your first deployment—save those for later phases once the foundation is proven.
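One way to make these success metrics concrete is to write them down as explicit thresholds before the pilot starts. The sketch below is a minimal illustration: the `PilotTargets` class, its field names, the 1-5 CSAT scale, and every numeric value are hypothetical placeholders, not recommended targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotTargets:
    """Hypothetical success thresholds agreed before launch."""
    max_resolution_minutes: float  # target resolution time
    max_escalation_rate: float     # acceptable escalation ceiling, as a share (0-1)
    min_csat: float                # minimum CSAT threshold (1-5 scale assumed)


def unmet_targets(targets: PilotTargets, resolution_minutes: float,
                  escalation_rate: float, csat: float) -> list[str]:
    """Return the names of the targets that the observed results missed."""
    misses = []
    if resolution_minutes > targets.max_resolution_minutes:
        misses.append("resolution_time")
    if escalation_rate > targets.max_escalation_rate:
        misses.append("escalation_rate")
    if csat < targets.min_csat:
        misses.append("csat")
    return misses


# Placeholder values for illustration only.
targets = PilotTargets(max_resolution_minutes=5.0, max_escalation_rate=0.30, min_csat=4.2)
print(unmet_targets(targets, resolution_minutes=4.1, escalation_rate=0.35, csat=4.4))
# prints ['escalation_rate']
```

Keeping the thresholds in one immutable object makes it harder to quietly move the goalposts once pilot data starts arriving.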

Data Readiness Assessment

Agentic AI requires real-time or near-real-time access to structured data from CRM, order history, product catalogs, and ERP records. Over time, it also needs unstructured data like past chat transcripts and support tickets. Gartner forecasts that organizations will abandon 60% of AI projects unsupported by AI-ready data through 2026.

Data readiness checklist:

- Can the AI agent query complete customer history?

- Does your CRM expose APIs for real-time access?

- Are product and order records in a consistent, queryable format?

- Are records normalized across systems, or are there conflicting data sources?

Incomplete or inconsistent data produces unreliable agent decisions. An IBM case study found that an AI scheduling tool fed with inaccurate shift data had an 84% human override rate—a near-total failure of the autonomous system.
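Part of the checklist above can be automated with a simple completeness report over a sample of customer records. This is a rough sketch under stated assumptions: the field names in `REQUIRED_FIELDS` and the sample records are invented for illustration, and a real assessment would read from your own CRM export.

```python
REQUIRED_FIELDS = ("customer_id", "email", "last_order_id")  # assumed field names


def completeness_report(records: list[dict]) -> dict[str, float]:
    """Share of records (0-1) that have a non-empty value for each required field."""
    total = len(records)
    report = {}
    for field_name in REQUIRED_FIELDS:
        filled = sum(1 for r in records if r.get(field_name) not in (None, ""))
        report[field_name] = filled / total if total else 0.0
    return report


# Invented sample data: two of the four records have a gap.
sample = [
    {"customer_id": "C1", "email": "a@example.com", "last_order_id": "O9"},
    {"customer_id": "C2", "email": "", "last_order_id": "O3"},
    {"customer_id": "C3", "email": "c@example.com", "last_order_id": None},
    {"customer_id": "C4", "email": "d@example.com", "last_order_id": "O7"},
]
print(completeness_report(sample))
# prints {'customer_id': 1.0, 'email': 0.75, 'last_order_id': 0.75}
```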

System Integration Readiness

The AI agent functions as an orchestration layer, requiring live API connections to CRM, ERP, helpdesk, communication channels, and knowledge bases. Assess whether these systems are API-accessible or whether integration work must come first.

Existing integration infrastructure is a meaningful accelerator. Teams that build on an established integration foundation (Cygnet.One, for example, has completed 250+ ERP integrations across 35 countries and sectors including BFSI) can connect an AI orchestration layer significantly faster, with lower technical risk. Organizations without that foundation should plan additional time for integration groundwork before the AI layer goes live.

Governance and Escalation Framework

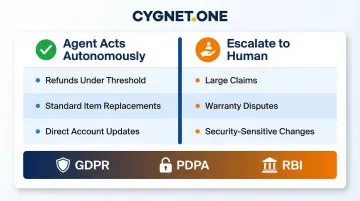

Before any agent goes live, define what the AI can and cannot do autonomously. Examples:

- Process refunds under ₹50,000 autonomously, but route larger claims to specialists

- Initiate replacements for standard items, but escalate warranty disputes

- Update account information directly, but require human review for security-sensitive changes

These autonomy boundaries determine where the agent acts and where a human takes over — skipping this step is a primary cause of compliance failures post-launch. Compliance requirements including data residency rules, audit trails, role-based access controls, and sector-specific regulations (GDPR, PDPA, RBI guidelines for BFSI) must be built into the design, not retrofitted after deployment. A 2026 Deloitte report found that only one in five companies has a mature governance model for autonomous AI agents.
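The example boundaries above can be expressed as an explicit decision function that the agent consults before acting. This is a minimal sketch, not a reference design: the `Request` fields and the request-type labels are hypothetical, and treating a refund of exactly ₹50,000 as an escalation is an assumption, since the example only says "under ₹50,000".

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "autonomous"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Request:
    kind: str                        # "refund", "replacement", or "account_update" (assumed labels)
    amount_inr: float = 0.0
    is_warranty_dispute: bool = False
    is_security_sensitive: bool = False


REFUND_LIMIT_INR = 50_000  # threshold taken from the refund example in the text


def decide(request: Request) -> Decision:
    """Apply the autonomy boundaries; anything unrecognized goes to a human."""
    if request.kind == "refund":
        within_limit = request.amount_inr < REFUND_LIMIT_INR
        return Decision.AUTONOMOUS if within_limit else Decision.ESCALATE
    if request.kind == "replacement":
        return Decision.ESCALATE if request.is_warranty_dispute else Decision.AUTONOMOUS
    if request.kind == "account_update":
        return Decision.ESCALATE if request.is_security_sensitive else Decision.AUTONOMOUS
    # Default-deny: unknown request types are never handled autonomously.
    return Decision.ESCALATE


print(decide(Request(kind="refund", amount_inr=12_000)))   # Decision.AUTONOMOUS
print(decide(Request(kind="refund", amount_inr=75_000)))   # Decision.ESCALATE
```

The default-deny branch is the important design choice here: a request type nobody planned for ends up with a human rather than with an improvised agent action.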

Step-by-Step Implementation Roadmap for Agentic AI

A production-ready agentic AI deployment typically takes 4–6 months for an initial use case, with scaling across subsequent quarters. Implementation follows a defined sequence — skipping pilots or rushing integration testing creates failures that cost far more to unwind than to prevent.

Phase 1: Scope and Architecture Design

Finalize the use case, define agent architecture (single-agent or multi-agent), select the LLM backbone, and map which systems the agent will access and what actions it will be permitted to take. Decisions made here constrain everything downstream.

Key outputs:

- Defined autonomy boundaries and escalation thresholds

- System integration map (which APIs, which data sources)

- Success metrics and KPI targets

- Risk assessment and compliance checklist

Phase 2: Data Consolidation and Pipeline Setup

This phase carries the most critical work: consolidating fragmented data sources, establishing API connections to CRM, ERP, and support platforms, and building data pipelines that feed the agent reliably. Structured data should be stabilized before adding unstructured sources like chat transcripts.

The 2025 MuleSoft Connectivity Benchmark Report finds that the average enterprise manages approximately 897 applications, with only 29% integrated. This fragmentation helps explain why 80% of IT leaders cite data integration as the single most significant obstacle to AI success.

Phase 3: Agent Training and Knowledge Base Configuration

Configure the agent's knowledge base (product information, policies, FAQs, resolution workflows), train it on historical interaction data, and define conversational flows. The agent should be trained on how customers actually phrase requests—not idealized language—using real support transcripts.

Include edge cases identified by frontline agents who understand the unusual phrasings and policy grey areas that no AI architect will anticipate from a requirements document alone.

Phase 4: Controlled Pilot Deployment

The pilot is a narrow, boundary-defined test: one channel, one use case, real but limited traffic. Define what "success" looks like before launch—target resolution rate, acceptable escalation ceiling, CSAT floor. Monitor intensively during this phase.

A 2024 Zendesk survey found that organizations using a staged migration path saw a 17% increase in customer satisfaction compared to big-bang approaches.
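"One channel, one use case, real but limited traffic" can be enforced with a small routing gate in front of the agent. The following is a sketch under assumptions: the channel name, the use-case label, and the 10% share are placeholders, and hashing the customer ID is just one common way to keep each customer's assignment stable across sessions.

```python
import hashlib

PILOT_CHANNEL = "web_chat"       # assumed channel name
PILOT_USE_CASE = "order_status"  # assumed use-case label
PILOT_TRAFFIC_PERCENT = 10       # placeholder share of eligible traffic


def in_pilot(customer_id: str, channel: str, use_case: str) -> bool:
    """Route a stable slice of eligible traffic to the AI agent."""
    if channel != PILOT_CHANNEL or use_case != PILOT_USE_CASE:
        return False
    # Hash the customer ID so the same customer always lands in the same bucket.
    digest = hashlib.sha256(customer_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < PILOT_TRAFFIC_PERCENT


# Out-of-scope traffic never reaches the agent, whatever the customer ID.
print(in_pilot("C-1001", channel="email", use_case="order_status"))  # False
```

Raising `PILOT_TRAFFIC_PERCENT` later widens the pilot without reshuffling customers who were already in it, because existing buckets stay below the new cutoff.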

Phase 5: Integration Testing and Edge Case Simulation

Before scaling, stress-test the agent against edge cases: CRM outages, incomplete customer records, unusual request phrasing, and peak load scenarios. Verify that the agent gracefully degrades—queues or escalates—rather than failing hard when systems are unavailable.

Test failure scenarios explicitly in staging before any scaling event. This is where you identify and fix integration weaknesses that would otherwise surface in production.

Phase 6: Phased Expansion and Continuous Learning Configuration

Scale responsibly: expand use cases one at a time using the same integration infrastructure. Enable continuous learning—configure feedback loops so the agent improves from resolved interactions and escalation patterns.

McKinsey reports that 23% of organizations are already scaling an agentic AI system in at least one business function. Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025.

Post-Go-Live: Validation and Performance Monitoring

Agentic systems can appear to work while silently making poor decisions. Systematic monitoring is essential, not optional.

Core Metrics to Track

Monitor these primary performance indicators from day one:

- First-Contact Resolution (FCR) rate: shows effectiveness. Forrester TEI studies report 20% improvements in FCR for successful deployments.

- Average Handle Time (AHT): reveals efficiency. Case studies show 40-60% reductions in AHT.

- Escalation rate: indicates gaps in agent capability or data access. Track by request type and time of day.

- CSAT/NPS delta: measures customer experience impact versus pre-deployment baseline.

- Cost-per-interaction: quantifies financial efficiency gains.

Together, these indicators give you a layered view of where the system is delivering — and where it needs work.
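As a rough illustration of how these indicators can be computed from raw interaction logs, the sketch below aggregates a list of records into the five metrics. The `Interaction` fields, the 1-5 CSAT scale, and the sample values are assumptions made for the example, not a schema from any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    resolved_first_contact: bool
    escalated: bool
    handle_seconds: float
    csat: float   # 1-5 scale assumed
    cost: float   # cost attributed to this interaction, in any one currency


def summarize(interactions: list[Interaction], baseline_csat: float) -> dict[str, float]:
    """Aggregate interaction records into the core post-go-live metrics."""
    n = len(interactions)
    if n == 0:
        raise ValueError("no interactions to summarize")
    return {
        "fcr_rate": sum(i.resolved_first_contact for i in interactions) / n,
        "aht_seconds": sum(i.handle_seconds for i in interactions) / n,
        "escalation_rate": sum(i.escalated for i in interactions) / n,
        "csat_delta": sum(i.csat for i in interactions) / n - baseline_csat,
        "cost_per_interaction": sum(i.cost for i in interactions) / n,
    }


# Invented sample: three first-contact resolutions and one escalation.
log = [
    Interaction(True, False, 120, 5, 1.0),
    Interaction(True, False, 180, 4, 1.0),
    Interaction(False, True, 300, 3, 4.0),
    Interaction(True, False, 200, 4, 2.0),
]
print(summarize(log, baseline_csat=3.8))
```

The `baseline_csat` argument is the pre-deployment figure; without a baseline captured before launch, the delta cannot be computed at all.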

Human Oversight and Audit Reviews

Plan for regular human review of agent-resolved interactions throughout the first 60–90 days — this is where silent failure patterns surface. Reviewers should look for:

- Incorrect decisions that were not escalated

- Policy violations

- Interaction patterns indicating the agent consistently misunderstands a request type

Failures caught here inform retraining before they compound.
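A reproducible random sample is one simple way to pick which agent-resolved interactions get human review. The sketch below assumes a flat list of interaction IDs and a hypothetical 5% review rate; the function name and parameters are illustrative only.

```python
import random


def sample_for_review(interaction_ids: list[str], rate: float, seed: int) -> list[str]:
    """Pick a reproducible random sample of agent-resolved interactions for human review."""
    if not 0 < rate <= 1:
        raise ValueError("rate must be in (0, 1]")
    if not interaction_ids:
        return []
    # Always review at least one interaction, even for very small batches.
    k = max(1, round(len(interaction_ids) * rate))
    rng = random.Random(seed)  # a fixed seed lets auditors reproduce the same sample
    return rng.sample(interaction_ids, k)


resolved_ids = [f"INT-{i:04d}" for i in range(100)]
review_batch = sample_for_review(resolved_ids, rate=0.05, seed=7)
print(len(review_batch))  # 5
```

Sampling at random, rather than reviewing only interactions that customers complained about, is what gives unescalated mistakes a chance of being seen.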

Iterating Based on Real-World Patterns

If customers phrase a common request in ways the agent doesn't recognize, broaden language coverage. If escalation spikes at specific times or for specific request types, investigate whether the agent needs additional data access or updated decision rules.

Treat iteration as an ongoing discipline. Set a weekly review cadence for the first month, then shift to every two weeks: analyze patterns, adjust configurations, and close gaps before they affect customer experience at scale.
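To spot escalation spikes by request type and time of day, the review can start from a simple grouped rate. This sketch assumes each log event is a `(request_type, hour_of_day, escalated)` tuple; the threshold and minimum-volume values in the usage example are arbitrary placeholders.

```python
from collections import defaultdict


def escalation_hotspots(events, threshold: float, min_volume: int) -> dict:
    """Find (request_type, hour) segments with an unusually high escalation rate.

    events: iterable of (request_type, hour_of_day, escalated) tuples.
    Returns {(request_type, hour): escalation_rate} for segments whose rate
    exceeds `threshold` and that contain at least `min_volume` interactions.
    """
    totals = defaultdict(int)
    escalations = defaultdict(int)
    for request_type, hour, escalated in events:
        key = (request_type, hour)
        totals[key] += 1
        escalations[key] += int(escalated)
    return {
        key: escalations[key] / totals[key]
        for key in totals
        if totals[key] >= min_volume and escalations[key] / totals[key] > threshold
    }


# Invented events: billing requests at 09:00 escalate three times out of four.
events = (
    [("billing", 9, True)] * 3
    + [("billing", 9, False)]
    + [("order_status", 9, False)] * 4
    + [("billing", 22, True)]   # a single event, below the volume floor
)
print(escalation_hotspots(events, threshold=0.5, min_volume=3))
# prints {('billing', 9): 0.75}
```

The `min_volume` floor keeps a single late-night escalation from being reported as a 100% spike.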

Common Implementation Pitfalls and How to Fix Them

Pitfall 1: Fragmented Data Producing Inconsistent Agent Decisions

Problem: The agent gives different answers to similar queries because it's drawing from inconsistent or siloed data sources.

Likely cause: Data consolidation was treated as a post-deployment task rather than a prerequisite. Multiple sources (CRM, ERP, legacy databases) were not normalized before the agent went live.

Fix: Pause the agent on the affected query type, conduct a data audit, normalize records across sources, and reconnect the pipeline before re-enabling. Establish a data quality monitoring dashboard going forward.
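A data audit of this kind often starts by listing the records on which two sources disagree. The sketch below compares CRM and ERP records keyed by customer ID after a deliberately minimal normalization (trim and lowercase); the field names and sample data are invented, and real normalization rules for addresses, phone numbers, and similar fields would need to be richer.

```python
def normalize(value) -> str:
    """Minimal normalization: trim whitespace and ignore case."""
    return str(value).strip().lower()


def find_conflicts(crm: dict[str, dict], erp: dict[str, dict],
                   fields: tuple[str, ...]) -> list[tuple[str, str]]:
    """Return (customer_id, field) pairs where the two sources disagree after normalization."""
    conflicts = []
    for customer_id in sorted(crm.keys() & erp.keys()):
        for field_name in fields:
            a = crm[customer_id].get(field_name)
            b = erp[customer_id].get(field_name)
            if a is None or b is None:
                continue  # missing values are a completeness issue, not a conflict
            if normalize(a) != normalize(b):
                conflicts.append((customer_id, field_name))
    return conflicts


# Invented records: C1 differs only in casing and spacing, C2 has a real conflict.
crm_records = {
    "C1": {"email": "A@Example.com ", "city": "Pune"},
    "C2": {"email": "b@example.com", "city": "Mumbai"},
}
erp_records = {
    "C1": {"email": "a@example.com", "city": "Pune"},
    "C2": {"email": "b@example.com", "city": "Delhi"},
    "C3": {"email": "c@example.com", "city": "Chennai"},
}
print(find_conflicts(crm_records, erp_records, fields=("email", "city")))
# prints [('C2', 'city')]
```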

Pitfall 2: Poorly Defined Escalation Boundaries Leading to Customer Frustration

The agent either escalates too aggressively, driving up human agent workload with no self-service benefit, or handles cases it shouldn't, resulting in policy violations or incorrect resolutions on complex issues. The root cause is usually escalation thresholds defined too broadly or too narrowly during design, without input from frontline agents who understand edge cases.

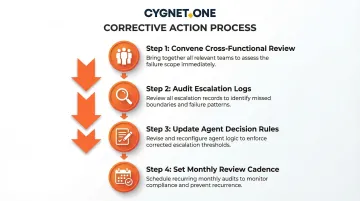

To fix this:

- Convene a cross-functional review (CX, operations, compliance) to audit real escalation logs from the pilot

- Identify cases handled incorrectly — both over-escalated and under-escalated

- Update the agent's decision rules with specific examples drawn directly from those cases

- Set a review cadence (monthly initially) to adjust thresholds as edge cases accumulate
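The audit steps above can be quantified once reviewers have labeled each pilot case. This is a minimal sketch that assumes every reviewed case is reduced to a pair of booleans: what the agent did, and what the reviewers decided it should have done. The sample counts are invented.

```python
from collections import Counter


def escalation_audit(reviewed) -> dict[str, float]:
    """Summarize a human review of pilot escalation decisions.

    reviewed: iterable of (agent_escalated, should_have_escalated) booleans.
    Returns the over-escalation rate (escalated, but reviewers judged it unnecessary)
    and the under-escalation rate (handled autonomously, but should have been
    escalated), each as a share of all reviewed cases.
    """
    counts = Counter(reviewed)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no reviewed cases")
    return {
        "over_escalated": counts[(True, False)] / total,
        "under_escalated": counts[(False, True)] / total,
    }


# Invented review of 20 cases.
reviewed_cases = (
    [(True, True)] * 5       # correctly escalated
    + [(True, False)] * 2    # over-escalated
    + [(False, False)] * 12  # correctly handled autonomously
    + [(False, True)] * 1    # under-escalated
)
print(escalation_audit(reviewed_cases))
# prints {'over_escalated': 0.1, 'under_escalated': 0.05}
```

Tracking the two rates separately matters because they pull thresholds in opposite directions: one points to rules that are too cautious, the other to rules that are too permissive.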

Pitfall 3: Integration Failures Under Load or During System Downtime

The agent fails completely or behaves erratically when a connected system (CRM, ERP) is temporarily unavailable or slow. This typically happens because integrations were tested only under normal conditions, with no fallback behavior defined for partial system unavailability.

The fix is graceful degradation logic. When a connected system goes down, the agent should:

- Queue the interaction rather than proceeding with incomplete data

- Notify the customer of a delay with a clear expected timeframe

- Route the case to a human agent automatically

Test failure scenarios explicitly in staging before go-live or capacity scaling.
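The three degradation behaviors listed above can be sketched as a single handler wrapped around the backend call. Everything named here is hypothetical: `SystemUnavailable`, `DegradationHandler`, the 30-minute timeframe, and the two stand-in connector functions exist only to make the example runnable in staging-style tests.

```python
from collections import deque
from dataclasses import dataclass, field


class SystemUnavailable(Exception):
    """Raised by a (hypothetical) connector when a backend is down or too slow."""


@dataclass
class DegradationHandler:
    expected_delay_minutes: int = 30                 # placeholder timeframe
    pending: deque = field(default_factory=deque)    # interactions awaiting retry
    human_queue: list = field(default_factory=list)  # cases handed to human agents

    def handle(self, interaction_id: str, fetch_customer) -> str:
        """Try the normal path; on backend failure queue, notify, and hand off."""
        try:
            customer = fetch_customer(interaction_id)
        except SystemUnavailable:
            self.pending.append(interaction_id)      # queue instead of acting on partial data
            self.human_queue.append(interaction_id)  # route the case to a human agent
            # Customer-facing notice with a clear expected timeframe.
            return ("We're experiencing a delay. A team member will follow up "
                    f"within {self.expected_delay_minutes} minutes.")
        return f"Resolved for {customer['name']}"


def crm_up(interaction_id: str) -> dict:
    """Stand-in for a healthy CRM lookup."""
    return {"name": "Asha"}


def crm_down(interaction_id: str) -> dict:
    """Stand-in for a CRM outage."""
    raise SystemUnavailable("CRM timeout")


handler = DegradationHandler()
print(handler.handle("INT-1", crm_up))    # Resolved for Asha
print(handler.handle("INT-2", crm_down))  # delay notice; INT-2 is queued and handed off
```

Passing the connector in as a function makes it straightforward to inject failures in staging, which is where this path should be exercised.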

Pro Tips for a Successful Agentic AI Rollout

Three principles consistently separate successful rollouts from stalled ones:

Start with the clearest data trail, not the highest-volume problem. Data richness matters more than transaction count for the first deployment. A well-documented use case with clean historical data will outperform a high-volume use case built on fragmented records.

Bring frontline agents in early. They know the edge cases, unusual phrasings, and policy grey areas that no AI architect will catch from a requirements document. That institutional knowledge directly sharpens training data quality and escalation protocols — two areas where gaps surface fastest in production.

Document as a living practice, not a one-time task. Record agent decision boundaries, integration configurations, training data sources, and escalation protocols from day one. Update that documentation with every iteration cycle — it speeds up debugging, satisfies audit requirements, and keeps the team aligned when people change roles.

Frequently Asked Questions

How is agentic AI different from a traditional chatbot or conversational AI?

Agentic AI autonomously executes multi-step workflows across multiple systems (CRM, ERP, support platforms) to complete tasks end-to-end. Traditional chatbots follow scripted decision trees, and conversational AI generates responses without taking action. Agentic systems perceive intent, make decisions, and act—without human prompting at each stage.

What level of data readiness is needed before implementing agentic AI for customer engagement?

The agent needs reliable API access to core systems (CRM, order data, product catalog) with normalized, consistent records. Structured data must be stable first—unstructured sources like chat transcripts can be added progressively as the deployment matures. Without this foundation, agent decisions will be unreliable.

How long does a typical agentic AI implementation take for customer engagement?

A scoped pilot use case typically takes 3–6 months from readiness assessment to live deployment. Scaling across additional use cases extends over subsequent quarters, depending on data complexity and integration depth.

Can agentic AI be deployed without replacing existing CRM or ERP systems?

Yes. Agentic AI operates as an orchestration layer on top of existing systems via APIs—it does not require replacing infrastructure. Integration work is required, but existing technology investments are preserved. Enterprises with mature API integration already in place typically move from pilot to production faster.

What are the biggest compliance and security risks in agentic AI customer engagement?

The three primary risks are unauthorized data access (if role-based controls are misconfigured), regulatory non-compliance (if decisions lack explainable audit trails), and data residency violations (if customer data flows to non-compliant environments). All three must be resolved at the architecture stage—retrofitting controls post-deployment is significantly more costly.

How do you measure ROI from agentic AI in customer engagement?

Measure against a pre-deployment baseline across four metrics:

- Average handle time reduction

- First-contact resolution rate improvement

- Cost-per-interaction decrease

- CSAT score improvement

Forrester TEI research reports 396% ROI for successful agentic AI deployments, with gains visible across all four metrics within the first year.
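For reference, ROI in this sense is net benefit divided by cost, expressed as a percentage. The sketch below shows the arithmetic with made-up figures; the benefit and cost numbers are purely illustrative and are not drawn from the Forrester research or any real deployment.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """ROI as a percentage: (benefit - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) * 100 / total_cost


# Hypothetical first-year figures, for illustration only.
annual_benefit = 900_000.0  # e.g. savings from lower handle time and cost-per-interaction
annual_cost = 300_000.0     # e.g. licences, integration work, and human oversight
print(roi_percent(annual_benefit, annual_cost))  # 200.0
```

An ROI of 200% therefore means the deployment returned three times its cost in total benefit, which is why the pre-deployment baseline for each metric has to be captured before launch.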