Introduction

B2B sales organizations are drowning in manual prospecting, inconsistent follow-up, and data-blind decision-making. Sales reps spend 27% of their time dealing with bad data instead of selling. Meanwhile, contact information decays at 22.5% annually, turning CRM databases into graveyards of outdated leads. The promise of AI agents isn't incremental improvement—it's a fundamental shift in how revenue workflows operate, from lead identification through deal closure.

This transformation is already underway. Early adopters are deploying specialized AI agents that go beyond automating tasks: they make decisions, adapt strategies, and execute multi-step workflows with minimal human oversight. Yet the rush to deploy is outpacing the readiness to succeed. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, and many of those failures are avoidable.

What separates the implementations that deliver from those that stall comes down to three things: understanding what AI agents actually do, knowing which foundations must be in place before deployment, and recognizing the failure patterns early enough to sidestep them. That's what this guide covers.

TL;DR

- AI agents act as specialized, decision-making entities handling lead generation, qualification, outreach, and customer success with minimal oversight

- Successful deployment requires clean data, integrated tech stacks, and defined human-AI handoff points established upfront

- A phased approach (pilot → validate → scale) consistently outperforms full-funnel deployment

- Biggest failures stem from poor data quality, misaligned handoffs, and lack of MLOps governance

- AI agents free salespeople from low-value tasks, redirecting their focus to complex deals and relationship-driven decisions that require human judgment

What AI Agents Actually Do in B2B Sales

Beyond Traditional Automation

Traditional sales automation follows fixed if-then rules triggered by user actions—send an email when a lead downloads a whitepaper, create a task when a deal stage changes. AI agents are fundamentally different. They're goal-directed systems capable of multi-step reasoning, contextual awareness, and adaptive decision-making. They don't just respond to prompts—they plan sequences of actions, execute them across multiple systems, and adjust based on outcomes.

Roughly seven in ten sellers currently use general-purpose AI for tactical tasks like drafting emails or summarizing calls. The future state embeds agents directly into core sales workflows, where they operate with full contextual awareness across every deal, customer interaction, and pipeline stage.

Five Specialized Agent Types in B2B Sales

Based on BCG's enterprise deployment framework, successful B2B sales implementations typically deploy five interconnected agent types:

Orchestration Agents coordinate the entire system — breaking revenue goals into workflows, managing handoffs between specialized agents, and ensuring leads move to the right stage at the right time.

Lead Generation Agents score and prioritize targets using first-party behavioral signals (website visits, content downloads) alongside third-party intent data like funding announcements, technology stack changes, and hiring patterns. Targeting models update continuously based on conversion outcomes.

Qualification Agents map buying groups, identify decision-makers, and assess account fit in real time. They determine which leads warrant human attention and route the rest into autonomous nurture sequences.

Deal Conversion and Pricing Agents draft proposals, pull in inputs from legal and finance, and optimize pricing based on deal size, competitive context, and customer segment — cutting coordination delays that typically stall mid-funnel deals.

Customer Success Agents monitor product adoption, flag churn risk when usage drops, and surface expansion opportunities before they go cold.

How Agents Connect Across Your Tech Stack

Each agent draws from — and writes back to — the broader sales technology stack: CRM systems, intent data platforms, ERP systems, communication tools, and marketing automation. Organizations with mature integration infrastructure (such as those with 250+ ERP integrations already in place) are better positioned to feed agents accurate, real-time data across finance, operations, and customer touchpoints.

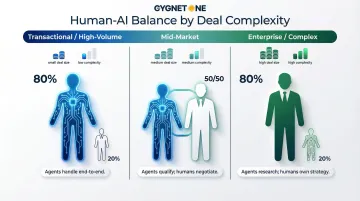

Human-AI Balance by Deal Complexity

The degree of agent autonomy should vary based on deal characteristics:

- High-volume, transactional deals: Agents operate autonomously from initial outreach through contract generation

- Mid-market accounts: Agents handle qualification and scheduling; humans lead discovery and negotiation

- Enterprise, complex sales: Agents provide research, competitive intelligence, and deal coordination; humans own strategy and relationship-building

Getting this balance right matters more than maximizing automation — over-automating enterprise deals erodes trust, while under-automating transactional ones wastes rep capacity on work agents can handle reliably.
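One way to make this balance explicit is to encode it as configuration that an orchestration layer can look up. The sketch below is illustrative only: the `Segment` and `AutonomyPolicy` names and the task labels are assumptions drawn from the three tiers above (the intermediate transactional steps are filled in as a guess), not a feature of any particular platform.

```python
from dataclasses import dataclass
from enum import Enum


class Segment(Enum):
    TRANSACTIONAL = "transactional"
    MID_MARKET = "mid_market"
    ENTERPRISE = "enterprise"


@dataclass(frozen=True)
class AutonomyPolicy:
    agent_tasks: tuple[str, ...]  # work the agent may do on its own
    human_tasks: tuple[str, ...]  # work that stays with a rep


# Hypothetical policy table mirroring the three tiers described above.
POLICIES = {
    Segment.TRANSACTIONAL: AutonomyPolicy(
        agent_tasks=("outreach", "qualification", "scheduling", "contract_generation"),
        human_tasks=(),
    ),
    Segment.MID_MARKET: AutonomyPolicy(
        agent_tasks=("qualification", "scheduling"),
        human_tasks=("discovery", "negotiation"),
    ),
    Segment.ENTERPRISE: AutonomyPolicy(
        agent_tasks=("research", "competitive_intelligence", "deal_coordination"),
        human_tasks=("strategy", "relationship_building"),
    ),
}


def agent_may_perform(segment: Segment, task: str) -> bool:
    """Return True only if the task is explicitly delegated to agents for this segment."""
    return task in POLICIES[segment].agent_tasks
```

Defaulting to "not allowed" for any task missing from the table keeps a new or misspelled task from being automated by accident.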

Before You Deploy: Prerequisites for AI Agent Implementation

Data Readiness Is Non-Negotiable

AI agents are only as reliable as the data they operate on. With B2B contact data decaying at 22-30% annually and the average CRM containing 90% incomplete contacts with 25%+ duplicates, launching agents on typical CRM infrastructure is a recipe for embarrassing failures.
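To see how quickly that decay compounds, here is a small sketch. It assumes a constant annual decay rate applied to a fixed set of records with no cleanup in between, which is a simplification; the function name is illustrative.

```python
def fraction_still_valid(annual_decay: float, years: float) -> float:
    """Share of contact records still accurate, assuming constant compounding decay."""
    if not 0.0 <= annual_decay <= 1.0:
        raise ValueError("annual_decay must be between 0 and 1")
    return (1.0 - annual_decay) ** years


# Compare the low and high ends of the 22-30% range quoted above.
for rate in (0.22, 0.30):
    for years in (1, 2, 3):
        share = fraction_still_valid(rate, years)
        print(f"decay {rate:.0%}, year {years}: {share:.1%} still valid")
```

Under that assumption, about 61% of records remain valid after two years at a 22% decay rate, and 49% at a 30% rate.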

Common data quality failures include:

- Agents prospecting existing customers due to outdated CRM records

- Duplicate outreach to the same contact across multiple records

- Misrouted leads caused by stale territory assignments

- Inaccurate lead scores based on incomplete engagement history

Required data foundation:

- Unified, clean CRM dataset with regular hygiene protocols

- Elimination of silos between marketing, sales, and finance systems

- Real-time data feeds (not batch updates from 24 hours ago)

- Automated validation rules that flag incomplete or suspicious records

Organizations that skip data cleanup before deployment consistently see the same failure modes: agents triggering duplicate outreach that prompts opt-outs, deals misrouted to the wrong rep, and reps who stop trusting the system and run manual processes in parallel — canceling out every efficiency gain.
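A pre-deployment audit for two of these failure modes, duplicates and incomplete records, might look like the sketch below. The record shape, the `REQUIRED_FIELDS` list, and matching duplicates on a normalized email address are all assumptions for illustration; real CRMs usually need fuzzier matching.

```python
from collections import defaultdict

REQUIRED_FIELDS = ("email", "company", "owner")  # assumed minimum for agent use


def normalize_email(email: str) -> str:
    """Trim whitespace and lowercase so trivially different entries compare equal."""
    return email.strip().lower()


def audit_contacts(records: list[dict]) -> dict:
    """Group record ids by problem so they can be fixed before agents act on them."""
    incomplete = []
    by_email = defaultdict(list)
    for rec in records:
        missing = [f for f in REQUIRED_FIELDS if not rec.get(f)]
        if missing:
            incomplete.append((rec["id"], missing))
        if rec.get("email"):
            by_email[normalize_email(rec["email"])].append(rec["id"])
    duplicates = {email: ids for email, ids in by_email.items() if len(ids) > 1}
    return {"incomplete": incomplete, "duplicates": duplicates}


sample = [
    {"id": 1, "email": "ana@example.com", "company": "Acme", "owner": "rep_a"},
    {"id": 2, "email": " Ana@Example.com ", "company": "Acme", "owner": ""},
    {"id": 3, "email": "", "company": "Globex", "owner": "rep_b"},
]
report = audit_contacts(sample)
print(report)
```

Here records 1 and 2 are reported as duplicates of each other, and records 2 and 3 are reported as incomplete.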

Tech Stack Integration Requirements

An agent that can't access real-time pricing, inventory, or customer financial data will make decisions based on stale context — and your buyers will notice. Before selecting an agent platform, confirm your stack can support live connectivity across:

- CRM systems (Salesforce, HubSpot, Microsoft Dynamics)

- Marketing automation (Marketo, Pardot)

- Intent data platforms (Bombora, 6sense, ZoomInfo)

- Pricing and CPQ systems

- ERP and financial systems

Pre-deployment integration audit:

Map your current technology stack and identify integration gaps before committing to an agent platform. Organizations with mature ERP integrations — established data pipelines, governance frameworks, and real-time connectivity — have a meaningful head start here.

Define Human-AI Handoff Rules

Ambiguity about when agents hand off to human reps creates broken buyer experiences. Define these boundaries before going live:

- Qualification thresholds: What signals trigger human involvement? (e.g., company size >500 employees, deal value >$50,000, competitive displacement scenario)

- Escalation triggers: When should agents immediately route to a human? (e.g., customer complaint, pricing exception request, technical question beyond agent knowledge)

- Ownership clarity: Which rep receives the handoff, and what context does the agent provide?

Run a 30-day pilot with a single rep segment to pressure-test escalation logic — particularly edge cases — before scaling to the full team.
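The handoff rules above can be written down as a small routing function, which also makes edge cases easy to test during the pilot. This is a minimal sketch: the thresholds reuse the example values from the list above, while the `Lead` fields and event names are hypothetical.

```python
from dataclasses import dataclass, field

# Example thresholds taken from the list above; tune them per organization.
MAX_AGENT_EMPLOYEES = 500
MAX_AGENT_DEAL_VALUE = 50_000
ESCALATION_EVENTS = {"customer_complaint", "pricing_exception", "out_of_scope_question"}


@dataclass
class Lead:
    company_size: int
    deal_value: float
    competitive_displacement: bool = False
    events: set[str] = field(default_factory=set)


def route(lead: Lead) -> tuple[str, list[str]]:
    """Return ("human" or "agent", reasons). Reasons double as handoff context for the rep."""
    # Escalation triggers take priority over qualification thresholds.
    urgent = sorted(lead.events & ESCALATION_EVENTS)
    if urgent:
        return "human", [f"escalation: {event}" for event in urgent]
    reasons = []
    if lead.company_size > MAX_AGENT_EMPLOYEES:
        reasons.append("company size above threshold")
    if lead.deal_value > MAX_AGENT_DEAL_VALUE:
        reasons.append("deal value above threshold")
    if lead.competitive_displacement:
        reasons.append("competitive displacement")
    return ("human", reasons) if reasons else ("agent", [])
```

Returning the reasons alongside the decision addresses the ownership question as well: the rep who receives the handoff sees why the agent let go.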

Assess Team Readiness and Change Management

Only 35% of sales professionals completely trust their organization's data accuracy. That distrust extends directly to AI outputs.

The result: reps double-check every recommendation, run legacy processes in parallel, or bypass the system entirely — and every efficiency gain disappears.

Change management requirements:

- Frame agents as deal support tools, not performance monitors

- Provide hands-on training showing agents improving outcomes

- Redesign roles and incentives to reward AI-augmented behaviors

- Address concerns about job security directly and honestly

BCG's 10/20/70 rule allocates 70% of implementation effort to people and processes, 20% to technology and data, and only 10% to algorithms. Organizations that invert this ratio consistently fail.

Compliance and Governance for Regulated Industries

In BFSI, healthcare, and government sectors, AI agents must operate within strict guardrails:

- Data privacy: GDPR, CCPA, and sector-specific regulations (HIPAA, FINRA)

- Communication compliance: Recording requirements, disclosure obligations

- Audit trails: Reconstructable logs of all agent decisions and actions

- Human oversight: Mandatory approval gates for high-risk actions

The EU AI Act requires human oversight for AI systems it classifies as high-risk. Organizations serving European markets should confirm whether their agent use cases fall into that category and, if so, build these controls from day one.

How to Implement AI Agents in B2B Sales: A Step-by-Step Guide

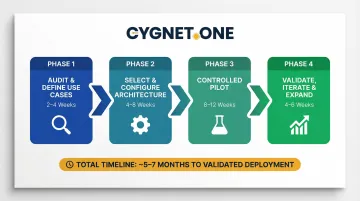

Most AI agent rollouts that fail do so at the same point: they move from pilot to full deployment before validating that the agent actually performs as expected in real conditions. A deliberate four-phase sequence prevents that. Here is how each phase works and what it requires.

Phase 1: Audit and Define Use Cases

Start by mapping where AI agents will actually add value — before selecting any platform or vendor.

- Map your current sales workflow from lead capture through renewal

- Identify high-friction, high-volume tasks where manual effort is greatest

- Prioritize use cases where:

  - Data is already structured and accessible

  - The cost of agent error is low

  - Success is measurable with clear metrics

- Start with outreach sequencing, lead scoring, or meeting scheduling — not complex negotiation

Plan for 2–4 weeks here. Rushing this phase typically means the wrong use cases get automated first.

Phase 2: Select and Configure Your Agent Architecture

Once use cases are defined, the build-vs-buy decision shapes everything downstream.

Build vs. Buy Decision:

- Buy off-the-shelf: Faster deployment, proven capabilities, lower upfront cost

- Build custom: Required for proprietary data models, unique workflows, or competitive differentiation

- Hybrid: Use platforms for orchestration and common functions; build custom agents for specialized needs

Once the build-vs-buy approach is set, configuration follows these steps:

- Connect agents to CRM, intent data sources, and communication tools

- Define goals and success metrics per agent type (for example, Lead Gen Agent target: 30% increase in qualified pipeline)

- Set escalation rules and human oversight thresholds

- Configure data validation rules to prevent acting on stale information

This phase typically runs 4–8 weeks, depending on integration complexity.

Phase 3: Run a Controlled Pilot

A controlled pilot tests agent performance in real conditions without exposing your full pipeline to early-stage errors. Define the pilot scope tightly:

- Deploy 1-2 agent types (typically Lead Generation + Qualification)

- Define a specific prospect segment (for example, mid-market manufacturing firms in India or Southeast Asia)

- Set a time window (8-12 weeks)

- Establish baseline metrics: response rates, conversion rates, time-to-engagement, pipeline value

Keep human oversight high during this phase. Review agent decisions daily for the first two weeks, then weekly.

Do not compress the 8–12 week window: patterns in agent behavior often emerge only after 6+ weeks of operation.

Phase 4: Validate, Iterate, and Expand

Before scaling, answer these questions honestly based on pilot data:

- Did agents meet or exceed baseline metrics?

- Where did they underperform, and why? (Data gaps? Misconfigured rules? Wrong escalation triggers?)

- What feedback did sales reps provide?

- What buyer experience issues emerged?

Iterate before scaling. Common adjustments include:

- Refining qualification criteria

- Adjusting handoff timing

- Expanding data sources

- Retraining agent models based on pilot results

Allow 4–6 weeks for analysis and iteration. Once validation confirms measurable value, that evidence becomes the foundation for scaling — both the business case for leadership and the configuration baseline for the next agent rollout.
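Adding up the four phase durations gives a rough end-to-end estimate. The sketch below assumes the phases run back to back with no overlap, which may not match every rollout.

```python
# (min_weeks, max_weeks) per phase, as stated in the phase descriptions above.
PHASES = {
    "audit and define use cases": (2, 4),
    "select and configure": (4, 8),
    "controlled pilot": (8, 12),
    "validate and iterate": (4, 6),
}

total_min = sum(low for low, _ in PHASES.values())
total_max = sum(high for _, high in PHASES.values())
print(f"End to end: {total_min}-{total_max} weeks")
```

Under that assumption, the path from audit to a validated pilot takes roughly 18 to 30 weeks.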

Validating Success and Scaling AI Agent Performance

Define Success Metrics

Track these key indicators post-deployment:

- Qualified pipeline volume: MQLs and SQLs generated through agent-assisted outreach

- Time-to-engage: Minutes (not hours) from lead capture to first meaningful interaction

- SDR capacity freed: Hours recaptured weekly from manual prospecting and data entry

- Deal velocity: Measurable reduction in average sales cycle length

- Customer response rates: Email open rates, reply rates, and meeting acceptance rates, tracked together

Benchmark against realistic targets. Forrester's study of Salesloft's agentic workflows documented a 46-50% improvement in conversion-to-opportunity rates and 152% more pipeline by Year 3.

MLOps: Keeping Agent Performance from Degrading

Tracking metrics tells you where you stand. MLOps (Machine Learning Operations) determines whether that performance holds over time — and one-time deployments almost always drift without it:

- Model monitoring: Review agent performance metrics weekly to catch drift early

- Data pipeline maintenance: Confirm data sources stay accurate and accessible

- Drift investigation: If email response rates drop from 18% to 12%, diagnose whether model degradation or shifting market conditions is the cause

- Scheduled retraining: Refresh models quarterly using recent conversion data

Without MLOps governance, degradation goes unnoticed until it surfaces as lost deals or escalating complaints — by which point recovery costs more than prevention would have.
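A weekly monitoring job can start as a simple comparison against the baseline. In the sketch below, the 15% tolerance and the function names are assumptions. The check only detects a drop; deciding whether the cause is model degradation or a market shift still takes human analysis.

```python
def relative_drop(baseline: float, current: float) -> float:
    """Fractional decline from baseline (0.18 -> 0.12 is a drop of one third)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) / baseline


def needs_investigation(baseline: float, current: float, tolerance: float = 0.15) -> bool:
    """Flag the metric when it falls more than `tolerance` below baseline."""
    return relative_drop(baseline, current) > tolerance


drop = relative_drop(0.18, 0.12)
print(f"Reply rate fell {drop:.0%} relative to baseline")
```

The example from the list above, 18% falling to 12%, is a six-point absolute drop but a one-third relative decline, which is why a relative threshold tends to be the more useful alarm.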

When to Expand Agent Scope

Signals that indicate readiness to scale from pilot to full multi-agent ecosystem:

- Stable data pipelines with <5% error rates

- Rep adoption above 70% (measured by daily active usage)

- Validated ROI from pilot phase (measurable lift in pipeline or efficiency)

- Executive sponsorship and budget allocation for full deployment

Most organizations move from pilot to full multi-agent deployment in 6-12 months when these conditions are met.
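The four readiness signals can be captured as an explicit gate so the scale decision is not made on instinct. This is a sketch with hypothetical field names; the 5% and 70% thresholds come from the list above.

```python
from dataclasses import dataclass


@dataclass
class PilotStatus:
    pipeline_error_rate: float  # share of failed or bad records, 0..1
    rep_adoption: float         # share of reps using the agent daily, 0..1
    roi_validated: bool
    executive_sponsorship: bool


def scaling_blockers(status: PilotStatus) -> list[str]:
    """List the unmet conditions; an empty list means all four signals are present."""
    blockers = []
    if status.pipeline_error_rate >= 0.05:
        blockers.append("data pipeline error rate is 5% or higher")
    if status.rep_adoption <= 0.70:
        blockers.append("rep adoption is not above 70%")
    if not status.roi_validated:
        blockers.append("pilot ROI not validated")
    if not status.executive_sponsorship:
        blockers.append("no executive sponsorship or budget")
    return blockers
```

Returning the list of blockers rather than a single yes/no shows the team which condition to work on next.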

Common AI Agent Implementation Pitfalls and How to Fix Them

Poor Data Quality Undermining Agent Decisions

Problem: Agents acting on stale, siloed, or incomplete CRM data misroute leads, send duplicate outreach, and make embarrassing errors such as prospecting existing customers.

Root Causes:

- CRM records not updated after job changes (22-30% annual decay rate)

- Duplicate records splitting activity history

- Siloed data between marketing, sales, and finance systems

Fix:

- Implement mandatory data hygiene protocols before go-live

- Unify CRM, marketing automation, and ERP data into a single source of truth

- Establish automated validation rules (flag records without email verification in 90+ days)

- Assign data quality ownership to Revenue Operations, not IT
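The 90-day verification rule in the list above is straightforward to automate. The sketch below assumes each contact carries a last-verified date (or none at all) and treats never-verified records as stale; the contact ids and dates are made up.

```python
from datetime import date, timedelta
from typing import Optional

MAX_VERIFICATION_AGE = timedelta(days=90)


def is_stale(last_verified: Optional[date], today: date) -> bool:
    """True when the email was never verified or was last verified 90 or more days ago."""
    if last_verified is None:
        return True
    return today - last_verified >= MAX_VERIFICATION_AGE


today = date(2025, 6, 30)
records = {
    "c-001": date(2025, 6, 1),   # verified 29 days ago
    "c-002": date(2025, 1, 15),  # verified 166 days ago
    "c-003": None,               # never verified
}
flagged = [cid for cid, verified in records.items() if is_stale(verified, today)]
print(flagged)
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the rule deterministic and easy to test.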

For organizations managing high-volume invoice or financial workflows — where structured transaction data is already centralized — that same data can be applied directly to validate CRM records and fill account context gaps before agents ever touch a lead.

Misaligned Human-AI Handoff Points

Clean data alone won't prevent breakdowns. Where the handoff happens — and how — determines whether agents amplify your pipeline or frustrate it.

Problem: Agents hand off to reps too early (flooding them with unqualified leads) or too late (burning prospects with over-automation before human touch).

Symptoms worth watching for:

- Reps report receiving low-quality leads from agent queues

- Prospects flag feeling "spammed" by automated sequences

- Conversion rates fall after the handoff point

Fix:

- Define explicit qualification thresholds: company size, engagement score, buying stage

- Identify engagement signals that trigger human involvement — pricing page visits, competitor mentions, demo requests

- Pilot handoff boundaries with a small segment before scaling

- Gather rep and buyer feedback to refine triggers

Adoption Failure Due to Rep Resistance

Even well-calibrated handoffs fail if reps won't trust the system. This is the pitfall most implementation plans underestimate.

Problem: Sales reps distrust agent output, double-check every recommendation, or bypass the system entirely — cutting into the efficiency gains you expected.

What's usually driving it:

- Insufficient training on how agents actually make decisions

- Fear that AI signals a path to replacing sales roles

- Clunky integration that requires separate logins or unfamiliar interfaces

- No visibility into why an agent flagged or ranked a lead

To fix this, start with transparency. Train reps on how agents work, then show — don't just tell — by running a pilot where agents demonstrably improve outcomes. Integrate outputs into tools reps already use: CRM alerts, Slack notifications, email drafts. Address job security concerns head-on: agents are built to eliminate low-value tasks, not eliminate sales teams.

Pro Tips for Long-Term AI Agent Success in B2B Sales

Embed Agents Into Existing Workflows

AI agents that live inside the tools sellers already use—CRM, email, Slack—achieve significantly higher adoption rates than those requiring separate logins or dashboards. The less friction at the point of use, the faster reps adopt and trust the recommendations.

Example: Instead of requiring reps to check a separate dashboard for lead scores, surface agent recommendations directly in Salesforce opportunity records or as Slack notifications.

Treat Intent Signal Expansion as Continuous Improvement

The competitive edge of AI agents grows as they learn to recognize more predictive buying signals:

- Pricing page visits and product comparison behavior

- Funding announcements and executive hiring (signals of budget availability)

- Technology stack changes (signals of platform evaluation)

- Invoice volume trends (signals of business growth or contraction)

Assign ownership of intent model improvement to a dedicated Revenue Operations team member. Review and expand signal sources quarterly — stale signal libraries quietly erode agent accuracy over time.
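As a starting point, the signals above can feed a simple weighted score, with the library reviewed each quarter. This is a deliberately simple sketch: the weights are invented for illustration and would normally be fitted to conversion outcomes, and the signal and account names are hypothetical.

```python
# Illustrative weights only; in practice they would be fitted to conversion outcomes.
SIGNAL_WEIGHTS = {
    "pricing_page_visit": 30,
    "product_comparison": 20,
    "funding_announcement": 20,
    "executive_hire": 10,
    "tech_stack_change": 15,
    "invoice_volume_growth": 5,
}


def intent_score(observed_signals: set[str]) -> int:
    """Sum the weights of recognized signals, capped at 100; unknown signals are ignored."""
    raw = sum(SIGNAL_WEIGHTS.get(signal, 0) for signal in observed_signals)
    return min(raw, 100)


accounts = {
    "acme": {"pricing_page_visit", "funding_announcement"},
    "globex": {"executive_hire"},
    "initech": {"tech_stack_change", "product_comparison", "webinar_signup"},
}
ranked = sorted(accounts, key=lambda name: intent_score(accounts[name]), reverse=True)
print(ranked)
```

Adding a new signal source then amounts to adding a key to the table. Note that "webinar_signup" contributes nothing until someone assigns it a weight.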

Document, Review, and Govern

Maintain comprehensive governance:

- Log every agent decision, action, and escalation for traceability

- Review agent performance against revenue targets each quarter

- Define escalation procedures for behavior outside expected parameters, such as unusually low conversion rates or compliance violations
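For traceability, each decision can be written as one structured line in an append-only log. The sketch below uses only the Python standard library; the `AgentDecision` fields are an assumed minimum, not a compliance standard.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentDecision:
    agent: str       # which agent acted, e.g. "qualification"
    lead_id: str
    action: str      # what it did, e.g. "routed_to_human"
    reason: str      # why, in terms a reviewer can check
    escalated: bool
    timestamp: str   # ISO 8601, UTC


def log_line(agent: str, lead_id: str, action: str, reason: str, escalated: bool = False) -> str:
    """Serialize one decision as a single JSON line suitable for an append-only log."""
    record = AgentDecision(
        agent=agent,
        lead_id=lead_id,
        action=action,
        reason=reason,
        escalated=escalated,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


line = log_line("qualification", "lead-42", "routed_to_human", "deal value above threshold", True)
print(line)
```

One JSON object per line keeps the log easy to search and replay when an auditor or a skeptical rep asks why an agent acted as it did.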

For regulated industries (BFSI, healthcare, government), documented governance is non-negotiable. It's also what turns early skeptics into long-term advocates inside the organization.

Frequently Asked Questions

What is the difference between AI agents and standard CRM automation in B2B sales?

CRM automation follows fixed if-then rules triggered by user actions (e.g., send email when lead downloads content). AI agents are goal-directed systems capable of multi-step reasoning: they decide not just when to act, but what action to take based on live data inputs, contextual awareness, and continuous learning.

How long does it typically take to implement AI agents in a B2B sales process?

A controlled pilot with one or two agent types typically runs 8–12 weeks, preceded by 2–4 weeks of auditing use cases and 4–8 weeks of configuration. Scaling to a full multi-agent ecosystem takes 6–12 months, depending on data readiness, tech stack complexity, and change management scope.

Will AI agents replace B2B sales development reps?

AI agents automate repetitive SDR tasks (cold outreach, lead qualification, data entry), but human sellers remain essential for complex deal strategy, trust-building, and high-stakes negotiations. The SDR role evolves toward AI orchestration and higher-value engagement rather than disappearing.

What data infrastructure is required before deploying AI sales agents?

Essential requirements include a unified, clean CRM dataset; integration between sales, marketing, and finance systems; real-time data pipelines (not batch updates); and defined data governance protocols to prevent agent errors from stale or siloed inputs.

How do you measure ROI from AI agents in B2B sales?

Track qualified pipeline growth, time-to-engage, SDR hours recaptured, deal velocity, and response rates against pre-deployment baselines. Forrester documented 236% three-year ROI in successful implementations.

What are the biggest risks of deploying AI agents in B2B sales too quickly?

The most damaging risks are poor data quality (such as contacting existing clients as cold prospects), buyer experience breakdowns from over-automation, rep resistance due to weak change management, and model drift that goes undetected without MLOps governance.