Introduction

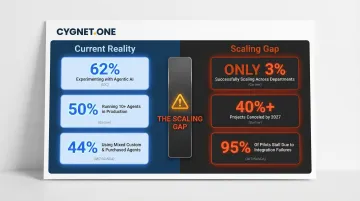

Enterprise leaders face a critical tension in 2026: AI agents are no longer experimental technology confined to innovation labs—they're now a board-level priority with real budget behind them. Yet the gap between pilot success and production-scale deployment has proven difficult to close. According to a November 2025 IDC/AWS survey of over 900 organizations, only 3% of companies are successfully scaling agentic AI across multiple departments, even as 62% are actively experimenting with these technologies.

This disconnect matters. While Gartner projects that 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, most enterprises remain stuck at the proof-of-concept stage—unable to convert promising pilots into production systems that deliver measurable returns.

This guide offers a practical framework for enterprises planning or accelerating AI agent deployment in 2026. It covers the full lifecycle: planning, integration architecture, governance design, and scaling strategy.

The guidance is especially relevant for regulated industries—finance, BFSI, and operations-heavy sectors—where the stakes for reliability, audit readiness, and compliance are highest.

TLDR

- Scaling AI agents enterprise-wide in 2026 demands architecture decisions, governance frameworks, and defined ROI—not just stronger models

- The biggest blockers are agent sprawl, integration gaps, and skills shortfalls—not model capability

- Successful enterprise deployment starts with use case prioritization, before any platform is selected

- Governance, security, and compliance must be designed into deployment from the start—retrofitting them rarely works

Why 2026 Is the Inflection Point for Enterprise AI Agent Deployment

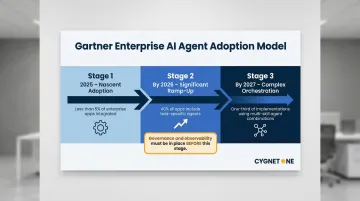

2026 marks a clear turning point: enterprises are moving from evaluating AI agents to committing to multi-agent, cross-functional deployments. Gartner's August 2025 research indicates that by the end of 2026, 40% of enterprise applications will be integrated with task-specific AI agents—a dramatic acceleration from less than 5% in 2025. IDC data shows 65% of organizations expect full deployment by 2027, which means architecture choices, vendor selection, and governance design need to be locked in now.

The Emergence of the Agentic Enterprise

The "agentic enterprise" marks a structural departure from how AI has been deployed so far. Unlike the fragmented, siloed deployments of 2023–2024—where individual departments ran isolated chatbots or automation scripts—the agentic enterprise features AI agents as connected actors operating simultaneously across CRM, ERP, finance, compliance, and customer-facing systems.

These agents don't just automate tasks; they reason, adapt, and take multi-step actions dynamically. They handle ambiguity, learn from context, and make decisions within defined boundaries—a sharp contrast to traditional RPA, which requires highly structured inputs and follows rigid, pre-scripted workflows.

Why Finance, Tax, and Operations Lead the Shift

Industries like finance, tax compliance, and operations are at the leading edge of this transformation for three reasons:

- High-volume, rule-governed workflows: These domains involve repetitive, data-intensive processes where agents can deliver measurable impact quickly

- Clear ROI metrics: Cost per transaction, cycle time reduction, and error rates provide unambiguous success measures

- Regulatory pressure: Compliance mandates create forcing functions that drive investment in automated, auditable processes

For example, Deloitte's AI Dossier for Banking & Capital Markets details how multiple AI agents work continuously on intraday liquidity optimization, resolving breaks in real time by matching payment confirmations, Nostro statements, ledger entries, and settlement messages. The agents request clarifications, attach evidence, and escalate only true exceptions to human operators, freeing trapped cash and lowering intraday buffers.

Core Challenges Enterprises Face When Deploying AI Agents at Scale

Agent Sprawl: The New Enterprise Risk

Agent sprawl—the uncontrolled, ungoverned proliferation of AI agents—is now identified as one of the primary scaling risks enterprises face. Microsoft frames it as "the next identity sprawl," where decentralized creation of autonomous agents creates a new risk vector. Gartner warns that "ungoverned sprawl" leads to projects with unclear ROI and high cancellation rates.

The scale of this proliferation is significant. IDC data from November 2025 shows that 50% of organizations already had 10 or more agents in production, and 44% use a mix of custom-built and purchased agents. This complexity creates severe operational impacts:

- Observability gaps: Teams lose visibility into what agents are doing, where they're accessing data, and how they're making decisions

- Access control risks: Agents accessing enterprise data at machine speed without proper oversight create security vulnerabilities

- Duplication and fragmentation: Different business units build redundant agents that don't interoperate, creating costly maintenance overhead

Integration Complexity: The Primary Cause of Failure

Integration complexity—encompassing APIs, legacy systems, and data access—consistently ranks as the primary reason for failed or canceled enterprise AI agent initiatives. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, citing that "integrating agents into legacy systems can be technically complex."

The business impact is measurable. An IDC FutureScape report from January 2026 warns that companies without AI-ready data foundations will suffer a 15% productivity loss by 2027. A July 2025 Harvard Business Review survey reinforces this: only 15% of companies believe their data and systems are fully ready for agentic AI.

Most telling is an MIT/NANDA study from August 2025 reporting that approximately 95% of generative AI pilots stall due to flawed enterprise integration—not issues with the AI models themselves. The barrier to scale is integration readiness, not model capability.

Skills and Organizational Readiness Gaps

A 2025 IDC study commissioned by AWS found that 55% of organizations cite lack of skilled personnel as the single greatest implementation challenge, and 67% believe their users require more skills training.

Three roles are in particularly short supply:

- AI/ML engineers: A late-2023 Gartner survey found that 56% of software engineering leaders identified this as the most in-demand role for 2024

- Prompt engineers: 2025 Forrester data shows only 26% of employees know what prompt engineering is and how to use it

- Governance specialists: IDC predicts that by 2027, half of all AI-enabled enterprise applications will mandate new oversight positions dedicated to governance, risk, and accountability

The gap between widespread adoption (62% of organizations experimenting, and 50% already running 10 or more agents in production) and low scaling success (only 3% scaling across departments) reflects more than a technical shortfall. Enterprises are underinvesting in agent workflow design, operational governance, and the organizational change management needed to sustain deployments at scale.

Best Practices for Planning and Prioritizing AI Agent Use Cases

Start with Use Case Inventory, Not Platform Selection

The most common mistake enterprises make is selecting an AI agent platform before understanding what problems they're solving. Instead, begin by mapping all candidate workflows against two dimensions:

Automation potential: Assess whether the workflow is rule-based, high-volume, and data-rich. These characteristics indicate strong agent suitability.

Business impact: Evaluate potential improvements in cost, cycle time, and compliance risk. Prioritize the intersection of both dimensions.

Gartner's Value vs. Feasibility Framework assesses use cases on business value against implementation feasibility — including AgentOps maturity and governance readiness. Deloitte's Value-to-Effort Matrix applies two additional filters: Time to Value (under 12 weeks) and stakeholder readiness, helping teams deprioritize high-effort, low-return candidates early.
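As a rough illustration, the two-dimension screen plus the time-to-value and readiness filters can be expressed as a single scoring pass over a use case backlog. This is a sketch under stated assumptions: the 1 to 5 scales, the cutoff of 3, ranking by the product of the two scores, and the example backlog are all hypothetical, not part of Gartner's or Deloitte's published frameworks.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    automation_potential: int  # 1-5: rule-based, high-volume, data-rich?
    business_impact: int       # 1-5: cost, cycle time, compliance risk
    weeks_to_value: int        # estimated weeks to first measurable value
    stakeholders_ready: bool   # process owner and sponsor committed


def prioritize(candidates, min_score=3, max_weeks=12):
    """Keep use cases strong on both dimensions that also pass the
    time-to-value and readiness filters, ranked by combined score."""
    shortlist = [
        uc for uc in candidates
        if uc.automation_potential >= min_score
        and uc.business_impact >= min_score
        and uc.weeks_to_value <= max_weeks
        and uc.stakeholders_ready
    ]
    return sorted(
        shortlist,
        key=lambda uc: uc.automation_potential * uc.business_impact,
        reverse=True,
    )


backlog = [
    UseCase("Invoice data extraction", 5, 4, 8, True),
    UseCase("Vendor reconciliation", 4, 4, 10, True),
    UseCase("Contract negotiation assistant", 2, 5, 26, False),
    UseCase("Internal FAQ bot", 4, 2, 4, True),
]

for uc in prioritize(backlog):
    print(uc.name)
# Invoice data extraction
# Vendor reconciliation
```

The contract assistant drops out on automation potential and readiness, and the FAQ bot on business impact, which is exactly the early deprioritization the matrices aim for.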

Define Agent Scope and Boundaries Before Deployment

Design agents with clearly bounded action spaces — what an agent can read, write, trigger, or approve. This prevents unintended lateral actions across systems.

Narrow-scope agents (lower risk, faster trust-building):

- Process specific document types

- Reconcile defined data sets

- Extract information from structured sources

Broad-scope agents (higher value, higher risk):

- Orchestrate multi-system workflows

- Make financial decisions above thresholds

- Interact directly with customers

The principle of "bounded autonomy" constrains agents to have just enough autonomy to deliver value while remaining reliable and safe. Teams that apply this principle consistently report sharply compressed delivery timelines — in some cases cutting deployment cycles from weeks to under 24 hours.
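A bounded action space is easiest to enforce when it is declared as data and every proposed action is checked against it before execution. The sketch below assumes a hypothetical `AgentScope` type holding (system, action) grants; the system names are placeholders, and a real deployment would enforce this at the gateway or identity layer rather than inside the agent itself.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"
    TRIGGER = "trigger"
    APPROVE = "approve"


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical declaration of the systems an agent may touch, and how."""
    agent_id: str
    grants: frozenset = field(default_factory=frozenset)  # {(system, Action)}

    def allows(self, system: str, action: Action) -> bool:
        # Deny by default: anything not explicitly granted is out of scope.
        return (system, action) in self.grants


# A narrow-scope reconciliation agent: it reads two systems and writes only
# to its own exceptions queue. It cannot trigger payments or approve anything.
recon_agent = AgentScope(
    agent_id="recon-01",
    grants=frozenset({
        ("erp.ledger", Action.READ),
        ("bank.statements", Action.READ),
        ("recon.exceptions", Action.WRITE),
    }),
)

print(recon_agent.allows("erp.ledger", Action.READ))   # True
print(recon_agent.allows("erp.ledger", Action.WRITE))  # False
print(recon_agent.allows("payments", Action.APPROVE))  # False
```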

Once scope is defined, the next question is sequencing: which use cases come first, and in what order?

Build a Use Case Sequencing Roadmap

Structure a phased deployment, starting with internal-facing, lower-stakes tasks before progressing to customer-facing or decision-critical workflows. Gartner's adoption forecasts follow a similar staged progression across the market:

Stage 1 (2025): Nascent adoption with less than 5% of enterprise apps having integrated agents

Stage 2 (by 2026): Significant ramp-up to 40% of apps including integrated, task-specific agents

Stage 3 (by 2027): One-third of implementations combining agents with different skills to manage complex, cross-application tasks

For enterprise teams, this staging means governance frameworks, observability tooling, and agent trust models should all be in place before Stage 2 — not retrofitted afterward.

Domain knowledge shapes how well that roadmap holds in practice.

Involve Domain Experts Alongside Technology Teams

Use cases designed by IT alone often miss the nuance of domain workflows. In finance and compliance contexts specifically, the agent must understand not just the data but the regulatory logic behind it.

In tax compliance, for instance, an agent processing invoices must handle GST validation rules, HSN code classification, and jurisdiction-specific withholding requirements — not just data extraction. Domain experts ensure agents encode business rules correctly and surface edge cases before they reach production.
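To make this concrete, here is the kind of rule a tax domain expert would expect an invoice agent to enforce before trusting extracted data: a structural check on an Indian GSTIN (2-digit state code, 10-character PAN, entity number, the letter `Z`, and a check character). This is a minimal sketch assuming the commonly documented mod-36 check-character scheme; it does not validate state codes against the official list or handle special registration formats, so it should be verified against GSTN's own specification before use. The sample GSTIN is fictitious.

```python
import re

CHARSET = "0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZ"
# State code (2 digits), PAN (5 letters, 4 digits, 1 letter),
# entity number, the fixed letter Z, and one check character.
GSTIN_PATTERN = re.compile(r"[0-9]{2}[A-Z]{5}[0-9]{4}[A-Z][1-9A-Z]Z[0-9A-Z]")


def gstin_check_char(first14: str) -> str:
    """Mod-36 check character: weights alternate 1, 2 across the first
    14 characters, and each product is folded back into base 36."""
    total = 0
    for i, ch in enumerate(first14):
        product = CHARSET.index(ch) * (1 if i % 2 == 0 else 2)
        total += product // 36 + product % 36
    return CHARSET[(36 - total % 36) % 36]


def is_valid_gstin(gstin: str) -> bool:
    gstin = gstin.strip().upper()
    if not GSTIN_PATTERN.fullmatch(gstin):
        return False
    return gstin_check_char(gstin[:14]) == gstin[14]


print(is_valid_gstin("29ABCDE1234F1ZW"))  # True (fictitious, checksum-consistent)
print(is_valid_gstin("29ABCDE1234F1ZX"))  # False: wrong check character
```

A rule like this is cheap to run on every extracted invoice, and a failure is exactly the kind of edge case that should become an exception for a human rather than a silent correction by the agent.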

Establish a Center of Excellence to Govern Use Case Selection

Accenture found that companies aligning AI and business strategy through a Center of Excellence (CoE) see 2.2× higher revenue growth and a 37% lift in EBITDA on average. The mechanism is straightforward: a CoE prevents agent sprawl and enforces enterprise-wide standards before the cost of fragmentation compounds. McKinsey recommends structuring the CoE to report directly to the CEO during initial phases to maintain strategic alignment and minimize resource waste.

The CoE's mandate includes:

- Establishing governance and risk management frameworks

- Defining technical standards for interoperability (such as MCP for connecting agents to tools and data, and A2A for agent-to-agent communication)

- Overseeing the end-to-end agent lifecycle

- Managing memory, multi-agent collaboration, and observability

- Monitoring latency, accuracy, and uptime against production SLAs
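A CoE's inventory and lifecycle oversight can start as a registry that refuses unowned or duplicate agents and only lets agents reach production through an approval step. The `AgentRegistry` class, its stages, and the allowed transitions below are hypothetical illustrations, not a specific product's data model.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    REGISTERED = "registered"
    APPROVED = "approved"
    PRODUCTION = "production"
    DECOMMISSIONED = "decommissioned"


# Allowed lifecycle transitions; production requires prior approval.
TRANSITIONS = {
    Stage.REGISTERED: {Stage.APPROVED, Stage.DECOMMISSIONED},
    Stage.APPROVED: {Stage.PRODUCTION, Stage.DECOMMISSIONED},
    Stage.PRODUCTION: {Stage.DECOMMISSIONED},
    Stage.DECOMMISSIONED: set(),
}


@dataclass
class AgentRecord:
    agent_id: str
    owner: str        # accountable business owner
    use_case: str
    systems: tuple    # systems the agent reads from or writes to
    stage: Stage = Stage.REGISTERED


class AgentRegistry:
    def __init__(self):
        self._agents = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._agents:
            raise ValueError(f"duplicate agent id: {record.agent_id}")
        if not record.owner:
            raise ValueError("every agent needs a named owner")
        self._agents[record.agent_id] = record

    def advance(self, agent_id: str, target: Stage) -> None:
        record = self._agents[agent_id]  # unregistered agents raise KeyError
        if target not in TRANSITIONS[record.stage]:
            raise ValueError(f"{record.stage.value} -> {target.value} not allowed")
        record.stage = target

    def in_production(self):
        return [a.agent_id for a in self._agents.values()
                if a.stage is Stage.PRODUCTION]


registry = AgentRegistry()
registry.register(AgentRecord("inv-extract-01", "AP Operations",
                              "Invoice extraction", ("erp",)))
registry.advance("inv-extract-01", Stage.APPROVED)
registry.advance("inv-extract-01", Stage.PRODUCTION)
print(registry.in_production())  # ['inv-extract-01']
```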

Integration and Architecture Best Practices for Enterprise AI Agents

Design for the Agentic Enterprise, Not Point Solutions

AI agents deliver measurable value only when connected to the live data environment—ERP, CRM, financial systems, compliance databases. Agents operating on static data or isolated APIs recreate the information-silo problems enterprises have spent decades trying to solve.

The architectural principle is straightforward: agents must access the same real-time data that humans use to make decisions. This requires deep integration with core enterprise systems, not superficial API connections to data snapshots.

Adopt an Orchestration Layer to Manage Multi-Agent Workflows

An agent orchestration framework manages task delegation between specialized agents, handles failures, and maintains state across sessions. Ad hoc agent deployment without orchestration is the primary cause of agent sprawl.

Two key open standards are emerging:

Model Context Protocol (MCP): Announced in November 2024, MCP provides a universal language for agents to discover and securely connect with external tools and data sources.

Agent2Agent (A2A) protocol: Governed by the Linux Foundation, A2A serves as a trusted communication bus for distinct agentic applications to interoperate, discover each other's capabilities via "Agent Cards," and delegate tasks.

Production-grade orchestration patterns address the non-deterministic nature of AI agents through sophisticated failure handling (exponential backoff for rate limits, circuit breakers for service degradation), management of long-running tasks via heartbeating, and robust cancellation propagation to prevent orphaned processes.
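Two of those patterns can be sketched in plain Python: exponential backoff with jitter for rate-limited tool calls, and a circuit breaker that fails fast when a downstream service keeps erroring. This is a minimal, single-process sketch; the exception names, thresholds, and delays are assumptions, and heartbeating and cancellation propagation are normally handled by a durable workflow engine rather than hand-rolled code.

```python
import random
import time


class RateLimited(Exception):
    """Raised by a tool call when the downstream API throttles the agent."""


class CircuitOpen(Exception):
    """Raised when the breaker is open and calls fail fast."""


def with_backoff(fn, max_attempts=5, base_delay_s=0.5, max_delay_s=8.0,
                 sleep=time.sleep):
    """Retry only on rate limiting, doubling the delay each attempt (with jitter)."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise
            delay = min(max_delay_s, base_delay_s * 2 ** attempt)
            sleep(delay * random.uniform(0.5, 1.0))


class CircuitBreaker:
    """Opens after `failure_threshold` consecutive service errors, fails fast
    while open, then lets the next call through as a trial after `reset_after_s`."""

    def __init__(self, failure_threshold=3, reset_after_s=30.0):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if time.monotonic() - self.opened_at < self.reset_after_s:
                raise CircuitOpen("downstream service degraded; failing fast")
            self.opened_at = None  # half-open: allow a trial call
        try:
            result = fn(*args, **kwargs)
        except RateLimited:
            raise  # throttling is handled by backoff, not counted as degradation
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = time.monotonic()  # (re)open the breaker
            raise
        self.failures = 0
        return result
```

In use, the two compose as `with_backoff(lambda: breaker.call(tool_fn, payload))`, where `tool_fn` and `payload` are hypothetical: rate limits are retried with growing delays, while repeated service errors trip the breaker so the orchestrator can fail fast or reroute.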

Prioritize API-First, Modular Integration Architecture

Ensure AI agent infrastructure connects to existing systems through well-governed APIs rather than direct database access or custom connectors. This reduces fragility and simplifies governance.

NIST and major cloud providers align on these core requirements:

- API hardening with strong authentication and rate limits

- "No direct admin by default" policy

- Comprehensive logging for auditability

- Self-describing, business-ready APIs that agents can discover and use

AWS promotes an AI Agent Gateway pattern that acts as a control boundary, validating agent intent, enforcing authorization with Policy as Code, and delegating execution to isolated, ephemeral environments. This architecture enforces principles of Least Privilege (no direct infrastructure access), Ephemeral Execution, and Observability by Default.
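The gateway idea can be sketched as a single choke point that checks each tool request against a declarative policy, denies anything not explicitly listed, and logs every decision. This is not AWS's implementation: the `POLICY` table, tool names, and amount limit are hypothetical, and production systems typically delegate the policy check to a dedicated engine such as Open Policy Agent or Cedar and run execution in an isolated sandbox.

```python
import logging
from dataclasses import dataclass, field

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_gateway")


@dataclass
class ToolRequest:
    agent_id: str
    tool: str
    params: dict = field(default_factory=dict)


# Policy as code: a declarative, version-controlled table. Tools that are not
# listed are denied outright ("no direct admin by default").
POLICY = {
    "erp.read_invoice": {"agents": {"ap-agent"}, "max_amount": None},
    "erp.post_payment": {"agents": {"ap-agent"}, "max_amount": 10_000},
}


def authorize(req: ToolRequest):
    """Return (allowed, reason) for a proposed tool call."""
    rule = POLICY.get(req.tool)
    if rule is None:
        return False, "tool not in policy (deny by default)"
    if req.agent_id not in rule["agents"]:
        return False, "agent not permitted to call this tool"
    limit = rule["max_amount"]
    if limit is not None and req.params.get("amount", 0) > limit:
        return False, f"amount exceeds {limit}; route to human approval"
    return True, "allowed"


def gateway(req: ToolRequest, execute):
    allowed, reason = authorize(req)
    # Log every decision, allowed or denied (observability by default).
    log.info("agent=%s tool=%s allowed=%s reason=%s",
             req.agent_id, req.tool, allowed, reason)
    if not allowed:
        raise PermissionError(reason)
    # In production, `execute` would run in an isolated, short-lived sandbox.
    return execute(req)


print(gateway(ToolRequest("ap-agent", "erp.post_payment", {"amount": 500}),
              execute=lambda r: "posted"))  # posted
```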

For enterprises in finance and compliance, integration depth matters as much as agent capability. Cygnet.One, for instance, has completed 250+ ERP integrations across SAP, Oracle, and Microsoft Dynamics — the kind of pre-built connectivity that shortens deployment timelines on complex agentic workflows.

Plan for Data Quality and Access Governance Before Agents Go Live

AI agents are only as reliable as the data they act on. Gartner reports that data availability and quality is a top barrier to AI adoption, directly limiting agents' ability to move from prototype to production. Only about 40% of AI prototypes make it into production, with data issues the primary obstacle.

Before deploying agents on critical workflows, enterprises must:

- Audit data completeness across all systems the agent will access

- Establish access controls that limit agent permissions to necessary data only

- Ensure real-time data availability, not batch updates that create stale information

- Validate data quality through automated checks and exception handling
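The checklist above can be turned into an automated pre-flight gate that runs before an agent is allowed to act on a batch. The sketch assumes invoice-like records held as dictionaries; the required fields, the 15-minute freshness window, and the 98% completeness bar are illustrative values, not recommendations.

```python
from datetime import datetime, timedelta, timezone

REQUIRED_FIELDS = ("invoice_id", "vendor_id", "amount", "currency")


def preflight(records, last_updated, now=None,
              max_staleness=timedelta(minutes=15), min_completeness=0.98):
    """Return blocking issues; an empty list means the batch may go to the agent."""
    now = now or datetime.now(timezone.utc)
    issues = []
    if now - last_updated > max_staleness:
        issues.append(f"stale data: last update {now - last_updated} ago")
    if not records:
        return issues + ["no records to process"]
    complete = sum(
        all(r.get(f) not in (None, "") for f in REQUIRED_FIELDS) for r in records
    )
    ratio = complete / len(records)
    if ratio < min_completeness:
        issues.append(f"completeness {ratio:.0%} below {min_completeness:.0%}")
    non_numeric = [r.get("invoice_id") for r in records
                   if not isinstance(r.get("amount"), (int, float))]
    if non_numeric:
        issues.append(f"non-numeric amount on invoices {non_numeric}")
    return issues


now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
batch = [
    {"invoice_id": "INV-1", "vendor_id": "V-9", "amount": 120.0, "currency": "EUR"},
    {"invoice_id": "INV-2", "vendor_id": "", "amount": 80, "currency": "EUR"},
]
print(preflight(batch, last_updated=now - timedelta(minutes=5), now=now))
# ['completeness 50% below 98%']
```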

The stakes are concrete: an IDC FutureScape report warns that by 2027, companies without high-quality, AI-ready data foundations will absorb a 15% productivity loss as agentic systems fail to perform.

Build Observability and Rollback Capabilities into the Architecture

In regulated industries, auditability of automated decisions is a hard compliance requirement. Agent monitoring must be built into the architecture from the start — not retrofitted after deployment.

Required observability capabilities include:

- Comprehensive, immutable audit trails of all agent activities

- Real-time monitoring of key performance and security metrics

- End-to-end traceability of agent decisions and actions

- Automated alerting for anomalies or policy violations

- Rollback mechanisms to reverse agent actions when errors are detected
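An immutable audit trail is often approximated in application code with a hash chain: each entry includes the hash of the previous one, so editing any earlier entry becomes detectable. This is a minimal sketch with assumed field names; on its own it cannot detect truncation of the newest entries, so the latest hash would also need to be anchored in write-once (WORM) storage or an external timestamping service.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log where each entry commits to the previous entry's hash,
    so any later edit to an earlier entry breaks the chain."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, agent_id, action, detail):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "detail": detail,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body["hash"]

    @staticmethod
    def _digest(body):
        # Canonical JSON (sorted keys, no whitespace) so hashes are reproducible.
        payload = {k: v for k, v in body.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def verify(self):
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("recon-01", "match", {"ledger": "L-1001", "statement": "S-77"})
trail.append("recon-01", "escalate", {"break_id": "B-12"})
print(trail.verify())  # True
trail.entries[0]["detail"]["ledger"] = "L-9999"  # simulated tampering
print(trail.verify())  # False
```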

For Human-in-the-Loop (HITL) escalation, a common pattern starts a timer upon human handoff. If no response arrives within the defined SLA, the workflow automatically escalates to a manager or auto-rejects the task — keeping the process moving without manual intervention.
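The SLA timer pattern can be sketched with `asyncio.wait_for`, treating the reviewer's decision as a future that either resolves or times out. The function name and return labels are hypothetical, and the short timeouts exist only to keep the demo fast; real SLAs run for hours, so production systems usually rely on a durable workflow engine whose timers survive process restarts.

```python
import asyncio


async def await_human_decision(decision, sla_s, on_timeout="escalate"):
    """Wait for a reviewer's decision; when the SLA lapses, escalate to a
    manager or auto-reject instead of leaving the workflow stalled."""
    try:
        return await asyncio.wait_for(decision, timeout=sla_s)
    except asyncio.TimeoutError:
        return "escalated_to_manager" if on_timeout == "escalate" else "auto_rejected"


async def demo():
    loop = asyncio.get_running_loop()

    # Reviewer responds inside the SLA window.
    answered = loop.create_future()
    loop.call_later(0.01, answered.set_result, "approved")
    print(await await_human_decision(answered, sla_s=0.5))  # approved

    # Nobody responds, so the timer fires and the task is escalated.
    silent = loop.create_future()
    print(await await_human_decision(silent, sla_s=0.05))  # escalated_to_manager


asyncio.run(demo())
```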

Governance, Security, and Compliance in Enterprise AI Agent Deployment

Treat Governance as Architecture, Not Policy

Governance cannot be an afterthought or a policy document; it must be embedded in the technical design of the agent. This includes role-based access controls, action logging, approval workflows for high-stakes decisions, and defined escalation paths to human reviewers.

The NIST AI Risk Management Framework (AI RMF 1.0) provides a structured lifecycle for managing AI risks:

GOVERN: Create a centralized inventory of all AI agents, define enterprise-wide policies, and set role-based access controls.

MAP: Document all systems and data sources the agent interacts with, identify potential risks, and analyze the business case.

MEASURE: Track and evaluate risks through comprehensive audit trails, monitor key performance and security metrics, and regularly test for algorithmic bias.

MANAGE: Implement human-in-the-loop oversight mechanisms, develop incident response plans for agent-related failures, and continuously review logs to tighten controls and close gaps.

Security Considerations Specific to AI Agents

AI agents introduce distinct security risks beyond those of standard software. The OWASP Top 10 for Large Language Model Applications, together with OWASP's guidance on agentic AI threats, identifies critical vulnerabilities:

- Prompt Injection: Attackers embed malicious instructions within prompts, hijacking the agent's goal-oriented behavior to perform unauthorized actions or exfiltrate sensitive data.

- Insecure Plugin/Tool Design: Security vulnerabilities in external tools let attackers manipulate agents into data deletion, privilege escalation, or remote code execution.

- Excessive Agency: Agents with excessive permissions create a large "blast radius" — a compromised agent can cause widespread harm. Strict least-privilege controls are the primary mitigation.

- Sensitive Information Disclosure: Agents may inadvertently reveal sensitive data in responses. Strong output filtering and data handling protocols are non-negotiable.

- Tool Misuse: Attackers trick agents into using legitimate, authorized tools for malicious purposes (for example, weaponizing a `send_email` tool to run phishing campaigns).

Finance, HR, and legal deployments face the highest exposure. Conduct threat modeling before go-live, not after.
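For the tool-misuse case, one common mitigation is to wrap a sensitive tool in guardrails enforced in code, outside the model, so a manipulated prompt cannot bypass them: a recipient allowlist, a block on links in agent-written messages, and a send quota. The sketch is illustrative only; the domain, quota, and `send_fn` transport are hypothetical stand-ins for whatever email service the agent actually calls.

```python
import re
import time
from collections import deque

ALLOWED_RECIPIENT_DOMAINS = {"example-corp.com"}  # hypothetical internal domain
MAX_SENDS_PER_HOUR = 20
LINK_PATTERN = re.compile(r"https?://", re.IGNORECASE)


class GuardedEmailTool:
    """Wraps a raw send function with checks the model cannot override."""

    def __init__(self, send_fn, clock=time.monotonic):
        self._send = send_fn
        self._clock = clock
        self._sent_at = deque()  # timestamps of recent successful sends

    def send_email(self, to: str, subject: str, body: str):
        domain = to.rsplit("@", 1)[-1].lower()
        if domain not in ALLOWED_RECIPIENT_DOMAINS:
            raise PermissionError(f"recipient domain {domain!r} is not allowed")
        if LINK_PATTERN.search(body):
            raise PermissionError("links in agent-written email need human review")
        now = self._clock()
        while self._sent_at and now - self._sent_at[0] >= 3600:
            self._sent_at.popleft()  # drop sends older than one hour
        if len(self._sent_at) >= MAX_SENDS_PER_HOUR:
            raise PermissionError("hourly send quota exhausted")
        self._sent_at.append(now)
        return self._send(to, subject, body)


tool = GuardedEmailTool(send_fn=lambda to, subject, body: f"queued for {to}")
print(tool.send_email("ap-team@example-corp.com", "Recon done", "All breaks cleared."))
# queued for ap-team@example-corp.com
```

Such guards narrow the blast radius but do not replace least-privilege credentials or threat modeling; they simply make the most damaging misuse paths fail closed.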

Compliance Readiness for Regulated Industries

Enterprises in BFSI, healthcare, or cross-border operations must map AI agent actions against applicable regulatory frameworks — covering data residency, audit trail requirements, and decision explainability mandates.

For EU operations, the EU AI Act (Regulation (EU) 2024/1689) imposes significant obligations for AI agents classified as "high-risk":

- Continuous Risk Management System throughout the agent's lifecycle

- Strict Data Governance for training and testing data

- Comprehensive Technical Documentation and Record-Keeping with automatic logging of all events

- Transparency requirements, disclosing that users are interacting with an AI

- Mandatory Human Oversight to ensure agents can be intervened with, stopped, or overridden

- Requirements for Accuracy, Robustness, and Cybersecurity

For GDPR compliance, Article 22 grants individuals the right not to be subject to decisions based solely on automated processing that have legal or similarly significant effects. This mandates mechanisms for human intervention, allowing individuals to express their point of view and contest the agent's decision.

Enterprises operating across multiple jurisdictions need AI deployment partners who understand multi-regulatory environments by design — not as a retrofit. Cygnet.One, for instance, holds compliance recognition across India (GSTN), UAE (FTA), UK (HMRC), Saudi Arabia (ZATCA), and Belgium (BOSA), meaning agent architectures built on such platforms can incorporate jurisdiction-specific controls from day one.

Define Human-in-the-Loop Thresholds

Not every agent action carries the same risk. The right human-in-the-loop threshold shifts based on confidence scores, action type, and business impact — and defining those thresholds upfront prevents costly missteps at scale.

Require human approval for:

- Financial transactions above defined thresholds

- Regulatory filings and compliance submissions

- Customer-impacting decisions with legal implications

- Actions where agent confidence scores fall below established thresholds

Allow autonomous operation for:

- Routine data processing and extraction

- Standard reconciliations with high confidence

- Internal workflow automation with low business risk

- Actions with established rollback mechanisms
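These thresholds translate naturally into a routing function that every proposed action passes through before execution. The action kinds, the 5,000 payment limit, and the 0.90 confidence floor are hypothetical placeholders; each organization would set its own values, ideally in versioned policy rather than hard-coded constants.

```python
from dataclasses import dataclass

# Hypothetical policy values; each organization sets its own.
PAYMENT_APPROVAL_LIMIT = 5_000.0
MIN_CONFIDENCE = 0.90
ALWAYS_HUMAN = {"regulatory_filing", "compliance_submission"}


@dataclass
class ProposedAction:
    kind: str                      # e.g. "payment", "reconciliation"
    amount: float = 0.0
    confidence: float = 1.0        # confidence score attached to the action, 0-1
    legal_impact_on_customer: bool = False
    reversible: bool = True        # is a tested rollback path available?


def requires_human(action: ProposedAction) -> bool:
    if action.kind in ALWAYS_HUMAN:
        return True
    if action.kind == "payment" and action.amount > PAYMENT_APPROVAL_LIMIT:
        return True
    if action.legal_impact_on_customer or action.confidence < MIN_CONFIDENCE:
        return True
    # Remaining low-risk actions run autonomously only if they can be undone.
    return not action.reversible


print(requires_human(ProposedAction("reconciliation", confidence=0.97)))  # False
print(requires_human(ProposedAction("payment", amount=12_000)))           # True
```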

Measuring Success and Scaling AI Agents Across the Enterprise

Move Beyond Containment Rate: Define Enterprise-Specific Success Metrics

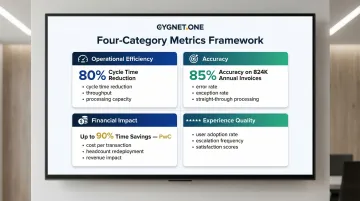

The right KPIs for AI agents depend on the workflow, but most fall into four categories:

Operational efficiency: Cycle time reduction, throughput improvement, processing capacity. For example, in procure-to-pay processes, AI agents for invoice extraction and purchase order matching have reduced cycle times by up to 80%.

Accuracy: Error rate reduction, exception rate, straight-through processing rate. Thermo Fisher Scientific achieved 85% accuracy and 53% straight-through processing on 824,000 annual invoices using AI-driven invoice processing.

Financial impact: Cost per transaction, headcount redeployment, revenue impact. PwC reports that agentic capacity creation can lead to as much as 90% time savings in key processes, redirecting up to 60% of a team's time from transactional tasks to higher-value work.

Experience quality: User adoption rate, escalation frequency, satisfaction scores. These signal whether agents are delivering value or creating friction in daily workflows.
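Once each processed case is logged with a few outcome fields, the accuracy and efficiency metrics above reduce to simple ratios. This sketch assumes a hypothetical per-case record and measures cycle time reduction against a pre-agent median baseline; other definitions (for example, mean or 90th-percentile cycle time) are equally defensible, and the sample data is made up.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class CaseRecord:
    cycle_time_hours: float
    touched_by_human: bool     # any manual step, including exception handling
    had_exception: bool
    error_found_later: bool    # e.g. caught in audit or by a downstream team


def agent_kpis(cases, baseline_median_cycle_hours):
    n = len(cases)
    return {
        "straight_through_rate": sum(not c.touched_by_human for c in cases) / n,
        "exception_rate": sum(c.had_exception for c in cases) / n,
        "error_rate": sum(c.error_found_later for c in cases) / n,
        "cycle_time_reduction":
            1 - median(c.cycle_time_hours for c in cases) / baseline_median_cycle_hours,
    }


cases = [
    CaseRecord(2.0, False, False, False),
    CaseRecord(3.0, False, False, False),
    CaseRecord(10.0, True, True, False),
    CaseRecord(5.0, True, True, True),
]
print(agent_kpis(cases, baseline_median_cycle_hours=16.0))
# {'straight_through_rate': 0.5, 'exception_rate': 0.5, 'error_rate': 0.25, 'cycle_time_reduction': 0.75}
```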

Establish a Feedback Loop from Deployed Agents Back into the Design Process

Monitoring data from live agents should continuously inform prompt refinement, workflow adjustments, and boundary recalibration. Without structured feedback loops, performance gaps compound quietly until they become operational problems.

Implement continuous improvement mechanisms:

- Analyze escalation patterns to identify where agents struggle

- Review error logs to refine prompts and decision logic

- Track confidence scores to adjust autonomy thresholds

- Incorporate user feedback to improve interaction design
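Adjusting autonomy thresholds from confidence data can be done by calibrating against human-reviewed outcomes: pick the lowest confidence cutoff at which the agent's outputs still meet a target precision. This is one simple approach under stated assumptions (reviewed samples as `(confidence, was_correct)` pairs, a 98% precision target, a minimum sample size of 50); it is not the only way to set thresholds, and the sample data is made up.

```python
def calibrate_threshold(reviewed, target_precision=0.98, min_support=50):
    """reviewed: (confidence, was_correct) pairs from human-reviewed agent output.
    Returns the lowest confidence cutoff at which outputs scoring at or above it
    were correct at least `target_precision` of the time, over at least
    `min_support` samples, or None if no cutoff qualifies."""
    ranked = sorted(reviewed, key=lambda pair: pair[0], reverse=True)
    best, correct = None, 0
    for i, (confidence, was_correct) in enumerate(ranked, start=1):
        correct += was_correct
        # Only evaluate at the end of a group of tied scores, so the cutoff
        # "confidence >= c" always includes every sample scored exactly c.
        group_end = i == len(ranked) or ranked[i][0] < confidence
        if group_end and i >= min_support and correct / i >= target_precision:
            best = confidence
    return best


reviewed = ([(0.99, True)] * 60 + [(0.90, True)] * 30
            + [(0.90, False)] * 10 + [(0.70, False)] * 20)
print(calibrate_threshold(reviewed))                         # 0.99
print(calibrate_threshold(reviewed, target_precision=0.85))  # 0.9
```

Rerunning the calibration on each new batch of reviewed escalations turns the feedback loop into a concrete, auditable change to the autonomy threshold rather than an ad hoc judgment.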

Plan for Organizational Change Alongside Technical Scaling

Scaling AI agents means redefining human roles—not eliminating them. Enterprises that invest in change management, retraining, and transparent communication about what agents handle versus what humans own scale faster and with less internal resistance.

An empirical study analyzing 200 B2B AI deployments between 2022 and 2025 found that companies allocating 25% or more of their total AI project budget to training activities achieved 2.4 times higher median ROI compared to companies making zero investment in training.

Key change management practices:

- Communicate transparently about which tasks agents will handle and which remain human responsibilities

- Invest in retraining programs that help employees transition from transactional work to strategic, analytical roles

- Create clear career paths that show how AI agents augment rather than replace human capabilities

- Involve employees in agent design and feedback loops to build ownership and trust

That transition doesn't happen automatically. Change management is what converts freed-up capacity into measurable business value—without it, the hours saved tend to disappear into unstructured work rather than strategic output.

Frequently Asked Questions

What is the difference between AI agents and traditional RPA or automation in enterprise settings?

AI agents use reasoning, memory, and planning loops to handle ambiguous, multi-step workflows — adapting to context where traditional RPA cannot. RPA follows static scripts that demand structured inputs and stable environments; agents handle variability. The most effective pattern uses agents as the orchestration layer and RPA as the execution layer for repetitive, bounded tasks.

How do enterprises prevent AI agent sprawl during deployment?

Establish a Center of Excellence (CoE) to enforce standards, require use case registration before any agent goes live, and route agents through a common orchestration layer built on open protocols such as MCP (agent-to-tool) and A2A (agent-to-agent). The CoE maintains a centralized agent inventory and governs each agent's lifecycle from deployment through decommissioning.

What governance frameworks should enterprises put in place before deploying AI agents?

Core governance requirements include role-based access controls, immutable audit logs, human-in-the-loop thresholds for high-stakes decisions, and clear agent ownership. Embed these in the technical architecture from day one — governance built into design holds; governance added as policy after the fact rarely does.

How long does enterprise AI agent deployment typically take from pilot to production?

Timelines vary by complexity. Simple, bounded use cases with mature technology stacks can go from pilot to production in weeks. Cross-system, multi-agent deployments in regulated environments often take several months, with integration readiness and compliance validation being the primary variables. A study of 200 B2B deployments found a median time-to-value (breakeven point) of 8 months.

What skills gaps do enterprises most commonly face when deploying AI agents?

Common gaps include agent workflow design, prompt engineering, MLOps, and change management, but governance ownership is often the hardest to fill. IDC predicts dedicated AI risk and accountability roles will be mandatory for half of all AI-enabled applications by 2027, yet most enterprises have not created them.

How should enterprises calculate ROI from AI agent deployments?

Combine hard cost savings — processing time, error rates, headcount redeployment — with strategic value like compliance accuracy and speed to insight. Forrester's Total Economic Impact methodology provides a structured approach covering benefits, costs, flexibility, and risk. Start tracking at the pilot stage so the business case for scale is built on real data, not projections.
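A first-pass ROI model can apply a risk adjustment to projected benefits before comparing them with costs, which mirrors the benefits, costs, and risk structure described above. This is a simplified, undiscounted sketch; Forrester's TEI studies also discount cash flows to present value and quantify flexibility, which this omits. All input figures in the example are hypothetical.

```python
def roi_summary(annual_benefits, risk_adjustment, upfront_cost,
                annual_run_cost, years=3):
    """Undiscounted ROI and payback period. `risk_adjustment` haircuts the
    projected benefits, e.g. 0.2 means 'assume 20% less than projected'."""
    adjusted_benefits = annual_benefits * (1 - risk_adjustment)
    total_benefits = adjusted_benefits * years
    total_costs = upfront_cost + annual_run_cost * years
    monthly_net = (adjusted_benefits - annual_run_cost) / 12
    return {
        "roi": (total_benefits - total_costs) / total_costs,
        "payback_months": upfront_cost / monthly_net if monthly_net > 0 else float("inf"),
    }


# Hypothetical pilot figures, not benchmarks.
print(roi_summary(annual_benefits=1_000_000, risk_adjustment=0.2,
                  upfront_cost=300_000, annual_run_cost=200_000))
```

With these inputs the three-year ROI comes out at roughly 167% and payback at about six months; feeding in measured pilot figures instead of projections is what makes the scale-up case credible.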