Introduction

AI workflow automation promises massive efficiency gains for regulated industries: faster invoice processing, real-time risk decisions, automated reporting. Yet every automated action touching sensitive financial, tax, or patient data carries regulatory weight. GDPR fines alone can reach €20 million or 4% of worldwide annual turnover, whichever is higher, and a single systemic failure can disrupt business continuity across entire supply chains.

The challenge is acute for organizations operating across multiple jurisdictions. A workflow that satisfies GDPR in Germany may simultaneously violate Saudi Arabia's data localization requirements or India's GST real-time reporting obligations.

When AI systems process millions of transactions monthly, a single misconfigured data access rule can trigger a systemic compliance failure.

That's the problem this guide addresses: which regulatory frameworks apply, what compliance risks AI automation introduces, and how to architect workflows that are both powerful and audit-ready across jurisdictions.

TLDR

- AI workflow automation in regulated industries must embed compliance from the start, not add it as an afterthought

- Key frameworks to address: GDPR, HIPAA, GST/e-invoicing mandates, and VAT regimes administered by ZATCA (Saudi Arabia), HMRC (UK), and the FTA (UAE)

- Top compliance risks include data leakage, weak audit trails, unauthorized access, and jurisdictional mismatches across multi-country deployments

- A compliance-first build requires data minimization, role-based access, end-to-end audit logging, and vendor agreements like BAAs and DPAs

Why Regulated Industries Face Unique AI Compliance Challenges

Regulated industries such as banking, financial services and insurance (BFSI), healthcare, manufacturing, and government operate under overlapping, jurisdiction-specific frameworks that general-purpose AI tools are not built to handle. The real challenge is satisfying GST mandates in India, VAT rules in the UAE, ZATCA requirements in Saudi Arabia, and GDPR in Europe simultaneously, within a single automated workflow.

The Data Sensitivity Problem

Regulated workflows handle legally protected data: PHI, financial transaction records, tax filings, credit assessments, invoice data with PAN/VAT IDs. AI systems that ingest this data to automate processes automatically inherit all compliance obligations tied to it.

Under HIPAA, an AI vendor that creates, receives, maintains, or transmits Protected Health Information on behalf of a covered entity (or another business associate) is a business associate and must sign a Business Associate Agreement (BAA); sharing PHI with such a vendor without one is a direct HIPAA violation. GDPR Article 28 similarly requires a binding contract, commonly called a Data Processing Agreement (DPA), before a third-party AI provider processes personal data on the controller's behalf.
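The BAA/DPA rule can be enforced in code rather than by policy alone. The sketch below, assuming a hypothetical vendor registry (`Vendor`) and hypothetical data tags (`PHI`, `EU_PERSONAL_DATA`), refuses to dispatch a payload to an AI vendor unless the contracts that the payload's data classes require are on file. It is an illustrative gate, not a substitute for legal review of the agreements themselves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    """Hypothetical vendor-registry record; field names are illustrative."""
    name: str
    has_baa: bool = False  # HIPAA Business Associate Agreement executed
    has_dpa: bool = False  # GDPR Article 28 processing contract executed


class AgreementMissing(Exception):
    """Raised before regulated data would reach a vendor lacking the required contract."""


def required_agreements(data_tags: set) -> set:
    """Map the data classifications in a payload to the contracts they require."""
    required = set()
    if "PHI" in data_tags:
        required.add("BAA")
    if "EU_PERSONAL_DATA" in data_tags:
        required.add("DPA")
    return required


def check_dispatch(vendor: Vendor, data_tags: set) -> None:
    """Gate every outbound AI call; raise instead of sending when a contract is missing."""
    signed = {name for name, ok in (("BAA", vendor.has_baa), ("DPA", vendor.has_dpa)) if ok}
    missing = required_agreements(data_tags) - signed
    if missing:
        raise AgreementMissing(f"{vendor.name}: missing {', '.join(sorted(missing))}")
```

Calling `check_dispatch` immediately before the API request means a missing agreement fails closed: the payload never leaves the workflow.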

Volume and Velocity at Scale

When AI processes millions of transactions monthly, isolated failures become systemic. India's UPI system processes over 20 billion digital payments in a single month, while the U.S. CMS handles over one billion Medicare claims annually. At this scale, even a single missing encryption step or misconfigured access rule can create compliance failures affecting millions of records. The Financial Stability Board and the Bank for International Settlements warn that AI risks in finance can become system-wide due to herding behavior from market participants using similar algorithms and concentration risk from reliance on a few third-party AI providers.

The Accountability Gap

Unlike human-executed workflows with clear decision-makers, AI-automated workflows must demonstrate explainability and auditability to regulators. Under the EU AI Act, AI systems for credit scoring and lending are classified as "high-risk." Article 14 mandates robust human oversight. A designated natural person must be able to:

- Understand the system's capabilities and limitations

- Monitor for anomalies and unexpected behavior

- Guard against automation bias in decision-making

- Correctly interpret outputs in context

- Override or reject the system's output with final authority

The Reserve Bank of India (RBI) sets comparably stringent expectations for regulated entities:

- Board-approved AI policies covering governance, ethics, and accountability

- Models that are "Understandable by Design" with consistent, unbiased, verifiable outcomes

- Mandatory approval from the Board's Risk Management Committee before credit model deployment

- Independent validation required before go-live and annually thereafter

Cross-Border Complexity

These governance obligations also collide across borders, so enterprises operating in multiple geographies face directly conflicting requirements. GDPR's Chapter V requires companies to assess the laws of the destination country and suspend data transfers if adequate protection cannot be guaranteed. That creates direct tension with real-time e-invoicing and tax reporting mandates in India (GSTN), Saudi Arabia (ZATCA), and the UAE, which require near-real-time submission of invoice data to government portals. AI workflows must be architected to resolve these conflicts without manual intervention.

Key Regulatory Frameworks That Govern AI Workflow Automation

GDPR (EU)

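GDPR treats health data, along with genetic, biometric, and other Article 9 data, as a special category requiring heightened protection; financial identifiers and behavioral data are not special categories but remain personal data subject to GDPR's full requirements. Its "privacy by design" principle (formally "data protection by design," Article 25) means compliance controls must be embedded into AI workflow architecture from inception, not patched in later. Article 83 sets maximum fines at €20 million or 4% of total worldwide annual turnover, whichever is higher.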

Article 33 requires controllers to notify supervisory authorities within 72 hours of becoming aware of a personal data breach—a tight window for organizations processing high transaction volumes.
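The 72-hour window is simple arithmetic, but it is easy to get wrong with naive timestamps across time zones. A minimal sketch, assuming the awareness time is captured as a timezone-aware `datetime`; the function names are hypothetical, and 72 hours is only the outer limit, since Article 33 also requires notification without undue delay.

```python
from datetime import datetime, timedelta, timezone

# Article 33 outer limit; notification is still due "without undue delay".
ARTICLE_33_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at: datetime) -> datetime:
    """Latest time to notify the supervisory authority, counted from awareness."""
    if aware_at.tzinfo is None:
        raise ValueError("use timezone-aware timestamps to avoid offset errors")
    return aware_at + ARTICLE_33_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline (negative once it has passed)."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600
```

For a breach discovered at 09:00 UTC on 1 March 2025, `notification_deadline` returns 09:00 UTC on 4 March 2025.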

Article 22 prohibits fully automated decisions with significant individual impact, such as automatic credit application refusals. This requires human-in-the-loop design for AI workflows in lending, insurance, and credit scoring. Even when exceptions apply (contract necessity, legal authorization, or explicit consent), controllers must implement safeguards including the right to obtain human intervention, express viewpoints, and contest decisions.

Enforcement is real: the French DPA fined Clearview AI €20 million in October 2022 for unlawful processing of personal data to build its facial recognition system, while the Dutch DPA imposed €30.5 million on the same company in September 2024.

HIPAA and Healthcare AI

HIPAA obligations attach to any AI system that processes, stores, or transmits Protected Health Information on behalf of a covered entity or business associate, including workflow automation tools used by healthcare providers. Core compliance obligations include:

- Executing a Business Associate Agreement with every AI vendor that handles PHI

- Implementing role-based access controls and audit logs

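- Encrypting PHI at rest and in transit (formally "addressable" under the Security Rule, so any decision not to encrypt must be documented and justified)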

- Conducting regular risk assessments

The "minimum necessary" standard (45 CFR 164.502(b) and 164.514(d)) requires covered entities and business associates to limit PHI use, disclosure, and requests to the minimum amount needed for the intended purpose. AI vendors must design systems to prevent unnecessary data exposure.

The financial stakes are significant: healthcare data breaches carry the highest average cost at $10.93 million per incident according to IBM's 2023 Cost of a Data Breach Report—more than double the global average of $4.45 million.

Tax Compliance Mandates (GST, VAT, ZATCA, PEPPOL)

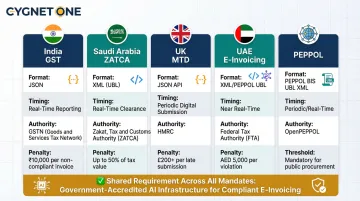

For finance and commercial enterprises, AI workflow automation intersects directly with tax authority mandates. Each mandate below is a government-enforced technical specification with precise data format, timing, and certification requirements.

India's GST e-invoicing requires each invoice to be reported in a standardized JSON format to an authorized Invoice Registration Portal (IRP), which generates the Invoice Reference Number (IRN) in real time. As of April 1, 2025, taxpayers with aggregate annual turnover (AATO) of ₹10 crore or more face a 30-day time limit for reporting older invoices. Under Rule 48(5) of the CGST Rules, an invoice not issued in the manner Rule 48(4) prescribes, that is, without a valid IRN, is not treated as a valid invoice, leading to penalties and loss of input tax credit for recipients.
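The 30-day reporting limit depends on both the reporting date and the taxpayer's turnover, because it has been phased in. The sketch below encodes two phases: the April 1, 2025 threshold described above and the earlier ₹100 crore threshold that applied from November 1, 2023. It assumes the limit applies at or above each threshold and that an invoice exactly 30 days old is still accepted; both boundary choices, and the helper names, are assumptions to confirm against current GSTN advisories.

```python
from datetime import date
from typing import Optional

CRORE = 10_000_000  # 1 crore = 10 million rupees

# (effective date, AATO threshold in rupees) for the IRP 30-day reporting limit.
# Assumption: the limit applies at or above the threshold; phases sorted by date.
THIRTY_DAY_LIMIT_PHASES = [
    (date(2023, 11, 1), 100 * CRORE),
    (date(2025, 4, 1), 10 * CRORE),
]


def threshold_on(report_date: date) -> Optional[int]:
    """AATO threshold in force on report_date, or None if no limit applied yet."""
    threshold = None
    for effective_from, value in THIRTY_DAY_LIMIT_PHASES:
        if report_date >= effective_from:
            threshold = value
    return threshold


def can_report(invoice_date: date, report_date: date, aato_rupees: int) -> bool:
    """True if the IRP should still accept this invoice on report_date."""
    threshold = threshold_on(report_date)
    if threshold is None or aato_rupees < threshold:
        return True  # the time limit does not apply to this taxpayer
    return (report_date - invoice_date).days <= 30
```

Keeping the phases as data rather than hard-coded `if` branches means the next threshold change is a one-line table update.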

Saudi Arabia's ZATCA Phase 2 mandates cryptographic signing and integration with ZATCA's FATOORA platform. Standard (B2B) tax invoices must be cleared in real time before they are shared with the buyer, while simplified (B2C) invoices must be reported within 24 hours of issuance. Taxpayers must generate invoices in UBL XML format with a UUID and invoice hash, apply ECDSA digital signatures, and, for cleared invoices, receive ZATCA's cryptographic stamp from FATOORA. Penalties start at SR 5,000 for failure to issue electronic invoices and SR 10,000 for deleting or amending invoices after issuance.
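ZATCA's two submission paths can be made explicit in the workflow. The sketch assumes the Phase 2 split between standard (B2B) invoices, which must be cleared before they reach the buyer, and simplified (B2C) invoices, which must be reported within 24 hours of issuance. `invoice_hash` is a deliberate simplification: ZATCA computes the hash over a canonicalized form of the UBL XML with specific elements excluded, which this sketch does not reproduce. All names here are hypothetical.

```python
import base64
import hashlib
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional, Tuple


class InvoiceType(Enum):
    STANDARD = "standard"      # B2B/B2G tax invoice: clearance model
    SIMPLIFIED = "simplified"  # B2C tax invoice: reporting model


REPORTING_WINDOW = timedelta(hours=24)


def submission_plan(kind: InvoiceType, issued_at: datetime) -> Tuple[str, Optional[datetime]]:
    """Return the FATOORA submission path and, for reporting, the deadline."""
    if kind is InvoiceType.STANDARD:
        # Must be cleared (and stamped) before the invoice reaches the buyer.
        return ("clearance", None)
    # Simplified invoices are shared first, then reported within 24 hours.
    return ("reporting", issued_at + REPORTING_WINDOW)


def invoice_hash(xml_bytes: bytes) -> str:
    """Base64-encoded SHA-256 digest.

    Simplification: ZATCA hashes a canonicalized XML with specific elements
    removed; this sketch hashes whatever bytes it is given.
    """
    return base64.b64encode(hashlib.sha256(xml_bytes).digest()).decode("ascii")
```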

UK's Making Tax Digital requires businesses to maintain digital records and use MTD-compatible software connecting directly to HMRC systems via APIs. MTD for VAT is mandatory for all VAT-registered businesses, while MTD for Income Tax Self Assessment becomes mandatory from April 2026 for sole traders and landlords with qualifying income over £50,000.

UAE's Electronic Invoicing framework uses a 5-corner model referencing the PEPPOL network, requiring XML payloads with UUIDs for each invoice. Businesses must onboard with an Accredited Service Provider to connect to the network. Implementation is phased—entities with revenue of AED 50 million or more must go live by January 1, 2027.

PEPPOL is increasingly adopted, and in several places mandated, for Business-to-Government invoicing in the EU, Singapore, Australia, and Japan, and countries like the UAE reference it for B2B transactions. The network operates on a 4-corner model in which certified Access Points exchange UBL XML documents on behalf of senders and receivers.

AI workflows automating invoice generation, reconciliation, or tax reporting must be built on infrastructure certified by these authorities—not just generically compliant.

Financial Sector-Specific Regulations

India's Digital Personal Data Protection (DPDP) Act, enacted August 11, 2023 with phased commencement from November 13, 2025, applies directly to AI systems that process digital personal data. A company that decides why and how personal data is processed, including through AI tools, is a "Data Fiduciary"; an AI vendor processing that data on its behalf is a "Data Processor."

Fiduciaries must engage processors only under valid contracts, remain ultimately responsible for compliance, implement reasonable security safeguards to prevent breaches, and notify both the Data Protection Board and affected individuals in the event of a breach.

The Most Common AI Compliance Risks in Regulated Workflows

Data Leakage and Model Exposure

AI systems trained on or given access to regulated data can inadvertently expose that data through model outputs, API responses, or log files. Feeding regulated data into third-party AI models without appropriate contractual safeguards is a major compliance violation.

The European Data Protection Board has clarified that if processing is found unlawful, supervisory authorities can impose corrective measures up to and including ordering erasure of the entire dataset and the AI model itself. For enterprises processing millions of transactions, that outcome represents an existential operational risk.

Inadequate Audit Trails

Regulators in every major jurisdiction require organizations to demonstrate what decision was made, by whom or what system, using what data, and when. Many off-the-shelf AI automation tools do not generate the granular, tamper-evident logs needed for tax authority audits, GDPR inquiries, or financial regulator inspections.

At a minimum, a compliant audit trail must retain the artifacts each tax authority expects:

- India (GSTN): Standardized JSON payload, unique IRN (hash), IRP's digital signature on complete JSON, and digitally signed QR code with GSTINs, invoice details, and IRN

- UK (HMRC): Digital links showing an unbroken digital journey from initial business record to final VAT return submission via API, with no manual re-keying

- Saudi Arabia (ZATCA): Generated XML invoices, corresponding QR codes, computerized records of all invoices, system audit logs, and protection against alteration or undetected deletion

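Retention periods vary: 72 months from the due date of the annual return for GST records in India (Section 36 of the CGST Act), 6 years for HMRC VAT records in the UK, 6 years for ZATCA e-invoicing logs, and at least 6 months for automatically generated logs of EU AI Act high-risk systems.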
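Because a single record can fall under several regimes, the safe deletion date is the latest of all applicable windows. A minimal sketch using the durations above; the regime keys are hypothetical labels, and each window is counted from a caller-supplied start date because the starting point differs by regime (for Indian GST records it is the due date of the annual return, not the invoice date).

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of the target month."""
    total = d.month - 1 + months
    year, month = d.year + total // 12, total % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


# Minimum retention in months, using the figures above (hypothetical keys).
RETENTION_MONTHS = {
    "IN_GST": 72,
    "UK_HMRC": 72,
    "SA_ZATCA": 72,
    "EU_AI_ACT_LOGS": 6,
}


def retain_until(regime: str, start: date) -> date:
    return add_months(start, RETENTION_MONTHS[regime])


def purge_allowed(obligations, today: date) -> bool:
    """Allow deletion only after every applicable window has closed.

    obligations: iterable of (regime, start_date) pairs. A record with no
    mapped obligations is never auto-purged; it needs classification first.
    """
    obligations = list(obligations)
    return bool(obligations) and all(
        today > retain_until(regime, start) for regime, start in obligations
    )
```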

Jurisdictional Mismatches in Multi-Country Deployments

A single AI automation layer deployed globally without region-specific configuration can trigger simultaneous non-compliance across multiple jurisdictions. Common collision points include:

- A GDPR-compliant workflow in Germany that routes data through servers prohibited under Saudi Arabia's data localization rules

- An automated invoice workflow built for India's real-time GST reporting that lacks the structured XML outputs required under ZATCA Phase 2

- A unified EU data retention policy that conflicts with India's 6-year GST record mandate or shorter retention windows in other markets

Each jurisdiction has its own ruleset. A configuration that satisfies one regulator may actively violate another.
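One way to avoid the collisions above is to refuse any deployment that has not been checked against a per-jurisdiction profile. In this sketch the payload formats follow the mandates described earlier (JSON for India's IRP, UBL XML for ZATCA), while the region names and the profile structure are hypothetical placeholders that a legal review would fill in.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JurisdictionProfile:
    invoice_format: str          # payload format the tax authority expects
    allowed_regions: frozenset   # hosting regions cleared by legal review


# Hypothetical profiles: formats follow the mandates described above; region
# names are placeholders, not statements of what each law permits.
PROFILES = {
    "IN": JurisdictionProfile("json", frozenset({"region-in-1"})),
    "SA": JurisdictionProfile("ubl-xml", frozenset({"region-sa-1"})),
}


@dataclass(frozen=True)
class Deployment:
    jurisdiction: str
    hosting_region: str
    output_format: str


def violations(dep: Deployment) -> list:
    """List every mismatch between a deployment and its jurisdiction's profile."""
    profile = PROFILES.get(dep.jurisdiction)
    if profile is None:
        return [f"{dep.jurisdiction}: no compliance profile; block deployment"]
    problems = []
    if dep.hosting_region not in profile.allowed_regions:
        problems.append(f"{dep.jurisdiction}: region {dep.hosting_region} not approved")
    if dep.output_format != profile.invoice_format:
        problems.append(
            f"{dep.jurisdiction}: expected {profile.invoice_format}, got {dep.output_format}"
        )
    return problems
```

Run as a pre-deployment check, an empty list is the only passing result; a jurisdiction without a profile is blocked rather than assumed to be unregulated.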

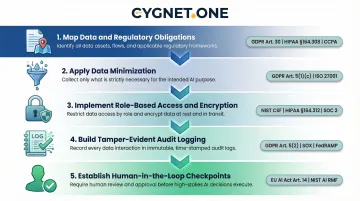

How to Build a Compliance-First AI Workflow: Step-by-Step

Step 1 — Map Data and Regulatory Obligations Before Automating

Before deploying AI in any regulated workflow, document every data element the workflow will touch, classify it by sensitivity (PHI, PII, financial record, tax data), and map it to the applicable regulation in each jurisdiction. This inventory drives every access control, encryption decision, and audit requirement in the steps that follow.
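The inventory can live as data that later steps query. A minimal sketch, assuming a hypothetical obligations table keyed by (sensitivity, jurisdiction); the entries are illustrative, not a complete legal mapping. The design choice worth copying is that an unmapped pair is reported as a gap rather than silently treated as unregulated.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PHI = "phi"
    PII = "pii"
    FINANCIAL_RECORD = "financial_record"
    TAX_DATA = "tax_data"


@dataclass(frozen=True)
class DataElement:
    name: str
    sensitivity: Sensitivity
    jurisdictions: tuple  # jurisdiction codes where the element is processed


# Hypothetical (sensitivity, jurisdiction) -> regulations table; illustrative only.
OBLIGATIONS = {
    (Sensitivity.PHI, "US"): {"HIPAA"},
    (Sensitivity.PII, "EU"): {"GDPR"},
    (Sensitivity.PII, "IN"): {"DPDP Act"},
    (Sensitivity.TAX_DATA, "IN"): {"GST e-invoicing"},
    (Sensitivity.TAX_DATA, "SA"): {"ZATCA Phase 2"},
}


def map_obligations(element: DataElement):
    """Return (regulations, unmapped jurisdictions) for one inventoried element."""
    regulations, unmapped = set(), []
    for code in element.jurisdictions:
        found = OBLIGATIONS.get((element.sensitivity, code))
        if found is None:
            unmapped.append(code)  # a gap to resolve, not a sign the data is unregulated
        else:
            regulations |= found
    return regulations, unmapped
```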

Step 2 — Apply Data Minimization and Purpose Limitation

Configure AI workflows to access only the data fields required for the specific task. An AI reconciling invoices does not need access to employee payroll records. Limit data scope both at collection and at inference time. This supports GDPR Article 5(1)(c), which requires that personal data be "adequate, relevant and limited to what is necessary," as well as HIPAA's minimum necessary standard and India's DPDP principles.
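Minimization can be enforced at inference time with a per-task allowlist applied before any record reaches the model. The task names and field names below are hypothetical; unknown tasks receive an empty record, so a new workflow must be registered before it sees any data.

```python
# Hypothetical per-task allowlists: each AI task receives only the fields it needs.
TASK_FIELDS = {
    "invoice_reconciliation": frozenset(
        {"invoice_number", "invoice_date", "supplier_tax_id", "amount", "po_number"}
    ),
    "credit_precheck": frozenset({"applicant_id", "declared_income", "requested_amount"}),
}


def minimize(record: dict, task: str) -> dict:
    """Drop every field not allowlisted for the task; unknown tasks get nothing."""
    allowed = TASK_FIELDS.get(task, frozenset())
    return {key: value for key, value in record.items() if key in allowed}
```

Applying `minimize` where the prompt or model input is assembled keeps fields such as payroll data out of the model, its logs, and any vendor API call.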

Step 3 — Implement Role-Based Access Controls and Encryption

Define who (or what system) can access regulated data, under what conditions, and for what purpose. Encrypt data at rest and in transit. For tax workflows, ensure that API connections to government portals (IRP, ZATCA, HMRC) use only authenticated, encrypted channels.
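Access decisions that consider role and purpose can be expressed as a deny-by-default check. This is a minimal sketch with hypothetical roles, data classes, and purposes; a production system would load grants from an identity provider or policy engine rather than hard-coding them, and would log every decision.

```python
from dataclasses import dataclass

# Hypothetical grants: role -> permitted (data class, action) pairs. Deny by default.
GRANTS = {
    "reconciliation_bot": {("tax_data", "read"), ("financial_record", "read")},
    "einvoice_submitter": {("tax_data", "read"), ("tax_data", "submit")},
    "credit_analyst": {("pii", "read"), ("financial_record", "read")},
}

# Hypothetical purpose limitation: purposes each role may invoke.
PURPOSES = {
    "reconciliation_bot": {"ap_reconciliation"},
    "einvoice_submitter": {"irp_submission", "zatca_clearance"},
    "credit_analyst": {"credit_review"},
}


@dataclass(frozen=True)
class AccessRequest:
    principal: str  # human user or service identity
    role: str
    data_class: str
    action: str
    purpose: str


def is_allowed(req: AccessRequest) -> bool:
    """Allow only if the role holds the grant AND the stated purpose is approved."""
    granted = (req.data_class, req.action) in GRANTS.get(req.role, set())
    purpose_ok = req.purpose in PURPOSES.get(req.role, set())
    return granted and purpose_ok
```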

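GDPR and HIPAA don't mandate specific algorithms by name. GDPR Article 32 requires "appropriate technical and organisational measures" that take account of the "state of the art," and the HIPAA Security Rule requires reasonable and appropriate safeguards. NIST standards are the most widely used benchmark for what that means in practice, and HHS breach guidance points to NIST publications directly: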

- AES-256 encryption for data at rest (NIST FIPS 197)

- TLS 1.3 for data in transit (NIST SP 800-52 Rev. 2)

- SHA-256 hashing and ECDSA digital signatures for e-invoicing, as required by ZATCA
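On the client side, the transport requirements translate into a few lines of TLS configuration. A minimal sketch using Python's standard `ssl` module, assuming the workflow calls portal APIs over HTTPS; the function name and optional CA bundle path are hypothetical. It pins TLS 1.3 as the floor to match the bullet above, although NIST SP 800-52 Rev. 2 itself still permits TLS 1.2, so the floor may need relaxing for a portal that does not yet support 1.3.

```python
import ssl
from typing import Optional


def portal_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Client TLS settings for calls to tax-authority or other regulated APIs."""
    # create_default_context() turns on certificate verification and hostname checks.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Refuse protocol versions older than TLS 1.3.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

The context can be passed to standard clients, for example `urllib.request.urlopen(url, context=ctx)`. Authentication to the portal itself (credentials or certificates issued during onboarding) is separate and not shown.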

Step 4 — Build Tamper-Evident Audit Logging Into Every Workflow Step

Every AI-executed action on regulated data must generate a timestamped, tamper-evident log entry, including invoice generation events, tax filing submissions, credit decisions, and data access events. Logs must be retained for as long as the applicable regulation requires.
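Tamper evidence is commonly achieved by hash-chaining log entries, so that each entry commits to the one before it. A minimal in-memory sketch, assuming SHA-256 over canonical JSON; the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


class AuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Canonical JSON (sorted keys, no whitespace) so the hash is reproducible.
        body = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, data_ref: str,
               at: Optional[datetime] = None) -> dict:
        """Record who (or which system) did what, to which data, and when."""
        ts = at if at is not None else datetime.now(timezone.utc)
        entry = {
            "ts": ts.isoformat(),
            "actor": actor,
            "action": action,
            "data_ref": data_ref,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every link; any edited, removed, or reordered entry breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

Chaining only proves integrity relative to the newest hash: anyone who can rewrite the whole list, or drop its final entries, can produce a chain that still verifies. In practice the latest hash would be anchored somewhere the workflow cannot modify, such as write-once storage or a periodically signed checkpoint.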

Step 5 — Establish Human-in-the-Loop Checkpoints for High-Stakes Decisions

Audit logs capture what happened, but for decisions that materially affect individuals or businesses (loan approvals, tax assessments, credit scoring), logging alone isn't enough. AI should generate the recommendation, and a qualified human reviewer must authorize the final action. This design supports compliance with GDPR Article 22, RBI lending guidelines, and EU AI Act requirements for high-risk AI systems. Define clear decision thresholds that trigger human review, such as credit amounts above specified limits or risk scores outside acceptable ranges.
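The threshold rules can be a small routing function placed between the model and any action. The thresholds, the 0-to-1 risk scale, and the rule that every decline goes to a person are hypothetical policy choices for illustration; real values would come from the approved credit policy.

```python
from dataclasses import dataclass

# Hypothetical policy thresholds; real values come from the approved credit policy.
MAX_AUTO_AMOUNT = 50_000
MAX_AUTO_RISK = 0.4  # risk score on an assumed 0.0 (low) to 1.0 (high) scale


@dataclass(frozen=True)
class Recommendation:
    decision: str      # "approve" or "decline"
    amount: float
    risk_score: float


def route(rec: Recommendation) -> str:
    """Return 'human_review' when a qualified reviewer must authorize, else 'auto'."""
    if rec.decision == "decline":
        return "human_review"  # adverse outcomes always reach a person
    if rec.amount > MAX_AUTO_AMOUNT or rec.risk_score > MAX_AUTO_RISK:
        return "human_review"
    return "auto"
```

Each routing outcome, and the reviewer's identity when one is involved, belongs in the audit log so the oversight itself can be demonstrated.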

How to Select an AI Automation Platform for Regulated Industries

Must-Have Compliance Criteria for Vendor Selection

The platform must hold relevant regulatory certifications for the jurisdictions in which you operate. For India, look for IRP/GSP approval from GSTN. For Saudi Arabia, ZATCA recognition. For the UK, HMRC recognition. For the EU and Malaysia, PEPPOL certification. The platform must also provide SOC 1/SOC 2 reports, offer data residency options, and be able to sign DPAs or BAAs.

For tax automation specifically, look for government-accredited infrastructure—not just API-connected tools. Cygnet.One, for example, holds simultaneous recognition across ZATCA (Saudi Arabia), FTA (UAE), HMRC (UK), MDEC (Malaysia), and BOSA (Belgium), and processes over 55 million transactions monthly with 99% uptime. This multi-jurisdictional accreditation means enterprises can deploy a single platform across regions rather than managing fragmented, jurisdiction-specific systems.

Evaluate ERP Integration Depth and Auditability

A compliant AI platform must integrate directly with existing ERP and financial systems without creating data silos or shadow processes that escape audit coverage. Public statistics on compliance failures caused by integration gaps are scarce, but regulators' own guidance acknowledges the risk.

ZATCA provides SDKs and sandboxes, while GSTN defines detailed SLAs and failure metrics for integration partners—resources created specifically to address the compliance risk that complex system integrations introduce.

Evaluate whether the platform offers pre-built, certified integrations (e.g., 250+ ERP integrations) versus custom-coded connections that carry their own compliance risk. Cygnet.One's architecture includes embedded payment-block logic and validation modules directly within ERP systems, allowing finance teams to make payment decisions from within the ERP cockpit without toggling between systems—ensuring compliance controls remain integrated into core financial processes.

Assess Ongoing Compliance Monitoring and Update Mechanisms

Tax laws, data protection regulations, and e-invoicing mandates change frequently—sometimes with short implementation windows. Recent examples across key markets include:

- India: CBIC has progressively lowered e-invoicing turnover thresholds through multiple notifications

- Saudi Arabia: FATOORA Phase 2 began in January 2023 and continues rolling out in waves

- UAE: Detailed e-invoicing guidelines were published in February 2026, with phased implementation through 2027

- UK: Making Tax Digital follows a multi-year roadmap from 2026 through 2028

The platform must have a demonstrated track record of updating its compliance controls in response to regulatory changes and notifying customers proactively. Cygnet.One, for instance, continuously monitors regulatory updates and implements changes to ensure systems remain compliant with the latest GST and government requirements under its Annual Maintenance Contract.

Frequently Asked Questions

What AI software is HIPAA compliant?

There is no official HIPAA certification: HHS does not certify or endorse software. HIPAA-compliant AI software is software whose vendor will sign a Business Associate Agreement and can implement encryption, role-based access, and audit logging for PHI. Healthcare organizations should verify these controls through independent evidence, such as third-party audit reports, rather than accept vendor self-declarations.

What is GDPR compliance in AI systems?

GDPR compliance in AI means building privacy-by-design into every layer: collecting only necessary data, establishing lawful bases for processing, and enabling individual rights such as access and deletion. Article 22 also requires human oversight for fully automated high-stakes decisions, and organizations must maintain detailed processing records throughout.

Which regulations apply to AI workflow automation in financial services?

Key frameworks include GDPR (EU), India's DPDP Act and RBI guidelines on algorithmic lending, ZATCA e-invoicing rules (Saudi Arabia), HMRC Making Tax Digital (UK), and FTA VAT regulations (UAE). Sector-specific rules, such as SEBI regulations for Indian securities-market entities or Basel III capital standards for banks, apply depending on organization type.

How do audit trails support AI compliance in regulated industries?

Audit trails create tamper-evident records of every AI decision, data access event, and workflow action. They must capture what data was used, what action was taken, when, and by which system or user, and be retained for the legally required period (six years for most tax records under HMRC rules and comparable mandates).

Can AI automate multi-jurisdictional tax compliance simultaneously?

Yes, but only when the AI platform is built on jurisdiction-aware logic and holds the relevant approval in each market (ZATCA recognition for Saudi Arabia, GSP/IRP approval from GSTN for India, PEPPOL certification for the EU). Generic AI tools lacking government accreditation cannot reliably handle real-time mandate requirements across multiple jurisdictions.